mdadm Command in Linux

The mdadm command in Linux is a powerful utility used to manage and maintain RAID (Redundant Array of Independent Disks) arrays. RAID arrays combine multiple physical disks into a single logical unit to improve performance, redundancy, or both.

The mdadm toolset provides comprehensive management capabilities for RAID arrays, including creating, assembling, monitoring, and managing them.

Understanding mdadm Command

RAID is a technology that combines multiple physical disks into a single logical unit to increase performance, add redundancy, or both. Each RAID level offers a different trade-off between these goals. On Linux, software RAID is implemented by the kernel's md (multiple devices) driver, and mdadm is the user-space tool that creates, assembles, monitors, and manages md arrays. An active array appears as a block device, such as /dev/md0, that the operating system can format and mount like a single disk.
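The trade-off between levels is easiest to see in usable capacity. The sketch below is a hypothetical helper (the function name is illustrative, not part of mdadm). It assumes every member has the same size, because md sizes each member to the smallest one, and that RAID 10 uses the default layout of two copies per block.

```shell
# Hypothetical helper: usable capacity of an md array, assuming equal-size
# members and two copies of every block for RAID 10.
# Usage: raid_usable_capacity LEVEL NUM_DEVICES MEMBER_SIZE
raid_usable_capacity() {
    level=$1 n=$2 size=$3
    case $level in
        0)  echo $(( n * size )) ;;          # striping: every member holds data
        1)  echo "$size" ;;                  # mirroring: one member's worth
        5)  echo $(( (n - 1) * size )) ;;    # one member's worth goes to parity
        6)  echo $(( (n - 2) * size )) ;;    # two members' worth go to parity
        10) echo $(( n * size / 2 )) ;;      # two copies of every block
        *)  echo "unsupported level: $level" >&2; return 1 ;;
    esac
}

# Example: four 1000 GB members at each level
for lvl in 0 1 5 6 10; do
    echo "RAID $lvl: $(raid_usable_capacity "$lvl" 4 1000) GB"
done
```

With four 1000 GB disks this prints 4000 GB for RAID 0, 1000 GB for RAID 1, 3000 GB for RAID 5, and 2000 GB for both RAID 6 and RAID 10.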

Installing the mdadm Package

Before using the mdadm command, you need to ensure that the mdadm package is installed on your system. You can install it using your package manager.

For example, on Debian-based systems like Ubuntu, you can use the following command −

sudo apt-get install mdadm

On Red Hat-based systems such as CentOS, you can use the following command (newer releases use dnf in place of yum) −

sudo yum install mdadm

Syntax of mdadm Command in Linux

mdadm works in several modes, such as create, assemble, manage, and monitor, each selected by a leading option. Here is the basic syntax for creating a new RAID array −

sudo mdadm --create /dev/md0 --level=raid_level --raid-devices=num_devices device1 device2 ...

- /dev/md0 − The RAID device to be created.

- --level=raid_level − The RAID level (e.g., 0, 1, 5, 6, 10).

- --raid-devices=num_devices − The number of active devices in the RAID array. When no spares are requested, it must equal the number of component devices listed.

- device1 device2 − The component devices that make up the RAID array.
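Because --raid-devices has to agree with the list of component devices, a small wrapper can derive it from that list. The function below is a hypothetical dry-run helper that only prints the mdadm command in the syntax above; it does not run anything, which matters because --create writes new RAID metadata to every listed device.

```shell
# Hypothetical dry-run helper: prints an mdadm --create command in which
# --raid-devices is derived from the number of component devices given.
# Usage: mdadm_create_cmd MD_DEVICE LEVEL DEVICE...
mdadm_create_cmd() {
    if [ "$#" -lt 3 ]; then
        echo "usage: mdadm_create_cmd MD_DEVICE LEVEL DEVICE..." >&2
        return 1
    fi
    md=$1 level=$2
    shift 2
    # After the shift, "$@" holds only the component devices
    echo "sudo mdadm --create $md --level=$level --raid-devices=$# $*"
}

mdadm_create_cmd /dev/md5 5 /dev/sde1 /dev/sdf1 /dev/sdg1
# prints: sudo mdadm --create /dev/md5 --level=5 --raid-devices=3 /dev/sde1 /dev/sdf1 /dev/sdg1
```

The sketch ignores spares (--spare-devices) and the special "missing" placeholder; both would change how the device count relates to the list.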

Examples of mdadm Command in Linux

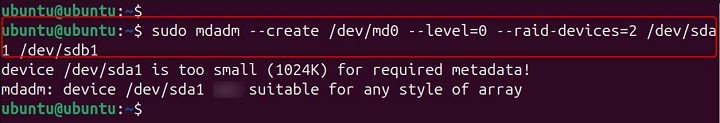

Creating a RAID 0 Array

RAID 0 stripes data across multiple disks to increase performance, but it provides no redundancy: if any member disk fails, the data on the whole array is lost. To create a RAID 0 array, you can use the following command −

sudo mdadm --create /dev/md0 --level=0 --raid-devices=2 /dev/sda1 /dev/sdb1

In this example, the mdadm command creates a RAID 0 array /dev/md0 using the component devices /dev/sda1 and /dev/sdb1.

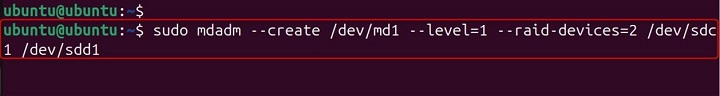

Creating a RAID 1 Array

RAID 1 provides redundancy by mirroring data across multiple disks. To create a RAID 1 array, you can use the following command −

sudo mdadm --create /dev/md1 --level=1 --raid-devices=2 /dev/sdc1 /dev/sdd1

In this example, the mdadm command creates a RAID 1 array /dev/md1 using the component devices /dev/sdc1 and /dev/sdd1.

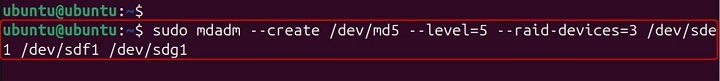

Creating a RAID 5 Array

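RAID 5 stripes data across the disks and distributes parity information among them, which provides both performance and redundancy: the array survives the failure of any single disk. It is normally built from three or more disks. To create a RAID 5 array, you can use the following command −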

sudo mdadm --create /dev/md5 --level=5 --raid-devices=3 /dev/sde1 /dev/sdf1 /dev/sdg1

In this example, the mdadm command creates a RAID 5 array /dev/md5 using the component devices /dev/sde1, /dev/sdf1, and /dev/sdg1.
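RAID 5 parity is the bitwise XOR of the data blocks in a stripe, and md rotates which disk holds the parity from stripe to stripe. The sketch below uses small integers as stand-ins for disk blocks (the values are arbitrary) to show how a lost block is rebuilt from the survivors.

```shell
# Three-disk RAID 5 stripe: two data blocks plus one parity block.
# Small integers stand in for block contents (illustrative values).
d1=$(( 0xA5 ))
d2=$(( 0x3C ))
parity=$(( d1 ^ d2 ))            # parity = d1 XOR d2

# Simulate losing the disk that held d1: XOR the surviving blocks
rebuilt=$(( parity ^ d2 ))
echo "parity=$parity rebuilt_d1=$rebuilt original_d1=$d1"
# prints: parity=153 rebuilt_d1=165 original_d1=165
```

Real arrays apply the same XOR to whole chunks of data rather than single bytes.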

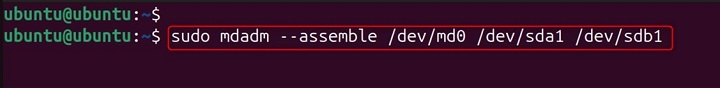

Assembling a RAID Array

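Assembling activates an array that was created earlier, for example after a reboot or after moving the disks to another machine; mdadm reads the RAID metadata stored on each component device. To assemble an existing RAID array, you can use the following command −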

sudo mdadm --assemble /dev/md0 /dev/sda1 /dev/sdb1

In this example, the mdadm command assembles the RAID array /dev/md0 using the component devices /dev/sda1 and /dev/sdb1.

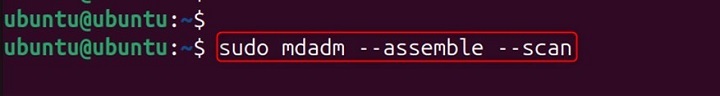

Assembling All RAID Arrays

To assemble all RAID arrays defined in the configuration file, you can use the --scan option −

sudo mdadm --assemble --scan

In this example, the mdadm command reads the configuration file (/etc/mdadm/mdadm.conf on Debian-based systems, usually /etc/mdadm.conf on Red Hat-based systems) and assembles all RAID arrays defined there.
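Arrays are declared in mdadm.conf with ARRAY lines, and on a running system `sudo mdadm --detail --scan` prints ARRAY lines for the active arrays that can be added to the file. The sketch below is a hypothetical helper that lists which array devices a configuration declares; the sample content, including the UUIDs, is made up for illustration.

```shell
# Hypothetical helper: print the device name from every ARRAY line
# in mdadm.conf-style text read from standard input.
list_configured_arrays() {
    awk '$1 == "ARRAY" { print $2 }'
}

# Illustrative sample (not real UUIDs); the comment line is skipped
list_configured_arrays <<'EOF'
# mdadm.conf
MAILADDR root
ARRAY /dev/md0 metadata=1.2 UUID=00000000:00000000:00000000:00000001
ARRAY /dev/md1 metadata=1.2 UUID=00000000:00000000:00000000:00000002
EOF
```

On a live system the same function can read the real file, for example `list_configured_arrays < /etc/mdadm/mdadm.conf`.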

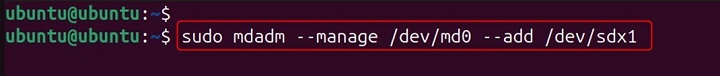

Adding a New Component Device

To add a new component device to a RAID array, you can use the --add option −

sudo mdadm --manage /dev/md0 --add /dev/sdx1

In this example, the mdadm command adds the component device /dev/sdx1 to the RAID array /dev/md0. If the array is degraded, the new device is used to rebuild it; otherwise it is kept as a spare.

Removing a Component Device

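To remove a component device from a RAID array, you can use the --remove option. Only failed or spare devices can be removed, so an active device must first be marked as faulty with --fail −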

sudo mdadm --manage /dev/md0 --remove /dev/sdx1

In this example, the mdadm command removes the component device /dev/sdx1 from the RAID array /dev/md0.

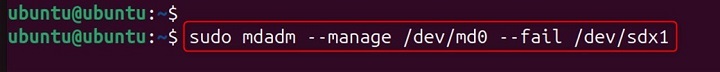

Marking a Component Device as Faulty

To mark a component device as faulty, you can use the --fail option −

sudo mdadm --manage /dev/md0 --fail /dev/sdx1

In this example, the mdadm command marks the component device /dev/sdx1 as faulty in the RAID array /dev/md0.
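Failing, removing, and adding are usually combined to replace a disk in a redundant array (RAID 1, 5, 6, or 10). The dry-run sketch below only prints the commands, in the order mdadm needs them: an active member must be marked faulty before --remove accepts it. The function name and device names are illustrative.

```shell
# Hypothetical dry-run helper: prints the mdadm steps that swap
# OLD for NEW in array MD. Nothing is executed.
# Usage: replace_member_cmds MD OLD NEW
replace_member_cmds() {
    md=$1 old=$2 new=$3
    echo "sudo mdadm --manage $md --fail $old"    # mark the old member faulty
    echo "sudo mdadm --manage $md --remove $old"  # detach it from the array
    echo "sudo mdadm --manage $md --add $new"     # new member is used for the rebuild
}

replace_member_cmds /dev/md1 /dev/sdc1 /dev/sde1
```

After the last step, the rebuild progress can be followed in /proc/mdstat.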

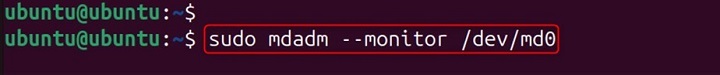

Monitoring a RAID Array

To monitor the status of a RAID array, you can use the following command −

sudo mdadm --monitor /dev/md0

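In this example, the mdadm command monitors the RAID array /dev/md0 and reports events such as a failed device or a degraded array. It runs in the foreground until interrupted, and it refuses to start unless it has somewhere to send alerts: an email address (--mail, or MAILADDR in mdadm.conf), an alert program (--program), or --syslog.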
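For a quick one-off check instead of continuous monitoring, the kernel's /proc/mdstat shows each array's member status as a string such as [UU], where an underscore marks a missing or failed member. The helper below is a hypothetical sketch that prints the arrays showing an underscore; the sample input is illustrative, not captured from a real machine.

```shell
# Hypothetical helper: read /proc/mdstat-formatted text on stdin and print
# the name of every array whose member-status field (e.g. [UU]) contains "_".
degraded_arrays() {
    awk '/^md[0-9]+ :/ { name = $1 }
         $NF ~ /^\[[U_]*_[U_]*\]$/ { print name }'
}

# On a live system: degraded_arrays < /proc/mdstat
degraded_arrays <<'EOF'
Personalities : [raid1] [raid6] [raid5] [raid4]
md1 : active raid1 sdd1[1] sdc1[0](F)
      1047552 blocks super 1.2 [2/1] [_U]

md5 : active raid5 sdg1[3] sdf1[1] sde1[0]
      2095104 blocks super 1.2 level 5, 512k chunk, algorithm 2 [3/3] [UUU]

unused devices: <none>
EOF
```

For this sample it prints md1. RAID 0 arrays have no such status field and are never reported.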

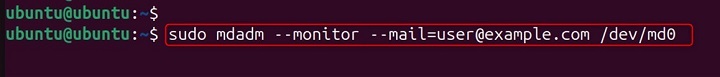

Sending Email Notifications

To send email notifications when the status of a RAID array changes, you can use the --mail option −

sudo mdadm --monitor --mail=user@example.com /dev/md0

In this example, the mdadm command monitors the status of the RAID array /dev/md0 and sends email notifications to user@example.com when the status changes.
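Besides --mail, mdadm --monitor accepts --program (or a PROGRAM line in mdadm.conf) naming a program to run for each event. mdadm passes it the event name, the md device, and, for some events, the related component device. The handler below is a hypothetical example that only formats a log line; the severity mapping is an illustrative choice.

```shell
# Hypothetical alert handler for mdadm --monitor --program=...
# mdadm calls the program as: EVENT MD_DEVICE [COMPONENT_DEVICE]
mdadm_alert() {
    event=$1 md=$2 component=${3:-}
    case $event in
        Fail|FailSpare|DegradedArray)         severity=CRITICAL ;;
        TestMessage|SpareActive|RebuildFinished) severity=INFO ;;
        *)                                    severity=NOTICE ;;
    esac
    if [ -n "$component" ]; then
        echo "$severity: $event on $md ($component)"
    else
        echo "$severity: $event on $md"
    fi
}

# Example: the call mdadm would make when /dev/sdx1 fails in /dev/md0
mdadm_alert Fail /dev/md0 /dev/sdx1
# prints: CRITICAL: Fail on /dev/md0 (/dev/sdx1)
```

Saved as an executable script that ends with `mdadm_alert "$@"`, it could be passed to --program. Running `sudo mdadm --monitor --scan --oneshot --test` sends a TestMessage event for each array, which is a convenient way to check that alerts are delivered.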

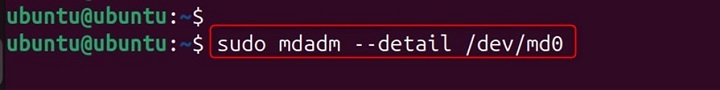

Displaying Detailed Information

To display detailed information about a RAID array, you can use the following command −

sudo mdadm --detail /dev/md0

In this example, the mdadm command displays detailed information about the RAID array /dev/md0, including its status, component devices, and configuration.
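The output of mdadm --detail is a list of "Key : value" lines, which makes individual fields easy to extract in scripts. The sketch below is a hypothetical helper; the sample is an abbreviated, illustrative excerpt rather than full real output.

```shell
# Hypothetical helper: print the value of one "Key : value" field from
# mdadm --detail output read on stdin.
# Usage: sudo mdadm --detail /dev/md0 | detail_field State
detail_field() {
    awk -F' : ' -v key="$1" '{
        k = $1
        sub(/^ +/, "", k)           # strip the leading alignment spaces
        if (k == key) print $2
    }'
}

# Abbreviated, illustrative sample of mdadm --detail output
sample='/dev/md0:
        Raid Level : raid1
      Raid Devices : 2
             State : clean, degraded
    Active Devices : 1
    Failed Devices : 1'

printf '%s\n' "$sample" | detail_field State             # clean, degraded
printf '%s\n' "$sample" | detail_field "Failed Devices"  # 1
```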

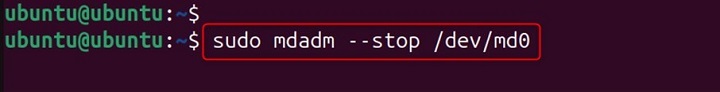

Stopping a RAID Array

To stop a RAID array, you can use the following command −

sudo mdadm --stop /dev/md0

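In this example, the mdadm command stops the RAID array /dev/md0, deactivating it and releasing its component devices. The array must not be in use, so unmount any filesystem on it first.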
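mdadm --stop fails while the array is still in use, so scripts often check for a mount first. The sketch below is a hypothetical pre-check that looks for the device in /proc/mounts-formatted text; it compares the device name exactly as written, so an array mounted under another name (such as /dev/md/name) would need that name instead. The sample mount points are made up.

```shell
# Hypothetical pre-check: exits 0 if DEVICE appears as a mount source in
# /proc/mounts-formatted text on stdin, 1 otherwise.
# Usage on a live system: is_mounted /dev/md0 < /proc/mounts
is_mounted() {
    awk -v dev="$1" '$1 == dev { found = 1 } END { exit !found }'
}

# Illustrative /proc/mounts sample
mounts='/dev/sda2 / ext4 rw,relatime 0 0
/dev/md0 /srv/data ext4 rw,relatime 0 0'

if printf '%s\n' "$mounts" | is_mounted /dev/md0; then
    echo "/dev/md0 is mounted: unmount it before running mdadm --stop"
fi
```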

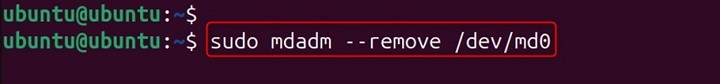

Removing a RAID Array

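To remove a RAID array permanently, first stop it, then erase the RAID metadata (superblock) from each former component device so that the array cannot be reassembled −

sudo mdadm --zero-superblock /dev/sda1 /dev/sdb1

In this example, the mdadm command clears the superblocks on /dev/sda1 and /dev/sdb1, the component devices of /dev/md0. If mdadm.conf contains an ARRAY line for the array, delete it as well.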

Conclusion

The mdadm toolset provides comprehensive management capabilities for RAID arrays, including creating, assembling, monitoring, and managing them. With the commands covered in this guide, you can carry out the most common operations on Linux software RAID arrays.