Statistics - Kurtosis

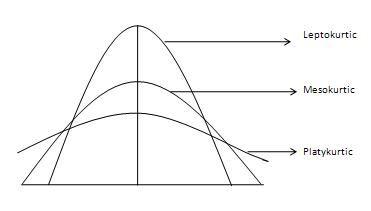

Kurtosis measures the degree of tailedness of a distribution. It tells us the extent to which the distribution is more or less outlier-prone (heavier- or lighter-tailed) than the normal distribution. Three different types of curves, courtesy of Investopedia, are shown as follows −

It is difficult to discern different types of kurtosis from the density plots (left panel) because the tails are close to zero for all distributions. But differences in the tails are easy to see in the normal quantile-quantile plots (right panel).

The normal curve is called a mesokurtic curve. If the curve of a distribution is more outlier-prone (heavier-tailed) than a normal or mesokurtic curve, it is referred to as a leptokurtic curve. If a curve is less outlier-prone (lighter-tailed) than a normal curve, it is called a platykurtic curve. Kurtosis is measured by moments and is given by the following formula −

Formula

${\beta_2 = \frac{\mu_4}{\mu_2^2}}$

Where −

${\mu_2 = \frac{\sum(x- \bar x)^2}{N}}$

${\mu_4 = \frac{\sum(x- \bar x)^4}{N}}$

The greater the value of ${\beta_2}$, the heavier-tailed (more leptokurtic) the distribution. A normal curve has ${\beta_2 = 3}$, a leptokurtic curve has ${\beta_2}$ greater than 3, and a platykurtic curve has ${\beta_2}$ less than 3.
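As a rough illustration of the formula, the following minimal sketch computes ${\beta_2}$ for ungrouped data using population (divide-by-N) moments. The function name `kurtosis_beta2` and the two small data sets are hypothetical and chosen only for illustration; they do not come from the text.

```python
def kurtosis_beta2(values):
    """Return beta_2 = mu_4 / mu_2**2 using population (divide-by-N) moments."""
    n = len(values)
    if n == 0:
        raise ValueError("need at least one value")
    mean = sum(values) / n
    # Second and fourth moments about the mean.
    mu2 = sum((x - mean) ** 2 for x in values) / n
    mu4 = sum((x - mean) ** 4 for x in values) / n
    if mu2 == 0:
        raise ValueError("kurtosis is undefined when all values are equal")
    return mu4 / mu2 ** 2


# Hypothetical illustration data (not from the text).
flat = [1, 2, 3, 4, 5]                      # evenly spread values
heavy = [-10, 0, 0, 0, 0, 0, 0, 0, 0, 10]   # mostly central, two extreme values

print(kurtosis_beta2(flat))   # below 3: platykurtic
print(kurtosis_beta2(heavy))  # above 3: leptokurtic
```

The evenly spread sample gives a value below 3, while the sample with two extreme values gives a value above 3, which matches the classification described above.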

Example

Problem Statement:

The data on the daily wages of 45 workers of a factory are given. Compute ${\beta_1}$ and ${\beta_2}$ using moments about the mean. Comment on the results.

| Wages (Rs.) | Number of Workers |
|---|---|
| 100-120 | 1 |
| 120-140 | 2 |
| 140-160 | 6 |
| 160-180 | 20 |
| 180-200 | 11 |
| 200-220 | 3 |
| 220-240 | 2 |

Solution:

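| Wages (Rs.) | Number of Workers (f) | Mid-pt (m) | ${d = \frac{m-170}{20}}$ | ${fd}$ | ${fd^2}$ | ${fd^3}$ | ${fd^4}$ |
|---|---|---|---|---|---|---|---|
| 100-120 | 1 | 110 | -3 | -3 | 9 | -27 | 81 |
| 120-140 | 2 | 130 | -2 | -4 | 8 | -16 | 32 |
| 140-160 | 6 | 150 | -1 | -6 | 6 | -6 | 6 |
| 160-180 | 20 | 170 | 0 | 0 | 0 | 0 | 0 |
| 180-200 | 11 | 190 | 1 | 11 | 11 | 11 | 11 |
| 200-220 | 3 | 210 | 2 | 6 | 12 | 24 | 48 |
| 220-240 | 2 | 230 | 3 | 6 | 18 | 54 | 162 |
| Total | ${N=45}$ | | | ${\sum fd = 10}$ | ${\sum fd^2 = 64}$ | ${\sum fd^3 = 40}$ | ${\sum fd^4 = 340}$ |

Since the deviations are taken from an assumed mean of 170, we first calculate the moments about this arbitrary origin and then convert them to moments about the mean. The class width is ${i = 20}$.

Moments about arbitrary origin '170'

${\mu_1^{'} = \frac{\sum fd}{N} \times i = \frac{10}{45} \times 20 \approx 4.44}$

${\mu_2^{'} = \frac{\sum fd^2}{N} \times i^2 = \frac{64}{45} \times 20^2 \approx 568.89}$

${\mu_3^{'} = \frac{\sum fd^3}{N} \times i^3 = \frac{40}{45} \times 20^3 \approx 7111.11}$

${\mu_4^{'} = \frac{\sum fd^4}{N} \times i^4 = \frac{340}{45} \times 20^4 \approx 1208888.89}$

Moments about mean

${\mu_2 = \mu_2^{'} - (\mu_1^{'})^2 \approx 549.14}$

${\mu_3 = \mu_3^{'} - 3\mu_1^{'}\mu_2^{'} + 2(\mu_1^{'})^3 \approx -298.49}$

${\mu_4 = \mu_4^{'} - 4\mu_1^{'}\mu_3^{'} + 6(\mu_1^{'})^2\mu_2^{'} - 3(\mu_1^{'})^4 \approx 1148722.46}$

From the values of the moments about the mean, we can now calculate ${\beta_1}$ and ${\beta_2}$:

${\beta_1 = \frac{\mu_3^2}{\mu_2^3} = \frac{(-298.49)^2}{(549.14)^3} \approx 0.00054}$

${\beta_2 = \frac{\mu_4}{\mu_2^2} = \frac{1148722.46}{(549.14)^2} \approx 3.81}$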

From the above calculations, it can be concluded that ${\beta_1}$, which measures skewness, is almost zero, indicating that the distribution is almost symmetrical. ${\beta_2}$, which measures kurtosis, has a value greater than 3, implying that the distribution is leptokurtic.
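The worked example can be cross-checked numerically. The sketch below recomputes the moments directly from the class midpoints and frequencies, using the same assumed mean (170) and class width (20) as the solution table. The helper names `raw_moments` and `central_from_raw` are hypothetical, introduced only for this illustration.

```python
# Grouped wage data from the example: (class midpoint, frequency).
classes = [(110, 1), (130, 2), (150, 6), (170, 20), (190, 11), (210, 3), (230, 2)]
ASSUMED_MEAN = 170  # arbitrary origin used in the solution table
CLASS_WIDTH = 20    # common class width i


def raw_moments(classes, origin, width):
    """First four moments about an arbitrary origin, via step deviations d."""
    n = sum(f for _, f in classes)
    moments = []
    for r in range(1, 5):
        sum_fd_r = sum(f * ((m - origin) / width) ** r for m, f in classes)
        moments.append(sum_fd_r / n * width ** r)
    return moments


def central_from_raw(m1, m2, m3, m4):
    """Convert moments about an arbitrary origin to moments about the mean."""
    mu2 = m2 - m1 ** 2
    mu3 = m3 - 3 * m1 * m2 + 2 * m1 ** 3
    mu4 = m4 - 4 * m1 * m3 + 6 * m1 ** 2 * m2 - 3 * m1 ** 4
    return mu2, mu3, mu4


m1, m2, m3, m4 = raw_moments(classes, ASSUMED_MEAN, CLASS_WIDTH)
mu2, mu3, mu4 = central_from_raw(m1, m2, m3, m4)
beta1 = mu3 ** 2 / mu2 ** 3  # skewness measure
beta2 = mu4 / mu2 ** 2       # kurtosis measure

print(f"beta1 = {beta1:.5f}, beta2 = {beta2:.2f}")
```

Because ${\beta_1}$ and ${\beta_2}$ are ratios of moments, they do not depend on the choice of assumed mean or class width; those only simplify the hand calculation.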