TSSN - Software Architecture

In this chapter, we will learn about the Software Architecture of Telecommunication Switching Systems and Networks.

The software of SPC systems can be divided into two categories for better understanding: System Software and Application Software. The software architecture deals with the system software environment of SPC, including the language processors. Many features, along with call processing, are part of the operating system under which operations and management functions are carried out.

Call processing is the main processing function, and it is event oriented: an event occurring at a subscriber's line or trunk triggers call processing. Call setup is not done in one continuous processing sequence in the exchange. The entire process consists of many elementary processes, each lasting a few tens or hundreds of milliseconds. Many calls are processed simultaneously in this way, and each call is handled by a separate Process. A Process is an active entity, a program in execution, and is sometimes also termed a task.

Process in a Multiprogramming Environment

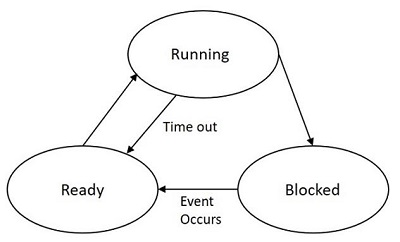

A Process in a multiprogramming environment may be in one of the following states −

- Running

- Ready

- Blocked

The state of a process is defined by its current activity, and the state changes as the process executes.

A Process is said to be running if one of its instructions is currently being executed by the processor.

A Process is said to be ready if it could run but is waiting for the processor, for example because its time slice has timed out.

A Process is said to be blocked if it is waiting for some event to occur before it can proceed.

The following figure shows the transitions between the running, ready and blocked states.

At any instant, some processes are running, some are ready and others are blocked. The processes in the ready list are ordered by priority. The blocked processes are unordered; each one is unblocked when the event it is waiting for occurs. If a running process has to wait for some event or resource, processor time is saved by moving it to the blocked list. Once the event occurs, the process is placed in the ready list and runs again when its priority is the highest among the ready processes.
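The state transitions described above can be sketched as a toy scheduler. This is a minimal illustration, not the scheduler of any real exchange: the class and method names, the event names, and the convention that a lower number means a higher priority are all assumptions made for the example.

```python
import heapq
from enum import Enum


class State(Enum):
    RUNNING = "running"
    READY = "ready"
    BLOCKED = "blocked"


class Scheduler:
    """Toy single-processor scheduler (hypothetical); lower number = higher priority."""

    def __init__(self):
        self.ready = []       # heap of (priority, seq, name): the ordered ready list
        self.blocked = {}     # name -> (priority, awaited event): unordered
        self.running = None   # (priority, name) or None
        self._seq = 0         # tie-breaker so equal priorities are served in order

    def admit(self, name, priority):
        """Place a process in the ready list."""
        heapq.heappush(self.ready, (priority, self._seq, name))
        self._seq += 1

    def dispatch(self):
        """ready -> running: give a free processor to the best ready process."""
        if self.running is None and self.ready:
            priority, _, name = heapq.heappop(self.ready)
            self.running = (priority, name)
        return self.running

    def block(self, event):
        """running -> blocked: the running process waits for an event."""
        priority, name = self.running
        self.blocked[name] = (priority, event)
        self.running = None

    def timeout(self):
        """running -> ready: the time slice of the running process expired."""
        priority, name = self.running
        self.running = None
        self.admit(name, priority)

    def signal(self, event):
        """blocked -> ready: the awaited event has occurred."""
        for name, (priority, awaited) in list(self.blocked.items()):
            if awaited == event:
                del self.blocked[name]
                self.admit(name, priority)

    def state_of(self, name):
        """State of an admitted process (anything not running or blocked is ready)."""
        if self.running is not None and self.running[1] == name:
            return State.RUNNING
        if name in self.blocked:
            return State.BLOCKED
        return State.READY
```

For example, after admitting `call_A` with priority 1 and `call_B` with priority 2, `dispatch()` runs `call_A`; calling `block("digit_received")` moves it to the blocked list so that the next `dispatch()` runs `call_B`.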

Process Control Block

Each process in the operating system is represented by a Process Control Block (PCB). The PCB is a data structure containing the following information about the process −

- Current state of the process

- Priority of the process while it is in the ready state

- CPU scheduling parameters

- Saved contents of the CPU when the process gets interrupted

- Memory allocated to the process

- Details of the process, such as its number and CPU usage

- Status of events and I/O resources associated with the process

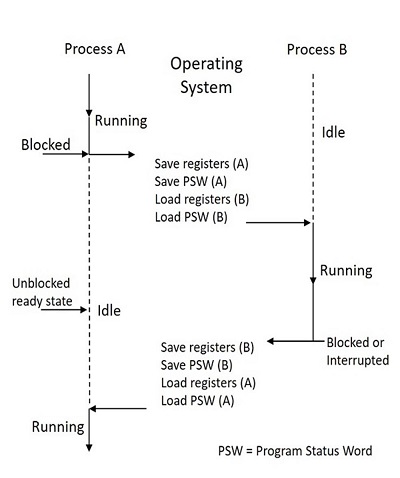

The PCB holds all the information needed to resume the process when it next gets the CPU. The CPU registers include a Program Status Word (PSW) that contains the address of the next instruction to be executed, the types of interrupts currently enabled or disabled, etc.
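As a rough illustration, the PCB fields listed above could be modelled as a small record, with two helpers that save and restore the CPU contents around an interruption. The field names, the register names and the `(base, limit)` memory layout are assumptions made for this sketch; a real switching processor defines its own layout.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessControlBlock:
    """Hypothetical PCB layout mirroring the fields listed in the text."""
    pid: int                                            # process number
    state: str = "ready"                                # "running", "ready" or "blocked"
    priority: int = 0                                   # used while in the ready list
    scheduling: dict = field(default_factory=dict)      # CPU scheduling parameters
    registers: dict = field(default_factory=dict)       # saved CPU contents, incl. PSW
    memory: tuple = (0, 0)                              # assumed (base, limit) allocation
    cpu_time_ms: int = 0                                # accounting: CPU usage
    pending_events: list = field(default_factory=list)  # awaited events and I/O


def save_context(pcb, cpu_registers):
    """Copy the CPU contents into the PCB when the process is interrupted."""
    pcb.registers = dict(cpu_registers)   # copy, so later CPU changes do not leak in
    pcb.state = "ready"


def restore_context(pcb):
    """Return the register values to reload when the process gets the CPU again."""
    pcb.state = "running"
    return dict(pcb.registers)
```

Saving a copy of the registers, rather than a reference, is the design point here: the next process overwrites the CPU contents, and the interrupted process must still find its own values when it resumes.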

The CPU has to switch from the currently running process to another one when the running process becomes blocked, or when an event or interrupt occurs that triggers a higher-priority process. This is called Process Switching, which is also known as Context Switching. The interrupt priority mechanism is described in the following figure.

Suppose a process A scans a particular subscriber line, finds it free and starts to establish a call with that subscriber. If another process B claims priority and establishes a call with the same subscriber at the same time, both processes end up setting up a call to the same subscriber, which is not acceptable. A similar problem can occur with other shared tables and files.

Information about the resources of the exchange (trunks, registers, etc.) and their current utilization is kept in the form of tables. These tables are shared by different processes as needed. A problem occurs when two or more processes access the same table at the same time. It can be solved by giving only one process at a time access to a shared table.

Sharing Resources

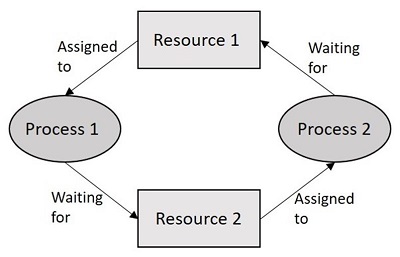

Whenever a process uses a shared table or any other shared resource, all the other processes that need it are kept waiting. When the running process finishes using the resource, it is allotted to the highest-priority process that is waiting for it. This way of using shared resources is called Mutual Exclusion. A process that is accessing the shared resource is said to be in its Critical Section or Critical Region. Mutual Exclusion implies that only one process can be in the critical region at any instant for a given shared resource. The code a process executes in its critical section is written very carefully, so that it contains no infinite loops and the process does not get blocked there. The work done is then more accurate and efficient, which also helps the other processes that are waiting.

When two processes share a common resource through a semaphore, each uses it for certain time intervals. While one uses the resource, the other waits. To stay in step with the other process, the waiting process reads the semaphore value that was last written. A process may proceed only if this value is non-zero, and the value is incremented when the resource is released; otherwise the process is sent to the blocked list. The processes in the blocked list are queued and are allowed to use the resource according to their priority.
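A minimal sketch of mutual exclusion on a shared table, using Python's standard `threading.Lock`. The trunk table, its contents and the function names are hypothetical; threads stand in for the call-handling processes of the exchange.

```python
import threading

# Hypothetical shared table: trunk number -> "free" or "busy".
trunk_table = {1: "free", 2: "free", 3: "free"}
table_lock = threading.Lock()   # guards every access to trunk_table


def seize_free_trunk():
    """Find a free trunk and mark it busy, all inside the critical section."""
    with table_lock:                          # enter the critical section
        for trunk, status in trunk_table.items():
            if status == "free":
                trunk_table[trunk] = "busy"   # test and update cannot be interleaved
                return trunk
        return None                           # every trunk is busy
    # the lock is released automatically when the with-block is left


def run_calls(n_calls):
    """Start n_calls threads that each try to seize a trunk; return their results."""
    seized = []

    def call():
        seized.append(seize_free_trunk())

    threads = [threading.Thread(target=call) for _ in range(n_calls)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return seized
```

The critical section is short and contains no waiting, in line with the requirement that code inside it must not loop indefinitely or block. Without the lock, two threads could both see the same trunk as free and both seize it, which is the double-assignment problem described for processes A and B.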
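The counter-and-blocked-list behaviour of a semaphore can be sketched as follows. This is a single-threaded toy model meant only to show the bookkeeping, not a usable synchronization primitive; the class name, the method names `wait` and `signal`, and the rule that a lower number means a higher priority are assumptions of the sketch.

```python
import heapq


class ToySemaphore:
    """Toy model of a semaphore: a counter plus a priority-ordered blocked list."""

    def __init__(self, count=1):
        self.count = count    # non-zero means the resource can still be taken
        self.blocked = []     # heap of (priority, seq, process)
        self._seq = 0         # keeps equal priorities in arrival order

    def wait(self, process, priority):
        """Take the resource if the counter is non-zero, else join the blocked list."""
        if self.count > 0:
            self.count -= 1
            return True                       # the process proceeds
        heapq.heappush(self.blocked, (priority, self._seq, process))
        self._seq += 1
        return False                          # the process is blocked

    def signal(self):
        """Release the resource: pass it to the best blocked process, if any."""
        if self.blocked:
            _, _, process = heapq.heappop(self.blocked)
            return process                    # this process now holds the resource
        self.count += 1                       # nobody waiting: increment the counter
        return None
```

With `ToySemaphore(1)`, the first process to call `wait` proceeds and later callers are placed on the blocked list; each `signal` then hands the resource to the blocked process with the best priority.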

The following figure shows how the process works −

If two or more processes each wait indefinitely for a resource held by another, so that none of them can use the resource and all of them remain in the blocked state, the situation is called a Deadlock.

Techniques have been developed for deadlock prevention, avoidance, detection and recovery. These are the salient features of an operating system for switching processors.
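Of the four techniques, detection is the easiest to illustrate: a deadlock shows up as a cycle among processes that wait for one another. The sketch below assumes, for simplicity, that each blocked process waits for exactly one other process; the function name and the dictionary representation are hypothetical.

```python
def find_deadlock(waits_for):
    """Return the processes forming a waiting cycle, or None if there is none.

    waits_for maps a blocked process to the process that holds the
    resource it needs (assumption: at most one such holder per process).
    """
    for start in waits_for:
        seen = []
        current = start
        # Follow the chain of "is waiting for" until it ends or repeats.
        while current in waits_for and current not in seen:
            seen.append(current)
            current = waits_for[current]
        if current in seen:
            # The chain came back to a process already visited: a cycle.
            return seen[seen.index(current):]
    return None
```

For instance, `find_deadlock({"A": "B", "B": "A"})` reports the cycle `["A", "B"]`, while `find_deadlock({"A": "B", "B": "C"})` returns `None` because process C is not waiting for anyone.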

Software Production

SPC software production is important because of the complexity and size of the software, its long working life, and the need for reliability, availability and portability.

Software production is the branch of software engineering that deals with the problems encountered in the production and maintenance of large-scale software for complex systems. The practice of software engineering is categorized into four stages, which together make up the production of software systems.

- Functional specifications

- Formal description and detailed specifications

- Coding and verification

- Testing and debugging

The application software of a switching system may be divided into call processing software, administrative software and maintenance software. These application software packages use a modular organization.

With the introduction of Stored Program Control, a host of new or improved services can be made available to subscribers. These enhanced services include abbreviated dialing, recorded number calls or no-dialing calls, call back when free, call forwarding, operator answer, calling number record, call waiting, consultation hold, conference calls, automatic alarm, STD barring and malicious call tracing.

Multi-Stage Networks

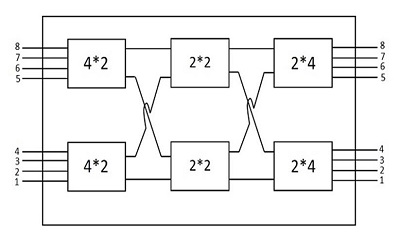

Multistage networks are built to provide connections among a larger number of subscribers more efficiently than Crossbar switching systems.

The Crossbar switching networks discussed previously have some limitations as described below −

- The number of Crosspoints is the square of the number of attached stations, which makes a large switch costly.

- The failure of a Crosspoint prevents connection between the two subscribers that it links.

- Even when all the attached devices are active, only a few of the Crosspoints are utilized.

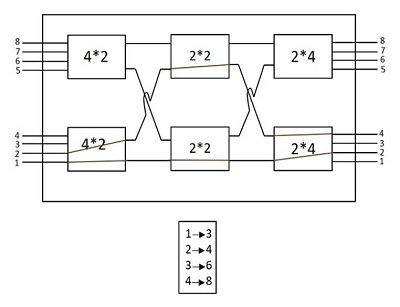

To overcome these disadvantages, multistage space division switches were built. By splitting the Crossbar switch into smaller units and interconnecting them, it is possible to build multistage switches with fewer Crosspoints. The following figure shows an example of a multistage switch.

A multistage switch like the one above needs fewer Crosspoints than a Crossbar switch. In the example shown above, with 8 inputs and 8 outputs (calling and called subscribers), a normal Crossbar network needs 8 × 8 = 64 Crosspoints. In the multistage network, just 40 Crosspoints are enough, as shown in the diagram above. In a large multistage switch, the reduction is more significant.
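The two counts can be reproduced by adding up the crosspoints stage by stage. The sketch below assumes a symmetric three-stage arrangement; the parameter names `N`, `n` and `k` and both function names are illustrative. The configuration N = 8, n = 4, k = 2 is an assumption: the text does not state it, but it yields 40 crosspoints and is consistent with blocks that have 4 inputs and 2 outputs.

```python
def single_stage_crosspoints(n_inputs, n_outputs):
    """Crosspoints in a plain crossbar: one per input/output pair."""
    return n_inputs * n_outputs


def three_stage_crosspoints(N, n, k):
    """Crosspoints in a symmetric three-stage switch (assumed structure).

    N: total number of inputs (and of outputs)
    n: inputs per first-stage block; N must be a multiple of n
    k: number of middle-stage switches
    """
    if N % n != 0:
        raise ValueError("N must be a multiple of n")
    blocks = N // n
    first = blocks * (n * k)         # 'blocks' switches of size n x k
    middle = k * (blocks * blocks)   # k switches of size blocks x blocks
    last = blocks * (k * n)          # 'blocks' switches of size k x n
    return first + middle + last


crossbar = single_stage_crosspoints(8, 8)       # 64
multistage = three_stage_crosspoints(8, 4, 2)   # 16 + 8 + 16 = 40
```

Raising `k` from 2 to 3 in the same arrangement gives 60 crosspoints, which shows how adding intermediate switches increases cost.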

Advantages of a Multistage Network

The advantages of a multistage network are as follows −

- The number of Crosspoints is reduced.

- More paths can be available for a connection.

Disadvantages of a Multistage Network

The disadvantages of a multistage network are as follows −

- Multistage switches may cause Blocking.

- Increasing the number or size of the intermediate switches can solve this problem, but the cost increases as well.

Blocking

Blocking is the price paid for reducing the number of Crosspoints. The following diagram will help you understand Blocking in a better way.

In the above figure, where a block has 4 inputs and 2 outputs, Subscriber 1 is connected to Line 3 and Subscriber 2 is connected to Line 4. The red-colored lines indicate the connections. If calling requests now arrive from Subscriber 3 and Subscriber 4, they cannot be processed, because no output is left on which to establish the call.

The subscribers of the block above (as shown in the above diagram) face the same problem. Only two inputs of a block can be connected at a time; connecting more than two, or all, of the inputs is not possible, because the limit depends on the number of outputs present. Hence, some connections cannot be established simultaneously, and those calls are said to be blocked.
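The blocking behaviour of one such block can be imitated with a few lines of code. This is a sketch under assumptions: the class and method names are hypothetical, and the output links are simply numbered 1 and 2 rather than using the line labels of the figure.

```python
class ConcentratorBlock:
    """Toy first-stage block with more inputs than outputs (4 x 2 by default)."""

    def __init__(self, n_inputs=4, n_outputs=2):
        self.n_inputs = n_inputs
        self.free_outputs = list(range(1, n_outputs + 1))
        self.connections = {}   # subscriber (input) -> output link in use

    def request(self, subscriber):
        """Try to connect a subscriber; return its output link, or None if blocked."""
        if not 1 <= subscriber <= self.n_inputs:
            raise ValueError("unknown subscriber")
        if subscriber in self.connections:
            return self.connections[subscriber]   # already connected
        if not self.free_outputs:
            return None                           # no output left: call is blocked
        link = self.free_outputs.pop(0)
        self.connections[subscriber] = link
        return link

    def release(self, subscriber):
        """End a call and make its output link available again."""
        link = self.connections.pop(subscriber)
        self.free_outputs.append(link)
```

With the default sizes, requests from subscribers 1 and 2 succeed, and requests from subscribers 3 and 4 return `None` until one of the first two calls is released.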