Operating System - Virtual Memory

Virtual memory is a memory management technique used by modern operating systems to create the illusion of a large, continuous memory space, even when the physical memory (RAM) is smaller. A part of the hard disk is used as an extension of the physical memory to store data and programs that are not currently in use. The operating system stores the mapping between virtual addresses and physical addresses in a data structure called a page table.

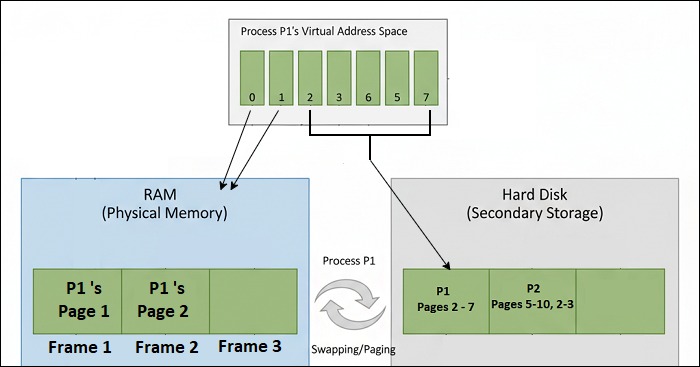

The image below shows how operating system splits the process between physical memory (RAM) and hard disk using virtual memory −

In the image above, the process is divided into pages (Page 1, Page 2, Page 3, ... Page 7). The first 2 pages are loaded into the physical memory (RAM), and the rest are stored on the hard disk. When the process needs to access a page that is not in the physical memory, the OS will swap the required page from the hard disk into the physical memory.

How Virtual Memory Works?

The steps below explain how virtual memory works in paging systems −

- The idea behind virtual memory is to keep the part of the program that is currently in use in the physical memory (RAM) and keep the rest of the program on the hard disk.

- When the process needs to access a part of the program, the OS will check the page table to see whether the page is in the physical memory.

- If the page is in the physical memory, the CPU can access it directly. If not, a page fault occurs, and the OS loads the page from the hard disk into the physical memory and updates the page table.

- While loading a new page into the physical memory, if the space is full, the OS will use a page replacement algorithm to decide which page to remove and make space for the new page in RAM.
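The steps above can be sketched as a small simulation. This is only an illustrative model, not how any particular operating system implements it: the function name `simulate_demand_paging` is hypothetical, and FIFO is assumed as the page replacement algorithm purely because it is the simplest one to show.

```python
from collections import deque


def simulate_demand_paging(reference_string, num_frames):
    """Replay a sequence of page accesses and count page faults.

    Assumption: FIFO replacement (evict the page that was loaded first).
    """
    page_table = {}                        # page number -> frame number (resident pages only)
    load_order = deque()                   # resident pages, oldest first
    free_frames = list(range(num_frames))  # frames not yet in use
    faults = 0

    for page in reference_string:
        if page in page_table:
            continue                       # page is in RAM: no fault
        faults += 1                        # page fault: page must come from disk
        if free_frames:
            frame = free_frames.pop(0)     # RAM still has room
        else:
            victim = load_order.popleft()  # RAM is full: pick the oldest page
            frame = page_table.pop(victim) # reuse the victim's frame
        page_table[page] = frame           # update the page table
        load_order.append(page)

    return faults, page_table


faults, resident = simulate_demand_paging([1, 2, 3, 1, 4, 2, 5], num_frames=3)
print(faults)            # 5
print(sorted(resident))  # [3, 4, 5]
```

With three frames, the first three accesses fault because RAM is empty, and the accesses to pages 4 and 5 each evict the oldest resident page.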

Types of Virtual Memory

Modern operating systems use two techniques to implement virtual memory −

- Paging

- Segmentation

Virtual Memory in Paging

Paging is a memory management technique used by modern operating systems to manage the allocation of memory to processes. In this technique, the physical memory (i.e., the RAM) is divided into fixed-size blocks called frames, and the logical memory (i.e., the address space of the process) is divided into blocks of the same size called pages. When a process is executed, its pages are loaded into available frames in the physical memory.
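Because pages and frames have the same size, a virtual address can be split into a page number and an offset, and only the page number needs to be translated. The sketch below illustrates this under stated assumptions: the 4096-byte page size, the `translate` function, and the sample page table are all hypothetical values chosen for the example.

```python
PAGE_SIZE = 4096  # assumed page/frame size in bytes


def translate(virtual_address, page_table, page_size=PAGE_SIZE):
    """Map a virtual address to a physical address using a page table."""
    # Split the address into (page number, offset within the page).
    page_number, offset = divmod(virtual_address, page_size)
    if page_number not in page_table:
        # The page is not resident in RAM; a real OS would handle a page fault here.
        raise LookupError(f"page fault: page {page_number} is not in RAM")
    frame_number = page_table[page_number]
    # The offset is unchanged; only the page number is replaced by the frame number.
    return frame_number * page_size + offset


page_table = {0: 5, 1: 2}           # hypothetical mapping: page -> frame
print(translate(4100, page_table))  # page 1, offset 4 -> frame 2 -> 8196
```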

The image below shows how paging works in an operating system −

Virtual Memory in Segmentation

Segmentation is another memory management technique used by modern operating systems to manage the allocation of memory to processes. Here, the logical memory (i.e., the address space of the process) is divided into variable-sized segments based on the logical structure of a program, such as the code segment, data segment, stack segment, etc. The physical memory (i.e., the RAM) is not divided in advance; when a process is executed, each of its segments is loaded into a free contiguous region of physical memory that is large enough to hold it.

The main difference between paging and segmentation is that in paging, the memory is divided into fixed-size blocks. In segmentation, the memory is divided into blocks of variable size based on the logical structure of a program.
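Address translation under segmentation is commonly described with a segment table that stores a base address and a limit (length) for each segment. The sketch below assumes that model; the names `translate_segment` and `SegmentationFault` and the sample table values are hypothetical.

```python
class SegmentationFault(Exception):
    """Raised when an offset falls outside its segment (illustrative only)."""


def translate_segment(segment, offset, segment_table):
    """Map (segment, offset) to a physical address using base and limit."""
    base, limit = segment_table[segment]
    # Unlike pages, segments differ in length, so every access is bounds-checked.
    if not 0 <= offset < limit:
        raise SegmentationFault(f"offset {offset} is outside segment {segment!r}")
    return base + offset


# Hypothetical segment table: name -> (base address, limit in bytes)
segment_table = {"code": (1000, 400), "data": (3000, 120), "stack": (5000, 200)}
print(translate_segment("code", 50, segment_table))  # 1050
```

The bounds check is also what makes per-segment protection possible: an access beyond the limit is rejected instead of reaching memory that belongs to another segment.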

Features of Segmentation

The key features of segmentation are −

- Logical division of memory − Segments represent meaningful units like code, stack, data, or modules.

- Variable-sized divisions − Segments can have different lengths, depending on the program's requirements.

- No internal fragmentation − Since each segment is sized to fit its contents rather than rounded up to a fixed block size, no memory is wasted inside a segment.

- External fragmentation − Can occur when free memory is divided into small scattered blocks.

- Protection and sharing − Different segments can have access rights (read, write, execute) and can be shared among processes.

Advantages of Virtual Memory

The benefits of using virtual memory are −

- Increased memory capacity − Virtual memory creates an illusion of having a large memory space. This makes it possible to run applications that are larger than the physical memory (RAM) available in the system.

- Efficient memory usage − Virtual memory improves the efficiency of memory usage by loading only the required pages into physical memory. This reduces wastage of memory and lets more processes share the same RAM.

- Isolation of memory − Virtual memory gives each process its own address space. This improves the safety and security of the system by preventing one process from accessing the memory of another process.

- Transparent swapping of pages − The OS swaps pages between physical memory and disk without involving the process, so the process runs as if all of it were in physical memory.

Limitations of Virtual Memory

Virtual memory also comes with limitations, such as −

- Complexity − Virtual memory adds significant complexity to the operating system. The OS must maintain the mapping between virtual addresses and physical addresses, and must also handle page faults and page replacement.

- Performance Issues − Accessing data from the hard disk is much slower than accessing data from RAM. So, if a process frequently accesses pages that are not in physical memory, it can lead to slow running of the process.

- Thrashing − Thrashing occurs when the system spends more time swapping pages in and out of physical memory than executing the process. This can happen when the size of RAM is very small compared to what the process needs.
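The performance cost described above is often estimated with the effective access time, a weighted average of the RAM access time and the page fault service time. The sketch below assumes that standard formula; the function name and the timing figures (100 ns for RAM, 8 ms to service a fault) are illustrative assumptions, not measurements.

```python
def effective_access_time(fault_rate, memory_ns, fault_service_ns):
    """Average time per memory access when a fraction of accesses page-fault.

    EAT = (1 - p) * memory_access_time + p * page_fault_service_time
    """
    return (1 - fault_rate) * memory_ns + fault_rate * fault_service_ns


# Assumed figures: 100 ns RAM access, 8 ms (8,000,000 ns) to service a page fault.
for p in (0.0, 0.001, 0.01):
    print(p, effective_access_time(p, 100, 8_000_000))
```

Under these assumed figures, even one fault per thousand accesses raises the average access time from 100 ns to roughly 8,100 ns, which is why a high page fault rate leads to thrashing.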

Applications of Virtual Memory

Most modern operating systems use virtual memory to manage memory. Some of the popular operating systems that use virtual memory are −

- Microsoft Windows

- Linux

- macOS

- Unix

- Android

Virtual Memory vs Physical Memory

The table below shows the key differences between virtual memory and physical memory −

| Criteria | Virtual Memory | Physical Memory |
|---|---|---|
| Definition | Virtual memory is a memory management technique that creates an illusion of having a large and continuous memory space. | Physical memory is the actual hardware component (RAM) that stores data and programs currently in use. |
| Storage Location | It uses both RAM and a portion of the hard disk. The part of process not currently in use is stored on the hard disk. | It is located on the computer's motherboard as RAM modules. |
| Capacity | High capacity as it combines RAM and hard disk space. | The capacity is limited to the size of the installed RAM in the system. |
| Management | It uses techniques like paging and segmentation to manage memory allocation to processes. | It is managed by the operating system. |

Conclusion

Virtual memory is a technique that creates the illusion of a large and continuous memory space by backing RAM with a portion of the hard disk. This improves the efficiency of memory usage and even allows running applications that are larger than the RAM available in the system. There are two virtual memory techniques: paging and segmentation. The major limitations of virtual memory are its complexity and the performance cost of the hard disk's slow access times compared to RAM.