Dynamic Programming

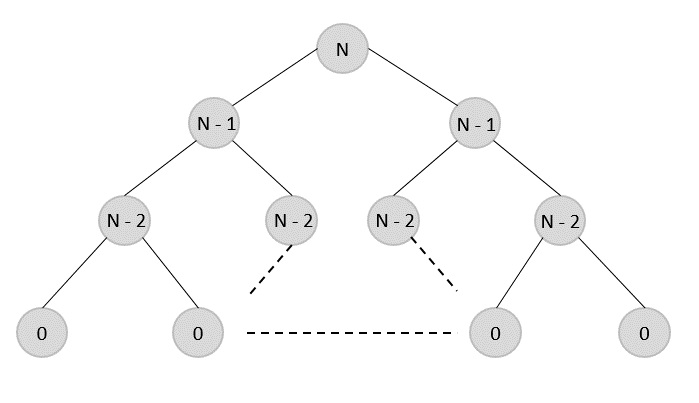

The dynamic programming approach is similar to divide and conquer in that it breaks a problem down into smaller and smaller sub-problems. Unlike divide and conquer, however, these sub-problems are not solved independently. Instead, the results of the smaller sub-problems are remembered and reused for similar or overlapping sub-problems.

Dynamic programming algorithms are mostly used for solving optimization problems. Before solving the sub-problem at hand, a dynamic algorithm examines the results of previously solved sub-problems. The solutions of the sub-problems are then combined to obtain an optimal solution to the whole problem. When the smallest sub-problems are solved first and their results are built up into larger ones, the algorithm is said to use a bottom-up approach.

So we can conclude that −

The problem should be divisible into smaller, overlapping sub-problems.

The final optimal solution can be built from optimal solutions of the smaller sub-problems.

Dynamic algorithms use memoization.

Two main properties of a problem suggest that it can be solved using Dynamic Programming. They are −

Overlapping Sub-Problems

Like the Divide-and-Conquer approach, Dynamic Programming combines solutions to sub-problems. It is mainly used where the solution of the same sub-problem is needed repeatedly. The computed solutions are stored in a table so that they don't have to be re-computed. Hence, this technique is useful only where overlapping sub-problems exist.

For example, Binary Search does not have overlapping sub-problems, whereas the recursive program for Fibonacci numbers has many overlapping sub-problems.
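To make the overlap concrete, the following is a minimal sketch that counts how many times the Fibonacci recursion actually does work, with and without a table of stored results. The function names `fib_naive` and `fib_memo` and the one-element list used as a call counter are illustrative choices, not part of the original tutorial.

```python
def fib_naive(n, counter):
    """Plain recursion: the same sub-problems are solved again and again."""
    counter[0] += 1                      # count every call
    if n < 2:
        return n
    return fib_naive(n - 1, counter) + fib_naive(n - 2, counter)


def fib_memo(n, counter, table=None):
    """Same recursion, but each result is stored in a table and reused."""
    if table is None:
        table = {}
    if n in table:                       # already solved: just look it up
        return table[n]
    counter[0] += 1                      # count only real computations
    result = n if n < 2 else (
        fib_memo(n - 1, counter, table) + fib_memo(n - 2, counter, table)
    )
    table[n] = result
    return result


naive_calls, memo_calls = [0], [0]
print(fib_naive(20, naive_calls), naive_calls[0])   # 6765 21891
print(fib_memo(20, memo_calls), memo_calls[0])      # 6765 21
```

Both versions return the same value, but the memoized one solves each of the 21 distinct sub-problems (0 through 20) exactly once.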

Optimal Sub-Structure

A given problem has the Optimal Substructure property if an optimal solution of the problem can be obtained from optimal solutions of its sub-problems.

For example, the Shortest Path problem has the following optimal substructure property −

If a node x lies on a shortest path from a source node u to a destination node v, then that shortest path from u to v is the combination of a shortest path from u to x and a shortest path from x to v.

Standard shortest-path algorithms such as Floyd-Warshall (all pairs) and Bellman-Ford (single source) are typical examples of Dynamic Programming.
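As an illustration of optimal substructure, here is a small sketch of the Floyd-Warshall recurrence: the shortest distance from i to j that may pass through node k is either the best distance found so far or the shortest i-to-k distance plus the shortest k-to-j distance. The adjacency-matrix input format, the function name `floyd_warshall` and the sample graph are assumptions made for this example, and the sketch assumes the graph has no negative cycles.

```python
import math

INF = math.inf  # marks "no direct edge"


def floyd_warshall(weights):
    """Return the matrix of shortest distances between all pairs of nodes.

    weights[i][j] is the weight of edge i -> j, INF if absent, 0 on the diagonal.
    """
    n = len(weights)
    dist = [row[:] for row in weights]   # copy so the input is left untouched
    for k in range(n):                   # allow node k as an intermediate stop
        for i in range(n):
            for j in range(n):
                via_k = dist[i][k] + dist[k][j]
                if via_k < dist[i][j]:
                    dist[i][j] = via_k
    return dist


graph = [
    [0,   3,   INF, 7],
    [8,   0,   2,   INF],
    [5,   INF, 0,   1],
    [2,   INF, INF, 0],
]
for row in floyd_warshall(graph):
    print(row)
# [0, 3, 5, 6]
# [5, 0, 2, 3]
# [3, 6, 0, 1]
# [2, 5, 7, 0]
```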

Steps of Dynamic Programming Approach

A Dynamic Programming algorithm is designed using the following four steps −

Characterize the structure of an optimal solution.

Recursively define the value of an optimal solution.

Compute the value of an optimal solution, typically in a bottom-up fashion.

Construct an optimal solution from the computed information.
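The four steps above can be sketched on the Longest Common Subsequence problem. This is one possible illustration rather than a definitive implementation; the function name `lcs` and the sample strings are chosen only for this example.

```python
def lcs(a, b):
    """Return (length, one longest common subsequence) of strings a and b."""
    m, n = len(a), len(b)

    # Steps 1 and 2: an optimal solution for a[:i], b[:j] extends the optimal
    # solution for a[:i-1], b[:j-1] when the last characters match; otherwise
    # it is the better of dropping the last character of a or of b.
    # table[i][j] holds the LCS length of a[:i] and b[:j].
    table = [[0] * (n + 1) for _ in range(m + 1)]

    # Step 3: compute the values bottom-up, smallest prefixes first.
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            if a[i - 1] == b[j - 1]:
                table[i][j] = table[i - 1][j - 1] + 1
            else:
                table[i][j] = max(table[i - 1][j], table[i][j - 1])

    # Step 4: walk back through the table to construct one optimal solution.
    chars = []
    i, j = m, n
    while i > 0 and j > 0:
        if a[i - 1] == b[j - 1]:
            chars.append(a[i - 1])
            i -= 1
            j -= 1
        elif table[i - 1][j] >= table[i][j - 1]:
            i -= 1
        else:
            j -= 1
    return table[m][n], "".join(reversed(chars))


length, subsequence = lcs("ABCBDAB", "BDCABA")
print(length, subsequence)   # the length is 4; several subsequences of that length exist
```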

Dynamic Programming vs. Greedy vs. Divide and Conquer

In contrast to greedy algorithms, which make locally optimal choices, dynamic algorithms aim at an overall optimization of the problem.

In contrast to divide and conquer algorithms, where solutions of independent sub-problems are combined to achieve an overall solution, dynamic algorithms use the output of a smaller sub-problem to optimize a bigger sub-problem. Dynamic algorithms use memoization to remember the output of already solved sub-problems.
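The difference between local and overall optimization can be seen on a small coin-change problem. The sketch below is an illustration under assumptions: the coin set {1, 3, 4}, the target amount 6 and the function names `greedy_coins` and `dp_coins` are all invented for this example.

```python
def greedy_coins(coins, amount):
    """Always take the largest coin that still fits (a locally optimal choice)."""
    count = 0
    for coin in sorted(coins, reverse=True):
        count += amount // coin
        amount %= coin
    return count if amount == 0 else None


def dp_coins(coins, amount):
    """Fewest coins for every value up to amount, built from smaller values."""
    unreachable = float("inf")
    best = [0] + [unreachable] * amount      # best[v] = fewest coins summing to v
    for value in range(1, amount + 1):
        for coin in coins:
            if coin <= value and best[value - coin] + 1 < best[value]:
                best[value] = best[value - coin] + 1
    return best[amount] if best[amount] != unreachable else None


print(greedy_coins([1, 3, 4], 6))   # 3  (4 + 1 + 1)
print(dp_coins([1, 3, 4], 6))       # 2  (3 + 3)
```

The greedy choice of the coin 4 looks best at the first step but leads to a worse overall answer, while the dynamic version reuses the stored answers for smaller amounts to find the overall optimum.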

Examples of Dynamic Programming

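Problems that can be solved using the dynamic programming approach include Matrix Chain Multiplication, the Floyd-Warshall algorithm, the 0-1 Knapsack problem, the Longest Common Subsequence problem, and the Travelling Salesman Problem.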

Dynamic programming can be used in both a top-down and a bottom-up manner. In most cases, looking up a previously computed solution is cheaper in terms of CPU cycles than re-computing it.
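The two styles can be compared on one small problem: counting the paths from the top-left to the bottom-right cell of a grid when only moves to the right or downward are allowed. This is a rough sketch; the problem choice and the names `paths_top_down` and `paths_bottom_up` are assumptions for illustration, and both functions assume at least one row and one column.

```python
from functools import lru_cache


def paths_top_down(rows, cols):
    """Top-down: recurse from the goal and cache each sub-result."""
    @lru_cache(maxsize=None)
    def count(r, c):
        if r == 0 or c == 0:             # first row or column: a single path
            return 1
        return count(r - 1, c) + count(r, c - 1)

    return count(rows - 1, cols - 1)


def paths_bottom_up(rows, cols):
    """Bottom-up: fill a table starting from the smallest sub-problems."""
    table = [[1] * cols for _ in range(rows)]
    for r in range(1, rows):
        for c in range(1, cols):
            table[r][c] = table[r - 1][c] + table[r][c - 1]
    return table[rows - 1][cols - 1]


print(paths_top_down(3, 7), paths_bottom_up(3, 7))   # 28 28
```

Both versions solve each cell once. The top-down form keeps the shape of the recursive definition, while the bottom-up form avoids recursion entirely.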