- DSA - Home

- DSA - Overview

- DSA - Environment Setup

- DSA - Algorithms Basics

- DSA - Asymptotic Analysis

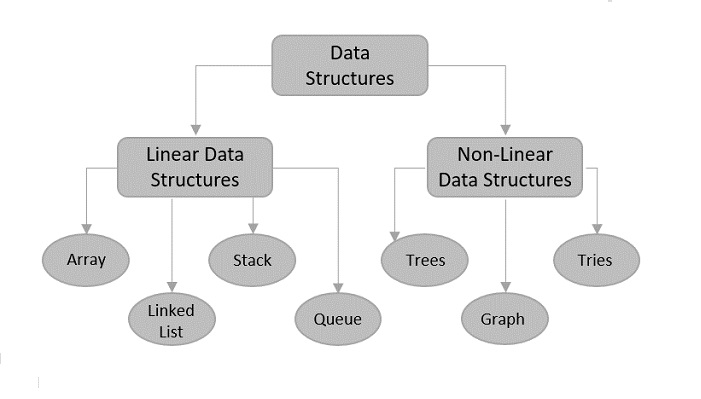

- Data Structures

- DSA - Data Structure Basics

- DSA - Data Structures and Types

- DSA - Array Data Structure

- DSA - Skip List Data Structure

- Linked Lists

- DSA - Linked List Data Structure

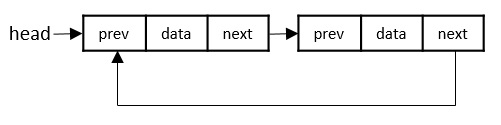

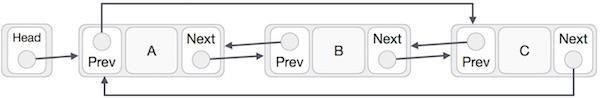

- DSA - Doubly Linked List Data Structure

- DSA - Circular Linked List Data Structure

- Stack & Queue

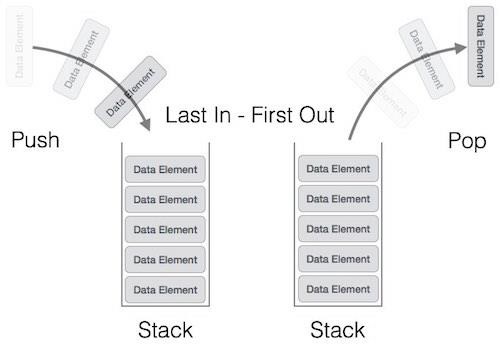

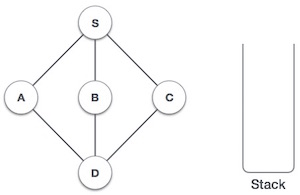

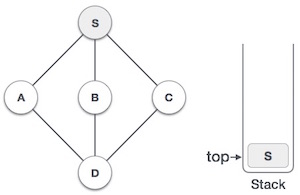

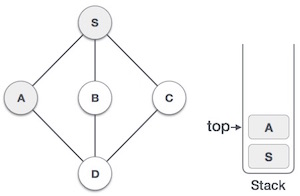

- DSA - Stack Data Structure

- DSA - Expression Parsing

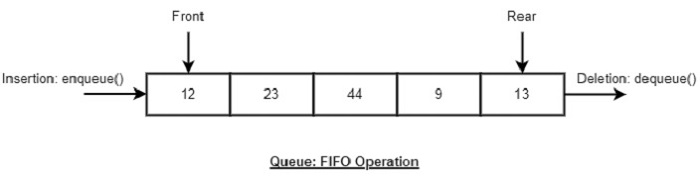

- DSA - Queue Data Structure

- DSA - Circular Queue Data Structure

- DSA - Priority Queue Data Structure

- DSA - Deque Data Structure

- Searching Algorithms

- DSA - Searching Algorithms

- DSA - Linear Search Algorithm

- DSA - Binary Search Algorithm

- DSA - Interpolation Search

- DSA - Jump Search Algorithm

- DSA - Exponential Search

- DSA - Fibonacci Search

- DSA - Sublist Search

- DSA - Hash Table

- Sorting Algorithms

- DSA - Sorting Algorithms

- DSA - Bubble Sort Algorithm

- DSA - Insertion Sort Algorithm

- DSA - Selection Sort Algorithm

- DSA - Merge Sort Algorithm

- DSA - Shell Sort Algorithm

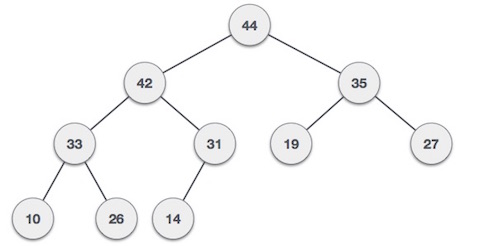

- DSA - Heap Sort Algorithm

- DSA - Bucket Sort Algorithm

- DSA - Counting Sort Algorithm

- DSA - Radix Sort Algorithm

- DSA - Quick Sort Algorithm

- Matrices Data Structure

- DSA - Matrices Data Structure

- DSA - Lup Decomposition In Matrices

- DSA - Lu Decomposition In Matrices

- Graph Data Structure

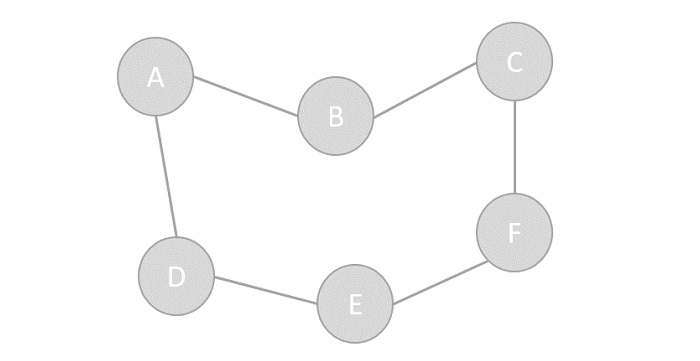

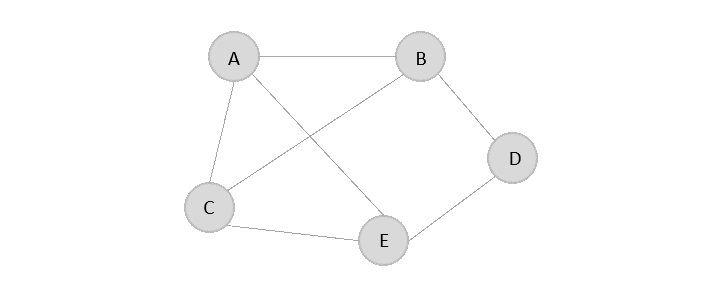

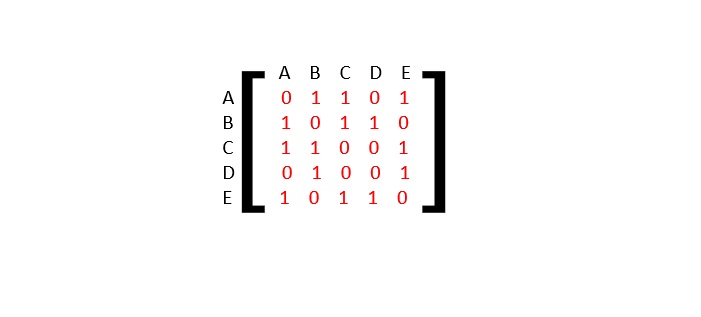

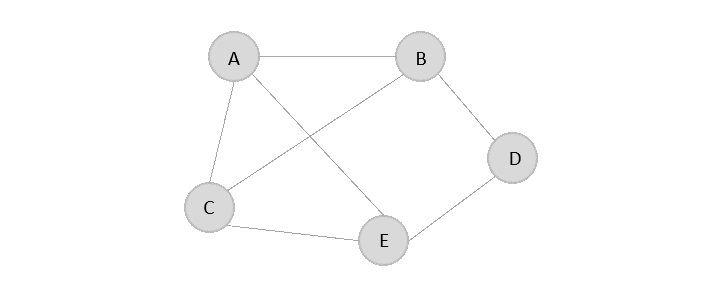

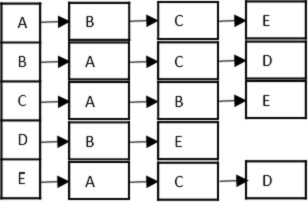

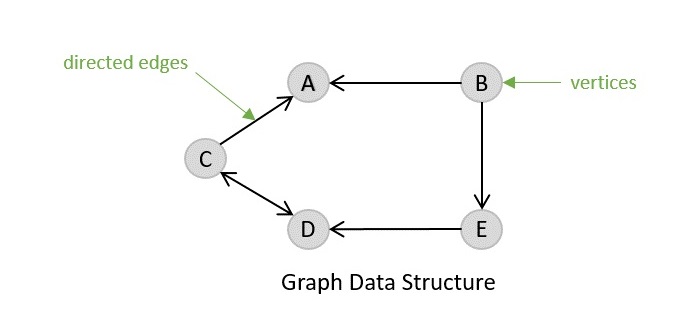

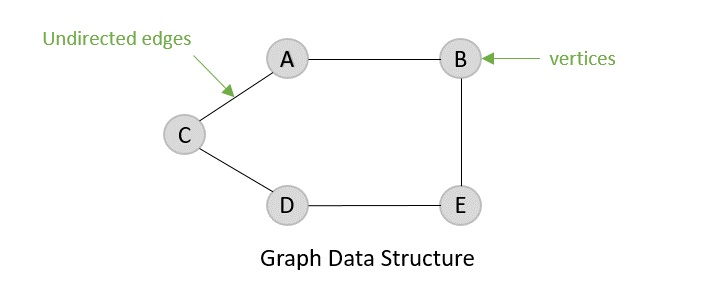

- DSA - Graph Data Structure

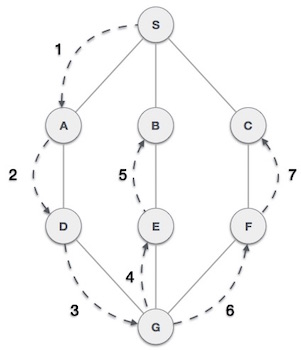

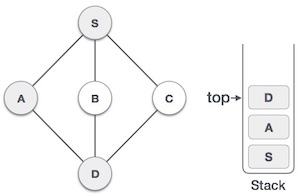

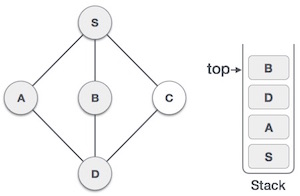

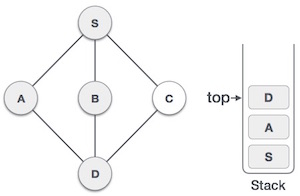

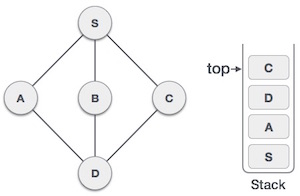

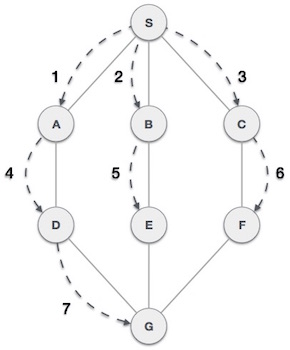

- DSA - Depth First Traversal

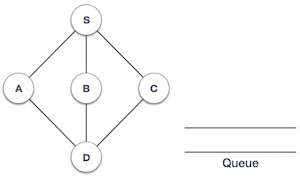

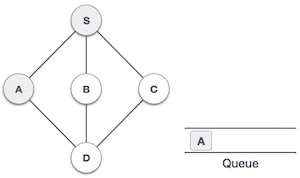

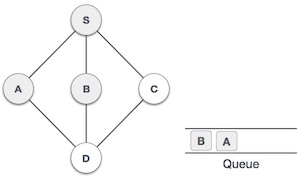

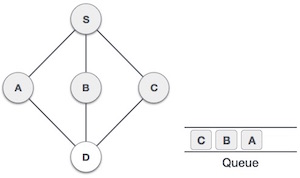

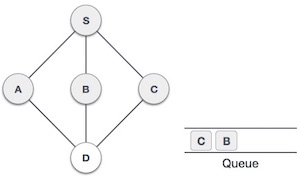

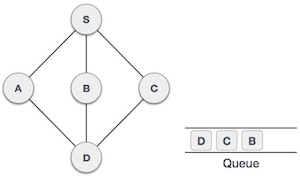

- DSA - Breadth First Traversal

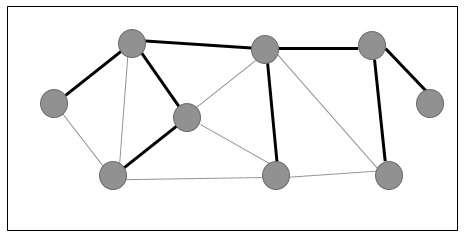

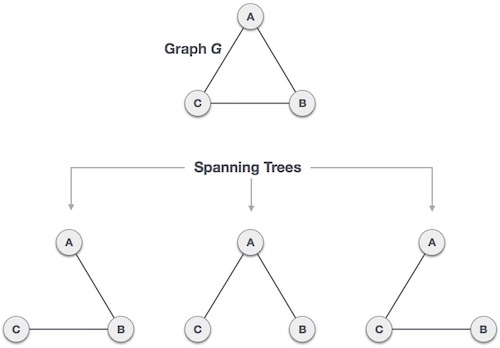

- DSA - Spanning Tree

- DSA - Topological Sorting

- DSA - Strongly Connected Components

- DSA - Biconnected Components

- DSA - Augmenting Path

- DSA - Network Flow Problems

- DSA - Flow Networks In Data Structures

- DSA - Edmonds Blossom Algorithm

- DSA - Maxflow Mincut Theorem

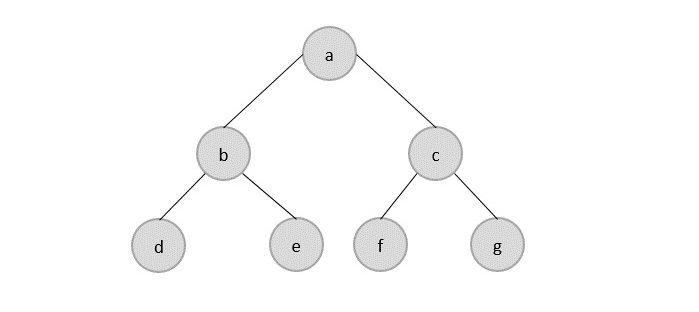

- Tree Data Structure

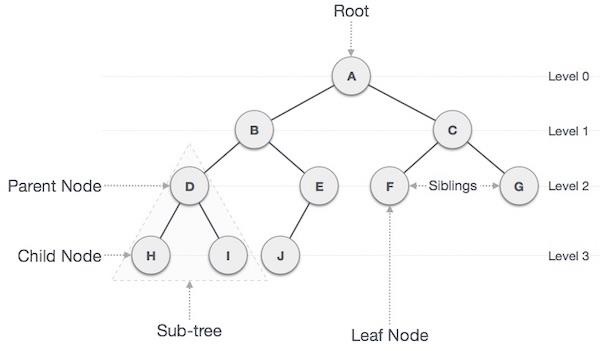

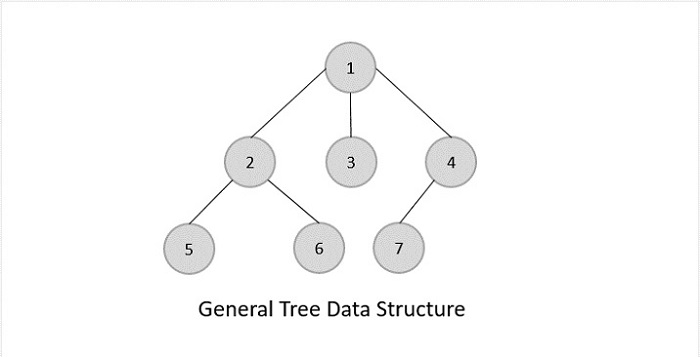

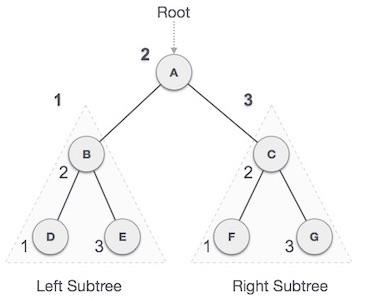

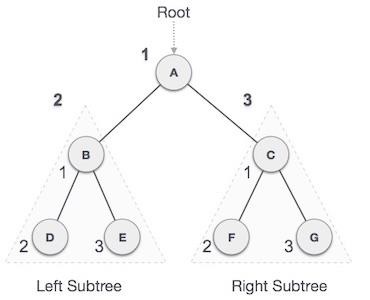

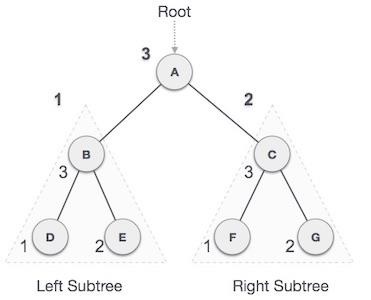

- DSA - Tree Data Structure

- DSA - Tree Traversal

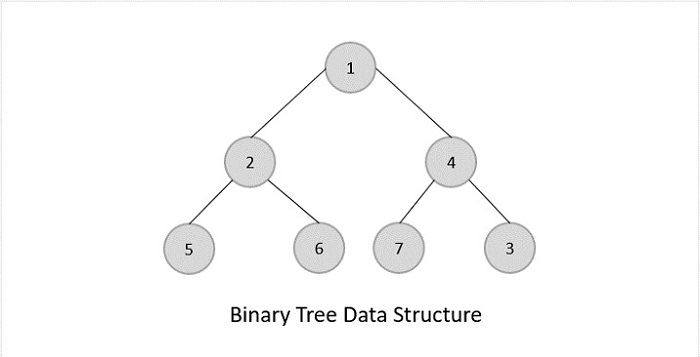

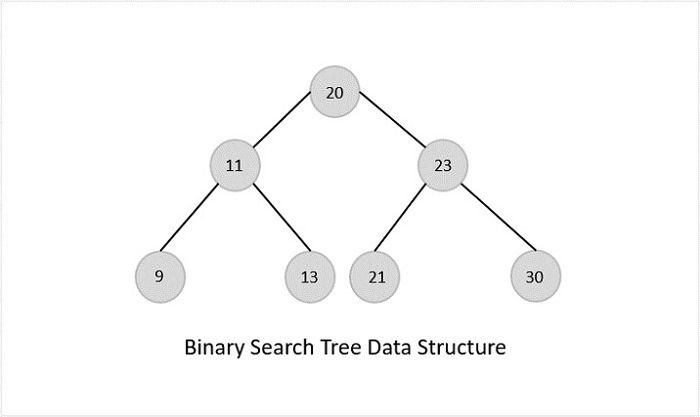

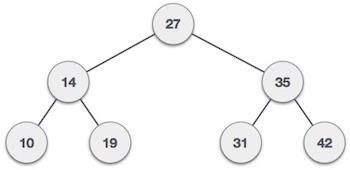

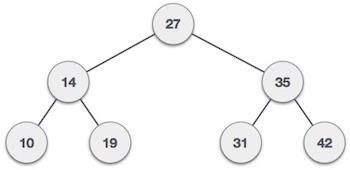

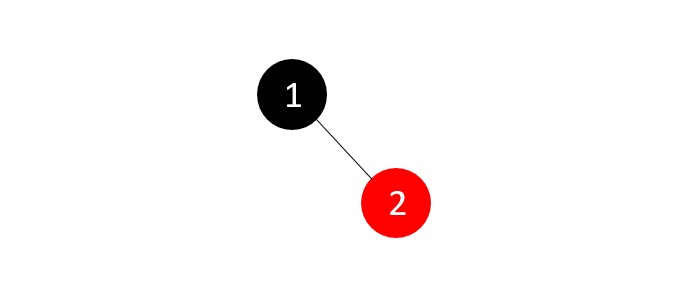

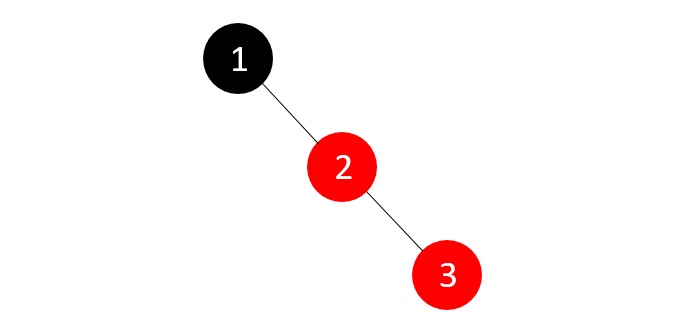

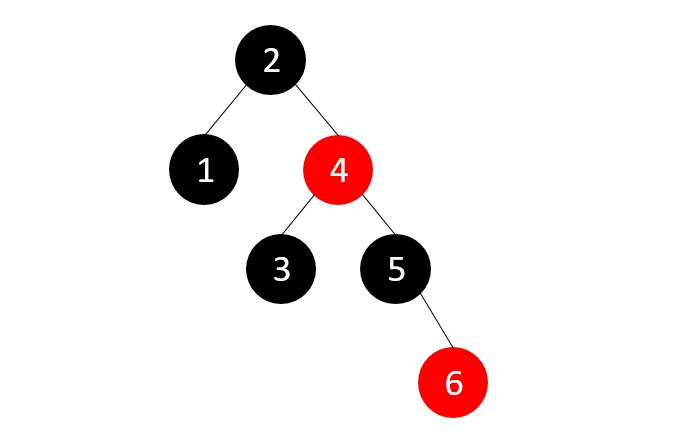

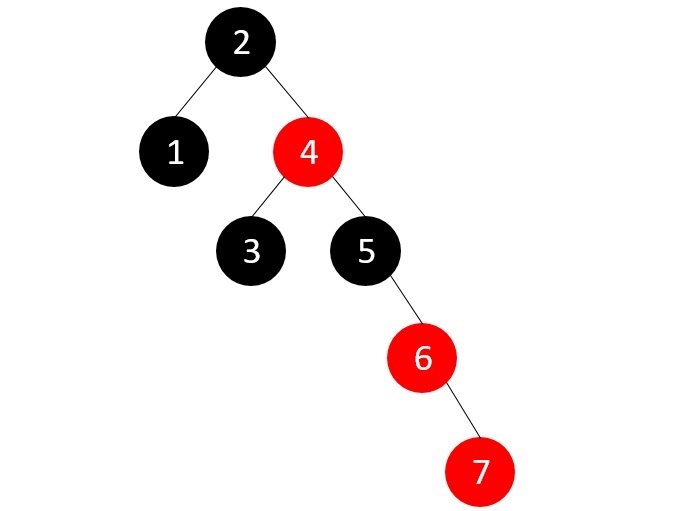

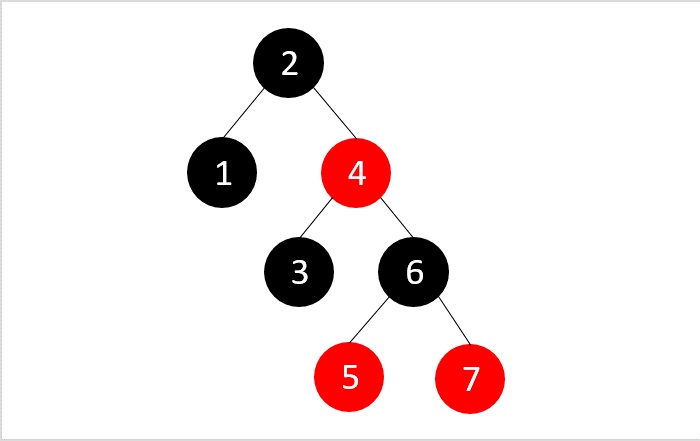

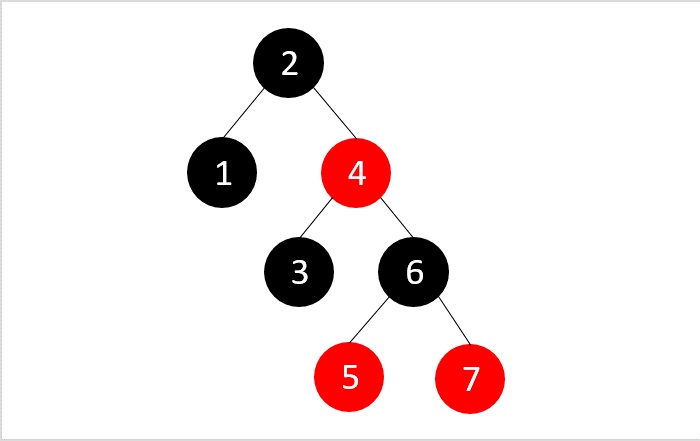

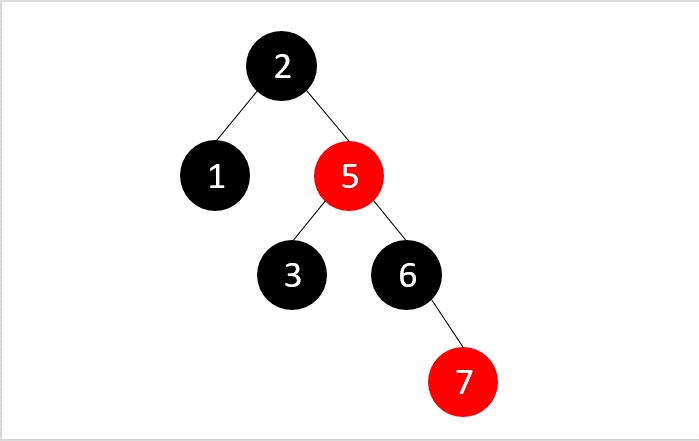

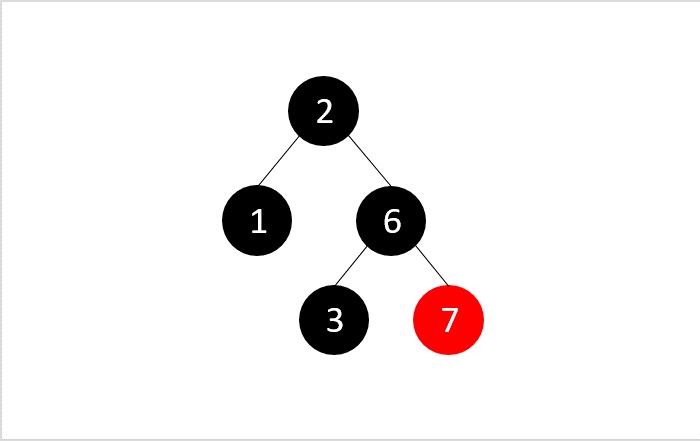

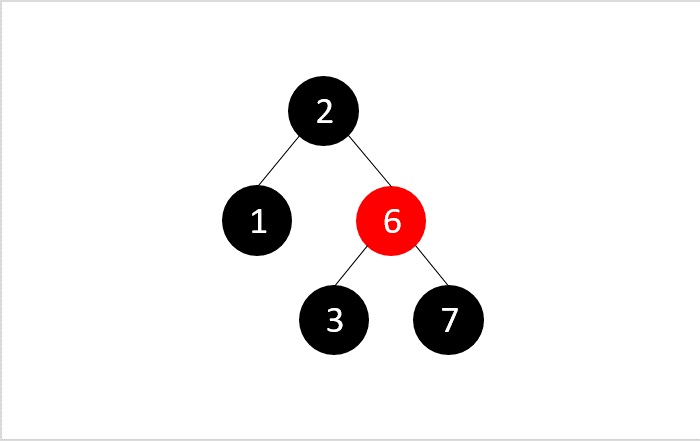

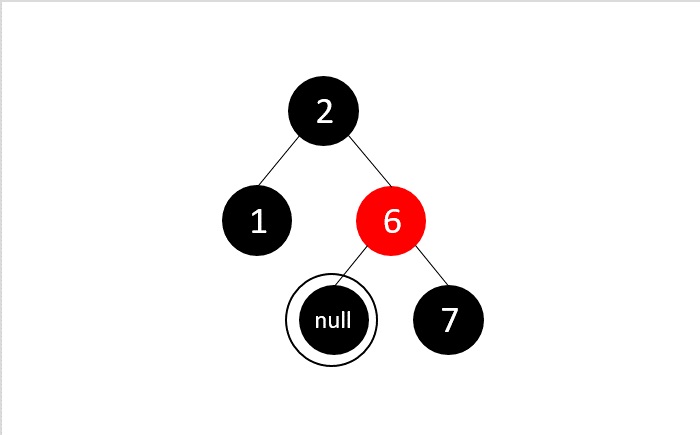

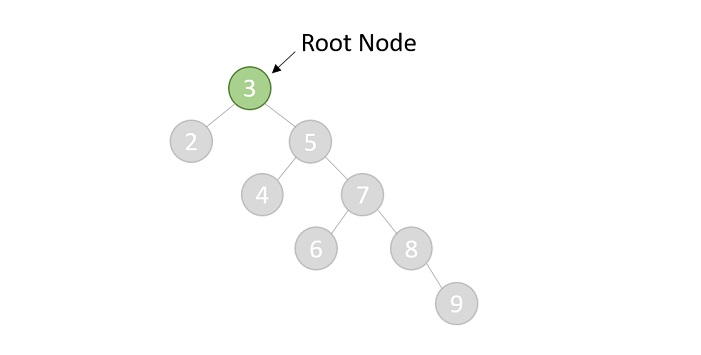

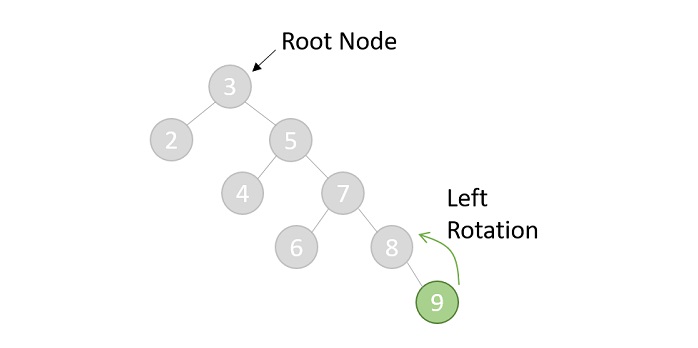

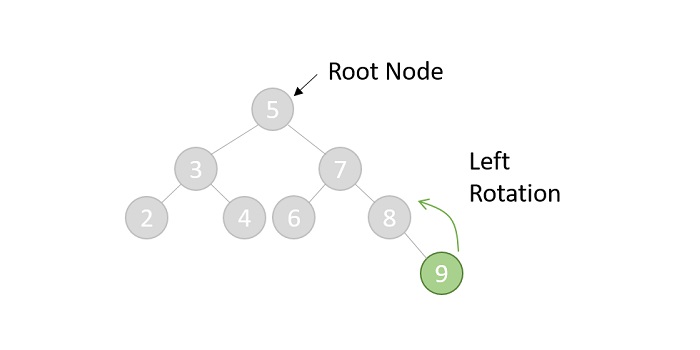

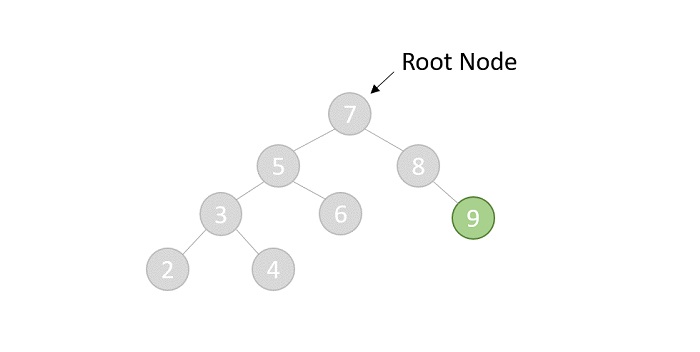

- DSA - Binary Search Tree

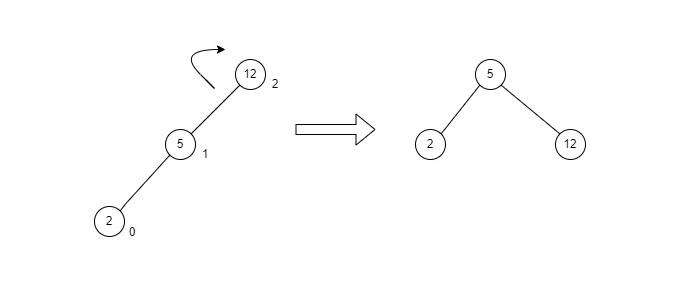

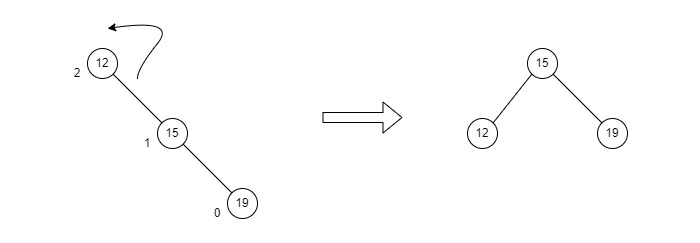

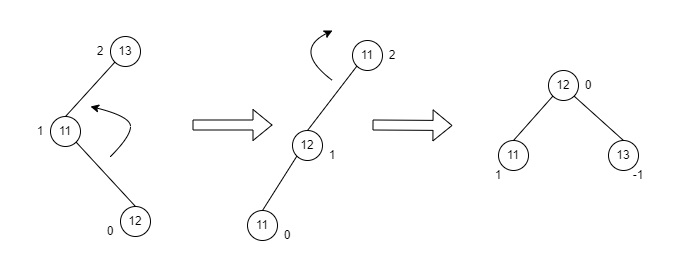

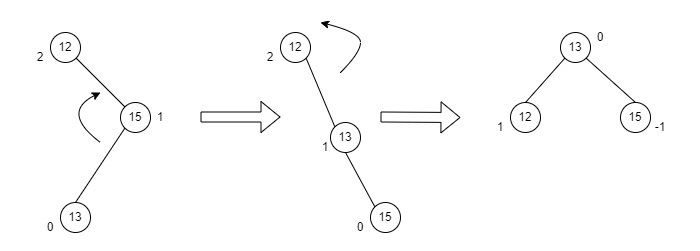

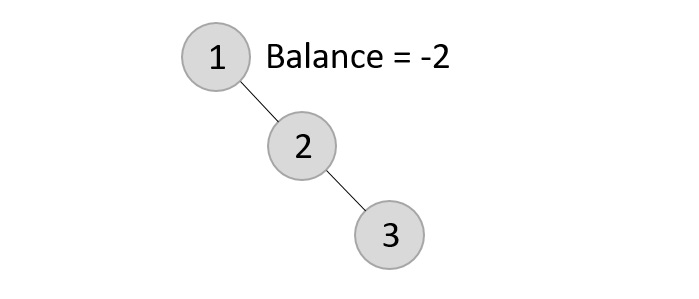

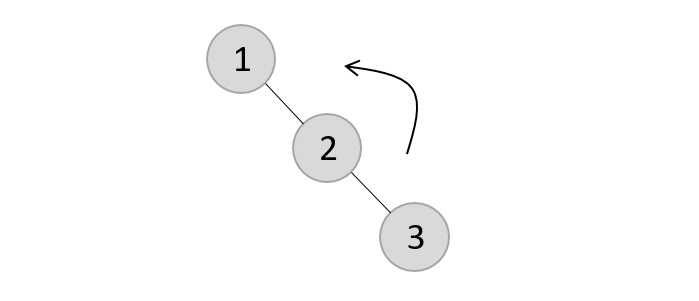

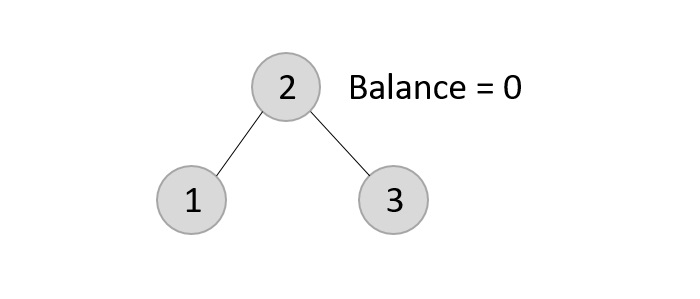

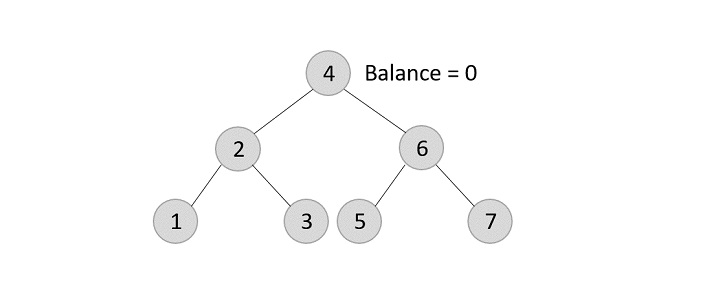

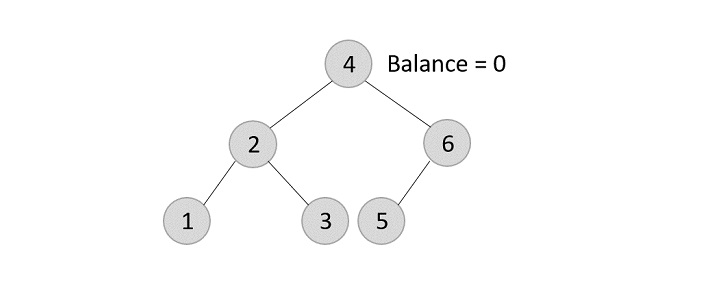

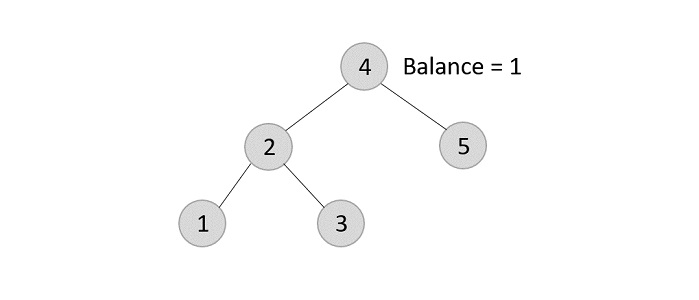

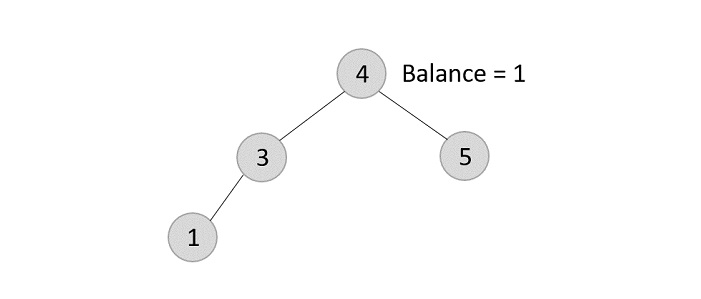

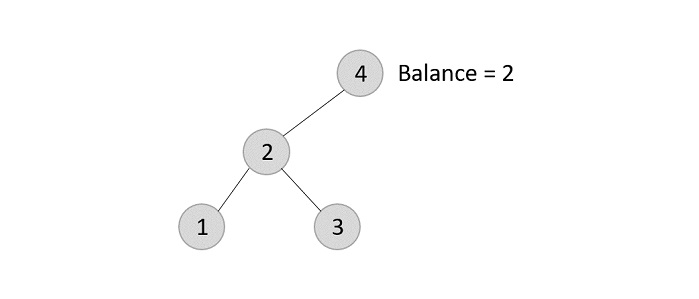

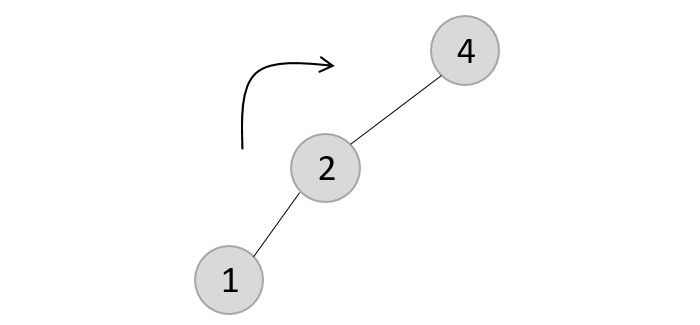

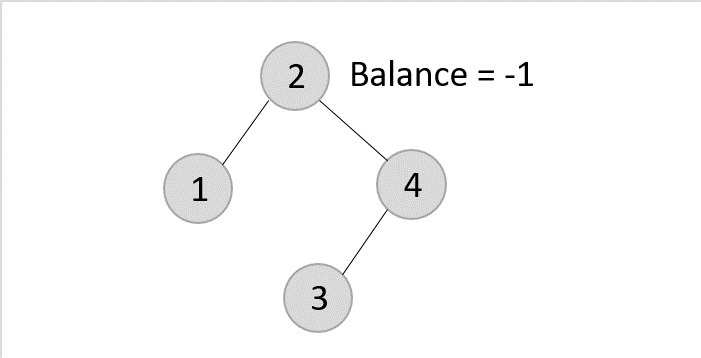

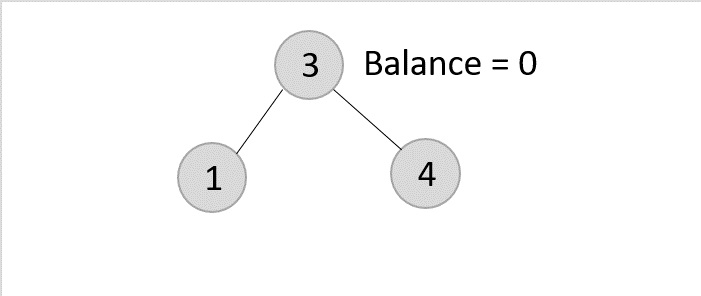

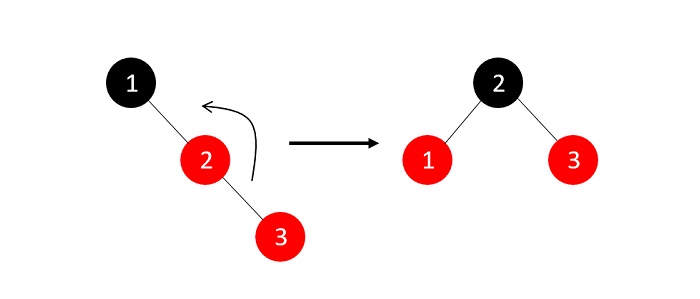

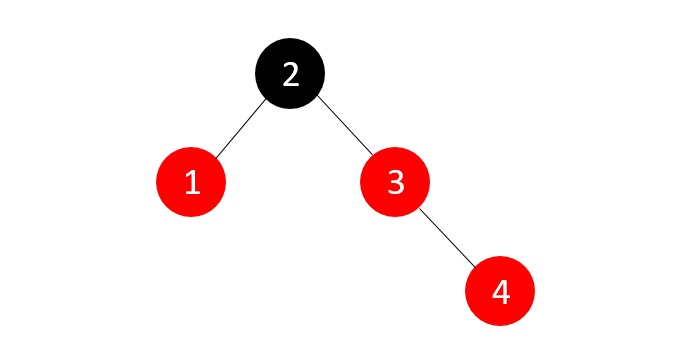

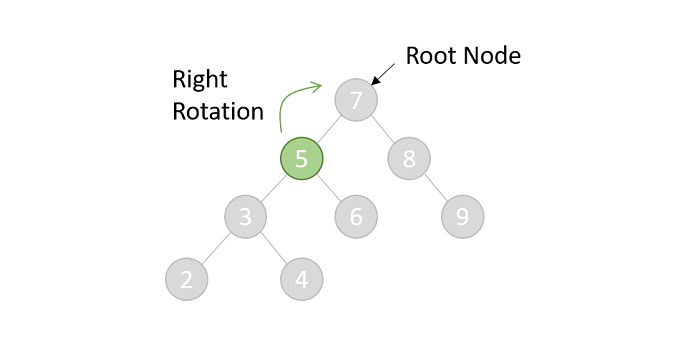

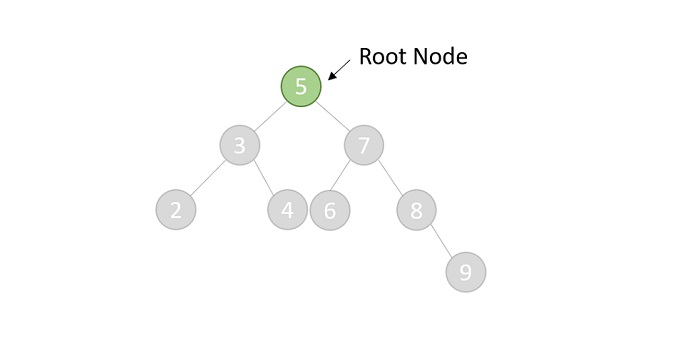

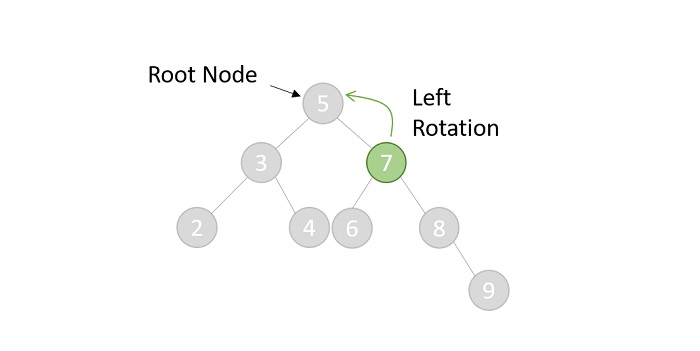

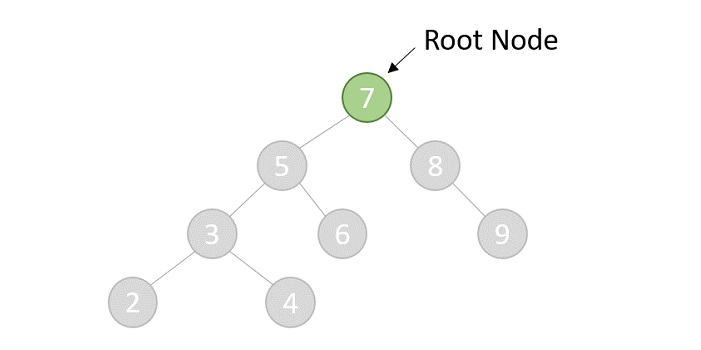

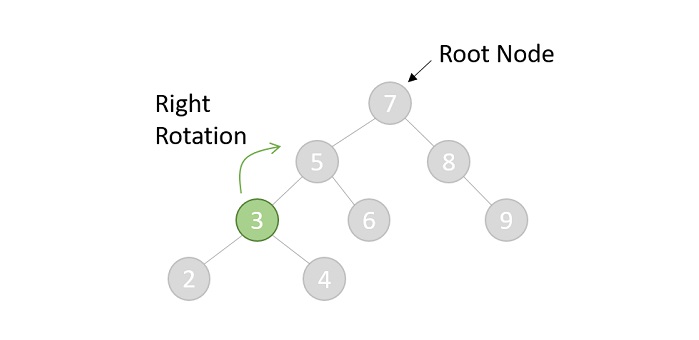

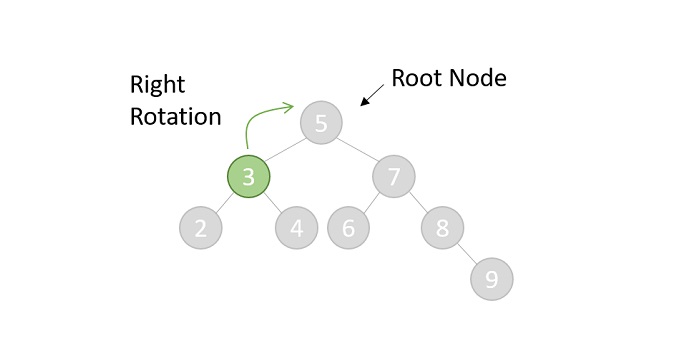

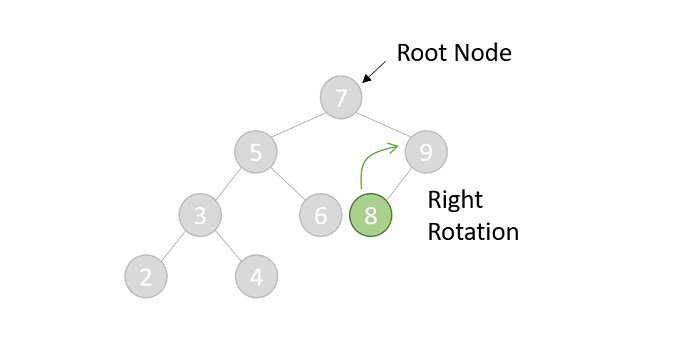

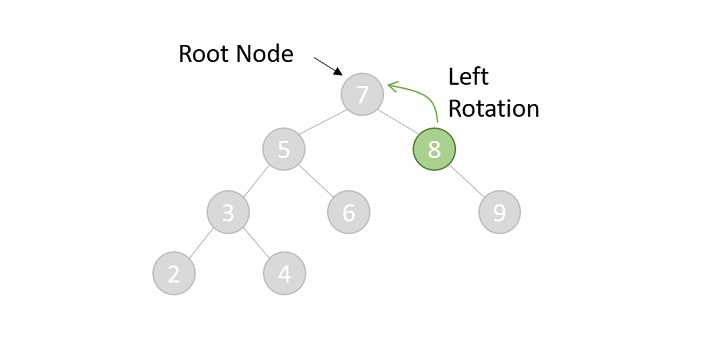

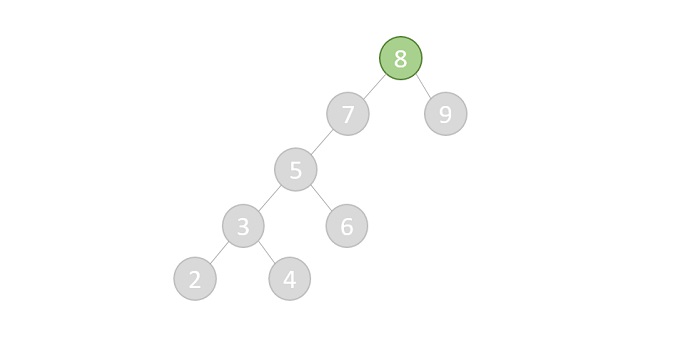

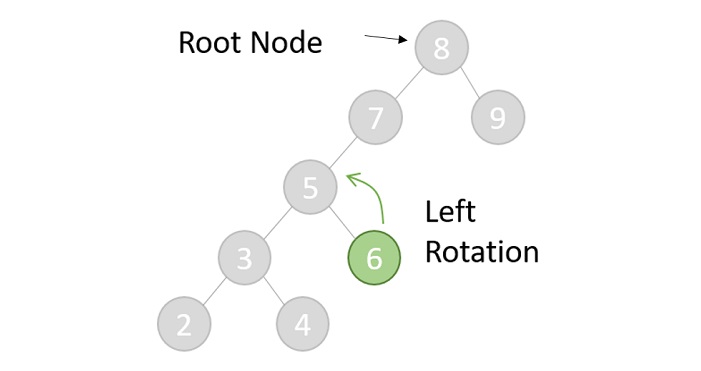

- DSA - AVL Tree

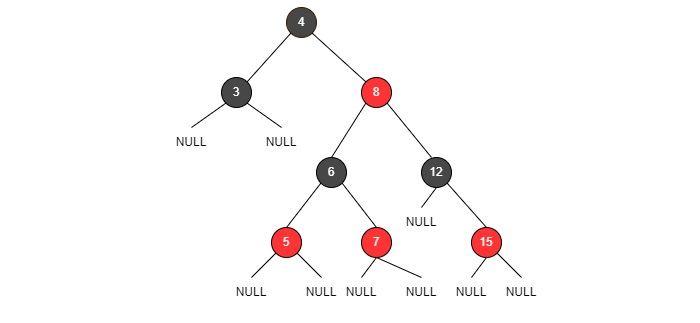

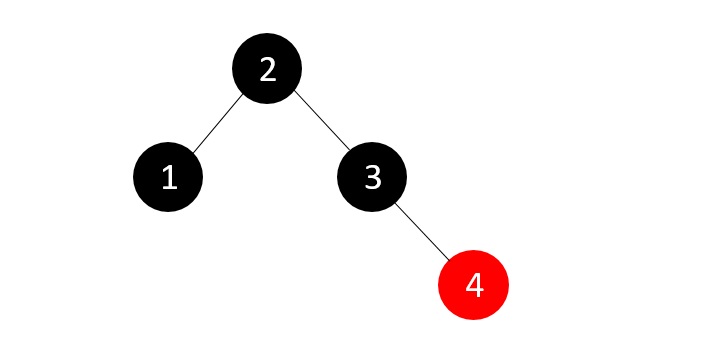

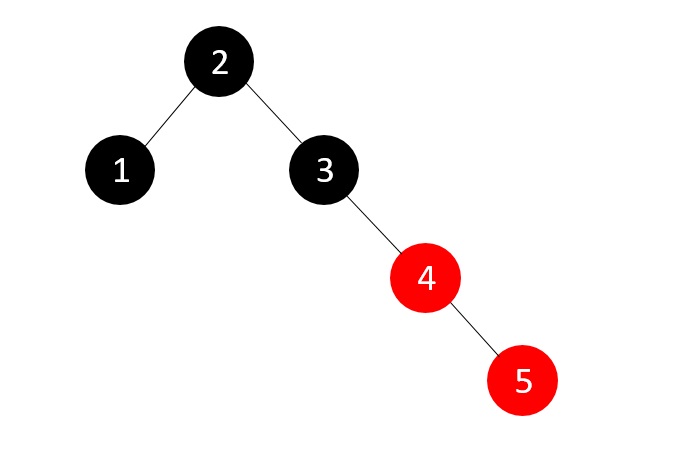

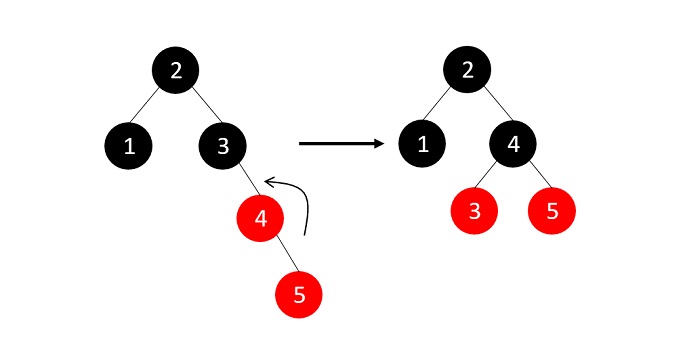

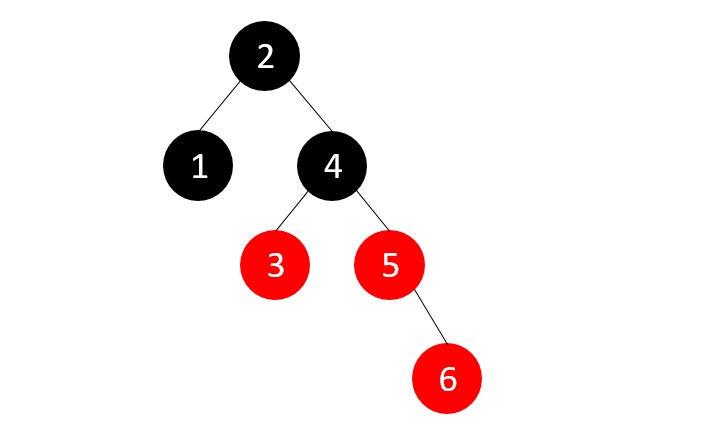

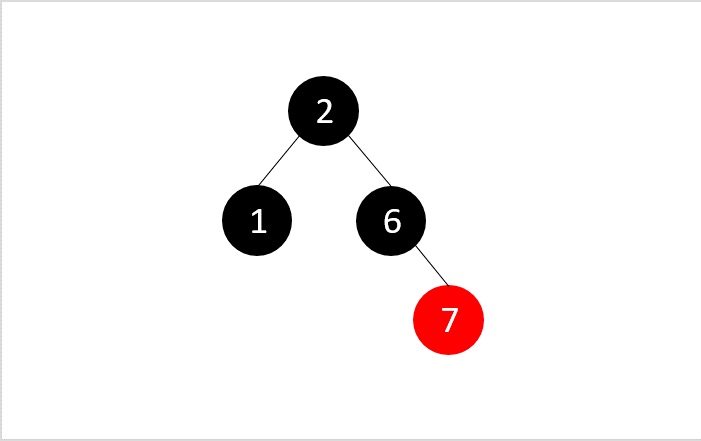

- DSA - Red Black Trees

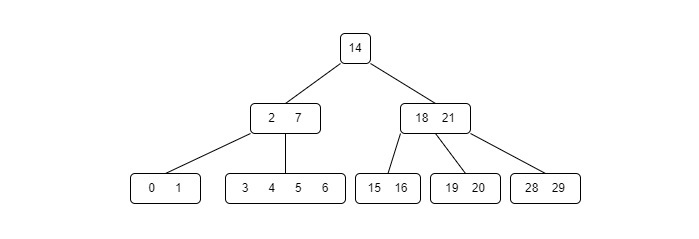

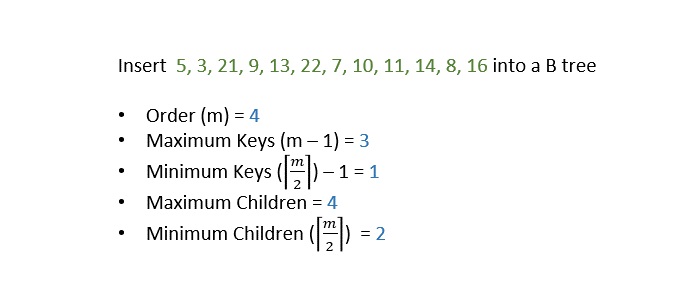

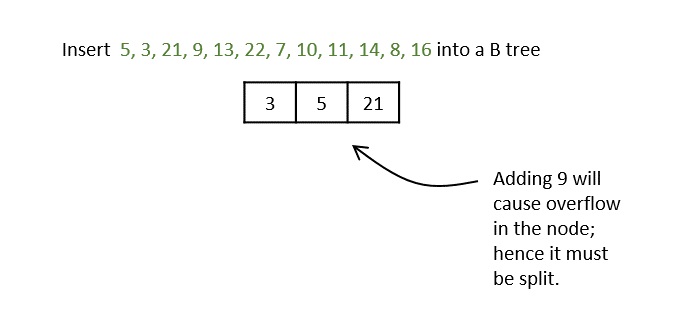

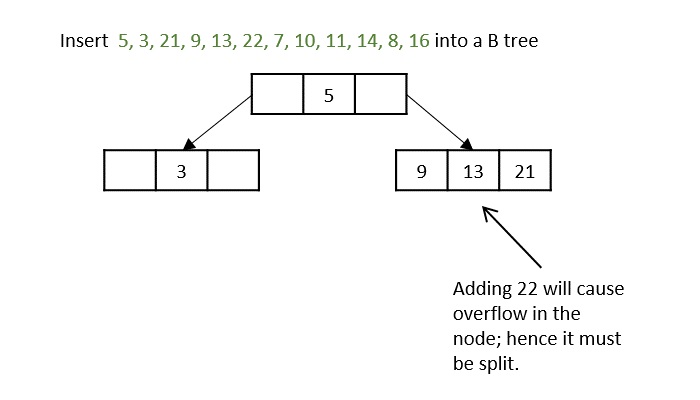

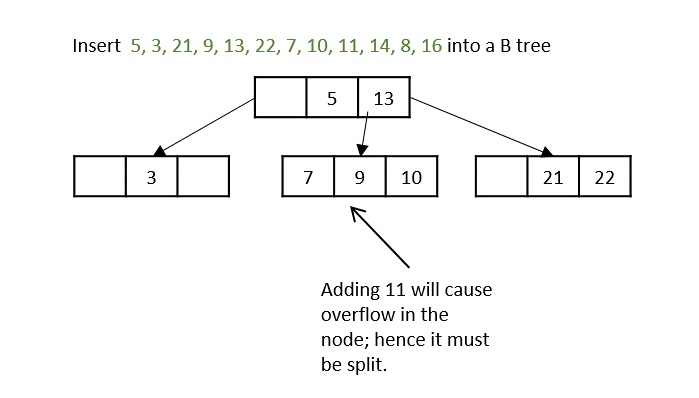

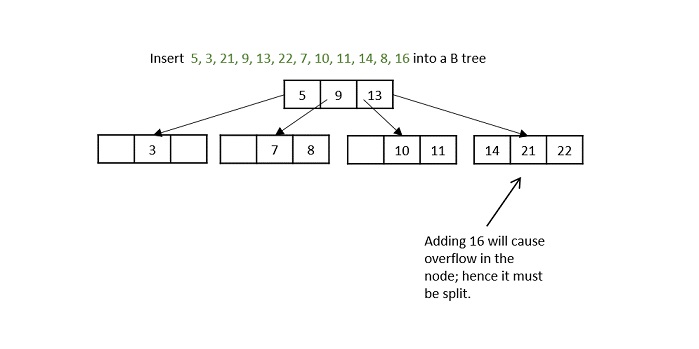

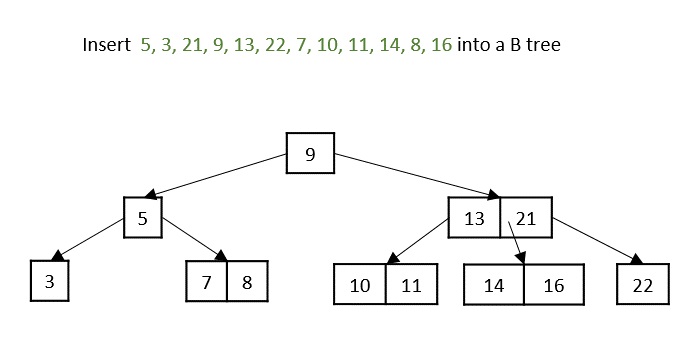

- DSA - B Trees

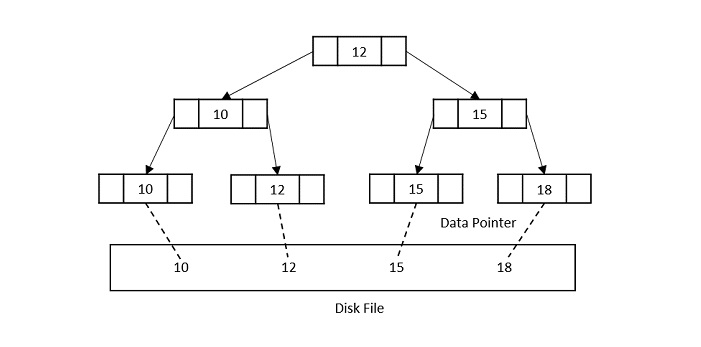

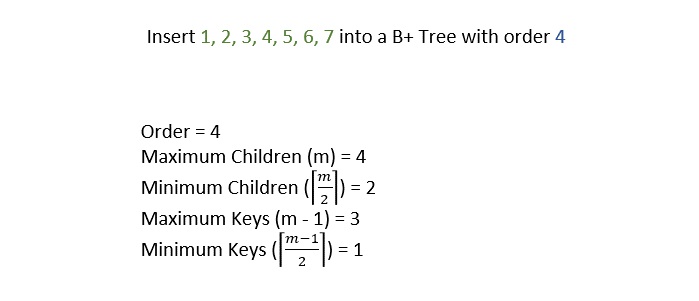

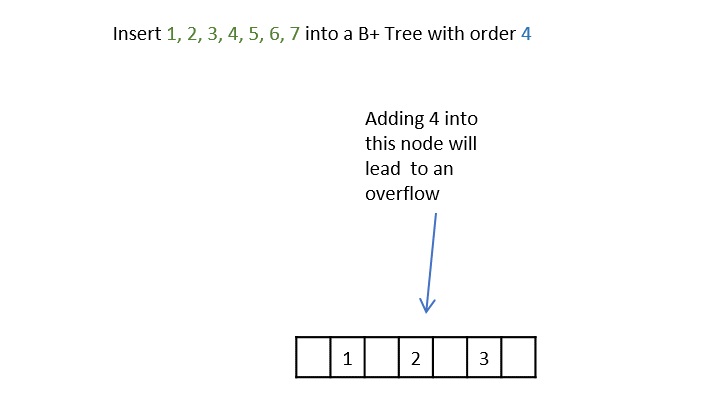

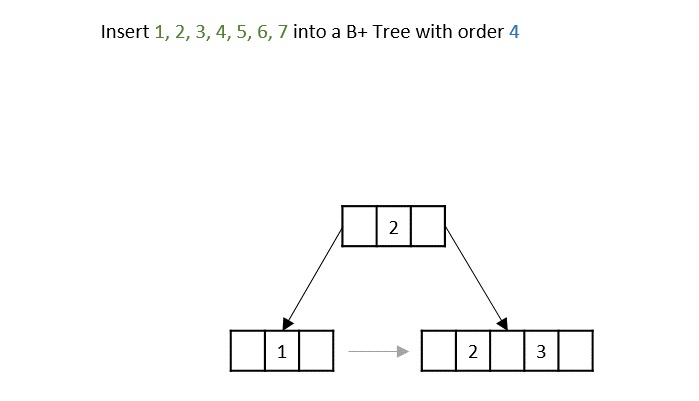

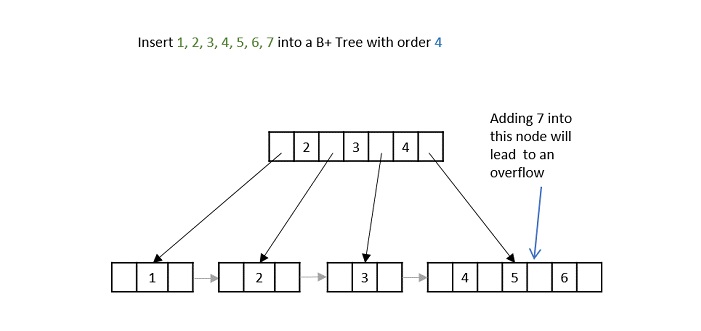

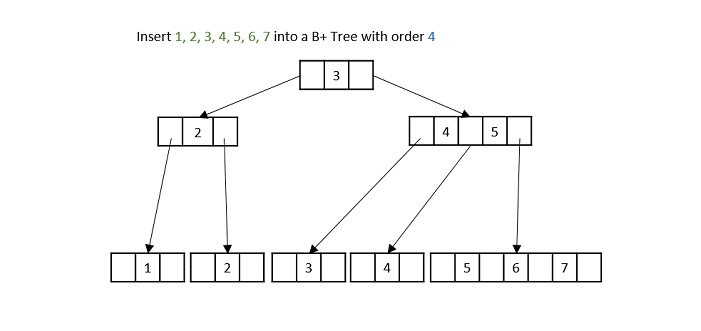

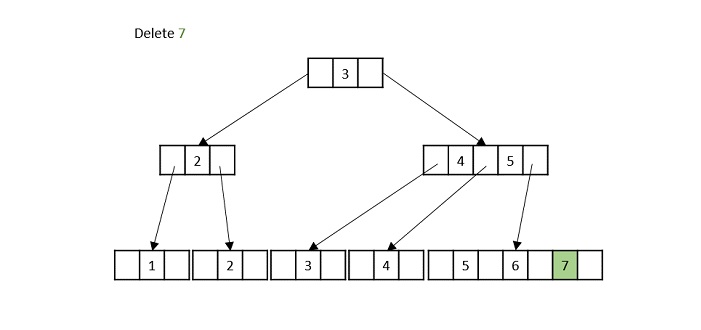

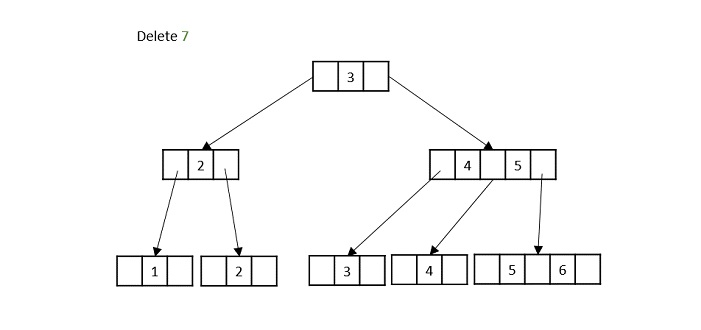

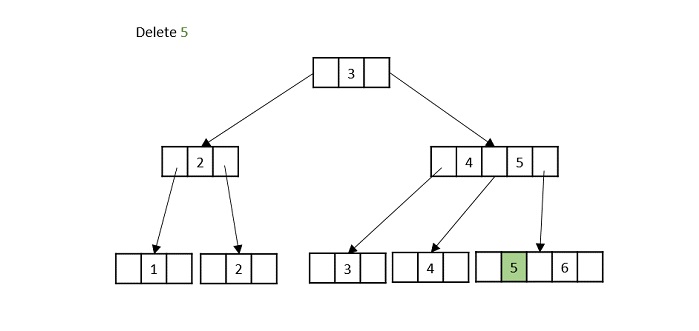

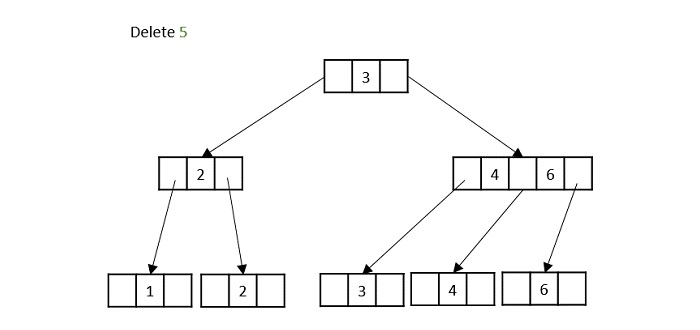

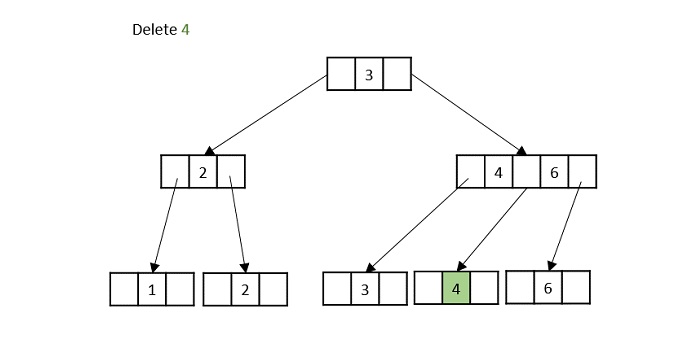

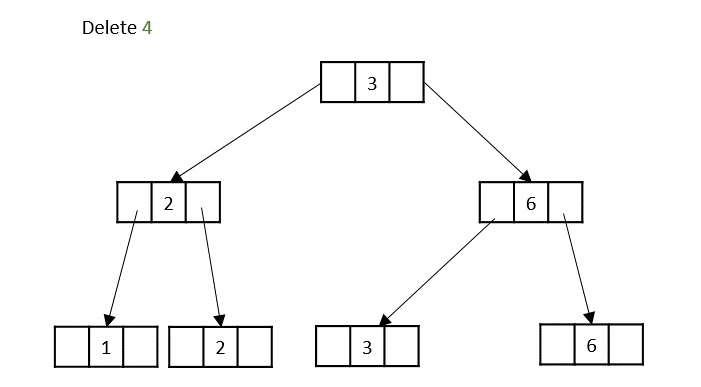

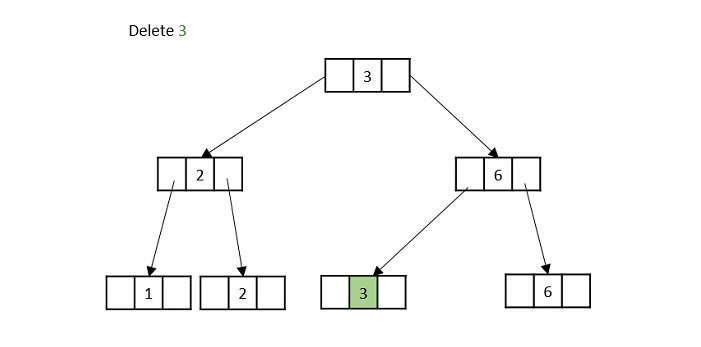

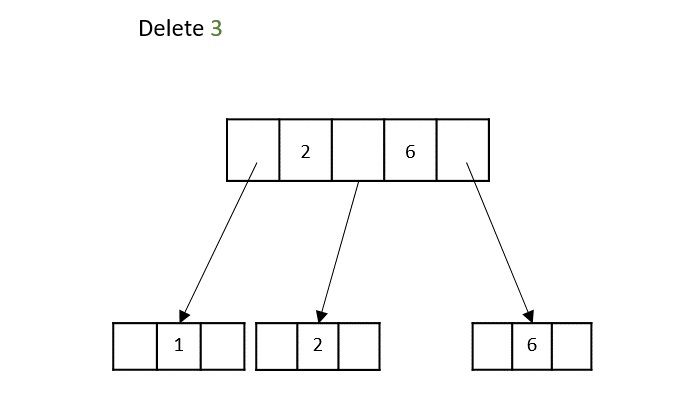

- DSA - B+ Trees

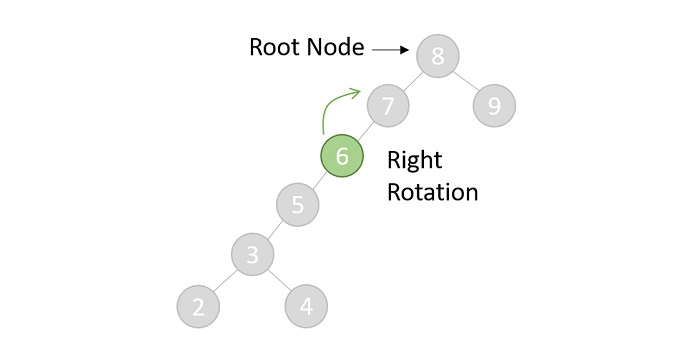

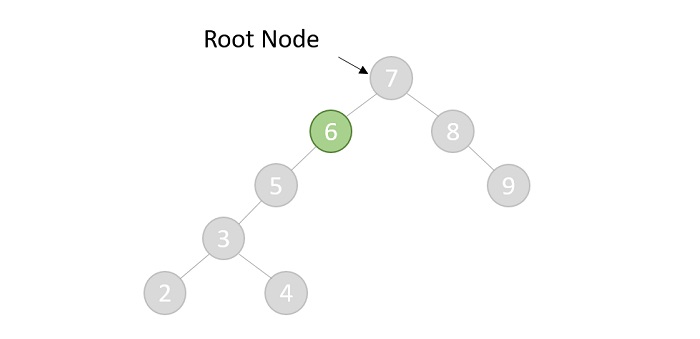

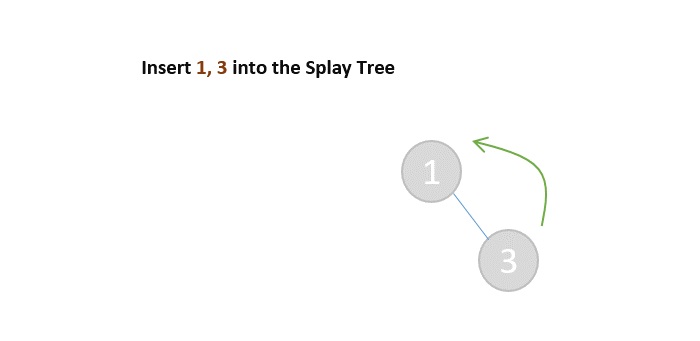

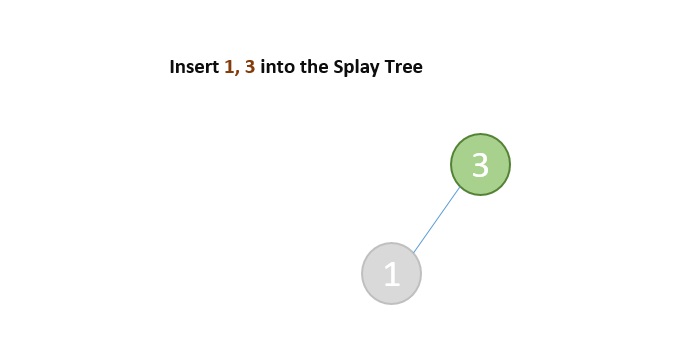

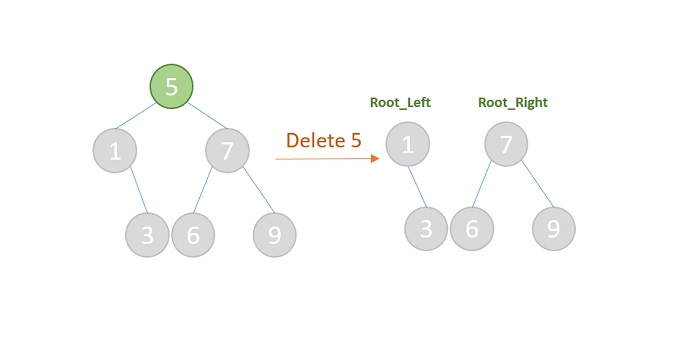

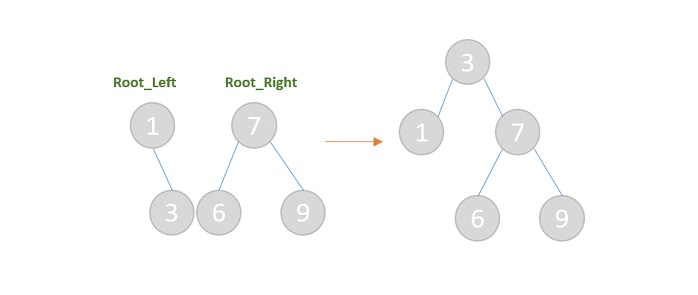

- DSA - Splay Trees

- DSA - Range Queries

- DSA - Segment Trees

- DSA - Fenwick Tree

- DSA - Fusion Tree

- DSA - Hashed Array Tree

- DSA - K-Ary Tree

- DSA - Kd Trees

- DSA - Priority Search Tree Data Structure

- Recursion

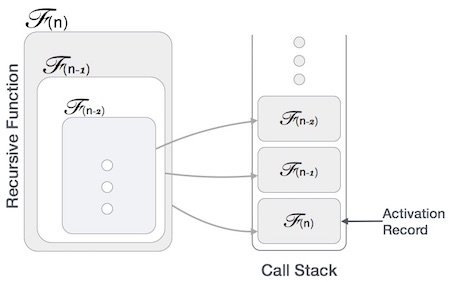

- DSA - Recursion Algorithms

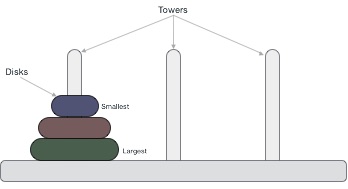

- DSA - Tower of Hanoi Using Recursion

- DSA - Fibonacci Series Using Recursion

- Divide and Conquer

- DSA - Divide and Conquer

- DSA - Max-Min Problem

- DSA - Strassen's Matrix Multiplication

- DSA - Karatsuba Algorithm

- Greedy Algorithms

- DSA - Greedy Algorithms

- DSA - Travelling Salesman Problem (Greedy Approach)

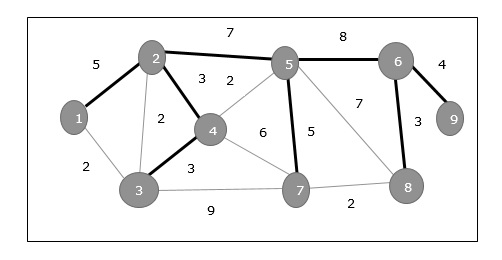

- DSA - Prim's Minimal Spanning Tree

- DSA - Kruskal's Minimal Spanning Tree

- DSA - Dijkstra's Shortest Path Algorithm

- DSA - Map Colouring Algorithm

- DSA - Fractional Knapsack Problem

- DSA - Job Sequencing with Deadline

- DSA - Optimal Merge Pattern Algorithm

- Dynamic Programming

- DSA - Dynamic Programming

- DSA - Matrix Chain Multiplication

- DSA - Floyd Warshall Algorithm

- DSA - 0-1 Knapsack Problem

- DSA - Longest Common Sub-sequence Algorithm

- DSA - Travelling Salesman Problem (Dynamic Approach)

- Hashing

- DSA - Hashing Data Structure

- DSA - Collision In Hashing

- Disjoint Set

- DSA - Disjoint Set

- DSA - Path Compression And Union By Rank

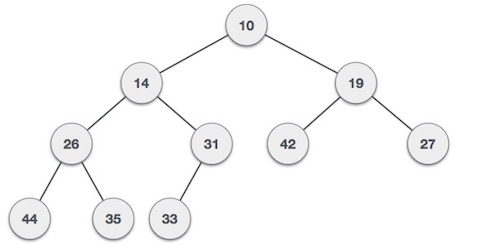

- Heap

- DSA - Heap Data Structure

- DSA - Binary Heap

- DSA - Binomial Heap

- DSA - Fibonacci Heap

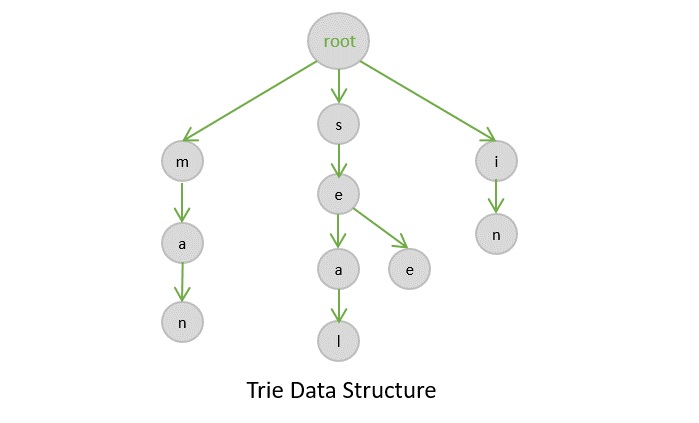

- Tries Data Structure

- DSA - Tries

- DSA - Standard Tries

- DSA - Compressed Tries

- DSA - Suffix Tries

- Treaps

- DSA - Treaps Data Structure

- Bit Mask

- DSA - Bit Mask In Data Structures

- Bloom Filter

- DSA - Bloom Filter Data Structure

- Approximation Algorithms

- DSA - Approximation Algorithms

- DSA - Vertex Cover Algorithm

- DSA - Set Cover Problem

- DSA - Travelling Salesman Problem (Approximation Approach)

- Randomized Algorithms

- DSA - Randomized Algorithms

- DSA - Randomized Quick Sort Algorithm

- DSA - Karger’s Minimum Cut Algorithm

- DSA - Fisher-Yates Shuffle Algorithm

- Miscellaneous

- DSA - Infix to Postfix

- DSA - Bellmon Ford Shortest Path

- DSA - Maximum Bipartite Matching

- DSA Useful Resources

- DSA - Questions and Answers

- DSA - Selection Sort Interview Questions

- DSA - Merge Sort Interview Questions

- DSA - Insertion Sort Interview Questions

- DSA - Heap Sort Interview Questions

- DSA - Bubble Sort Interview Questions

- DSA - Bucket Sort Interview Questions

- DSA - Radix Sort Interview Questions

- DSA - Cycle Sort Interview Questions

- DSA - Quick Guide

- DSA - Useful Resources

- DSA - Discussion

Data Structures & Algorithms - Quick Guide

Overview

Data Structure is a systematic way to organize data in order to use it efficiently. Following terms are the foundation terms of a data structure.

Interface − Each data structure has an interface. Interface represents the set of operations that a data structure supports. An interface only provides the list of supported operations, type of parameters they can accept and return type of these operations.

Implementation − Implementation provides the internal representation of a data structure. Implementation also provides the definition of the algorithms used in the operations of the data structure.

Characteristics of a Data Structure

Correctness − Data structure implementation should implement its interface correctly.

Time Complexity − Running time or the execution time of operations of data structure must be as small as possible.

Space Complexity − Memory usage of a data structure operation should be as little as possible.

Need for Data Structure

As applications are getting complex and data rich, there are three common problems that applications face now-a-days.

Data Search − Consider an inventory of 1 million(106) items of a store. If the application is to search an item, it has to search an item in 1 million(106) items every time slowing down the search. As data grows, search will become slower.

Processor speed − Processor speed although being very high, falls limited if the data grows to billion records.

Multiple requests − As thousands of users can search data simultaneously on a web server, even the fast server fails while searching the data.

To solve the above-mentioned problems, data structures come to rescue. Data can be organized in a data structure in such a way that all items may not be required to be searched, and the required data can be searched almost instantly.

Execution Time Cases

There are three cases which are usually used to compare various data structure's execution time in a relative manner.

Worst Case − This is the scenario where a particular data structure operation takes maximum time it can take. If an operation's worst case time is ƒ(n) then this operation will not take more than ƒ(n) time where ƒ(n) represents function of n.

Average Case − This is the scenario depicting the average execution time of an operation of a data structure. If an operation takes ƒ(n) time in execution, then m operations will take mƒ(n) time.

Best Case − This is the scenario depicting the least possible execution time of an operation of a data structure. If an operation takes ƒ(n) time in execution, then the actual operation may take time as the random number which would be maximum as ƒ(n).

Basic Terminology

Data − Data are values or set of values.

Data Item − Data item refers to single unit of values.

Group Items − Data items that are divided into sub items are called as Group Items.

Elementary Items − Data items that cannot be divided are called as Elementary Items.

Attribute and Entity − An entity is that which contains certain attributes or properties, which may be assigned values.

Entity Set − Entities of similar attributes form an entity set.

Field − Field is a single elementary unit of information representing an attribute of an entity.

Record − Record is a collection of field values of a given entity.

File − File is a collection of records of the entities in a given entity set.

Environment Setup

Local Environment Setup

If you are still willing to set up your environment for C programming language, you need the following two tools available on your computer, (a) Text Editor and (b) The C Compiler.

Text Editor

This will be used to type your program. Examples of few editors include Windows Notepad, OS Edit command, Brief, Epsilon, EMACS, and vim or vi.

The name and the version of the text editor can vary on different operating systems. For example, Notepad will be used on Windows, and vim or vi can be used on Windows as well as Linux or UNIX.

The files you create with your editor are called source files and contain program source code. The source files for C programs are typically named with the extension ".c".

Before starting your programming, make sure you have one text editor in place and you have enough experience to write a computer program, save it in a file, compile it, and finally execute it.

The C Compiler

The source code written in the source file is the human readable source for your program. It needs to be "compiled", to turn into machine language so that your CPU can actually execute the program as per the given instructions.

This C programming language compiler will be used to compile your source code into a final executable program. We assume you have the basic knowledge about a programming language compiler.

Most frequently used and free available compiler is GNU C/C++ compiler. Otherwise, you can have compilers either from HP or Solaris if you have respective Operating Systems (OS).

The following section guides you on how to install GNU C/C++ compiler on various OS. We are mentioning C/C++ together because GNU GCC compiler works for both C and C++ programming languages.

Installation on UNIX/Linux

If you are using Linux or UNIX, then check whether GCC is installed on your system by entering the following command from the command line −

$ gcc -v

If you have GNU compiler installed on your machine, then it should print a message such as the following −

Using built-in specs. Target: i386-redhat-linux Configured with: ../configure --prefix = /usr ....... Thread model: posix gcc version 4.1.2 20080704 (Red Hat 4.1.2-46)

If GCC is not installed, then you will have to install it yourself using the detailed instructions available at https://gcc.gnu.org/install/

This tutorial has been written based on Linux and all the given examples have been compiled on Cent OS flavor of Linux system.

Installation on Mac OS

If you use Mac OS X, the easiest way to obtain GCC is to download the Xcode development environment from Apple's website and follow the simple installation instructions. Once you have Xcode setup, you will be able to use GNU compiler for C/C++.

Xcode is currently available at developer.apple.com/technologies/tools/

Installation on Windows

To install GCC on Windows, you need to install MinGW. To install MinGW, go to the MinGW homepage, www.mingw.org, and follow the link to the MinGW download page. Download the latest version of the MinGW installation program, which should be named MinGW-<version>.exe.

While installing MinWG, at a minimum, you must install gcc-core, gcc-g++, binutils, and the MinGW runtime, but you may wish to install more.

Add the bin subdirectory of your MinGW installation to your PATH environment variable, so that you can specify these tools on the command line by their simple names.

When the installation is complete, you will be able to run gcc, g++, ar, ranlib, dlltool, and several other GNU tools from the Windows command line.

Data Structure Basics

This chapter explains the basic terms related to data structure.

Data Definition

Data Definition defines a particular data with the following characteristics.

Atomic − Definition should define a single concept.

Traceable − Definition should be able to be mapped to some data element.

Accurate − Definition should be unambiguous.

Clear and Concise − Definition should be understandable.

Data Object

Data Object represents an object having a data.

Data Type

Data type is a way to classify various types of data such as integer, string, etc. which determines the values that can be used with the corresponding type of data, the type of operations that can be performed on the corresponding type of data. There are two data types −

- Built-in Data Type

- Derived Data Type

Built-in Data Type

Those data types for which a language has built-in support are known as Built-in Data types. For example, most of the languages provide the following built-in data types.

- Integers

- Boolean (true, false)

- Floating (Decimal numbers)

- Character and Strings

Derived Data Type

Those data types which are implementation independent as they can be implemented in one or the other way are known as derived data types. These data types are normally built by the combination of primary or built-in data types and associated operations on them. For example −

- List

- Array

- Stack

- Queue

Basic Operations

The data in the data structures are processed by certain operations. The particular data structure chosen largely depends on the frequency of the operation that needs to be performed on the data structure.

- Traversing

- Searching

- Insertion

- Deletion

- Sorting

- Merging

Data Structures and Types

Data structures are introduced in order to store, organize and manipulate data in programming languages. They are designed in a way that makes accessing and processing of the data a little easier and simpler. These data structures are not confined to one particular programming language; they are just pieces of code that structure data in the memory.

Data types are often confused as a type of data structures, but it is not precisely correct even though they are referred to as Abstract Data Types. Data types represent the nature of the data while data structures are just a collection of similar or different data types in one.

There are usually just two types of data structures −

Linear

Non-Linear

Linear Data Structures

The data is stored in linear data structures sequentially. These are rudimentary structures since the elements are stored one after the other without applying any mathematical operations.

Linear data structures are usually easy to implement but since the memory allocation might become complicated, time and space complexities increase. Few examples of linear data structures include −

Arrays

Linked Lists

Stacks

Queues

Based on the data storage methods, these linear data structures are divided into two sub-types. They are − static and dynamic data structures.

Static Linear Data Structures

In Static Linear Data Structures, the memory allocation is not scalable. Once the entire memory is used, no more space can be retrieved to store more data. Hence, the memory is required to be reserved based on the size of the program. This will also act as a drawback since reserving more memory than required can cause a wastage of memory blocks.

The best example for static linear data structures is an array.

Dynamic Linear Data Structures

In Dynamic linear data structures, the memory allocation can be done dynamically when required. These data structures are efficient considering the space complexity of the program.

Few examples of dynamic linear data structures include: linked lists, stacks and queues.

Non-Linear Data Structures

Non-Linear data structures store the data in the form of a hierarchy. Therefore, in contrast to the linear data structures, the data can be found in multiple levels and are difficult to traverse through.

However, they are designed to overcome the issues and limitations of linear data structures. For instance, the main disadvantage of linear data structures is the memory allocation. Since the data is allocated sequentially in linear data structures, each element in these data structures uses one whole memory block. However, if the data uses less memory than the assigned block can hold, the extra memory space in the block is wasted. Therefore, non-linear data structures are introduced. They decrease the space complexity and use the memory optimally.

Few types of non-linear data structures are −

Graphs

Trees

Tries

Maps

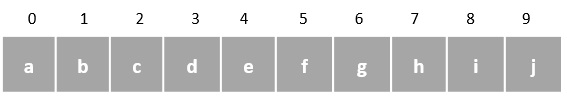

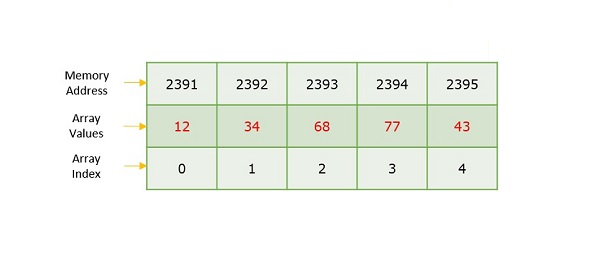

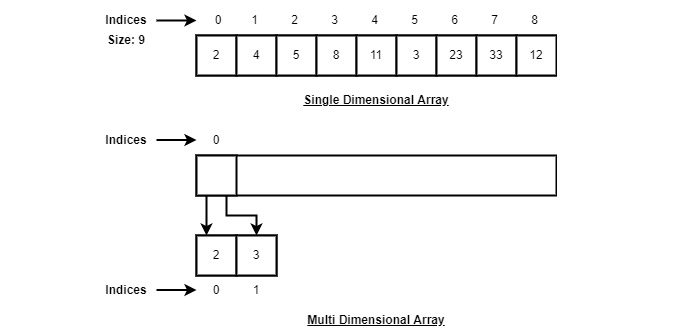

Array Data Structure

Array is a type of linear data structure that is defined as a collection of elements with same or different data types. They exist in both single dimension and multiple dimensions. These data structures come into picture when there is a necessity to store multiple elements of similar nature together at one place.

The difference between an array index and a memory address is that the array index acts like a key value to label the elements in the array. However, a memory address is the starting address of free memory available.

Following are the important terms to understand the concept of Array.

Element − Each item stored in an array is called an element.

Index − Each location of an element in an array has a numerical index, which is used to identify the element.

Syntax

Creating an array in C and C++ programming languages −

data_type array_name[array_size] = {elements separated using commas}

or,

data_type array_name[array_size];

Creating an array in Java programming language −

data_type[] array_name = {elements separated by commas}

or,

data_type array_name = new data_type[array_size];

Need for Arrays

Arrays are used as solutions to many problems from the small sorting problems to more complex problems like travelling salesperson problem. There are many data structures other than arrays that provide efficient time and space complexity for these problems, so what makes using arrays better? The answer lies in the random access lookup time.

Arrays provide O(1) random access lookup time. That means, accessing the 1st index of the array and the 1000th index of the array will both take the same time. This is due to the fact that array comes with a pointer and an offset value. The pointer points to the right location of the memory and the offset value shows how far to look in the said memory.

array_name[index]

| |

Pointer Offset

Therefore, in an array with 6 elements, to access the 1st element, array is pointed towards the 0th index. Similarly, to access the 6th element, array is pointed towards the 5th index.

Array Representation

Arrays are represented as a collection of buckets where each bucket stores one element. These buckets are indexed from 0 to n-1, where n is the size of that particular array. For example, an array with size 10 will have buckets indexed from 0 to 9.

This indexing will be similar for the multidimensional arrays as well. If it is a 2-dimensional array, it will have sub-buckets in each bucket. Then it will be indexed as array_name[m][n], where m and n are the sizes of each level in the array.

As per the above illustration, following are the important points to be considered.

Index starts with 0.

Array length is 9 which means it can store 9 elements.

Each element can be accessed via its index. For example, we can fetch an element at index 6 as 23.

Basic Operations in the Arrays

The basic operations in the Arrays are insertion, deletion, searching, display, traverse, and update. These operations are usually performed to either modify the data in the array or to report the status of the array.

Following are the basic operations supported by an array.

Traverse − print all the array elements one by one.

Insertion − Adds an element at the given index.

Deletion − Deletes an element at the given index.

Search − Searches an element using the given index or by the value.

Update − Updates an element at the given index.

Display − Displays the contents of the array.

In C, when an array is initialized with size, then it assigns defaults values to its elements in following order.

| Data Type | Default Value |

|---|---|

| bool | false |

| char | 0 |

| int | 0 |

| float | 0.0 |

| double | 0.0f |

| void | |

| wchar_t | 0 |

Insertion Operation

In the insertion operation, we are adding one or more elements to the array. Based on the requirement, a new element can be added at the beginning, end, or any given index of array. This is done using input statements of the programming languages.

Algorithm

Following is an algorithm to insert elements into a Linear Array until we reach the end of the array −

1. Start 2. Create an Array of a desired datatype and size. 3. Initialize a variable i as 0. 4. Enter the element at ith index of the array. 5. Increment i by 1. 6. Repeat Steps 4 & 5 until the end of the array. 7. Stop

Here, we see a practical implementation of insertion operation, where we add data at the end of the array −

Example

#include <stdio.h>

int main(){

int LA[3] = {}, i;

printf("Array Before Insertion:\n");

for(i = 0; i < 3; i++)

printf("LA[%d] = %d \n", i, LA[i]);

printf("Inserting Elements.. \n");

printf("The array elements after insertion :\n"); // prints array values

for(i = 0; i < 3; i++) {

LA[i] = i + 2;

printf("LA[%d] = %d \n", i, LA[i]);

}

return 0;

}

Output

Array Before Insertion: LA[0] = 0 LA[1] = 0 LA[2] = 0 Inserting Elements.. The array elements after insertion : LA[0] = 2 LA[1] = 3 LA[2] = 4

#include <iostream>

using namespace std;

int main(){

int LA[3] = {}, i;

cout << "Array Before Insertion:" << endl;

for(i = 0; i < 3; i++)

cout << "LA[" << i <<"] = " << LA[i] << endl;

//prints garbage values

cout << "Inserting elements.." <<endl;

cout << "Array After Insertion:" << endl; // prints array values

for(i = 0; i < 5; i++) {

LA[i] = i + 2;

cout << "LA[" << i <<"] = " << LA[i] << endl;

}

return 0;

}

Output

Array Before Insertion: LA[0] = 0 LA[1] = 0 LA[2] = 0 Inserting elements.. Array After Insertion: LA[0] = 2 LA[1] = 3 LA[2] = 4 LA[3] = 5 LA[4] = 6

public class ArrayDemo {

public static void main(String []args) {

int LA[] = new int[3];

System.out.println("Array Before Insertion:");

for(int i = 0; i < 3; i++)

System.out.println("LA[" + i + "] = " + LA[i]); //prints empty array

System.out.println("Inserting Elements..");

// Printing Array after Insertion

System.out.println("Array After Insertion:");

for(int i = 0; i < 3; i++) {

LA[i] = i+3;

System.out.println("LA[" + i + "] = " + LA[i]);

}

}

}

Output

Array Before Insertion: LA[0] = 0 LA[1] = 0 LA[2] = 0 Inserting Elements.. Array After Insertion: LA[0] = 3 LA[1] = 4 LA[2] = 5

# python program to insert element using insert operation

def insert(arr, element):

arr.append(element)

# Driver's code

if __name__ == '__main__':

# declaring array and value to insert

LA = [0, 0, 0]

x = 0

# array before inserting an element

print("Array Before Insertion: ")

for x in range(len(LA)):

print("LA", [x], " = " , LA[x])

print("Inserting elements....")

# array after Inserting element

for x in range(len(LA)):

LA.append(x);

LA[x] = x+1;

print("Array After Insertion: ")

for x in range(len(LA)):

print("LA", [x], " = " , LA[x])

Output

Array Before Insertion: LA [0] = 0 LA [1] = 0 LA [2] = 0 Inserting elements.... Array After Insertion: LA [0] = 1 LA [1] = 2 LA [2] = 3 LA [3] = 0 LA [4] = 1 LA [5] = 2

For other variations of array insertion operation, click here.

Deletion Operation

In this array operation, we delete an element from the particular index of an array. This deletion operation takes place as we assign the value in the consequent index to the current index.

Algorithm

Consider LA is a linear array with N elements and K is a positive integer such that K<=N. Following is the algorithm to delete an element available at the Kth position of LA.

1. Start 2. Set J = K 3. Repeat steps 4 and 5 while J < N 4. Set LA[J] = LA[J + 1] 5. Set J = J+1 6. Set N = N-1 7. Stop

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

void main(){

int LA[] = {1,3,5};

int n = 3;

int i;

printf("The original array elements are :\n");

for(i = 0; i<n; i++)

printf("LA[%d] = %d \n", i, LA[i]);

for(i = 1; i<n; i++) {

LA[i] = LA[i+1];

n = n - 1;

}

printf("The array elements after deletion :\n");

for(i = 0; i<n; i++)

printf("LA[%d] = %d \n", i, LA[i]);

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 The array elements after deletion : LA[0] = 1 LA[1] = 5

#include <iostream>

using namespace std;

int main(){

int LA[] = {1,3,5};

int i, n = 3;

cout << "The original array elements are :"<<endl;

for(i = 0; i<n; i++) {

cout << "LA[" << i << "] = " << LA[i] << endl;

}

for(i = 1; i<n; i++) {

LA[i] = LA[i+1];

n = n - 1;

}

cout << "The array elements after deletion :"<<endl;

for(i = 0; i<n; i++) {

cout << "LA[" << i << "] = " << LA[i] <<endl;

}

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 The array elements after deletion : LA[0] = 1 LA[1] = 5

public class ArrayDemo {

public static void main(String []args) {

int LA[] = new int[3];

int n = LA.length;

System.out.println("Array Before Deletion:");

for(int i = 0; i < n; i++) {

LA[i] = i + 3;

System.out.println("LA[" + i + "] = " + LA[i]);

}

for(int i = 1; i<n-1; i++) {

LA[i] = LA[i+1];

n = n - 1;

}

System.out.println("Array After Deletion:");

for(int i = 0; i < n; i++) {

System.out.println("LA[" + i + "] = " + LA[i]);

}

}

}

Output

Array Before Deletion: LA[0] = 3 LA[1] = 4 LA[2] = 5 Array After Deletion: LA[0] = 3 LA[1] = 5

#python program to delete the value using delete operation

if __name__ == '__main__':

# Declaring array and deleting value

LA = [0,0,0]

n = len(LA)

print("Array Before Deletion: ")

for x in range(len(LA)):

LA.append(x)

LA[x] = x + 3

print("LA", [x], " = " , LA[x])

# delete the value if exists

# or show error it does not exist in the list

for x in range(1, n-1):

LA[x] = LA[x+1]

n = n-1

print("Array After Deletion: ")

for x in range(n):

print("LA", [x], " = " , LA[x])

Output

Array Before Deletion: LA [0] = 3 LA [1] = 4 LA [2] = 5 Array After Deletion: LA [0] = 3 LA [1] = 5

Search Operation

Searching an element in the array using a key; The key element sequentially compares every value in the array to check if the key is present in the array or not.

Algorithm

Consider LA is a linear array with N elements and K is a positive integer such that K<=N. Following is the algorithm to find an element with a value of ITEM using sequential search.

1. Start 2. Set J = 0 3. Repeat steps 4 and 5 while J < N 4. IF LA[J] is equal ITEM THEN GOTO STEP 6 5. Set J = J +1 6. PRINT J, ITEM 7. Stop

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

void main(){

int LA[] = {1,3,5,7,8};

int item = 5, n = 5;

int i = 0, j = 0;

printf("The original array elements are :\n");

for(i = 0; i<n; i++) {

printf("LA[%d] = %d \n", i, LA[i]);

}

for(i = 0; i<n; i++) {

if( LA[i] == item ) {

printf("Found element %d at position %d\n", item, i+1);

}

}

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8 Found element 5 at position 3

#include <iostream>

using namespace std;

int main(){

int LA[] = {1,3,5,7,8};

int item = 5, n = 5;

int i = 0;

cout << "The original array elements are : " <<endl;

for(i = 0; i<n; i++) {

cout << "LA[" << i << "] = " << LA[i] << endl;

}

for(i = 0; i<n; i++) {

if( LA[i] == item ) {

cout << "Found element " << item << " at position " << i+1 <<endl;

}

}

return 0;

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8 Found element 5 at position 3

public class ArrayDemo{

public static void main(String []args){

int LA[] = new int[5];

System.out.println("Array:");

for(int i = 0; i < 5; i++) {

LA[i] = i + 3;

System.out.println("LA[" + i + "] = " + LA[i]);

}

for(int i = 0; i < 5; i++) {

if(LA[i] == 6)

System.out.println("Element " + 6 + " is found at index " + i);

}

}

}

Output

Array: LA[0] = 3 LA[1] = 4 LA[2] = 5 LA[3] = 6 LA[4] = 7 Element 6 is found at index 3

#search operation using python

def findElement(arr, n, value):

for i in range(n):

if (arr[i] == value):

return i

# If the key is not found

return -1

# Driver's code

if __name__ == '__main__':

LA = [1,3,5,7,8]

print("Array element are: ")

for x in range(len(LA)):

print("LA", [x], " = ", LA[x])

value = 5

n = len(LA)

# element found using search operation

index = findElement(LA, n, value)

if index != -1:

print("Element", value, "Found at position = " + str(index + 1))

else:

print("Element not found")

Output

Array element are: LA [0] = 1 LA [1] = 3 LA [2] = 5 LA [3] = 7 LA [4] = 8 Element 5 Found at position = 3

Traversal Operation

This operation traverses through all the elements of an array. We use loop statements to carry this out.

Algorithm

Following is the algorithm to traverse through all the elements present in a Linear Array −

1 Start 2. Initialize an Array of certain size and datatype. 3. Initialize another variable i with 0. 4. Print the ith value in the array and increment i. 5. Repeat Step 4 until the end of the array is reached. 6. End

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

int main(){

int LA[] = {1,3,5,7,8};

int item = 10, k = 3, n = 5;

int i = 0, j = n;

printf("The original array elements are :\n");

for(i = 0; i<n; i++) {

printf("LA[%d] = %d \n", i, LA[i]);

}

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8

#include <iostream>

using namespace std;

int main(){

int LA[] = {1,3,5,7,8};

int item = 10, k = 3, n = 5;

int i = 0, j = n;

cout << "The original array elements are:\n";

for(i = 0; i<n; i++)

cout << "LA[" << i << "] = " << LA[i] << endl;

return 0;

}

Output

The original array elements are: LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8

public class ArrayDemo {

public static void main(String []args) {

int LA[] = new int[5];

System.out.println("The array elements are: ");

for(int i = 0; i < 5; i++) {

LA[i] = i + 2;

System.out.println("LA[" + i + "] = " + LA[i]);

}

}

}

Output

The array elements are: LA[0] = 2 LA[1] = 3 LA[2] = 4 LA[3] = 5 LA[4] = 6

# Python code to iterate over a array using python

LA = [1, 3, 5, 7, 8]

# length of the elements

length = len(LA)

# Traversing the elements using For loop and range

# same as 'for x in range(len(array))'

print("Array elements are: ")

for x in range(length):

print("LA", [x], " = ", LA[x])

Output

Array elements are: LA [0] = 1 LA [1] = 3 LA [2] = 5 LA [3] = 7 LA [4] = 8

Update Operation

Update operation refers to updating an existing element from the array at a given index.

Algorithm

Consider LA is a linear array with N elements and K is a positive integer such that K<=N. Following is the algorithm to update an element available at the Kth position of LA.

1. Start 2. Set LA[K-1] = ITEM 3. Stop

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

void main(){

int LA[] = {1,3,5,7,8};

int k = 3, n = 5, item = 10;

int i, j;

printf("The original array elements are :\n");

for(i = 0; i<n; i++) {

printf("LA[%d] = %d \n", i, LA[i]);

}

LA[k-1] = item;

printf("The array elements after updation :\n");

for(i = 0; i<n; i++) {

printf("LA[%d] = %d \n", i, LA[i]);

}

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8 The array elements after updation : LA[0] = 1 LA[1] = 3 LA[2] = 10 LA[3] = 7 LA[4] = 8

#include <iostream>

using namespace std;

int main(){

int LA[] = {1,3,5,7,8};

int item = 10, k = 3, n = 5;

int i = 0, j = n;

cout << "The original array elements are :\n";

for(i = 0; i<n; i++)

cout << "LA[" << i << "] = " << LA[i] << endl;

LA[2] = item;

cout << "The array elements after updation are :\n";

for(i = 0; i<n; i++)

cout << "LA[" << i << "] = " << LA[i] << endl;

return 0;

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8 The array elements after updation are : LA[0] = 1 LA[1] = 3 LA[2] = 10 LA[3] = 7 LA[4] = 8

public class ArrayDemo {

public static void main(String []args) {

int LA[] = new int[5];

int item = 15;

System.out.println("The array elements are: ");

for(int i = 0; i < 5; i++) {

LA[i] = i + 2;

System.out.println("LA[" + i + "] = " + LA[i]);

}

LA[3] = item;

System.out.println("The array elements after updation are: ");

for(int i = 0; i < 5; i++)

System.out.println("LA[" + i + "] = " + LA[i]);

}

}

Output

The array elements are: LA[0] = 2 LA[1] = 3 LA[2] = 4 LA[3] = 5 LA[4] = 6 The array elements after updation are: LA[0] = 2 LA[1] = 3 LA[2] = 4 LA[3] = 15 LA[4] = 6

#update operation using python

#Declaring array elements

LA = [1,3,5,7,8]

#before updation

print("The original array elements are :");

for x in range(len(LA)):

print("LA", [x], " = ", LA[x])

#after updation

LA[2] = 10

print("The array elements after updation are: ")

for x in range(len(LA)):

print("LA", [x], " = ", LA[x])

Output

The original array elements are : LA [0] = 1 LA [1] = 3 LA [2] = 5 LA [3] = 7 LA [4] = 8 The array elements after updation are: LA [0] = 1 LA [1] = 3 LA [2] = 10 LA [3] = 7 LA [4] = 8

Display Operation

This operation displays all the elements in the entire array using a print statement.

Algorithm

1. Start 2. Print all the elements in the Array 3. Stop

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

int main(){

int LA[] = {1,3,5,7,8};

int n = 5;

int i;

printf("The original array elements are :\n");

for(i = 0; i<n; i++) {

printf("LA[%d] = %d \n", i, LA[i]);

}

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8

#include <iostream>

using namespace std;

int main(){

int LA[] = {1,3,5,7,8};

int n = 5;

int i;

cout << "The original array elements are :\n";

for(i = 0; i<n; i++)

cout << "LA[" << i << "] = " << LA[i] << endl;

return 0;

}

Output

The original array elements are : LA[0] = 1 LA[1] = 3 LA[2] = 5 LA[3] = 7 LA[4] = 8

public class ArrayDemo {

public static void main(String []args) {

int LA[] = new int[5];

System.out.println("The array elements are: ");

for(int i = 0; i < 5; i++) {

LA[i] = i + 2;

System.out.println("LA[" + i + "] = " + LA[i]);

}

}

}

Output

The array elements are: LA[0] = 2 LA[1] = 3 LA[2] = 4 LA[3] = 5 LA[4] = 6

#Display operation using python

#Display operation using python

#Declaring array elements

LA = [2,3,4,5,6]

#Displaying the array

print("The array elements are: ")

for x in range(len(LA)):

print("LA", [x], " = " , LA[x])

Output

The array elements are: LA [0] = 2 LA [1] = 3 LA [2] = 4 LA [3] = 5 LA [4] = 6

Linked List Data Structure

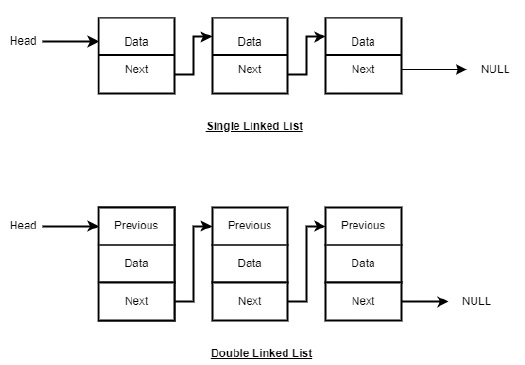

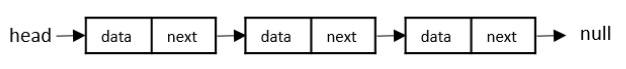

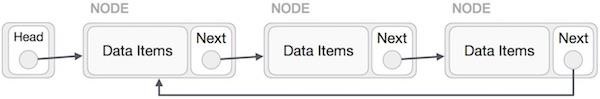

If arrays accommodate similar types of data types, linked lists consist of elements with different data types that are also arranged sequentially.

But how are these linked lists created?

A linked list is a collection of nodes connected together via links. These nodes consist of the data to be stored and a pointer to the address of the next node within the linked list. In the case of arrays, the size is limited to the definition, but in linked lists, there is no defined size. Any amount of data can be stored in it and can be deleted from it.

There are three types of linked lists −

Singly Linked List − The nodes only point to the address of the next node in the list.

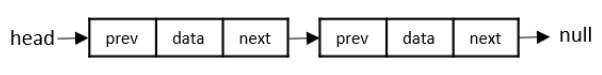

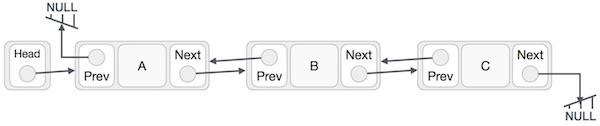

Doubly Linked List − The nodes point to the addresses of both previous and next nodes.

Circular Linked List − The last node in the list will point to the first node in the list. It can either be singly linked or doubly linked.

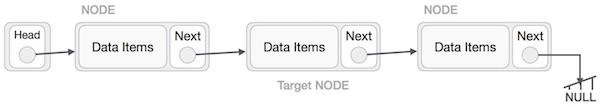

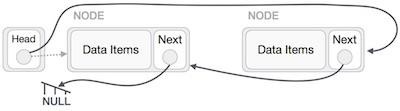

Linked List Representation

Linked list can be visualized as a chain of nodes, where every node points to the next node.

As per the above illustration, following are the important points to be considered.

Linked List contains a link element called first (head).

Each link carries a data field(s) and a link field called next.

Each link is linked with its next link using its next link.

Last link carries a link as null to mark the end of the list.

Types of Linked List

Following are the various types of linked list.

Singly Linked Lists

Singly linked lists contain two buckets in one node; one bucket holds the data and the other bucket holds the address of the next node of the list. Traversals can be done in one direction only as there is only a single link between two nodes of the same list.

Doubly Linked Lists

Doubly Linked Lists contain three buckets in one node; one bucket holds the data and the other buckets hold the addresses of the previous and next nodes in the list. The list is traversed twice as the nodes in the list are connected to each other from both sides.

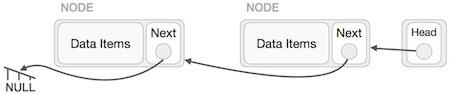

Circular Linked Lists

Circular linked lists can exist in both singly linked list and doubly linked list.

Since the last node and the first node of the circular linked list are connected, the traversal in this linked list will go on forever until it is broken.

Basic Operations in the Linked Lists

The basic operations in the linked lists are insertion, deletion, searching, display, and deleting an element at a given key. These operations are performed on Singly Linked Lists as given below −

Insertion − Adds an element at the beginning of the list.

Deletion − Deletes an element at the beginning of the list.

Display − Displays the complete list.

Search − Searches an element using the given key.

Delete − Deletes an element using the given key.

Insertion Operation

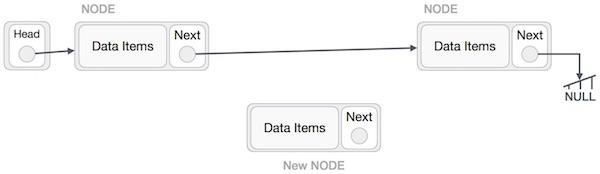

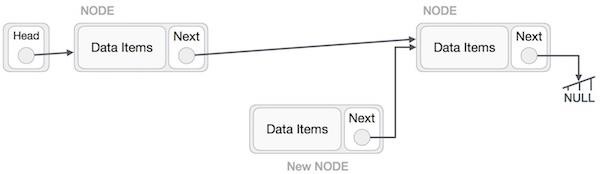

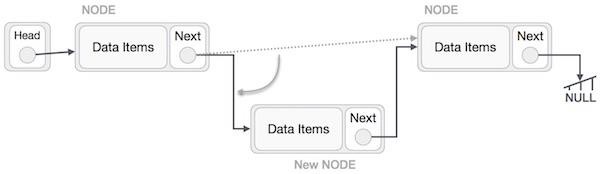

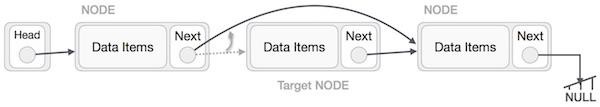

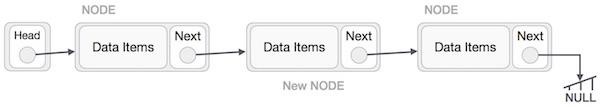

Adding a new node in linked list is a more than one step activity. We shall learn this with diagrams here. First, create a node using the same structure and find the location where it has to be inserted.

Imagine that we are inserting a node B (NewNode), between A (LeftNode) and C (RightNode). Then point B.next to C −

NewNode.next −> RightNode;

It should look like this −

Now, the next node at the left should point to the new node.

LeftNode.next −> NewNode;

This will put the new node in the middle of the two. The new list should look like this −

Insertion in linked list can be done in three different ways. They are explained as follows −

Insertion at Beginning

In this operation, we are adding an element at the beginning of the list.

Algorithm

1. START 2. Create a node to store the data 3. Check if the list is empty 4. If the list is empty, add the data to the node and assign the head pointer to it. 5 If the list is not empty, add the data to a node and link to the current head. Assign the head to the newly added node. 6. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

printf("Linked List: ");

// print list

printList();

}

Output

Linked List: [ 50 44 30 22 12 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

cout << "\n[";

//start from the beginning

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

cout << "]";

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

int main(){

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

cout << "Linked List: ";

// print list

printList();

}

Output

Linked List: [ 50 44 30 22 12 ]

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

insertatbegin(33);

System.out.println("Linked List: ");

// print list

printList();

}

}

Output

Linked List: [33 50 44 30 22 12 ]

class Node:

def __init__(self, data=None):

self.data = data

self.next = None

class SLL:

def __init__(self):

self.head = None

# Print the linked list

def listprint(self):

printval = self.head

print("Linked List: ")

while printval is not None:

print (printval.data)

printval = printval.next

def AddAtBeginning(self,newdata):

NewNode = Node(newdata)

# Update the new nodes next val to existing node

NewNode.next = self.head

self.head = NewNode

l1 = SLL()

l1.head = Node("731")

e2 = Node("672")

e3 = Node("63")

l1.head.next = e2

e2.next = e3

l1.AddAtBeginning("122")

l1.listprint()

Output

Linked List: 122 731 672 63

Insertion at Ending

In this operation, we are adding an element at the ending of the list.

Algorithm

1. START 2. Create a new node and assign the data 3. Find the last node 4. Point the last node to new node 5. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void insertatend(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

struct node *linkedlist = head;

// point it to old first node

while(linkedlist->next != NULL)

linkedlist = linkedlist->next;

//point first to new first node

linkedlist->next = lk;

}

void main(){

int k=0;

insertatbegin(12);

insertatend(22);

insertatend(30);

insertatend(44);

insertatend(50);

printf("Linked List: ");

// print list

printList();

}

Output

Linked List: [ 12 22 30 44 50 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

cout << "\n[";

//start from the beginning

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

cout << "]";

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void insertatend(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

struct node *linkedlist = head;

// point it to old first node

while(linkedlist->next != NULL)

linkedlist = linkedlist->next;

//point first to new first node

linkedlist->next = lk;

}

int main(){

insertatbegin(12);

insertatend(22);

insertatbegin(30);

insertatend(44);

insertatbegin(50);

cout << "Linked List: ";

// print list

printList();

}

Output

Linked List: [ 50 30 12 22 44 ]

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

static void insertatend(int data) {

//create a link

node lk = new node(data);

node linkedlist = head;

// point it to old first node

while(linkedlist.next != null)

linkedlist = linkedlist.next;

//point first to new first node

linkedlist.next = lk;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatend(44);

insertatend(50);

insertatend(33);

System.out.println("Linked List: ");

// print list

printList();

}

}

Output

Linked List: [ 30 22 12 44 50 33 ]

class Node:

def __init__(self, data=None):

self.data = data

self.next = None

class LL:

def __init__(self):

self.head = None

def listprint(self):

val = self.head

print("Linked List:")

while val is not None:

print(val.data)

val = val.next

l1 = LL()

l1.head = Node("23")

l2 = Node("12")

l3 = Node("7")

l4 = Node("14")

l5 = Node("61")

# Linking the first Node to second node

l1.head.next = l2

# Linking the second Node to third node

l2.next = l3

l3.next = l4

l4.next = l5

l1.listprint()

Output

Linked List: 23 12 7 14 61

Insertion at a Given Position

In this operation, we are adding an element at any position within the list.

Algorithm

1. START 2. Create a new node and assign data to it 3. Iterate until the node at position is found 4. Point first to new first node 5. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void insertafternode(struct node *list, int data){

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

lk->next = list->next;

list->next = lk;

}

void main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertafternode(head->next, 30);

printf("Linked List: ");

// print list

printList();

}

Output

Linked List: [ 22 12 30 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

cout << "\n[";

//start from the beginning

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

cout << "]";

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void insertafternode(struct node *list, int data){

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

lk->next = list->next;

list->next = lk;

}

int main(){

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertafternode(head->next,44);

insertafternode(head->next->next, 50);

cout << "Linked List: ";

// print list

printList();

}

Output

Linked List: [ 30 22 44 50 12 ]

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

static void insertafternode(node list, int data) {

node lk = new node(data);

lk.next = list.next;

list.next = lk;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertafternode(head.next, 50);

insertafternode(head.next.next, 33);

System.out.println("Linked List: ");

// print list

printList();

}

}

Output

Linked List: [44 30 50 33 22 12 ]

class Node:

def __init__(self, data=None):

self.data = data

self.next = None

class SLL:

def __init__(self):

self.head = None

# Print the linked list

def listprint(self):

printval = self.head

print("Linked List: ")

while printval is not None:

print (printval.data)

printval = printval.next

# Function to add node

def InsertAtPos(self,nodeatpos,newdata):

if nodeatpos is None:

print("The mentioned node is absent")

return

NewNode = Node(newdata)

NewNode.next = nodeatpos.next

nodeatpos.next = NewNode

l1 = SLL()

l1.head = Node("731")

e2 = Node("672")

e3 = Node("63")

l1.head.next = e2

e2.next = e3

l1.InsertAtPos(l1.head.next, "122")

l1.listprint()

Output

Linked List: 731 672 122 63

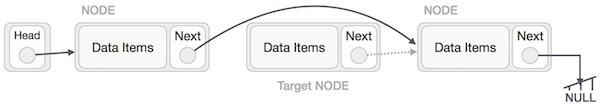

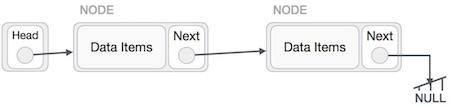

Deletion Operation

Deletion is also a more than one step process. We shall learn with pictorial representation. First, locate the target node to be removed, by using searching algorithms.

The left (previous) node of the target node now should point to the next node of the target node −

LeftNode.next > TargetNode.next;

This will remove the link that was pointing to the target node. Now, using the following code, we will remove what the target node is pointing at.

TargetNode.next > NULL;

We need to use the deleted node. We can keep that in memory otherwise we can simply deallocate memory and wipe off the target node completely.

Similar steps should be taken if the node is being inserted at the beginning of the list. While inserting it at the end, the second last node of the list should point to the new node and the new node will point to NULL.

Deletion in linked lists is also performed in three different ways. They are as follows −

Deletion at Beginning

In this deletion operation of the linked, we are deleting an element from the beginning of the list. For this, we point the head to the second node.

Algorithm

1. START 2. Assign the head pointer to the next node in the list 3. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deleteatbegin(){

head = head->next;

}

int main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(40);

insertatbegin(55);

printf("Linked List: ");

// print list

printList();

deleteatbegin();

printf("\nLinked List after deletion: ");

// print list

printList();

}

Output

Linked List: [ 55 40 30 22 12 ] Linked List after deletion: [ 40 30 22 12 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

cout << "\n[";

//start from the beginning

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

cout << "]";

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deleteatbegin(){

head = head->next;

}

int main(){

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

cout << "Linked List: ";

// print list

printList();

deleteatbegin();

cout << "Linked List after deletion: ";

printList();

}

Output

Linked List: [ 50 44 30 22 12 ] Linked List after deletion: [ 44 30 22 12 ]

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

static void deleteatbegin() {

head = head.next;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

insertatbegin(33);

System.out.println("Linked List: ");

// print list

printList();

deleteatbegin();

System.out.println("\nLinked List after deletion: ");

// print list

printList();

}

}

Output

Linked List: [ 33 50 44 30 22 12 ] Linked List after deletion: [50 44 30 22 12 ]

#python code for deletion at beginning using linked list.

from typing import Optional

class Node:

def __init__(self, data: int, next: Optional['Node'] = None):

self.data = data

self.next = next

class LinkedList:

def __init__(self):

self.head = None

#display the list

def print_list(self):

p = self.head

print("\n[", end="")

while p:

print(f" {p.data} ", end="")

p = p.next

print("]")

#Insertion at the beginning

def insert_at_begin(self, data: int):

lk = Node(data)

#point it to old first node

lk.next = self.head

#point firt to new first node

self.head = lk

def delete_at_begin(self):

self.head = self.head.next

if __name__ == "__main__":

linked_list = LinkedList()

linked_list.insert_at_begin(12)

linked_list.insert_at_begin(22)

linked_list.insert_at_begin(30)

linked_list.insert_at_begin(44)

linked_list.insert_at_begin(50)

#print list

print("Linked List: ", end="")

linked_list.print_list()

linked_list.delete_at_begin()

print("Linked List after deletion: ", end="")

linked_list.print_list()

Output

Linked List: [ 50 44 30 22 12 ] Linked List after deletion: [ 44 30 22 12 ]

Deletion at Ending

In this deletion operation of the linked, we are deleting an element from the ending of the list.

Algorithm

1. START 2. Iterate until you find the second last element in the list. 3. Assign NULL to the second last element in the list. 4. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deleteatend(){

struct node *linkedlist = head;

while (linkedlist->next->next != NULL)

linkedlist = linkedlist->next;

linkedlist->next = NULL;

}

void main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(40);

insertatbegin(55);

printf("Linked List: ");

// print list

printList();

deleteatend();

printf("\nLinked List after deletion: ");

// print list

printList();

}

Output

Linked List: [ 55 40 30 22 12 ] Linked List after deletion: [ 55 40 30 22 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// Displaying the list

void printList(){

struct node *p = head;

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

}

// Insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deleteatend(){

struct node *linkedlist = head;

while (linkedlist->next->next != NULL)

linkedlist = linkedlist->next;

linkedlist->next = NULL;

}

int main(){

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

cout << "Linked List: ";

// print list

printList();

deleteatend();

cout << "\nLinked List after deletion: ";

printList();

}

Output

Linked List: 50 44 30 22 12 Linked List after deletion: 50 44 30 22

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

static void deleteatend() {

node linkedlist = head;

while (linkedlist.next.next != null)

linkedlist = linkedlist.next;

linkedlist.next = null;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

insertatbegin(33);

System.out.println("Linked List: ");

// print list

printList();

//deleteatbegin();

deleteatend();

System.out.println("\nLinked List after deletion: ");

// print list

printList();

}

}

Output

Linked List: [ 33 50 44 30 22 12 ] Linked List after deletion: [ 33 50 44 30 22 ]

#python code for deletion at beginning using linked list.

class Node:

def __init__(self, data=None):

self.data = data

self.next = None

class LinkedList:

def __init__(self):

self.head = None

#Displaying the list

def printList(self):

p = self.head

print("\n[", end="")

while p != None:

print(" " + str(p.data) + " ", end="")

p = p.next

print("]")

#Insertion at the beginning

def insertatbegin(self, data):

#create a link

lk = Node(data)

#point it to old first node

lk.next = self.head

#point first to new first node

self.head = lk

def deleteatend(self):

linkedlist = self.head

while linkedlist.next.next != None:

linkedlist = linkedlist.next

linkedlist.next = None

if __name__ == "__main__":

linked_list = LinkedList()

linked_list.insertatbegin(12)

linked_list.insertatbegin(22)

linked_list.insertatbegin(30)

linked_list.insertatbegin(40)

linked_list.insertatbegin(55)

#print list

print("Linked List: ", end="")

linked_list.printList()

linked_list.deleteatend()

print("Linked List after deletion: ", end="")

linked_list.printList()

Output

Linked List: [ 55 40 30 22 12 ] Linked List after deletion: [ 55 40 30 22 ]

Deletion at a Given Position

In this deletion operation of the linked, we are deleting an element at any position of the list.

Algorithm

1. START 2. Iterate until find the current node at position in the list 3. Assign the adjacent node of current node in the list to its previous node. 4. END

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deletenode(int key){

struct node *temp = head, *prev;

if (temp != NULL && temp->data == key) {

head = temp->next;

return;

}

// Find the key to be deleted

while (temp != NULL && temp->data != key) {

prev = temp;

temp = temp->next;

}

// If the key is not present

if (temp == NULL) return;

// Remove the node

prev->next = temp->next;

}

void main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(40);

insertatbegin(55);

printf("Linked List: ");

// print list

printList();

deletenode(30);

printf("\nLinked List after deletion: ");

// print list

printList();

}

Output

Linked List: [ 55 40 30 22 12 ] Linked List after deletion: [ 55 40 22 12 ]

#include <bits/stdc++.h>

#include <string>

using namespace std;

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

cout << "\n[";

//start from the beginning

while(p != NULL) {

cout << " " << p->data << " ";

p = p->next;

}

cout << "]";

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void deletenode(int key){

struct node *temp = head, *prev;

if (temp != NULL && temp->data == key) {

head = temp->next;

return;

}

// Find the key to be deleted

while (temp != NULL && temp->data != key) {

prev = temp;

temp = temp->next;

}

// If the key is not present

if (temp == NULL) return;

// Remove the node

prev->next = temp->next;

}

int main(){

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

cout << "Linked List: ";

// print list

printList();

deletenode(30);

cout << "Linked List after deletion: ";

printList();

}

Output

Linked List: [ 50 44 30 22 12 ]Linked List after deletion: [ 50 44 22 12 ]

public class Linked_List {

static class node {

int data;

node next;

node (int value) {

data = value;

next = null;

}

}

static node head;

// display the list

static void printList() {

node p = head;

System.out.print("\n[");

//start from the beginning

while(p != null) {

System.out.print(" " + p.data + " ");

p = p.next;

}

System.out.print("]");

}

//insertion at the beginning

static void insertatbegin(int data) {

//create a link

node lk = new node(data);;

// point it to old first node

lk.next = head;

//point first to new first node

head = lk;

}

static void deletenode(int key) {

node temp = head;

node prev = null;

if (temp != null && temp.data == key) {

head = temp.next;

return;

}

// Find the key to be deleted

while (temp != null && temp.data != key) {

prev = temp;

temp = temp.next;

}

// If the key is not present

if (temp == null) return;

// Remove the node

prev.next = temp.next;

}

public static void main(String args[]) {

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(44);

insertatbegin(50);

insertatbegin(33);

System.out.println("Linked List: ");

// print list

printList();

//deleteatbegin();

//deleteatend();

deletenode(12);

System.out.println("\nLinked List after deletion: ");

// print list

printList();

}

}

Output

Linked List: [ 33 50 44 30 22 12 ] Linked List after deletion: [ 33 50 44 30 22 ]

#python code for deletion at given position using linked list.

class Node:

def __init__(self, data=None):

self.data = data

self.next = None

class LinkedList:

def __init__(self):

self.head = None

# display the list

def printList(self):

p = self.head

print("\n[", end="")

#start from the beginning

while(p != None):

print(" ", p.data, " ", end="")

p = p.next

print("]")

#insertion at the beginning

def insertatbegin(self, data):

#create a link

lk = Node(data)

# point it to old first node

lk.next = self.head

#point first to new first node

self.head = lk

def deletenode(self, key):

temp = self.head

if (temp != None and temp.data == key):

self.head = temp.next

return

# Find the key to be deleted

while (temp != None and temp.data != key):

prev = temp

temp = temp.next

# If the key is not present

if (temp == None):

return

# Remove the node

prev.next = temp.next

llist = LinkedList()

llist.insertatbegin(12)

llist.insertatbegin(22)

llist.insertatbegin(30)

llist.insertatbegin(40)

llist.insertatbegin(55)

print("Original Linked List: ", end="")

# print list

llist.printList()

llist.deletenode(30)

print("\nLinked List after deletion: ", end="")

# print list

llist.printList()

Output

Linked List: [ 55 40 30 22 12 ] Linked List after deletion: [ 55 40 22 12 ]

Reverse Operation

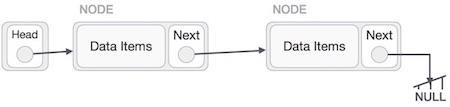

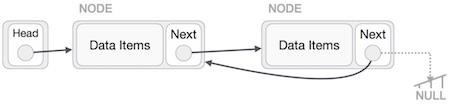

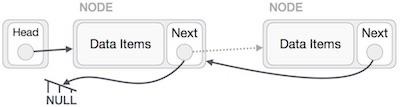

This operation is a thorough one. We need to make the last node to be pointed by the head node and reverse the whole linked list.

First, we traverse to the end of the list. It should be pointing to NULL. Now, we shall make it point to its previous node −

We have to make sure that the last node is not the last node. So we'll have some temp node, which looks like the head node pointing to the last node. Now, we shall make all left side nodes point to their previous nodes one by one.

Except the node (first node) pointed by the head node, all nodes should point to their predecessor, making them their new successor. The first node will point to NULL.

We'll make the head node point to the new first node by using the temp node.

Algorithm

Step by step process to reverse a linked list is as follows −

1 START 2. We use three pointers to perform the reversing: prev, next, head. 3. Point the current node to head and assign its next value to the prev node. 4. Iteratively repeat the step 3 for all the nodes in the list. 5. Assign head to the prev node.

Example

Following are the implementations of this operation in various programming languages −

#include <stdio.h>

#include <string.h>

#include <stdlib.h>

struct node {

int data;

struct node *next;

};

struct node *head = NULL;

struct node *current = NULL;

// display the list

void printList(){

struct node *p = head;

printf("\n[");

//start from the beginning

while(p != NULL) {

printf(" %d ",p->data);

p = p->next;

}

printf("]");

}

//insertion at the beginning

void insertatbegin(int data){

//create a link

struct node *lk = (struct node*) malloc(sizeof(struct node));

lk->data = data;

// point it to old first node

lk->next = head;

//point first to new first node

head = lk;

}

void reverseList(struct node** head){

struct node *prev = NULL, *cur=*head, *tmp;

while(cur!= NULL) {

tmp = cur->next;

cur->next = prev;

prev = cur;

cur = tmp;

}

*head = prev;

}

void main(){

int k=0;

insertatbegin(12);

insertatbegin(22);

insertatbegin(30);

insertatbegin(40);

insertatbegin(55);

printf("Linked List: ");

// print list

printList();

reverseList(&head);

printf("\nReversed Linked List: ");

printList();

}

Output

Linked List: [ 55 40 30 22 12 ] Reversed Linked List: [ 12 22 30 40 55 ]

#include <bits/stdc++.h>