Article Categories

- All Categories

-

Data Structure

Data Structure

-

Networking

Networking

-

RDBMS

RDBMS

-

Operating System

Operating System

-

Java

Java

-

MS Excel

MS Excel

-

iOS

iOS

-

HTML

HTML

-

CSS

CSS

-

Android

Android

-

Python

Python

-

C Programming

C Programming

-

C++

C++

-

C#

C#

-

MongoDB

MongoDB

-

MySQL

MySQL

-

Javascript

Javascript

-

PHP

PHP

-

Economics & Finance

Economics & Finance

What is SIMD Architecture?

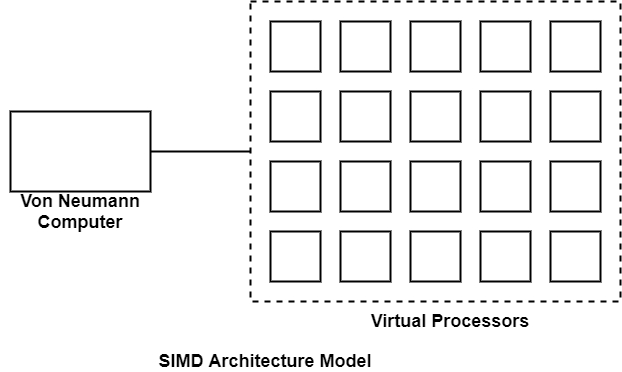

SIMD represents single-instruction multiple-data streams. The SIMD model of parallel computing includes two parts such as a front-end computer of the usual von Neumann style, and a processor array as displayed in the figure.

The processor array is a collection of identical synchronized processing elements adequate for simultaneously implementing the same operation on various data. Each processor in the array has a small amount of local memory where the distributed data resides while it is being processed in parallel.

The processor array is linked to the memory bus of the front end so that the front end can randomly create the local processor memories as if it were another memory.

A program can be developed and performed on the front end using a traditional serial programming language. The application program is performed by the front end in the usual serial method, but problem command to the processor array to carry out SIMD operations in parallel.

The similarity between serial and data-parallel programming is one of the valid points of data parallelism. Synchronization is created irrelevant by the lock-step synchronization of the processors. Processors either do nothing or similar operations at the same time.

In SIMD architecture, parallelism is exploited by using simultaneous operations across huge sets of data. This paradigm is most beneficial for solving issues that have several data that require to be upgraded on a wholesale basis. It is dynamically powerful in many regular scientific calculations.

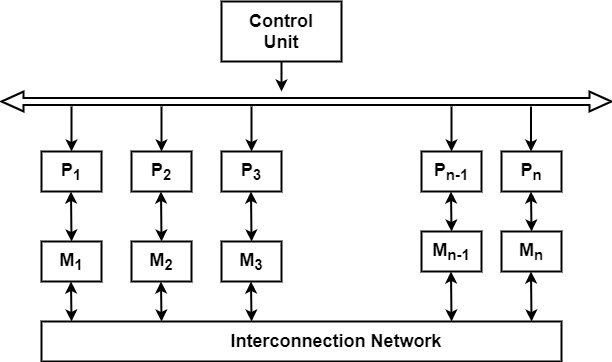

Two main configurations have been applied in SIMD machines. In the first scheme, each processor has its local memory. Processors can interact with each other through the interconnection network. If the interconnection network does not support a direct connection between given groups of processors, then this group can exchange information via an intermediate processor.

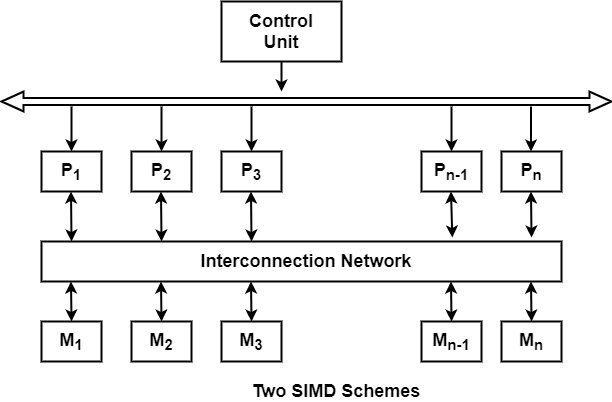

In the second SIMD scheme, processors and memory modules communicate with each other via the interconnection network. Two processors can send information between each other via intermediate memory module(s) or possibly via intermediate processor(s). The BSP (Burroughs’ Scientific Processor) used the second SIMD scheme.