What is MIMD Architecture?

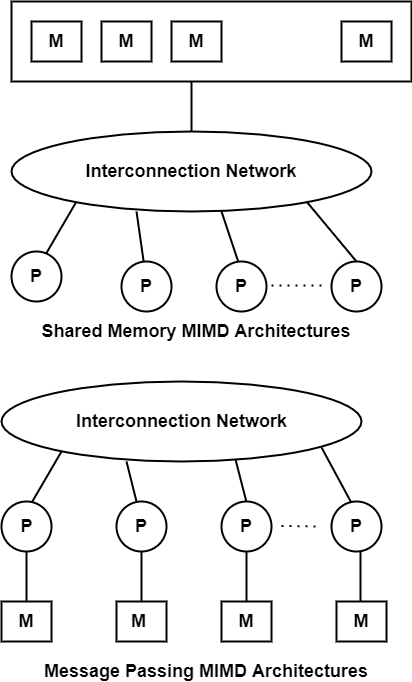

MIMD stands for multiple-instruction, multiple-data streams. It covers parallel architectures made of multiple processors and multiple memory modules linked by an interconnection network, where each processor can execute its own instruction stream on its own data. These architectures fall into two broad types: shared memory and message passing.

A shared memory system generally accomplishes interprocessor coordination through a global memory shared by all processors. These are frequently server systems whose processors communicate through a bus and a cache memory controller.

The bus/cache architecture alleviates the need for expensive multi-ported memories and interface circuitry, as well as the need to adopt a message-passing paradigm when developing application software. Because every processor has an equal opportunity to read and write memory, at the same access speed, these systems are also called SMP (symmetric multiprocessor) systems.
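As a rough illustration of the shared memory style described above, the following Python sketch lets several threads update one shared value. The names `run_shared_memory`, `num_workers`, and `increments` are hypothetical and chosen only for this example; Python threads stand in for processors, and a lock stands in for the hardware and software coordination a real SMP system would need.

```python
import threading


def run_shared_memory(num_workers=4, increments=1000):
    """Illustrative only: several workers update one shared counter."""
    shared = {"total": 0}      # plays the role of the global memory
    lock = threading.Lock()    # serializes access to the shared location

    def worker():
        for _ in range(increments):
            # Every worker reads and writes the same memory location,
            # so the update must be protected against interleaving.
            with lock:
                shared["total"] += 1

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return shared["total"]
```

Note that no data is ever copied between workers here: coordination happens entirely through the shared location, which is what removes the need for explicit send and receive calls in the application code.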

A message-passing system is also known as a distributed memory system. It generally combines a local memory and a processor at each node of the interconnection network. There is no global memory, so data must be transferred from one local memory to another using message passing. This is frequently done with a Send/Receive pair of commands, which the programmer must write into the application software.
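The Send/Receive pattern can be sketched as follows. This is an emulation under stated assumptions, not a real distributed system: Python threads play the role of nodes, a `queue.Queue` stands in for the interconnection network, and the names `node_sum` and `run_message_passing` are hypothetical. Each node touches only its own copy of the data and communicates solely by sending a message.

```python
import queue
import threading


def node_sum(local_data, outbox):
    """One node: compute on local memory only, then send the result."""
    partial = sum(local_data)
    outbox.put(partial)            # "Send": copy the result out as a message


def run_message_passing(chunks):
    """Illustrative only: distribute chunks to nodes and collect partial sums."""
    inbox = queue.Queue()          # stands in for the interconnection network
    nodes = [
        # list(chunk) gives each node its own private copy of the data
        threading.Thread(target=node_sum, args=(list(chunk), inbox))
        for chunk in chunks
    ]
    for n in nodes:
        n.start()

    total = 0
    for _ in nodes:
        total += inbox.get()       # "Receive": block until a message arrives

    for n in nodes:
        n.join()
    return total
```

For example, `run_message_passing([[1, 2], [3, 4], [5]])` starts three nodes and combines their three messages into one total. On an actual message-passing machine the queue would be replaced by the system's own send and receive primitives.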

Thus, programmers must understand the message-passing paradigm, which involves copying data between nodes and dealing with consistency problems. Commercial examples of message-passing architectures c. 1990 were the nCUBE, the iPSC/2, and various Transputer-based systems.