- Java Microservices Tutorial

- Java Microservices - Home

- Microservices - Introduction

- Microservices vs Monolith vs SOA

- Java Microservices - Environment Setup

- Java Microservices - Advantages of Spring Boot

- Java Microservices - Design Patterns

- Java Microservices - Domain Driven Design

- Java Microservices - Decomposition by Business Capability

- Java Microservices - Decomposition by Subdomain

- Java Microservices - Backend for Frontend

- Java Microservices - The Strangler Pattern

- Java Microservices - Synchronous Communication

- Java Microservices - Asynchronous Communication

- Java Microservices - Saga Pattern

- Java Microservices - Centralized Logging (ELK Stack)

- Java Microservices - Event Sourcing

- Java Microservices - CQRS Pattern

- Java Microservices - Sidecar Pattern

- Java Microservices - Service Mesh Pattern

- Java Microservices - Circuit Breaker Pattern

- Java Microservices - Distributed Tracing

- Java Microservices - Control Loop Pattern

- Java Microservices - Database Per Service

- Java Microservices - Bulkhead Pattern

- Java Microservices - Health Check API

- Java Microservices - Retry Pattern

- Java Microservices - Fallback Pattern

- Java Microservices Useful Resources

- Java Microservices Quick Guide

- Java Microservices Useful Resources

- Java Microservices Discussion

Java Microservices - Quick Guide

Microservices - Introduction

In today's fast-paced digital world, businesses demand agility, scalability, and resilience from their software applications. Traditional monolithic architectures, where all components are tightly integrated, often struggle to meet these demands. Enter Microservices - a revolutionary architectural approach that structures applications as a collection of small, independent services, each responsible for a specific business function. This article explores what microservices are, their key characteristics, benefits, challenges, and real-world applications.

What are Microservices?

Microservices, or microservice architecture, is a software design pattern where an application is broken down into multiple loosely coupled, independently deployable services. Each service −

Focuses on a single business capability (e.g., user authentication, payment processing, order management).

Runs in its own process and communicates via APIs (typically REST, gRPC, or message brokers like Kafka).

Can use different programming languages and databases, allowing teams to choose the best tech stack for each service.

Unlike monolithic applications, where a single failure can crash the entire system, microservices isolate faults, ensuring that one service's failure doesn't disrupt others.

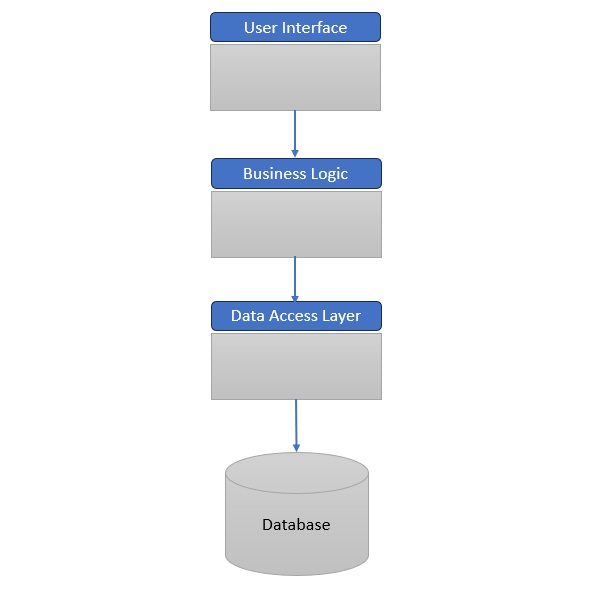

Example: Monolithic/Traditional Application Architecture

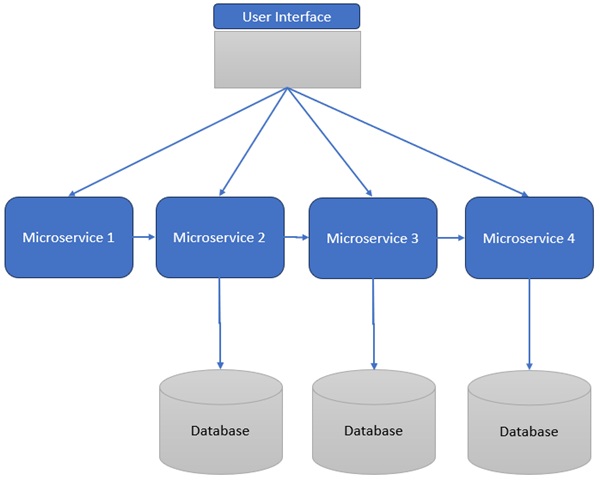

Example: Microservices Architecture

Benefits of Microservices

Faster Development & Deployment

Teams can work in parallel on different services, accelerating release cycles.

Improved Fault Isolation

A crash in one service (e.g., recommendation engine) doesn't bring down the entire app.

Technology Flexibility

Developers can use Python for machine learning services while using Go for high-performance APIs.

Easier Maintenance

Updating a single service is simpler than redeploying a monolithic app.

Better Scalability

Only high-demand services (e.g., checkout) need scaling, optimizing resource usage.

Challenges of Microservices

Increased Complexity

Managing multiple services, databases, and inter-service communication requires robust DevOps practices.

Testing & Debugging Difficulties

End-to-end testing is harder due to distributed dependencies.

Higher Operational Overhead

Requires advanced monitoring (e.g., Prometheus, Grafana) and orchestration tools (e.g., Kubernetes).

Real-World Applications

E-Commerce (Shopee, Amazon) −

Shopee uses microservices for payments, inventory, and delivery, allowing seamless scaling during sales events.

Amazon's transition from a monolith to microservices enabled faster feature rollouts (e.g., AWS, Prime Video).

Streaming Services (Spotify) −

Spotify's microservices handle playlists, recommendations, and podcasts independently, improving performance.

IoT & Smart Devices −

Microservices manage sensor data, analytics, and device control in IoT ecosystems (e.g., smart homes, connected cars).

FinTech (Banking & Payments) −

Banks use microservices for fraud detection, transactions, and customer profiles, ensuring high availability.

When to Use Microservices?

Microservices are ideal for −

Large, complex applications (e.g., enterprise SaaS, global e-commerce).

Teams needing agility (e.g., startups scaling rapidly).

Systems requiring high availability (e.g., financial services, IoT).

However, monoliths may still be better for small projects with limited scalability needs.

Conclusion

Microservices have become the "home" of modern software architecture, offering unparalleled flexibility, scalability, and resilience. While they introduce complexity, their benefits−faster development, fault isolation, and tech diversity−make them indispensable for businesses aiming to thrive in a digital-first world. Whether you're building the next Spotify or a smart home IoT system, microservices provide the foundation for innovation.

Microservices vs Monolith vs SOA

Introduction to Microservices

Microservices, also known as Microservice Architecture (MSA), is a software development approach where applications are structured as a collection of small, independent, and loosely coupled services. Each service is designed to perform a specific business function and communicates with other services via well-defined APIs.

Why Microservices?

Traditional monolithic applications bundle all functionalities into a single codebase, making them difficult to scale, maintain, and update.

Microservices break down applications into modular components, enabling faster development, independent scaling, and improved fault isolation.

Core Principles

Single Responsibility Principle (SRP) − Each service should handle one business capability (e.g., authentication, payment processing).

Decentralized Data Management − Services can use different databases (SQL, NoSQL) based on their needs.

Independent Deployment − Teams can update and deploy services without affecting others.

Evolution from Monolithic to Microservices Architecture

Monolithic Architecture

Single-tiered application where UI, business logic, and database are tightly integrated.

Pros − Simple to develop, test, and deploy initially.

Cons −

Difficult to scale (must scale the entire app).

Long deployment cycles (small changes require full redeployment).

High risk of system-wide failures.

Service-Oriented Architecture (SOA)

An intermediate step between monoliths and microservices.

Uses Enterprise Service Bus (ESB) for communication, leading to tight coupling and bottlenecks.

Microservices Architecture

Eliminates central orchestration (no ESB).

Lightweight protocols (REST, gRPC, Kafka) replace heavy middleware.

Each service is autonomous, improving agility and scalability.

Key Characteristics of Microservices

Modularity − Services are small and focused on a single function.

Decentralized Control − Teams can choose different tech stacks (e.g., Python for ML, Java for backend).

Resilience − Failures in one service don't crash the entire system.

Automated DevOps − CI/CD pipelines enable rapid deployments.

API-First Approach − Services communicate via APIs (REST, GraphQL).

Cloud-Native − Designed for containerization (Docker) and orchestration (Kubernetes).

Microservices vs. Monolithic vs. SOA

| Sr.No. | Aspect | Monolith | SOA | Microservices |

|---|---|---|---|---|

| 1 | Coupling | Tightly coupled | Loosely coupled (via ESB) | Loosely coupled (direct APIs) |

| 2 | Scalability | Scales as a whole | Partial scaling | Per-service scaling |

| 3 | Deployment | Full redeploy needed | Complex due to ESB | Independent deployments |

| 4 | Tech Stack | Limited to one language | Mixed, but constrained | Fully polyglot |

Real-World Use Cases

🛒 E-Commerce (Amazon, Shopee)

Amazon migrated from a monolith to microservices to handle **Prime Day traffic surges**.

Shopee uses microservices for **real-time inventory updates**.

🎵 Streaming (Netflix, Spotify)

Netflix's recommendation engine runs as an independent microservice.

Spotify uses microservices for personalized playlists.

🏦 FinTech (PayPal, Revolut)

PayPal processes millions of transactions daily using microservices.

Revolut's fraud detection runs as a separate service.

Best Practices for Implementing Microservices

Start Small, Then Scale

Begin with one or two services before full adoption.

Use Containers & Orchestration

Docker for containerization, Kubernetes for orchestration.

Implement API Gateways

Kong, Apigee, or AWS API Gateway manage routing, load balancing, and security.

Adopt DevOps & CI/CD

GitLab CI, Jenkins, GitHub Actins automate testing and deployment.

Monitor & Log Everything

Prometheus (metrics), ELK Stack (logs), Grafana (dashboards).

Conclusion

Microservices represent a paradigm shift in software architecture, offering scalability, flexibility, and resilience that monolithic systems cannot match. While they introduce complexity, the benefits−faster deployments, independent scaling, and fault tolerance−make them indispensable for modern cloud-native applications.

Java Microservices - Environment Setup

This chapter will guide you on how to prepare a development environment to start your work with Java Based Microservices. It will also teach you how to set up JDK, Maven and STS on your machine before you set up Spring Boot Framework for Microservices −

Step 1 - Setup Java Development Kit (JDK)

You can download the latest version of SDK from Oracle's Java site − Java SE Downloads. You will find instructions for installing JDK in downloaded files, follow the given instructions to install and configure the setup. Finally set PATH and JAVA_HOME environment variables to refer to the directory that contains java and javac, typically java_install_dir/bin and java_install_dir respectively.

If you are running Windows and have installed the JDK in C:\Program Files\Java\jdk-21, you would have to put the following line in your C:\autoexec.bat file.

set PATH=C:\Program Files\Java\jdk-21;%PATH% set JAVA_HOME=C:\Program Files\Java\jdk-21

Alternatively, on Windows NT/2000/XP, you will have to right-click on My Computer, select Properties → Advanced → Environment Variables. Then, you will have to update the PATH value and click the OK button.

On Unix (Solaris, Linux, etc.), if the SDK is installed in /usr/local/jdk-21 and you use the C shell, you will have to put the following into your .cshrc file.

setenv PATH /usr/local/jdk-21/bin:$PATH setenv JAVA_HOME /usr/local/jdk-21

Alternatively, if you use an Integrated Development Environment (IDE) like Borland JBuilder, Eclipse, IntelliJ IDEA, or Sun ONE Studio, you will have to compile and run a simple program to confirm that the IDE knows where you have installed Java. Otherwise, you will have to carry out a proper setup as given in the document of the IDE.

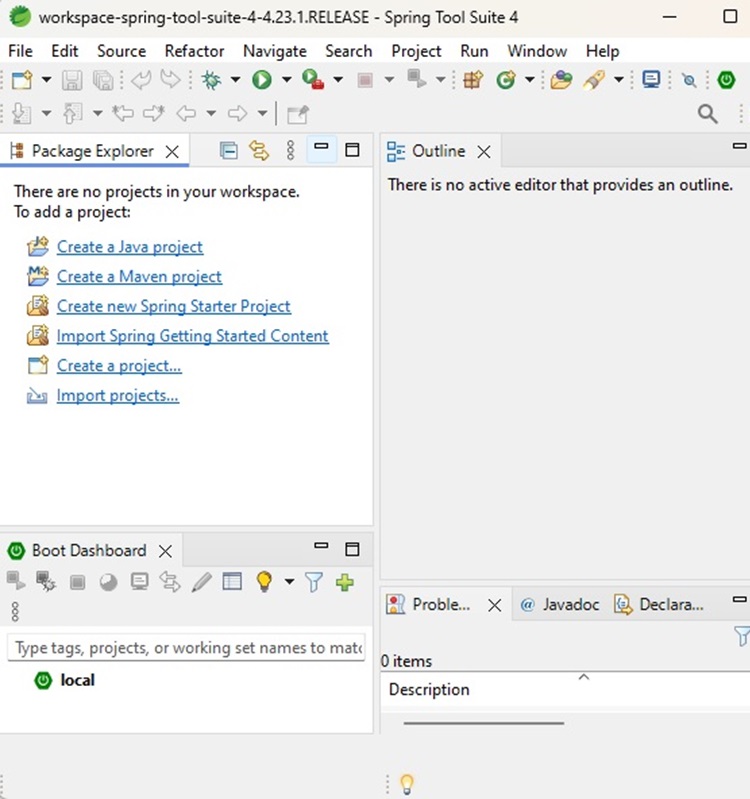

Step 2 - Setup Spring Tool Suite

All the examples in this tutorial have been written using Spring Tool Suite. So we would suggest you should have the latest version of Spring Tool Suite installed on your machine.

To install Spring Tools IDE, download the latest Spring Tools binaries from https://spring.io/tools. Once you download the installation, unpack the binary distribution into a convenient location. For example, in C:\sts on Windows, or /usr/local/sts on Linux/Unix and finally set PATH variable appropriately.

String Tool Suite can be started by executing the following commands on Windows machine, or you can simply double-click on eclipse.exe

%C:\sts\SpringToolSuite4.exe

SpringToolSuite4 can be started by executing the following commands on Unix (Solaris, Linux, etc.) machine −

$/usr/local/sts/SpringToolSuite4

After a successful startup, if everything is fine then it should display the following result −

Step 3 - Download Maven Archive

Download Maven 3.9.8 from https://maven.apache.org/download.cgi.

| OS | Archive name |

|---|---|

| Windows | apache-maven-3.9.8-bin.zip |

| Linux | apache-maven-3.9.8-bin.tar.gz |

| Mac | apache-maven-3.9.8-bin.tar.gz |

Step 4 - Extract the Maven Archive

Extract the archive, to the directory you wish to install Maven 3.9.8. The subdirectory apache-maven-3.9.8 will be created from the archive.

| OS | Location (can be different based on your installation) |

|---|---|

| Windows | C:\Program Files\Apache\apache-maven-3.9.8 |

| Linux | /usr/local/apache-maven |

| Mac | /usr/local/apache-maven |

Step 5 - Set Maven Environment Variables

Add M2_HOME, M2, MAVEN_OPTS to environment variables.

| OS | Output |

|---|---|

| Windows |

Set the environment variables using system properties. M2_HOME=C:\Program Files\Apache\apache-maven-3.9.8 M2=%M2_HOME%\bin MAVEN_OPTS=-Xms256m -Xmx512m |

| Linux |

Open command terminal and set environment variables. export M2_HOME=/usr/local/apache-maven/apache-maven-3.9.8 export M2=$M2_HOME/bin export MAVEN_OPTS=-Xms256m -Xmx512m |

| Mac |

Open command terminal and set environment variables. export M2_HOME=/usr/local/apache-maven/apache-maven-3.9.8 export M2=$M2_HOME/bin export MAVEN_OPTS=-Xms256m -Xmx512m |

Step 6 - Add Maven bin Directory Location to System Path

Now append M2 variable to System Path.

| OS | Output |

|---|---|

| Windows | Append the string ;%M2% to the end of the system variable, Path. |

| Linux | export PATH=$M2:$PATH |

| Mac | export PATH=$M2:$PATH |

Step 7 - Verify Maven Installation

Now open console and execute the following mvn command.

| OS | Task | Command |

|---|---|---|

| Windows | Open Command Console | c:\> mvn --version |

| Linux | Open Command Terminal | $ mvn --version |

| Mac | Open Terminal | machine:~ joseph$ mvn --version |

Finally, verify the output of the above commands, which should be as follows −

| OS | Output |

|---|---|

| Windows |

Apache Maven 3.9.8 (36645f6c9b5079805ea5009217e36f2cffd34256) Maven home: C:\Program Files\Apache\apache-maven-3.9.8 Java version: 21.0.2, vendor: Oracle Corporation, runtime: C:\Program Files\Java\jdk-21 Default locale: en_IN, platform encoding: UTF-8 OS name: "windows 11", version: "10.0", arch: "amd64", family: "windows" |

| Linux |

Apache Maven 3.9.8 (36645f6c9b5079805ea5009217e36f2cffd34256) Java version: 21.0.2 Java home: /usr/local/java-current/jre |

| Mac |

Apache Maven 3.9.8 (36645f6c9b5079805ea5009217e36f2cffd34256) Java version: 21.0.2 Java home: /Library/Java/Home/jre |

Step 8 - Setup Postman

Postman can be installed in operating systems like Mac, Windows and Linux. It is basically an independent application which can be installed in the following ways −

Postman can be installed from the Chrome Extension (will be available only in Chrome browser).

It can be installed as a standalone application.

To download Postman as a standalone application in Windows, navigate to the following link https://www.postman.com/downloads/

For installation steps, you can visit our Postman Tutorial Page Postman - Environment Setup.

Java Microservices - Advantages of Using Spring Boot

In the fast-paced world of software development, Microservices Architecture has emerged as a powerful alternative to monolithic applications. It promotes the idea of developing single-purpose, loosely coupled services that can be deployed independently. Spring Boot, a project from the Spring ecosystem, is one of the most popular frameworks used to build microservices due to its simplicity, speed, and strong community support.

This chapter explores the key advantages of using Spring Boot to develop microservices, including its features, architecture support, tooling, and real-world applicability.

What is Spring Boot?

Spring Boot is an extension of the Spring framework that simplifies the setup and development of Spring-based applications. It minimizes boilerplate code, automates configuration, and promotes convention over configuration.

Spring Boot makes it easy to create stand-alone, production-grade Spring-based applications. - Spring IO

Key Features

Auto-configuration

Embedded servers (Tomcat, Jetty, Undertow)

Production-ready metrics and health checks

Minimal XML configuration

Spring Initializr and CLI tools

How Spring Boot Supports Microservices

Spring Boot, along with Spring Cloud, offers built-in support to develop resilient, scalable, and cloud-ready microservices.

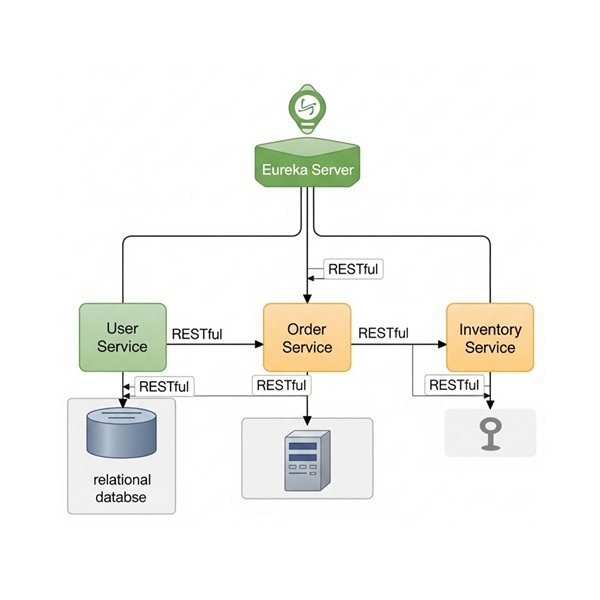

Microservices Architecture using Spring Boot

Advantages of Using Spring Boot in Microservices

Simplified Development

Spring Boot provides −

Pre-built templates and project structures (via Spring Initializr).

Auto-configuration based on classpath contents.

Minimal setup to get REST APIs running.

Example

With just a few annotations (@RestController, @SpringBootApplication), a microservice is ready.

@SpringBootApplication

public class InventoryServiceApplication {

public static void main(String[] args) {

SpringApplication.run(InventoryServiceApplication.class, args);

}

}

Embedded Web Servers

Spring Boot embeds web servers like Tomcat or Jetty, eliminating the need for external server deployment. This makes each microservice −

Self-contained

Easier to deploy in Docker containers or cloud environments

Seamless Integration with Spring Cloud

Spring Cloud provides extensions to Spring Boot that facilitate −

Service discovery (Eureka)

API gateway (Spring Cloud Gateway)

Load balancing (Cloud Loadbalancer)

Circuit breakers (Resilience4j)

Config server (Spring Config Server)

All these integrations are minimal-code and declarative.

Rapid Bootstrapping with Spring Initializr

https://start.spring.io provides a UI and API to generate Spring Boot microservices with −Preselected dependencies (e.g., Web, JPA, Actuator)

Maven or Gradle configuration

Java/Kotlin/Groovy language support

This accelerates development and ensures consistency.

Built-in Monitoring with Spring Boot Actuator

Spring Boot Actuator offers endpoints like −

/health

/metrics

/info

These endpoints integrate well with Prometheus, Grafana, or ELK stack, providing real-time monitoring and health checks for microservices.

Easy Testing and Mocking

Spring Boot provides test annotations −

@SpringBootTest

@WebMvcTest

@DataJpaTest

It also supports −

MockMVC for REST controllers

Testcontainers for Docker-based integration tests

Docker & Cloud-Native Friendly

Spring Boot jars are −

Self-contained − Easily deployable in Docker.

Portable − Can be moved to Kubernetes clusters, AWS ECS, Azure Containers, etc.

Dockerfile Example −

FROM openjdk:17 ADD target/inventory-service.jar app.jar ENTRYPOINT ["java", "-jar", "/app.jar"]

Spring Boot and DevOps Pipelines

Spring Boot integrates well with CI/CD tools −

Jenkins

GitHub Actions

GitLab CI/CD

Automated testing, packaging, and deployment are straightforward.

Case Study - E-Commerce Microservices

Services −

Product Service

Order Service

Payment Service

Notification Service

Using Spring Boot −

Each service uses REST or messaging (RabbitMQ/Kafka)

Configuration is centralized via Spring Cloud Config

Eureka handles service discovery

Gateway provides a unified API interface

Java Microservices - Domain Driven Design

Introduction to Domain-Driven Design (DDD)

Domain-Driven Design (DDD), introduced by Eric Evans in his 2003 book, is a software design approach that focuses on modelling business domains and aligning software architecture with business needs.

In microservices, DDD helps −

Break down complex business domains into smaller, manageable services.

Define clear boundaries between services (Bounded Contexts).

Improve collaboration between developers and domain experts.

Why Use DDD in Microservices?

Microservices require loose coupling and high cohesion, which DDD facilitates by −

Preventing Anaemic Domain Models (services with no business logic).

Avoiding Big Ball of Mud (monolithic-like interdependencies).

Improving Scalability by isolating domain logic.

Enabling Autonomous Teams (each team owns a domain).

Example - E-Commerce System

Without DDD

A single "OrderService" handling payments, inventory, and shipping → tight coupling.

With DDD

Separate Order Service, Payment Service, Inventory Service → clear domain boundaries.

Core Concepts of Domain-Driven Design

Bounded Context

A well-defined boundary where a domain model applies.

Each microservice should align with one Bounded Context.

Example

Order Context − Manages order creation, status.

Shipping Context − Handles logistics, tracking.

Ubiquitous Language

A shared vocabulary between developers and business experts.

Avoids miscommunication (e.g., "customer" vs. "user").

Domain Models

| Sr.No. | Concept | Description | Example |

|---|---|---|---|

| 1 | Entity | Unique identity (e.g., 'Order' with 'orderId'). | Customer(id, name, email) |

| 2 | Value Object | No identity, immutable (e.g., 'Address'). | Money(amount, currency) |

| 3 | Aggregate | A cluster of related objects (e.g., 'Order' + 'OrderItems') | Order (root) → OrderLineItems |

Implementing DDD in Microservices

Service Decomposition by Domain

Each microservice = one Bounded Context.

Example −

User Service (handles authentication, profiles).

Order Service (order lifecycle).

Inventory Service (stock management).

Event Storming

A workshop technique to identify domain events.

Example −

'OrderPlaced' → 'PaymentProcessed' → 'InventoryUpdated'.

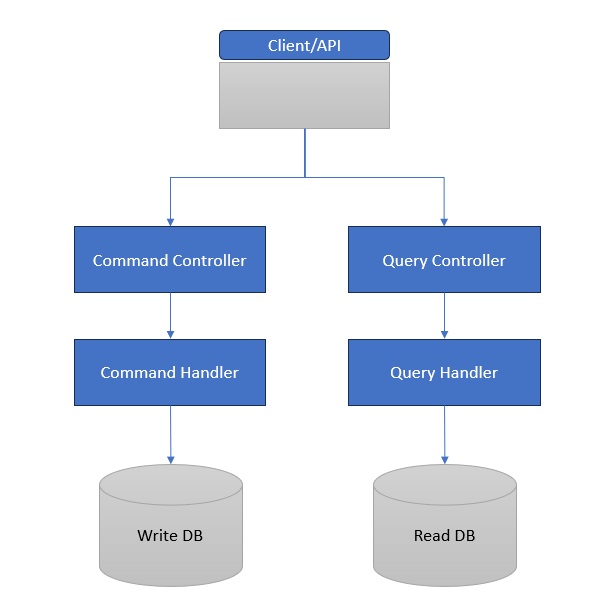

CQRS (Command Query Responsibility Segregation)

Separates reads (Queries) and writes (Commands).

Example −

Command Side − 'CreateOrder()' (writes to DB).

Query Side − 'GetOrderHistory()' (reads from a read-optimized DB).

Event Sourcing

Stores state changes as events (not just current state).

Example −

Instead of updating 'OrderStatus', log − '1. OrderCreated → 2. OrderPaid → 3. OrderShipped'.

Challenges & Best Practices

Challenges

Complexity − DDD requires deep domain understanding.

Over-Engineering − Not all systems need DDD.

Eventual Consistency − Microservices may have delayed sync.

Best Practices

Start Small − Apply DDD only to complex domains.

Use Domain Events − For inter-service communication.

Leverage Tools − Axon Framework, Spring Modulith.

Case Study: DDD in a Real-World Microservices System

Company − A large e-commerce platform.

Problem − Monolith struggling with scaling orders and inventory.

Solution

Identified "Bounded Contexts" (Orders, Payments, Inventory).

Applied "Event Storming" to define workflows.

Used CQRS for fast order history queries.

Result

40% faster order processing.

Better team autonomy.

Conclusion

Domain-Driven Design is powerful but not a silver bullet. When applied correctly in microservices it −

Improves maintainability.

Aligns tech with business needs.

Reduces coupling between services.

Java Microservices - Decomposition by Business Capability

Introduction

Microservices architecture enables the development of complex systems as a suite of independently deployable, modular services. One of the most critical aspects of microservices design is how to decompose a large application into smaller, manageable services. This article focuses on a key decomposition strategy: Decomposition by Business Capability.

This pattern emphasizes splitting services based on business domains rather than technical layers, promoting better alignment with organizational structures, product thinking, and scalability.

What Is Decomposition in Microservices?

In a microservices system, decomposition refers to the act of breaking down a monolithic application into independently deployable units (microservices). Each unit should have −

A well-defined boundary

Autonomy over its data and logic

A clear business purpose

Poor decomposition can lead to tightly coupled services, redundancy, and operational inefficiencies.

Understanding Business Capability

A business capability is something that the business does or needs to do to achieve its objectives. It is −

Stable over time

Independent from organizational changes

Often modeled using Domain-Driven Design (DDD)

Examples of Business Capabilities

| Sr.No. | Business Domain | Business Capabilities |

|---|---|---|

| 1 | E-commerce | Order Management, Payments, Customer Service |

| 2 | Banking | Account Management, Loans, Risk Analysis |

| 3 | Healthcare | Patient Records, Appointments, Billing |

Pattern − Decomposition by Business Capability

Definition

Decomposition by business capability is a microservices design pattern that organizes services around what the business does, not how the software is technically layered.

Core Principle

Each microservice corresponds to a single business capability, becoming the owner of all data and logic related to that capability.

Benefits of Decomposition by Business Capability

| Sr.No. | Benefit | Description |

|---|---|---|

| 1 | High cohesion | Services are focused and internally consistent. |

| 2 | Loose coupling | Independent deployment and scalability. |

| 3 | Clear ownership | Easier to assign to teams (Team-Service alignment). |

| 4 | Faster development | Services evolve independently without breaking other components. |

| 5 | Better DDD alignment | Ties naturally with DDD's Bounded Contexts. |

Applying the Pattern: A Case Study

Scenario: Building an Online Retail Platform

Monolith Capabilities

User management

Product catalog

Order management

Payment processing

Decomposed Microservices

| Sr.No. | Microservice | Business Capability |

|---|---|---|

| 1 | user-service | User registration, profiles |

| 2 | product-service | Product listings, categories |

| 3 | order-service | Cart, checkout, orders |

| 4 | payment-service | Payment processing |

Step-by-Step Implementation (Spring Boot)

We'll use Spring Boot to demonstrate decomposition by business capability.

Create Individual Services.

user-service – User Capability

UserController.java

@RestController

@RequestMapping("/users")

public class UserController {

@GetMapping("/{id}")

public String getUser(@PathVariable String id) {

return "User profile for ID: " + id;

}

}

product-service – Product Capability

ProductController.java

@RestController

@RequestMapping("/products")

public class ProductController {

@GetMapping("/{id}")

public String getProduct(@PathVariable String id) {

return "Product details for ID: " + id;

}

}

order-service – Order Capability

OrderController.java

@RestController

@RequestMapping("/orders")

public class OrderController {

@PostMapping("/")

public String placeOrder(@RequestBody String orderData) {

return "Order placed successfully";

}

}

payment-service – Payment Capability

PaymentController.java

@RestController

@RequestMapping("/payments")

public class PaymentController {

@PostMapping("/")

public String makePayment(@RequestBody String paymentData) {

return "Payment successful";

}

}

Each service is an isolated Spring Boot application, deployed independently, with its own database.

Communication Between Business Capabilities

Inter-service communication is done via REST or asynchronous messaging.

REST Example from Order to Payment

@Autowired

private RestTemplate restTemplate;

public String callPaymentService() {

return restTemplate.postForObject("http://payment-service/payments", new Payment(), String.class);

}

Integration with Domain-Driven Design (DDD)

Decomposition by business capability is closely aligned with DDD's Bounded Context.

Bounded Context Example

ProductContext → product-service

CustomerContext → user-service

OrderContext → order-service

Each service is a self-contained model and is responsible for its own aggregates, entities, and repositories.

Database Design per Capability

Each microservice manages its own database. This ensures −

Loose coupling

Independent schema evolution

Avoidance of shared database anti-pattern

Example

| Sr.No. | Service | Table |

|---|---|---|

| 1 | user-service | Users |

| 2 | product-service | products, categories |

| 3 | order-service | orders, order_items |

Challenges in This Pattern

| Sr.No. | Challenge | Description |

|---|---|---|

| 1 | Data consistency | No distributed transactions; must use eventual consistency |

| 2 | Cross-cutting concerns | Logging, auth, monitoring must be centralized |

| 3 | Service granularity confusion | Too fine-grained = overhead; too coarse = mini-monolith |

| 4 | Initial complexity | More moving parts to manage compared to monolith |

Real-World Examples

| Sr.No. | Company | Business Capability-based Microservices |

|---|---|---|

| 1 | Amazon | Order, Inventory, Delivery, Payment |

| 2 | Netflix | Playback, Recommendations, Membership |

| 3 | Uber | Ride Booking, Payments, Driver Management |

These companies structure services around business functions, not technical tiers.

Conclusion

Decomposition by Business Capability is one of the most effective strategies for structuring microservices. It helps design systems that are −

Modular and scalable

Aligned with business goals

Easy to manage and evolve

This pattern provides a strong foundation for team autonomy, agile development, and cloud-native deployment.

Java Microservices - Decomposition by Subdomain

Introduction

Modern software systems must evolve quickly, scale independently, and remain robust in the face of change. Microservices architecture provides a foundation for these requirements by breaking down applications into independent services.

However, how we decompose a system is critical. A poor decomposition can lead to tight coupling, poor scalability, and development friction. Among the various decomposition strategies, "Decomposition by Subdomain" − driven by Domain-Driven Design (DDD) − stands out as one of the most effective and sustainable methods.

This article explores the Decomposition by Subdomain pattern in microservices, its rationale, implementation approach, and real-world applications using Spring Boot.

What is Decomposition by Subdomain?

Definition

Decomposition by subdomain is a microservices design pattern that breaks a system into services based on domain substructures called subdomains, identified through Domain-Driven Design (DDD).

Instead of organizing services by technical functions (like DAO, controllers), we organize them by business function areas such as−

Customer Management

Billing

Inventory

Shipping

Each subdomain becomes a bounded context, which maps directly to a microservice.

Benefits of Decomposition by Subdomain

| Sr.No. | Benefit | Explanation |

|---|---|---|

| 1 | High Cohesion | Services handle a specific, focused domain task |

| 2 | Loosely Coupled Services | Minimizes dependencies between services |

| 3 | Aligned to Business Goals | Improves communication between technical and business teams |

| 4 | Supports Team Autonomy | Teams can own and evolve services independently |

| 5 | Easier Maintenance | Smaller, focused services are easier to debug and test |

Identifying Subdomains: A Case Study

Let's consider an online learning platform like Coursera.

Business Capabilities

User Registration

Course Catalog

Enrollment & Payment

Content Delivery

Certification

Decomposed Subdomains

| Sr.No. | Subdomain | Microservice |

|---|---|---|

| 1 | Identity & Access | auth-service |

| 2 | Course Management | course-service |

| 3 | Payment & Enrollment | enrollment-service |

| 4 | Video Streaming | streaming-service |

| 5 | Certificate Issuance | certification-service |

Implementing the Pattern Using Spring Boot

We'll illustrate with two subdomains: Course Management and Enrollment.

Course-Service (Core Subdomain)

Responsibilities

Manage course creation, categories, metadata.

CourseController.java

@RestController

@RequestMapping("/courses")

public class CourseController {

@GetMapping("/{id}")

public String getCourse(@PathVariable String id) {

return "Course info for ID: " + id;

}

@PostMapping("/")

public String createCourse(@RequestBody Course course) {

return "Course created: " + course.getTitle();

}

}

application.yml

spring:

application:

name: course-service

server:

port: 8081

Enrollment-Service (Core Subdomain)

Responsibilities

Manage student enrollment and payment status.

EnrollmentController.java

@RestController

@RequestMapping("/enrollments")

public class EnrollmentController {

@PostMapping("/")

public String enroll(@RequestBody Enrollment enrollment) {

return "Student enrolled in course ID: " + enrollment.getCourseId();

}

}

application.yml

spring:

application:

name: enrollment-service

server:

port: 8082

Manage student enrollment and payment status.

Each service has −

Its own data model

Database

And communicates via REST or asynchronous events.

Communicating Across Subdomains

Subdomain-based services often need to interact.

REST Call (Synchronous)

enrollment-service calls course-service to validate a course −

@Autowired

private RestTemplate restTemplate;

public String getCourse(String id) {

return restTemplate.getForObject("http://course-service/courses/" + id, String.class);

}

Event-Driven (Asynchronous)

Using Kafka or RabbitMQ for loose coupling −

course-service emits CourseCreatedEvent.

enrollment-service listens and updates its cache.

Aligning Subdomains with Bounded Contexts

Subdomain decomposition often aligns with bounded contexts in DDD.

Bounded Context − A logical boundary where a particular domain model is defined and applicable.

This allows −

Unique data models

Different vocabularies

Clear API boundaries

Example

course-service uses CourseEntity

enrollment-service uses CourseView (DTO)

This prevents leaky abstractions and supports data autonomy.

Subdomain Database Design

Each service/subdomain must own its data.

Microservice DB Ownership

| Sr.No. | Service | Tables |

|---|---|---|

| 1 | course-service | courses, categories |

| 2 | enrolment-service | enrolments, students |

| 3 | auth-service | users, roles, permissions |

No shared schemas or cross-database joins.

For queries across services: use data replication, event-driven updates, or API composition.

Best Practices and Considerations

| Sr.No. | Best Practice | Tables |

|---|---|---|

| 1 | Use domain modeling | Deeply understand the business language |

| 2 | Keep bounded contexts separate | Avoid accidental coupling |

| 3 | Implement shared contracts | Use OpenAPI or shared message formats |

| 4 | Ensure services work together | Use Event Storming or DDD modeling |

| 5 | Use observability tools | Monitor interactions (e.g., Sleuth, Zipkin, Prometheus) |

Real-World Example: Netflix

Netflix decomposes by subdomain−

| Sr.No. | Subdomain | Service Name |

|---|---|---|

| 1 | Playback | video-stream-service |

| 2 | Recommendation | reco-engine-service |

| 3 | Account Management | account-service |

| 4 | Billing | billing-service |

Each team owns one or more subdomains and releases features independently.

Challenges and How to Address Them

| Sr.No. | Challenge | Solution |

|---|---|---|

| 1 | Data consistency | Use eventual consistency + sagas or event sourcing |

| 2 | Duplication of logic/data | Keep services independent, use APIs to sync |

| 3 | Complexity of orchestration | Use orchestration (e.g., Netflix Conductor) or choreography |

| 4 | Domain boundaries unclear | Use Event Storming or DDD modeling |

Conclusion

Decomposition by Subdomain is a powerful pattern that promotes −

Business-aligned services

Autonomous development teams

Scalable and maintainable architecture

It fosters long-term agility by structuring software based on what the business actually does, not just on technology or project constraints.

With proper modeling, tooling, and communication strategies, subdomain decomposition leads to systems that are easier to build, grow, and maintain.

Java Microservices - Backend for Frontend

Microservices architectures offer modularity, scalability, and development agility. But they also introduce new challenges in client-to-service interactions, particularly when multiple clients-such as web apps, mobile apps, and IoT devices-consume backend services differently. The Backend for Frontend (BFF) pattern solves this problem by introducing a customized backend layer for each type of frontend. This article explores the BFF pattern in depth, from its motivation and benefits to its implementation using Spring Boot.

The Challenge with Shared Backends

Let's consider a monolithic or centralized API that serves all clients (web, mobile, desktop). Problems often include −

Over-fetching or under-fetching data

Heavy payloads sent to mobile devices

diverse authentication requirements

Frontend-specific transformations polluting backend logic

Example

| Sr.No. | Frontend | Requirement |

|---|---|---|

| 1 | Web | Full product details + reviews |

| 2 | Mobile | Minimal product summary |

| 3 | SmartWatch | Only product name + price |

A one-size-fits-all backend is suboptimal. You either over-engineer APIs or add complex branching logic in the frontend or backend.

What is the Backend for Frontend (BFF) Pattern?

Definition

Backend for Frontend (BFF) is a microservices design pattern where each type of client gets its own dedicated backend layer that interacts with downstream services and tailors the response specifically for that frontend.

Origin

Coined by Sam Newman, the BFF pattern is widely used in companies like Netflix, Amazon, and Spotify to streamline frontend-backend interactions.

Architecture Overview

Each frontend has its own BFF that −

Aggregates and formats data

Performs client-specific logic

Secures and optimizes communication

Benefits of BFF Pattern

| Sr.No. | Benefit | Description |

|---|---|---|

| 1 | Client-specific APIs | Serve just what the client needs-no more, no less |

| 2 | Reduced frontend logic | Frontend doesn't need to transform or combine data |

| 3 | Better performance | Smaller, optimized payloads for mobile, watches, etc |

| 4 | Simplified backend services | Backend microservices stay generic and reusable |

| 5 | Team autonomy | Separate BFFs allow independent teams for each frontend |

| 6 | Security boundary | Frontends don't directly call internal services |

Real-World Example: E-commerce Platform

Core Microservices

product-service

review-service

inventory-service

user-service

Clients

Web app

Mobile app

BFF Setup

| Sr.No. | BFF | Functions |

|---|---|---|

| 1 | Web BFF | Combines product + reviews + inventory |

| 2 | Mobile BFF | Returns product summary + price only |

BFF Implementation Using Spring Boot

Let's implement two BFFs using Spring Boot: one for Web and one for Mobile.

product-service (Downstream Service)

ProductController.java

@RestController

@RequestMapping("/products")

public class ProductController {

@GetMapping("/{id}")

public Product getProduct(@PathVariable String id) {

return new Product(id, "iPhone 15", "High-end smartphone", 1299.99);

}

}

Web BFF

WebProductController.java

@RestController

@RequestMapping("/web/products")

public class WebProductController {

@Autowired

private RestTemplate restTemplate;

@GetMapping("/{id}")

public Map<String, Object> getFullProduct(@PathVariable String id) {

Product product = restTemplate.getForObject("http://localhost:8081/products/" + id, Product.class);

Map<String, Object> response = new HashMap<>();

response.put("name", product.getName());

response.put("description", product.getDescription());

response.put("price", product.getPrice());

response.put("reviews", List.of("Great phone!", "Excellent display"));

return response;

}

}

Mobile BFF

MobileProductController.java

@RestController

@RequestMapping("/mobile/products")

public class MobileProductController {

@Autowired

private RestTemplate restTemplate;

@GetMapping("/{id}")

public Map<String, Object> getProductSummary(@PathVariable String id) {

Product product = restTemplate.getForObject("http://localhost:8081/products/" + id, Product.class);

Map<String, Object> response = new HashMap<>();

response.put("name", product.getName());

response.put("price", product.getPrice());

return response;

}

}

Note − In production, you'd use service discovery, circuit breakers, caching, and load balancing.

Key Responsibilities of a BFF

| Sr.No. | Responsibility | Why It's Important |

|---|---|---|

| 1 | API Composition | Aggregate results from multiple services |

| 2 | Payload Optimization | Tailor response size and shape |

| 3 | Security Layer | Token validation, OAuth2 flow |

| 4 | Session Handling | Manage session tokens, cookies |

| 5 | Error Handling | Convert internal errors to frontend-appropriate messages |

| 6 | Caching | Apply client-specific caching strategies |

Best Practices

Do:

Create one BFF per frontend (not per team)

Keep BFF logic frontend-specific, not business-specific

Apply rate limiting and auth at BFF layer

Use open APIs internally for microservice communication

Keep BFFs lightweight and stateless

Don't:

Overload BFFs with business logic

Reuse a single BFF for all frontends

Hard-code service URLs (use discovery mechanisms)

Ignore observability and monitoring

Tools and Frameworks

| Sr.No. | Concern | Tools |

|---|---|---|

| 1 | Framework | Spring Boot, Node.js |

| 2 | API Gateway | Spring Cloud Gateway, NGINX |

| 3 | Auth | OAuth2, JWT, Keycloak |

| 4 | Service Discovery | Eureka, Consul |

| 5 | Monitoring | Prometheus, Grafana, ELK |

When Should You Use BFF Pattern?

Ideal When −

Multiple frontends (mobile, web, IoT)

Different data requirements per frontend

Need for optimized client-server communication

Complex aggregation logic required

Security concerns restrict frontend access to backend

Not Ideal If −

Single frontend

Simple system with flat data requirements

Real-World Companies Using BFF

| Sr.No. | Company | Use Case |

|---|---|---|

| 1 | Netflix | Mobile, TV, web apps-each with separate BFFs for performance |

| 2 | Spotify | Separate APIs for mobile and desktop clients with custom features |

| 3 | Amazon | Web and Alexa clients using different response models and BFFs |

Challenges and Mitigation

| Sr.No. | Challenge | Solution |

|---|---|---|

| 1 | Duplicate logic in BFFs | Share common libraries or move to shared microservices |

| 2 | Increased deployment units | Automate CI/CD pipelines |

| 3 | Versioning across BFFs | Use semantic versioning or independent endpoints |

| 4 | Security complexities | Centralize auth logic via API Gateway or shared library |

Conclusion

The Backend for Frontend pattern is a smart strategy to tailor backend communication for different frontend clients. By implementing a dedicated BFF for each frontend, you can−

Optimize performance

Improve user experience

Simplify frontend development

Maintain backend service purity

When used correctly, BFF enhances the agility, modularity, and maintainability of microservices-based systems.

Java Microservices - The Strangler Pattern

Introduction

One of the most challenging tasks in modern software architecture is migrating legacy monolithic systems to microservices without causing service disruptions or rewriting the entire application from scratch. This is where the Strangler Pattern proves invaluable.

Inspired by the way strangler fig trees grow-by slowly enveloping and replacing their host trees-the Strangler Pattern enables a gradual and safe migration. This article explores the pattern in-depth, including its purpose, structure, benefits, challenges, and implementation using Spring Boot.

The Need for the Strangler Pattern

Common Legacy Problems

Difficult to scale monoliths horizontally

High risk and cost in making changes

Long build and deployment times

Technology obsolescence

Poor modularization and code ownership

A complete rewrite of a monolithic system is −

Risky

Expensive

Often unsuccessful due to scope creep

Solution

Strangler Pattern allows for incremental replacement −

Develop new functionality as microservices

Gradually extract old components

Redirect traffic progressively

Retire monolith module by module

What is the Strangler Pattern?

Definition

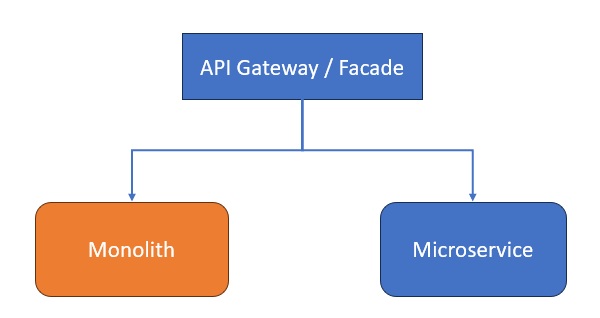

The Strangler Pattern is a migration strategy that incrementally replaces legacy components by building a facade that routes requests to either the old monolith or the new microservices.

Over time, as microservices take over more responsibilities, the monolith becomes obsolete and can be decommissioned.

Origin

Named by Martin Fowler, inspired by how the strangler fig overtakes host trees over time.

Key Components of the Strangler Pattern

| Sr.No. | Component | Role |

|---|---|---|

| 1 | Facade Layer | Routes incoming requests to monolith or microservices |

| 2 | Legacy Monolith | Existing application codebase |

| 3 | Microservices | New components replacing monolith parts |

| 4 | Routing Logic | Determines where each request should go |

| 5 | Monitoring Tools | Ensure proper behavior during migration |

Diagram: Strangler Pattern in Action

API Gateway forwards requests based on route mappings.

Requests for newer functionality go to microservices.

Legacy requests go to the monolith.

Real-World Use Case

Scenario: Legacy E-commerce Platform

Monolith Responsibilities

Product Catalog

Cart & Checkout

Payments

Order History

Migration Goal

Refactor into microservices

product-service

checkout-service

payment-service

Approach

Facade − Introduce Spring Cloud Gateway as the entry point.

Route old product-related endpoints to monolith.

Route new checkout/payment endpoints to new services.

Gradually migrate and remove old endpoints.

Step-by-Step Implementation Using Spring Boot

Introduce a Gateway (Strangling Point)

Facade − Introduce Spring Cloud Gateway as the entry point.

Route old product-related endpoints to monolith.

Route new checkout/payment endpoints to new services.

Gradually migrate and remove old endpoints.

Use Spring Cloud Gateway −

pom.xml

<dependency> <groupId>org.springframework.cloud</groupId> <artifactId>spring-cloud-starter-gateway</artifactId> </dependency>

application.yml

spring:

application:

name: api-gateway

cloud:

gateway:

routes:

- id: monolith-service

uri: http://localhost:8080

predicates:

- Path=/products/**, /cart/**

- id: checkout-service

uri: http://localhost:8081

predicates:

- Path=/checkout/**

- id: payment-service

uri: http://localhost:8082

predicates:

- Path=/payment/**

Keep Monolith Intact (Initially)

No code changes in the monolith are needed immediately.

Develop Microservices (e.g., Checkout)

CheckoutController.java

@RestController

@RequestMapping("/checkout")

public class CheckoutController {

@PostMapping("/")

public String checkout(@RequestBody CheckoutRequest req) {

return "Checked out cart ID: " + req.getCartId();

}

}

application.yml (checkout-service)

server:

port: 8081

spring:

application:

name: checkout-service

Gradual Migration

Redirect /checkout to new service

Extract logic for /cart next

Replace /products as last step

Each move is low risk

Advantages of the Strangler Pattern

| Sr.No. | Benefit | Description |

|---|---|---|

| 1 | Incremental Migration | Safely move piece-by-piece to microservices |

| 2 | Reduced Risk | Avoids "big bang" rewrites |

| 3 | Easier Debugging | Only part of the system changes at any time |

| 4 | Reuses Existing Features | Keeps old monolith alive until no longer needed |

| 5 | Supports Parallel Dev | Teams can build new modules while legacy still runs |

Challenges and Solutions

| Sr.No. | Challenge | Solution |

|---|---|---|

| 1 | Routing Complexity | Use Spring Cloud Gateway / Istio for traffic control |

| 2 | Inconsistent Data Models | Use event-driven sync or API composition |

| 3 | Monolith Coupling | Use facade to abstract internals; slowly decouple modules |

| 4 | Dual Maintenance Effort | Keep migration short-lived per module |

| 5 | Authentication Integration | Centralize with OAuth2 / JWT and shared identity provider |

Tools and Technologies for Strangler Pattern

| Sr.No. | Purpose | Tools |

|---|---|---|

| 1 | Routing / Gateway | Spring Cloud Gateway, Istio, NGINX |

| 2 | Service Discovery | Eureka, Consul |

| 3 | Asynchronous Events | Kafka, RabbitMQ |

| 4 | Observability | Sleuth, Zipkin, Prometheus |

| 5 | CI/CD | Jenkins, GitLab CI/CD |

Real-World Example: Amazon

Amazon moved from a monolithic system in the early 2000s to thousands of microservices by −

Introducing API gateways

Migrating single features at a time

Using service ownership by small autonomous teams

Strangler Pattern helped ensure uninterrupted service during their evolution.

When to Use the Strangler Pattern

Use When −

You want minimal risk migration

You must maintain availability

You don't have budget or time for rewrites

The monolith is too large for a full refactor

Avoid If −

The system is small and simple

Conclusion

The Strangler Pattern is a powerful and pragmatic approach to incrementally migrating legacy monolithic systems to modern microservice architectures.

By placing a routing layer between consumers and services, teams can −

Gradually introduce new microservices

Retire legacy components step-by-step

Minimize risk and maximize business continuity

This pattern reduces technical debt progressively and supports long-term modernization efforts, making it one of the most practical patterns in the microservices transition toolkit.

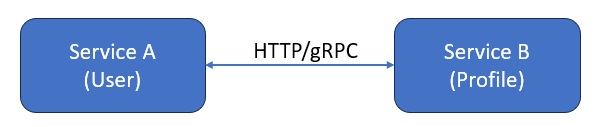

Java Microservices - Synchronous Communication (REST/gRPC)

Introduction

Microservices architecture involves breaking down applications into independently deployable, loosely coupled services. For these services to work cohesively, they must communicate with each other-either synchronously or asynchronously.

This article focuses on the Synchronous Communication pattern, where services interact in real time, expecting immediate responses. The two most widely used technologies for synchronous communication are −

REST (Representational State Transfer)

gRPC (Google Remote Procedure Call)

We will explore both in detail−understanding their use cases, trade-offs, implementation techniques, and how they compare.

What Is Synchronous Communication?

Definition

Synchronous communication in microservices refers to a communication pattern where one service sends a request to another and waits for a response before proceeding.

This is akin to traditional function calls: Service A calls Service B, and waits for the result to continue its execution.

Characteristics of Synchronous Communication

| Sr.No. | Feature | Description |

|---|---|---|

| 1 | Real-time interaction | The client waits until the response is received |

| 2 | Simple error handling | Built-in status codes, retries, and fallbacks |

| 3 | Tightly coupled timing | Both services must be available during communication |

| 4 | Serialization | Data is serialized into formats like JSON (REST) or Protobuf (gRPC) |

Why Use Synchronous Communication?

Ideal for −

Real-time data requirements (e.g., payments, user authentication)

CRUD operations (e.g., read user profile)

Predictable and consistent APIs

Not Ideal for −

High-volume or event-driven scenarios

Long-running processes

Systems requiring decoupling and fault tolerance

Technology Options

| Sr.No. | Protocol | Description | Common Usage |

|---|---|---|---|

| 1 | REST | HTTP-based API using JSON/XML | Web, mobile, HTTP clients |

| 2 | gRPC | Binary protocol over HTTP/2 using Protobuf | Internal microservices, low-latency systems |

Architecture Overview

Service A makes a synchronous request to Service B

Service B processes and responds instantly

If B fails, A must retry or handle the failure

REST-Based Synchronous Communication with Spring Boot

Project Setup

Dependencies (Maven)

<dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-web</artifactId> </dependency> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-webflux</artifactId> <!-- Optional for async REST --> </dependency>

Service B: Profile Service

@RestController

@RequestMapping("/profiles")

public class ProfileController {

@GetMapping("/{id}")

public Profile getProfile(@PathVariable String id) {

return new Profile(id, "Alice", "alice@example.com");

}

}

Service A: User Service (REST Client)

@Service

public class ProfileClient {

@Autowired

private RestTemplate restTemplate;

public Profile getProfile(String userId) {

return restTemplate.getForObject("http://profile-service/profiles/" + userId, Profile.class);

}

}

Enable LoadBalanced RestTemplate

@Bean

@LoadBalanced

public RestTemplate restTemplate() {

return new RestTemplate();

}

Configuration (application.yml)

spring:

application:

name: user-service

eureka:

client:

service-url:

defaultZone: http://localhost:8761/eureka

gRPC-Based Synchronous Communication in Spring Boot

Why gRPC?

Feature REST gRPC Format JSON / XML Protocol Buffers (binary) Performance Moderate Very high Streaming Limited Full-duplex supported Language Support Wide Also wide HTTP Version HTTP/1.1 HTTP/2gRPC is ideal for internal service communication requiring low latency.

Setup: Add gRPC Dependencies

Use yidongnan's Spring Boot starter for gRPC −

Maven

<dependency> <groupId>net.devh</groupId> <artifactId>grpc-server-spring-boot-starter</artifactId> <version>2.14.0.RELEASE</version> </dependency> <dependency> <groupId>net.devh</groupId> <artifactId>grpc-client-spring-boot-starter</artifactId> <version>2.14.0.RELEASE</version> </dependency>

Define Proto File

profile.proto

syntax = "proto3";

package profile;

service ProfileService {

rpc GetProfile (ProfileRequest) returns (ProfileResponse);

}

message ProfileRequest {

string userId = 1;

}

message ProfileResponse {

string userId = 1;

string name = 2;

string email = 3;

}

Compile with the Protobuf plugin to generate Java classes.

Implement the gRPC Server

@GrpcService

public class ProfileServiceImpl extends ProfileServiceGrpc.ProfileServiceImplBase {

@Override

public void getProfile(ProfileRequest request, StreamObserver<ProfileResponse> responseObserver) {

ProileResponse response = ProfileResponse.newBuilder()

.setUserId(request.getUserId())

.setName("Alice")

.setEmail("alice@example.com")

.build();

responseObserver.onNext(response);

responseObserver.onCompleted();

}

}

gRPC Client

@Service

public class ProfileGrpcClient {

@GrpcClient("profile-service")

private ProfileServiceGrpc.ProfileServiceBlockingStub stub;

public ProfileResponse getProfile(String userId) {

return stub.getProfile(ProfileRequest.newBuilder().setUserId(userId).build());

}

}

Synchronous Communication Best Practices

| Sr.No. | Practice | Description |

|---|---|---|

| 1 | Circuit Breakers | Use Resilience4j or Hystrix to avoid cascading failures |

| 2 | Timeouts | Set request timeouts to avoid hanging requests |

| 3 | Retries | Automatically retry transient failures |

| 4 | Load Balancing | Use Ribbon, Eureka, or Kubernetes for distributing traffic |

| 5 | Monitoring & Tracing | Use Sleuth, Zipkin, Prometheus for observability |

| 6 | Fallback Mechanisms | Provide alternative responses if a service fails |

Pros and Cons of Synchronous Communication

| Sr.No. | Pros | Cons |

|---|---|---|

| 1 | Simpler to implement and debug | Coupling in availability |

| 2 | Easier data consistency | Not suitable for large-scale, event-driven systems |

| 3 | Familiar request/response model | Latency increases with each network hop |

| 4 | Ideal for chained workflows | Prone to cascading failures |

Use Cases Comparison: REST vs. gRPC

| Sr.No. | Use Case | Recommended Approach |

|---|---|---|

| 1 | Internal microservice communication | gRPC (performance critical) |

| 2 | Mobile/Web communication | REST (browser/client friendly) |

| 3 | Streaming large datasets | gRPC with streaming |

| 4 | Public APIs | REST (easy integration) |

Real-World Example: Netflix

Netflix uses gRPC extensively for internal communications between services like recommendation engines and playback servers, due to its high performance and contract-first development.

However, for public APIs, Netflix still uses REST with GraphQL for client flexibility.

When to Use Synchronous Communication

Use When

Real-time responses are required

Workflow depends on sequential execution

Systems are under control in terms of scale

Avoid When

Services are frequently unavailable

High-volume traffic or long processing is involved

Decoupling and resilience are key priorities

Conclusion

Synchronous communication is a core pattern in microservices that enables real-time, request-response interaction between services. With REST and gRPC as the leading technologies, you can choose based on −

Performance needs (gRPC)

Interoperability (REST)

Use case complexity

For mission-critical, performance-sensitive applications, gRPC is highly effective. For client-facing and public APIs, REST remains the default choice.

Design your system based on communication patterns that align with business and technical requirements.

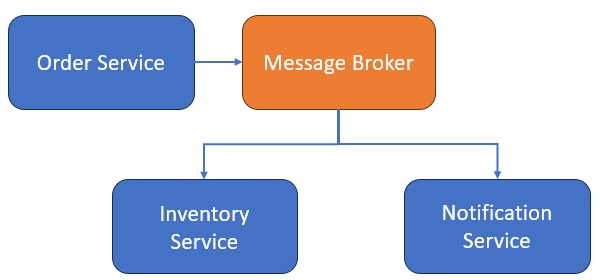

Java Microservices - Asynchronous Communication

Introduction

As microservices become more complex, their need for effective communication grows. Traditionally, services interact synchronously-one service calls another and waits for a response. However, this model can lead to tight coupling, reduced resilience, and latency issues.

To address these challenges, modern systems often rely on Asynchronous Communication, especially via Event-Driven Architecture (EDA). In this model, services publish and subscribe to events, enabling loose coupling, scalability, and high performance.

This article explores the asynchronous communication model using RabbitMQ and Apache Kafka, and demonstrates practical implementations using Spring Boot.

What is Asynchronous Communication?

Definition

Asynchronous communication is a pattern where services interact without waiting for a direct response. Messages or events are sent and received independently, typically via message brokers or event buses.

Characteristics

Non-blocking communication

Services don't need to be online simultaneously

Interaction via queues, topics, or streams

Enables event-driven workflows

Why Use Asynchronous Communication in Microservices?

Advantages

Example

| Sr.No. | Feature | Benefit |

|---|---|---|

| 1 | Loose Coupling | Services don't directly depend on each other |

| 2 | Resilience | Failures in one service don't cascade |

| 3 | Scalability | Easily scale consumers independently |

| 4 | Performance | No waiting for slow downstream responses |

| 5 | Decoupled Development | Teams can build services independently |

Common Use Cases

Order processing

Email notifications

Event sourcing

Payment workflows

Audit and logging

Architecture of Event-Driven Microservices

Key Components

| Sr.No. | Component | Role |

|---|---|---|

| 1 | Producer | Sends events (e.g., OrderPlaced) |

| 2 | Broker | Delivers events (RabbitMQ, Kafka, etc.) |

| 3 | Consumer | Subscribes to and processes events |

Diagram

Technologies for Asynchronous Communication

| Sr.No. | Tool | Description | Best Use Cases |

|---|---|---|---|

| 1 | RabbitMQ | Lightweight message broker using AMQP | Task queues, retry queues, real-time alerts |

| 2 | Kafka | Distributed event streaming platform | High-volume data, event sourcing, audit |

| 3 | ActiveMQ | Legacy support, JMS compatibility | Java-based systems |

| 4 | Amazon SNS/SQS | Managed messaging services | Cloud-native systems |

Asynchronous Communication with RabbitMQ and Spring Boot

Overview of RabbitMQ

RabbitMQ is a message queueing broker that supports multiple protocols, primarily AMQP. It uses exchanges, queues, and bindings.

Exchange − Routes messages

Queue − Stores messages until consumed

Binding − Connects exchanges to queues

Setup (Spring Boot)

Maven Dependencies−

<dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-amqp</artifactId> </dependency>

Producer Example: Order Service

@Service

public class OrderProducer {

@Autowired

private RabbitTemplate rabbitTemplate;

public void sendOrderEvent(Order order) {

rabbitTemplate.convertAndSend("order.exchange", "order.routingKey", order);

}

}

Configuration

@Configuration

public class RabbitMQConfig {

@Bean

public Queue orderQueue() {

return new Queue("order.queue", true);

}

@Bean

public DirectExchange exchange() {

return new DirectExchange("order.exchange");

}

@Bean

public Binding binding() {

return BindingBuilder

.bind(orderQueue())

.to(exchange())

.with("order.routingKey");

}

}

Consumer Example: Inventory Service

@Service

public class InventoryConsumer {

@RabbitListener(queues = "order.queue")

public void handleOrder(Order order) {

System.out.println("Processing inventory for order: " + order.getId());

}

}

Asynchronous Communication with Apache Kafka

Overview of Kafka

Apache Kafka is a distributed, fault-tolerant event streaming platform.

Producer− Publishes messages to a topic

Consumer− Subscribes to topic(s)

Broker− Manages topics and partitions

Topic− Logical stream of events

Setup (Spring Boot)

Maven Dependencies −

<dependency> <groupId>org.springframework.kafka</groupId> <artifactId>spring-kafka</artifactId> </dependency>

Producer Example: Order Service

@Service

public class KafkaOrderProducer {

@Autowired

private KafkaTemplate<String, Order> kafkaTemplate;

public void sendOrder(Order order) {

kafkaTemplate.send("order-topic", order);

}

}

Kafka Configuration

spring:

kafka:

bootstrap-servers: localhost:9092

producer:

key-serializer: org.apache.kafka.common.serialization.StringSerializer

value-serializer: org.springframework.kafka.support.serializer.JsonSerializer

consumer:

group-id: inventory-service

key-deserializer: org.apache.kafka.common.serialization.StringDeserializer

value-deserializer: org.springframework.kafka.support.serializer.JsonDeserializer

Consumer Example: Inventory Service

@Service

public class KafkaOrderConsumer {

@KafkaListener(topics = "order-topic", groupId = "inventory-service")

public void consume(Order order) {

System.out.println("Inventory updated for Order: " + order.getId());

}

}

Comparison: RabbitMQ vs Kafka

| Sr.No. | Feature | RabbitMQ | Apache Kafka |

|---|---|---|---|

| 1 | Model | Message Queue (Push) | Event Log (Pull) |

| 2 | Message Retention | Deletes after consumption | Retains for configured period |

| 3 | Use Case | Real-time messaging | Event streaming, audit, analytics |

| 4 | Performance | Good for low/medium volume | Excellent for high-throughput |

| 5 | Delivery Guarantees | At most once / at least once | Exactly once (with config) |

| 6 | Built-in Features | Dead-letter queues, priority | Stream replay, partitioning |

Best Practices

| Sr.No. | Practice | Description |

|---|---|---|

| 1 | Idempotency | Ensure consumers handle duplicate events safely |

| 2 | Dead-letter Queues (DLQs) | Handle failed messages without losing them |

| 3 | Retries and Backoff | Use exponential backoff for transient failures |

| 4 | Message Versioning | Support schema evolution |

| 5 | Monitoring & Tracing | Use Zipkin, Prometheus, Kafka UI for observability |

| 6 | Async Boundaries | Use command/event distinction (e.g., OrderPlaced vs OrderConfirmed) |

Real-World Use Cases

| Sr.No. | Company | Event-Driven Use Case |

|---|---|---|

| 1 | Uber | Geolocation updates, surge pricing via Kafka |

| 2 | Netflix | User activity tracking, recommendation pipelines with Kafka |

| 3 | Shopify | Order fulfillment via RabbitMQ |

| 4 | Built Kafka for internal use−event sourcing at scale |

When to Use Asynchronous Communication

Ideal For −

High-volume systems

Background task processing

Decoupled architectures

Event sourcing and audit trails

Retry-able workflows (notifications, billing, etc.)

Not Ideal When −

Immediate response is required

Simple request-response is sufficient

External system mandates synchronous calls (e.g., payment gateway)

Conclusion

Asynchronous communication is a key architectural pattern for building scalable, resilient, and event-driven microservices.

RabbitMQ is a great choice for lightweight message-based systems.

Apache Kafka shines in high-throughput, log-based systems.

By adopting this pattern, organizations gain the flexibility to −

Decouple services

Increase responsiveness

Handle complex workflows

Enable real-time data pipelines

When combined with proper tooling and best practices, asynchronous communication becomes a cornerstone of robust microservices systems.

Java Microservices - Saga Pattern

Introduction

As businesses embrace microservices architecture, one major challenge arises: how to maintain data consistency across distributed services. In traditional monoliths, a database transaction ensures ACID properties. But in microservices, each service often manages its own database − making distributed transactions difficult.

The Saga pattern is a solution to this problem. It allows services to collaborate on a long-running business transaction by exchanging a sequence of local transactions and compensating actions when needed.

This article explores the Saga pattern in detail, including its types, real-world examples, implementation with Spring Boot, and best practices.

What is Saga Pattern?

A Saga is a sequence of local transactions, where each transaction updates data within a single microservice and publishes an event or calls the next service. If one transaction fails, the Saga executes compensating transactions to undo the impact of previous ones.

A saga is a failure management pattern for long-running distributed transactions.

Why Do We Need Sagas?

Challenges in Distributed Transactions

| Sr.No. | Challenge | Description |

|---|---|---|

| 1 | Lack of global transactions | No XA/2PC (Two Phase Commit) across microservices |

| 2 | Data ownership | Each service owns its data (Database per service) |

| 3 | Partial failures | Some steps may succeed, others may fail |

| 4 | Consistency | Eventual consistency instead of strict ACID |

The Saga pattern helps orchestrate distributed workflows with eventual consistency.

Types of Saga Implementations

Choreography Based Saga

No central controller

Services listen to events and act accordingly

Lightweight, but complex with many services

Example Flow

Order Service → emits OrderCreated

Payment Service → listens, processes payment → emits PaymentCompleted

Inventory Service → reserves stock → emits InventoryReserved

Shipping Service → ships item

If any step fails, a compensating event is triggered.

Orchestration-Based Saga

Central Saga orchestrator directs the flow

Each service executes commands from the orchestrator

Easier to manage, but introduces coupling

Example Flow

Orchestrator → calls Order Service

On success → calls Payment Service

On failure → instructs Order Service to cancel

Real-World Example: E-Commerce Order Processing

Steps

Place Order

Reserve Inventory

Process Payment

Ship Item

Each service has a local database and transaction logic.

If payment fails, we must −

Cancel the order

Release the inventory

This is handled by a Saga.

Saga architecture

Diagram: Choreography Based Saga

Each service publishes and subscribes to events through a broker like Kafka or RabbitMQ.

Implementing Saga Pattern in Spring Boot

Let's implement a Choreography based saga using Spring Boot + Kafka.

Technologies Used

Spring Boot

Spring Kafka

Apache Kafka (as the event broker)

Lombok for model simplification

Maven Dependencies

<dependency> <groupId>org.springframework.kafka</groupId> <artifactId>spring-kafka</artifactId> </dependency> <dependency> <groupId>org.springframework.boot</groupId> <artifactId>spring-boot-starter-web</artifactId> </dependency> <dependency> <groupId>org.projectlombok</groupId> <artifactId>lombok</artifactId> <scope>provided</scope> </dependency>

Example Services and Topics

| Sr.No. | Service | Events Published | Topics Subscribed |

|---|---|---|---|

| 1 | Order Service | OrderCreated, OrderCancelled | PaymentFailed, InventoryFailed |

| 2 | Payment Service | PaymentCompleted, PaymentFailed | OrderCreated |

| 3 | Inventory Service | InventoryReserved, InventoryFailed | PaymentCompleted |

Sample Event: OrderCreatedEvent.java

@Data

@AllArgsConstructor

@NoArgsConstructor

public class OrderCreatedEvent {

private String orderId;

private String productId;

private int quantity;

}

Order Service − Kafka Producer

@Service

public class OrderService {

@Autowired

private KafkaTemplate<String, Object> kafkaTemplate;

public void createOrder(OrderCreatedEvent event) {

kafkaTemplate.send("order-created", event);

}

}

Payment Service − Kafka Consumer

@KafkaListener(topics = "order-created", groupId = "payment-service")

public void handleOrder(OrderCreatedEvent event) {

// Process payment

boolean success = processPayment(event);

if (success) {

kafkaTemplate.send("payment-completed", new PaymentCompletedEvent(event.getOrderId()));

} else {

kafkaTemplate.send("payment-failed", new PaymentFailedEvent(event.getOrderId()));

}

}

Inventory Service − Kafka Consumer

@KafkaListener(topics = "payment-completed", groupId = "inventory-service")

public void handlePayment(PaymentCompletedEvent event) {

// Reserve inventory

boolean success = reserveStock(event.getOrderId());

if (success) {

kafkaTemplate.send("inventory-reserved", new InventoryReservedEvent(event.getOrderId()));

} else {

kafkaTemplate.send("inventory-failed", new InventoryFailedEvent(event.getOrderId()));

}

}

Saga Compensation and Failure Handling

Compensating Transactions

If a step fails (e.g., inventory reservation), previous actions must be reversed−

InventoryFailed → triggers PaymentRollback

PaymentFailed → triggers OrderCancelled

These compensating actions must be idempotent and safe to retry.

Benefits of the Saga Pattern

| Sr.No. | Benefit | Description |

|---|---|---|

| 1 | Decentralized workflow | Maintains autonomy of microservices |

| 2 | Resilience | Can recover from partial failures |

| 3 | Eventual consistency | Instead of strict ACID transactions |

| 4 | Scalable and fault-tolerant | Built on asynchronous messaging |

Challenges and Pitfalls

| Sr.No. | Challenge | Mitigation |

|---|---|---|

| 1 | Complex error handling | Use retries and DLQs |

| 2 | Debugging flows | Use tracing tools like Zipkin |

| 3 | Compensating logic overhead | Modularize and isolate business logic |

| 4 | Message ordering issues | Use Kafka partitions wisely |

Testing a Saga

Approaches

Use Testcontainers to simulate Kafka or RabbitMQ

Verify event flow using integration tests

Mock downstream services using WireMock

Simulate failures to test compensation logic

Real-World Examples

| Sr.No. | Company | Use of Saga Pattern |

|---|---|---|

| 1 | Netflix | Manages distributed workflows in video delivery |

| 2 | Booking.com | Manages hotel bookings, payments, and cancellations |

| 3 | Uber | Handles driver assignment, payments, and cancellations |

| 4 | Amazon | Processes multi-step order and inventory systems |

Best Practices

| Sr.No. | Practice | Reason |

|---|---|---|

| 1 | Use separate event models | Avoid domain model leakage |

| 2 | Make compensating actions idempotent | Safe retries |

| 3 | Implement timeouts | Avoid stuck sagas |

| 4 | Track saga state | Use DB or state store |

| 5 | Use correlation IDs | Easier debugging and tracing |

Conclusion

The Saga pattern provides an elegant solution to the problem of distributed transactions in a microservices architecture. Whether using choreography or orchestration, sagas enable services to maintain data consistency, handle failures gracefully, and ensure resilient workflows.

By combining Spring Boot with Kafka or orchestration engines, developers can build reliable, scalable, and maintainable systems that operate effectively across service boundaries.

Java Microservices - Centralized Logging (ELK Stack)

Introduction