- Home

- CentOS Overview

- Basic CentOS Linux Commands

- File / Folder Management

- User Management

- Quota Management

- Systemd Services Start and Stop

- Resource Mgmt with systemctl

- Resource Mgmt with crgoups

- Process Management

- Firewall Setup

- Configure PHP in CentOS Linux

- Set Up Python with CentOS Linux

- Configure Ruby on CentOS Linux

- Set Up Perl for CentOS Linux

- Install and Configure Open LDAP

- Create SSL Certificates

- Install Apache Web Server CentOS 7

- MySQL Setup On CentOS 7

- Set Up Postfix MTA and IMAP/POP3

- Install Anonymous FTP

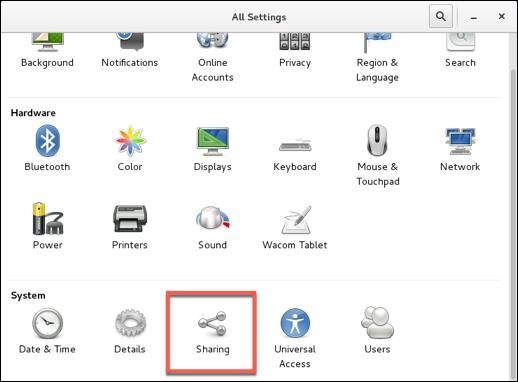

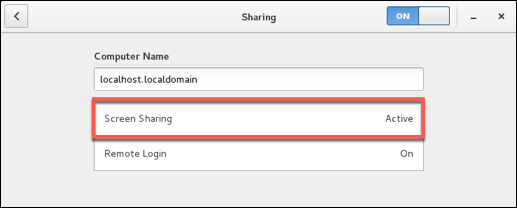

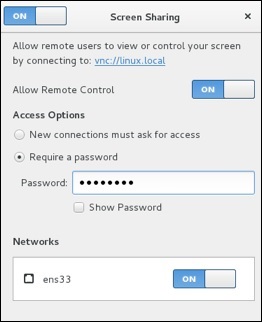

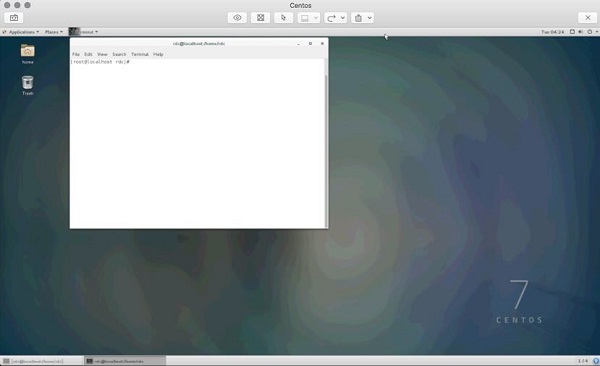

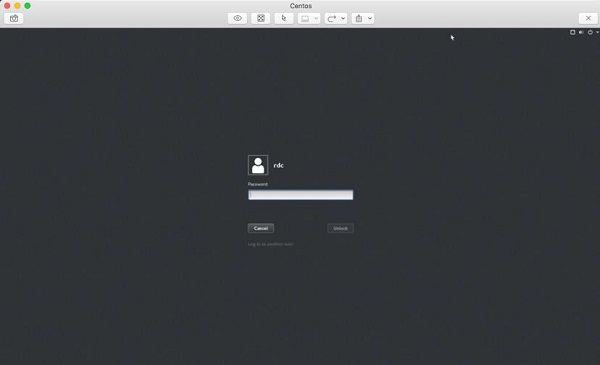

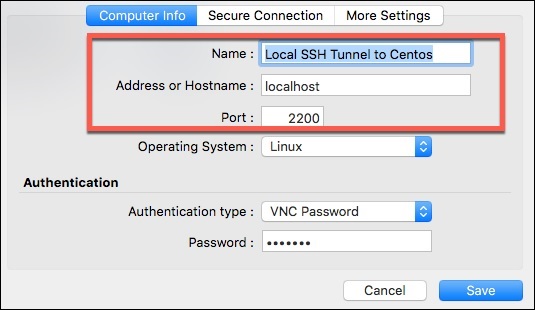

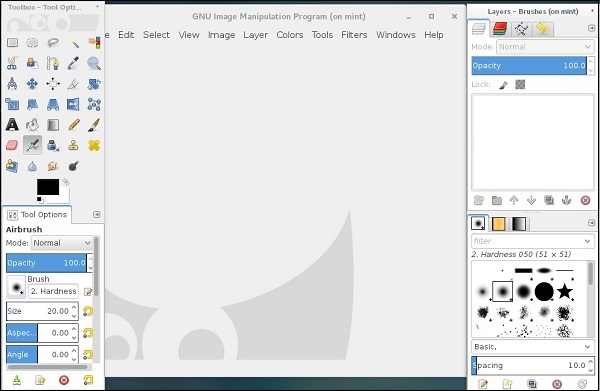

- Remote Management

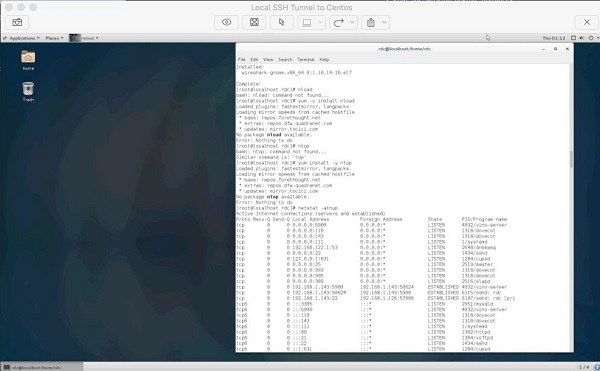

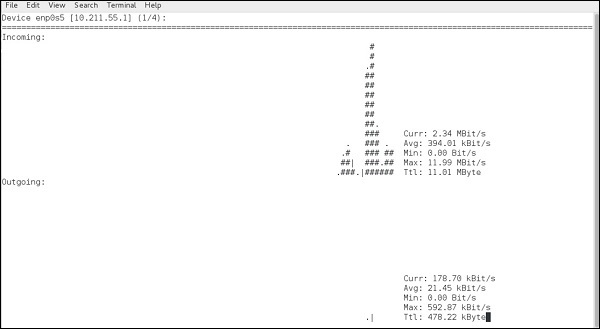

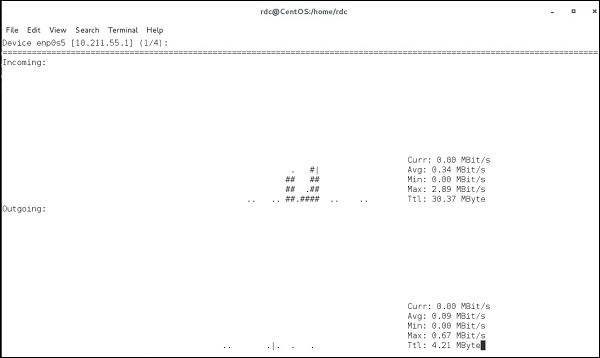

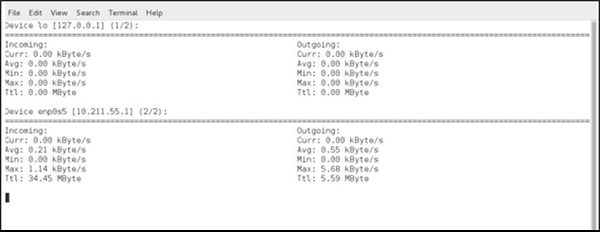

- Traffic Monitoring in CentOS

- Log Management

- Backup and Recovery

- System Updates

- Shell Scripting

- Package Management

- Volume Management

Linux Admin - Quick Guide

Linux Admin - CentOS Overview

Unique among business-class Linux distributions, CentOS stays true to the open-source nature that Linux was founded on. The first Linux kernel was developed by Linus Torvalds, then a student at the University of Helsinki, and combined with the GNU utilities founded and promoted by Richard Stallman. CentOS has proven open-source licensing that can power today's business world.

CentOS has quickly become one of the most prolific server platforms in the world. Any Linux Administrator, when seeking employment, is bound to come across the words: CentOS Linux Experience Preferred. From startups to Fortune 10 tech titans, CentOS has placed itself amongst the higher echelons of server operating systems worldwide.

What makes CentOS stand out from other Linux distributions is a great combination of −

- Open source licensing

- Dedicated user-base of Linux professionals

- Good hardware support

- Rock-solid stability and reliability

- Focus on security and updates

- Strict adherence to software packaging standards needed in a corporate environment

Before starting the lessons, we assume that the readers have a basic knowledge of Linux and Administration fundamentals such as −

- What is the root user?

- The power of the root user

- Basic concept of security groups and users

- Experience using a Linux terminal emulator

- Fundamental networking concepts

- Fundamental understanding of interpreted programming languages (Perl, Python, Ruby)

- Networking protocols such as HTTP, LDAP, FTP, IMAP, SMTP

- Core components of a computer operating system: file system, drivers, and the kernel

Basic CentOS Linux Commands

Before learning the tools of a CentOS Linux Administrator, it is important to note the philosophy behind the Linux administration command line.

Linux was designed around the Unix philosophy of small, precise tools chained together to simplify larger tasks. For the most part, Linux does not rely on large applications built for one specific use. Instead, there are hundreds of basic utilities that, when combined, offer great power to accomplish big tasks efficiently.

Examples of the Linux Philosophy

For example, if an administrator wants a listing of all the current users on a system, the following chained commands can be used. On execution, the users on the system are listed in alphabetical order.

    [root@centosLocal centos]# cut /etc/passwd -d":" -f1 | sort
    abrt
    adm
    avahi
    bin
    centos
    chrony
    colord
    daemon
    dbus

It is easy to export this list into a text file using the following command.

    [root@localhost /]# cut /etc/passwd -d ":" -f1 > system_users.txt
    [root@localhost /]# cat ./system_users.txt | sort | wc -l
    40
    [root@localhost /]#

It is also possible to compare the user list with an export at a later date.

    [root@centosLocal centos]# cut /etc/passwd -d ":" -f1 > system_users002.txt && cat system_users002.txt | sort | wc -l
    41
    [root@centosLocal centos]# diff ./system_users.txt ./system_users002.txt
    evilBackdoor
    [root@centosLocal centos]#

With this approach of small tools chained to accomplish bigger tasks, it is simple to write a script that performs these commands and then automatically emails the results at regular intervals.
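The snapshot-and-compare commands above can be wrapped in two small functions. This is a minimal sketch rather than a hardened audit tool: the function names `list_users` and `new_users` and the snapshot paths in the comments are hypothetical, and it assumes both snapshots are stored sorted, which `comm` requires.

```shell
#!/bin/bash
# Print every account name from a passwd-format file, sorted.
# The file argument defaults to /etc/passwd.
list_users() {
    cut -d: -f1 "${1:-/etc/passwd}" | sort
}

# Print names present in the newer snapshot ($2) but not in the older one ($1).
# Both files must already be sorted, as produced by list_users.
new_users() {
    comm -13 "$1" "$2"
}

# Example run (hypothetical snapshot paths):
#   list_users > /root/users_before.txt
#   ... some days later ...
#   list_users > /root/users_after.txt
#   new_users /root/users_before.txt /root/users_after.txt
```

Storing the snapshots sorted keeps the comparison independent of the order of entries in /etc/passwd, so only genuinely new account names are reported.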

Basic Commands every Linux Administrator should be proficient in are −

In the Linux world, Administrators use filtering commands every day to parse logs, filter command output, and perform actions with interactive shell scripts. As mentioned, the power of these commands comes from their ability to modify one another's output through a process called piping.

The following command shows how many words begin with the letter a from the CentOS main user dictionary.

    [root@centosLocal ~]# egrep '^a.*$' /usr/share/dict/words | wc -l
    25192
    [root@centosLocal ~]#

Linux Admin - File / Folder Management

To introduce permissions as they apply to both directories and files in CentOS Linux, let's look at the following command output.

    [centos@centosLocal etc]$ ls -ld /etc/yum*
    drwxr-xr-x. 6 root root 100 Dec 5 06:59 /etc/yum
    -rw-r--r--. 1 root root 970 Nov 15 08:30 /etc/yum.conf
    drwxr-xr-x. 2 root root 187 Nov 15 08:30 /etc/yum.repos.d

Note − The three primary object types you will see are

"-" − a dash for plain file

"d" − for a directory

"l" − for a symbolic link

We will focus on the three blocks of output for each directory and file −

- drwxr-xr-x : root : root

- -rw-r--r-- : root : root

- drwxr-xr-x : root : root

Now let's break this down, to better understand these lines −

| Field | Meaning |
|---|---|
| d | Means the object type is a directory |
| rwx | Indicates directory permissions applied to the owner |
| r-x | Indicates directory permissions applied to the group |
| r-x | Indicates directory permissions applied to the world |
| root | The first instance indicates the owner of the directory |
| root | The second instance indicates the group to which group permissions are applied |

Understanding the difference between owner, group and world is important. Not understanding this can have big consequences on servers that host services to the Internet.

Before we give a real-world example, let's first understand the permissions as they apply to directories and files.

Please take a look at the following table, then continue with the instruction.

| Octal | Symbolic | Permission | Effect on a directory |
|---|---|---|---|
| 1 | x | Execute | Enter the directory and access files |
| 2 | w | Write | Delete or modify the files in a directory |
| 4 | r | Read | List the files within the directory |

Note − When files in a directory should be readable, it is common to apply both read and execute permissions to the directory. Otherwise, users will have difficulty working with the files. Leaving write disabled on the directory ensures that the files in it cannot be renamed, deleted, or replaced.

Applying Permissions to Directories and Files

When applying permissions, there are two concepts to understand −

- Symbolic Permissions

- Octal Permissions

In essence, the two are equivalent; they are simply different ways of referring to and assigning file permissions. For a quick guide, please study and refer to the following table −

| | Read | Write | Execute |
|---|---|---|---|
| Octal | 4 | 2 | 1 |
| Symbolic | r | w | x |

When assigning permissions using the octal method, use a three-digit octal number such as 760. The number 760 translates into: Owner: rwx; Group: rw; Other (or world): no permissions.

Another scenario: 733 would translate to: Owner: rwx; Group: wx; Other: wx.
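The octal arithmetic can be checked on a scratch file. The following is a minimal sketch, assuming GNU coreutils (for `stat -c`), as found on CentOS; the helper name `show_mode` and the file name report.txt are hypothetical, and everything happens in a temporary directory.

```shell
#!/bin/bash
# Print the octal and symbolic mode of a file (GNU stat syntax).
show_mode() {
    stat -c '%a %A' "$1"
}

# Work in a throwaway directory so no real files are touched.
demo_dir=$(mktemp -d)
touch "$demo_dir/report.txt"

# 760 = owner rwx (4+2+1), group rw- (4+2), other --- (0)
chmod 760 "$demo_dir/report.txt"
show_mode "$demo_dir/report.txt"

# 733 = owner rwx (4+2+1), group -wx (2+1), other -wx (2+1)
chmod 733 "$demo_dir/report.txt"
show_mode "$demo_dir/report.txt"
```

Each octal digit is simply the sum of the read (4), write (2), and execute (1) values for one of owner, group, and other.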

There is one drawback to assigning permissions with the octal method: it cannot modify part of an existing permission set. It can only reassign the entire permission set of an object.

Now you might wonder, what is wrong with always re-assigning permissions? Imagine a large directory structure, for example /var/www/ on a production web server. We want to recursively take away the w (write) bit for Other on all directories, so that as a security measure it must be deliberately added back only where needed. If we re-assign the entire permission set, we wipe out all the other custom permissions assigned to every sub-directory.

Hence, it will cause a problem for both the administrator and the user of the system. At some point, a person (or persons) would need to re-assign all the custom permissions that were wiped out by re-assigning the entire permission-set for every directory and object.

In this case, we would want to use the Symbolic method to modify permissions −

chmod -R o-w /var/www/

The above command does not overwrite permissions; it modifies the current permission sets. So get accustomed to the following best practice −

- Octal only to assign permissions

- Symbolic to modify permission sets
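To see the difference between the two methods, the following sketch builds a throwaway directory tree and applies each in turn. It is an illustration only: the directory names public and private are hypothetical, and GNU `stat -c` is assumed.

```shell
#!/bin/bash
# Build a throwaway tree with two differently configured subdirectories.
tree=$(mktemp -d)
mkdir "$tree/public" "$tree/private"
chmod 777 "$tree/public"     # custom, wide-open permissions
chmod 750 "$tree/private"    # custom, restricted permissions

# Symbolic: clear only the write bit for "other"; all other bits are kept.
chmod -R o-w "$tree"
stat -c '%a %n' "$tree/public" "$tree/private"

# Octal: every directory is forced to the same mode, so the difference
# between public and private is lost.
chmod -R 755 "$tree"
stat -c '%a %n' "$tree/public" "$tree/private"
```

After the symbolic change the two directories still differ (775 and 750); after the octal change both are 755.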

It is important that a CentOS Administrator be proficient with both Octal and Symbolic permissions, as permissions are important for the integrity of data and the entire operating system. If permissions are incorrect, both sensitive data and the entire operating system can end up compromised.

With that covered, let's look at a few commands for modifying permissions and object owner/members −

- chmod

- chown

- chgrp

- umask

chmod : Change File Mode Permission Bits

| Switch | Action |
|---|---|
| -c | Like verbose, but will only report the changes made |
| -v | Verbose, outputs the diagnostics for every request made |
| -R | Recursively applies the operation on files and directories |

chmod will allow us to change permissions of directories and files using octal or symbolic permission sets. We will use this to modify our assignment and uploads directories.

chown : Change File Owner and Group

| Switch | Action |
|---|---|
| -c | Like verbose, but will only report the changes made |
| -v | Verbose, outputs the diagnostics for every request made |
| -R | Recursively applies the operation on files and directories |

chown can modify both the owning user and the owning group of objects. However, unless both need to change at the same time, chgrp is usually used for groups.

chgrp : Change Group Ownership of File or Directory

| Switch | Action |
|---|---|
| -c | Like verbose, but will only report the changes made |
| -v | Verbose, outputs the diagnostics for every request made |
| -R | Recursively applies the operation on files and directories |

chgrp will change the group owner to that supplied.

Real-world practice

Let's change all the subdirectories in /var/www/students/ so the owning group is the students group. Then assign the root of students to the professors group. Later, make Dr. Terry Thomas the owner of the students directory, since he is in charge of all Computer Science academia at the school.

As we can see, when created, the directory is left pretty raw.

    [root@centosLocal ~]# ls -ld /var/www/students/
    drwxr-xr-x. 4 root root 40 Jan 9 22:03 /var/www/students/
    [root@centosLocal ~]# ls -l /var/www/students/
    total 0
    drwxr-xr-x. 2 root root 6 Jan 9 22:03 assignments
    drwxr-xr-x. 2 root root 6 Jan 9 22:03 uploads
    [root@centosLocal ~]#

As Administrators we never want to give our root credentials out to anyone. But at the same time, we need to allow users the ability to do their job. So let's allow Dr. Terry Thomas to take more control of the file structure and limit what students can do.

    [root@centosLocal ~]# chown -R drterryt:professors /var/www/students/
    [root@centosLocal ~]# ls -ld /var/www/students/
    drwxr-xr-x. 4 drterryt professors 40 Jan 9 22:03 /var/www/students/
    [root@centosLocal ~]# ls -ls /var/www/students/
    total 0
    0 drwxr-xr-x. 2 drterryt professors 6 Jan 9 22:03 assignments
    0 drwxr-xr-x. 2 drterryt professors 6 Jan 9 22:03 uploads
    [root@centosLocal ~]#

Now, each directory and subdirectory has an owner of drterryt and the owning group is professors. Since the assignments directory is for students to turn assigned work in, let's take away the ability to list and modify files from the students group.

    [root@centosLocal ~]# chgrp students /var/www/students/assignments/ && chmod 736 /var/www/students/assignments/
    [root@centosLocal assignments]# ls -ld /var/www/students/assignments/
    drwx-wxrw-. 2 drterryt students 44 Jan 9 23:14 /var/www/students/assignments/
    [root@centosLocal assignments]#

Students can copy assignments to the assignments directory. But they cannot list contents of the directory, copy over current files, or modify files in the assignments directory. Thus, it just allows the students to submit completed assignments. The CentOS filesystem will provide a date-stamp of when assignments were turned in.

As the assignments directory owner −

    [drterryt@centosLocal assignments]$ whoami
    drterryt
    [drterryt@centosLocal assignments]$ ls -ld /var/www/students/assignments/
    drwx-wxrw-. 2 drterryt students 44 Jan 9 23:14 /var/www/students/assignments/
    [drterryt@centosLocal assignments]$ ls -l /var/www/students/assignments/
    total 4
    -rw-r--r--. 1 adama students 0 Jan 9 23:14 myassign.txt
    -rw-r--r--. 1 tammyr students 16 Jan 9 23:18 terryt.txt
    [drterryt@centosLocal assignments]$

We can see, the directory owner can list files as well as modify and remove files.

umask Command: Supplies the Default Modes for File and Directory Permissions As They are Created

umask is an important command that supplies the default modes for File and Directory Permissions as they are created.

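umask uses negated logic: each bit set in the mask is removed from the default mode, which is 666 for new files and 777 for new directories. The table below shows what each umask digit leaves in place for a directory; new files do not receive the execute bit by default.

| umask digit | Permissions left in place |
|---|---|
| 0 | Read, write, execute |
| 1 | Read and write |
| 2 | Read and execute |
| 3 | Read only |
| 4 | Write and execute |
| 5 | Write only |
| 6 | Execute only |
| 7 | No permissions |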

    [adama@centosLocal umask_tests]$ ls -l ./
    -rw-r--r--. 1 adama students 0 Jan 10 00:27 myDir
    -rw-r--r--. 1 adama students 0 Jan 10 00:27 myFile.txt
    [adama@centosLocal umask_tests]$ whoami
    adama
    [adama@centosLocal umask_tests]$ umask
    0022
    [adama@centosLocal umask_tests]$

Now, let's change the umask for our current user, and make a new file and directory.

    [adama@centosLocal umask_tests]$ umask 077
    [adama@centosLocal umask_tests]$ touch mynewfile.txt
    [adama@centosLocal umask_tests]$ mkdir myNewDir
    [adama@centosLocal umask_tests]$ ls -l
    total 0
    -rw-r--r--. 1 adama students 0 Jan 10 00:27 myDir
    -rw-r--r--. 1 adama students 0 Jan 10 00:27 myFile.txt
    drwx------. 2 adama students 6 Jan 10 00:35 myNewDir
    -rw-------. 1 adama students 0 Jan 10 00:35 mynewfile.txt

As we can see, newly created files are a little more restrictive than before.
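The effect of a umask can be reproduced without changing the login shell's own setting. This is a minimal sketch, assuming GNU `stat -c`; the function name `show_umask_effect` is hypothetical, and the umask is changed only inside a subshell and a temporary directory.

```shell
#!/bin/bash
# Create a file and a directory under the given umask and print their modes.
# The parentheses run the body in a subshell, so the caller's umask and
# working directory are left unchanged.
show_umask_effect() {
    (
        umask "$1"
        work=$(mktemp -d) || exit 1
        cd "$work" || exit 1
        touch file          # new files start from 666
        mkdir dir           # new directories start from 777
        stat -c '%a %n' file dir
        cd / && rm -rf "$work"
    )
}

show_umask_effect 022   # the CentOS default discussed above
show_umask_effect 077   # the stricter mask used in the example
```

With a mask of 077 the file comes out as 600 and the directory as 700, which matches the rw------- and rwx------ entries in the listing above.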

umask for users should be changed in either −

- /etc/profile

- ~/.bashrc

    [root@centosLocal centos]# su adama
    [adama@centosLocal centos]$ umask
    0022
    [adama@centosLocal centos]$

Generally, the default umask in CentOS will be okay. Trouble with a default of 0022 usually arises when different departments, belonging to different groups, need to collaborate on projects.

This is where the role of a system administrator comes in, to balance the operations and design of the CentOS operating system.

Linux Admin - User Management

When discussing user management, we have three important terms to understand −

- Users

- Groups

- Permissions

We have already discussed permissions in depth as they apply to files and folders. In this chapter, let's discuss users and groups.

CentOS Users

In CentOS, there are two types of accounts −

System accounts − Used for a daemon or other piece of software.

Interactive accounts − Usually assigned to a user for accessing system resources.

The main difference between the two user types is −

System accounts are used by daemons to access files and directories. These will usually be disallowed from interactive login via shell or physical console login.

Interactive accounts are used by end-users to access computing resources from either a shell or physical console login.

With this basic understanding of users, let's now create a new user for Bob Jones in the Accounting Department. A new user is added with the useradd command (on CentOS, adduser is a symbolic link to useradd).

Following are some common useradd switches −

| Switch | Action |
|---|---|
| -c | Adds comment to the user account |
| -m | Creates user home directory in default location, if nonexistent |
| -g | Default group to assign the user |
| -n | Does not create a private group for the user, usually a group with username |
| -M | Does not create a home directory |
| -s | Default shell other than /bin/bash |
| -u | Specifies UID (otherwise assigned by the system) |
| -G | Additional groups to assign the user to |

When creating a new user, use the -c, -m, -g, -n switches as follows −

[root@localhost Downloads]# useradd -c "Bob Jones Accounting Dept Manager" -m -g accounting -n bjones

Now let's see if our new user has been created −

    [root@localhost Downloads]# id bjones
    uid=1001(bjones) gid=1001(accounting) groups=1001(accounting)
    [root@localhost Downloads]# grep bjones /etc/passwd
    bjones:x:1001:1001:Bob Jones Accounting Dept Manager:/home/bjones:/bin/bash
    [root@localhost Downloads]#

Now we need to enable the new account using the passwd command −

    [root@localhost Downloads]# passwd bjones
    Changing password for user bjones.
    New password:
    Retype new password:
    passwd: all authentication tokens updated successfully.
    [root@localhost Downloads]#

The user account is now enabled, allowing the user to log into the system.

Disabling User Accounts

There are several methods to disable accounts on a system. These range from editing the /etc/passwd file by hand to using the passwd command with the -l switch. Both of these methods have one big drawback: if the user has ssh access and uses an RSA key for authentication, they can still log in.

Now let's use the chage command to set the account expiry date to a date in the past. It is also good practice to note on the account why we disabled it.

    [root@localhost Downloads]# chage -E 2005-10-01 bjones
    [root@localhost Downloads]# usermod -c "Disabled Account while Bob out of the country for five months" bjones
    [root@localhost Downloads]# grep bjones /etc/passwd
    bjones:x:1001:1001:Disabled Account while Bob out of the country for five months:/home/bjones:/bin/bash
    [root@localhost Downloads]#

Manage Groups

Managing groups in Linux makes it convenient for an administrator to combine the users within containers applying permission-sets applicable to all group members. For example, all users in Accounting may need access to the same files. Thus, we make an accounting group, adding Accounting users.

For the most part, anything requiring special permissions should be done through a group. This approach will usually save time over applying special permissions to just one user. For example, Sally is in charge of reports and only Sally needs access to certain files for reporting. However, what if Sally is sick one day and Bob does the reports? Or the need for reporting grows? When a group is made, an Administrator only needs to set up the permissions once. Users are then added to the group as needs change or expand.

Following are some common commands used for managing groups −

- chgrp

- groupadd

- groups

- usermod

chgrp − Changes the group ownership for a file or directory.

Let's make a directory for people in the accounting group to store files and create directories for files.

    [root@localhost Downloads]# mkdir /home/accounting
    [root@localhost Downloads]# ls -ld /home/accounting
    drwxr-xr-x. 2 root root 6 Jan 13 10:18 /home/accounting
    [root@localhost Downloads]#

Next, let's give group ownership to the accounting group.

    [root@localhost Downloads]# chgrp -v accounting /home/accounting/
    changed group of /home/accounting/ from root to accounting
    [root@localhost Downloads]# ls -ld /home/accounting/
    drwxr-xr-x. 2 root accounting 6 Jan 13 10:18 /home/accounting/
    [root@localhost Downloads]#

Now, everyone in the accounting group has read and execute permissions to /home/accounting. They will need write permissions as well.

    [root@localhost Downloads]# chmod g+w /home/accounting/
    [root@localhost Downloads]# ls -ld /home/accounting/
    drwxrwxr-x. 2 root accounting 6 Jan 13 10:18 /home/accounting/
    [root@localhost Downloads]#

Since the accounting group may deal with sensitive documents, we need to apply some restrictive permissions for other or world.

    [root@localhost Downloads]# chmod o-rx /home/accounting/
    [root@localhost Downloads]# ls -ld /home/accounting/
    drwxrwx---. 2 root accounting 6 Jan 13 10:18 /home/accounting/
    [root@localhost Downloads]#

groupadd − Used to make a new group.

| Switch | Action |
|---|---|
| -g | Specifies a GID for the group |
| -K | Overrides the defaults for GID in /etc/login.defs |
| -o | Allows creating a group with a non-unique GID |
| -p | Group password, allowing the users to activate themselves |

Let's make a new group called secret. We will add a password to the group, allowing the users to add themselves with a known password.

    [root@localhost]# groupadd secret
    [root@localhost]# gpasswd secret
    Changing the password for group secret
    New Password:
    Re-enter new password:
    [root@localhost]# exit
    exit
    [centos@localhost ~]$ newgrp secret
    Password:
    [centos@localhost ~]$ groups
    secret wheel rdc
    [centos@localhost ~]$

In practice, passwords for groups are not used often. Secondary groups are adequate and sharing passwords amongst other users is not a great security practice.

The groups command is used to show which groups a user belongs to. We will use this after making some changes to our current user.

usermod is used to update account attributes.

Following are the common usermod switches.

| Switch | Action |
|---|---|
| -a | Appends, adds user to supplementary groups, only with the -G option |
| -c | Comment, updates the user comment value |
| -d | Home directory, updates the user's home directory |
| -G | Groups, adds or removes the secondary user groups |
| -g | Group, default primary group of the user |

    [root@localhost]# groups centos
    centos : accounting secret
    [root@localhost]#
    [root@localhost]# usermod -a -G wheel centos
    [root@localhost]# groups centos
    centos : accounting wheel secret
    [root@localhost]#

Linux Admin - Quota Management

CentOS disk quotas can be enabled to do both: alert the system administrator and deny further disk storage to a user before disk capacity is exceeded. When a disk is full, depending on what resides on the disk, an entire system can come to a screeching halt until recovered.

Enabling Quota Management in CentOS Linux is basically a 4 step process −

Step 1 − Enable quota management for groups and users in /etc/fstab.

Step 2 − Remount the filesystem.

Step 3 − Create Quota database and generate disk usage table.

Step 4 − Assign quota policies.

Enable Quota Management in /etc/fstab

First, we want to back up our /etc/fstab file −

[root@centosLocal centos]# cp -r /etc/fstab ./

We now have a copy of our known working /etc/fstab in the current working directory.

    #
    # /etc/fstab
    # Created by anaconda on Sat Dec 17 02:44:51 2016
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk'
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
    #
    /dev/mapper/cl-root     /        xfs     defaults                      0 0
    UUID=4b9a40bc-9480-4    /boot    xfs     defaults                      0 0
    /dev/mapper/cl-home     /home    xfs     defaults,usrquota,grpquota    0 0
    /dev/mapper/cl-swap     swap     swap    defaults                      0 0

We made the following changes in the options section of /etc/fstab for the volume or label where quotas are to be applied for users and groups.

- usrquota

- grpquota

As you can see, we are using the xfs filesystem. When using xfs there are extra manual steps involved. /home is on the same disk as /. Further investigation shows / is set for noquota, which is a kernel level mounting option. We must re-configure our kernel boot options.

    [root@localhost rdc]# mount | grep ' / '
    /dev/mapper/cl-root on / type xfs (rw,relatime,seclabel,attr2,inode64,noquota)
    [root@localhost rdc]#

Reconfiguring Kernel Boot Options for XFS File Systems

This step is only necessary under two conditions −

- When the disk/partition we are enabling quotas on uses the xfs file system

- When the kernel mounts the root filesystem with the noquota option at boot time

Step 1 − Make a backup of /etc/default/grub.

cp /etc/default/grub ~/

Step 2 − Modify /etc/default/grub.

Here is the default file.

    GRUB_TIMEOUT=5
    GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"
    GRUB_DEFAULT=saved
    GRUB_DISABLE_SUBMENU=true
    GRUB_TERMINAL_OUTPUT="console"
    GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=cl/root rd.lvm.lv=cl/swap rhgb quiet"
    GRUB_DISABLE_RECOVERY="true"

We want to modify the following line −

GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=cl/root rd.lvm.lv=cl/swap rhgb quiet"

to

GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=cl/root rd.lvm.lv=cl/swap rhgb quiet rootflags=usrquota,grpquota"

Note − It is important we copy these changes verbatim. After we reconfigure grub.cfg, our system will fail to boot if any errors were made in the configuration. Please, try this part of the tutorial on a non-production system.

Step 3 − Backup your working grub.cfg

cp /boot/grub2/grub.cfg /boot/grub2/grub.cfg.bak

Make a new grub.cfg

    [root@localhost rdc]# grub2-mkconfig -o /boot/grub2/grub.cfg
    Generating grub configuration file ...
    Found linux image: /boot/vmlinuz-3.10.0-514.el7.x86_64
    Found initrd image: /boot/initramfs-3.10.0-514.el7.x86_64.img
    Found linux image: /boot/vmlinuz-0-rescue-dbba7fa47f73457b96628ba8f3959bfd
    Found initrd image: /boot/initramfs-0-rescue-dbba7fa47f73457b96628ba8f3959bfd.img
    done
    [root@localhost rdc]#

Reboot

[root@localhost rdc]# reboot

If all modifications were precise, we should now have the ability to add quotas to the xfs file system.

    [rdc@localhost ~]$ mount | grep ' / '
    /dev/mapper/cl-root on / type xfs (rw,relatime,seclabel,attr2,inode64,usrquota,grpquota)
    [rdc@localhost ~]$

We have passed the usrquota and grpquota parameters via grub.

Now, edit /etc/fstab again to include /, since /home is on the same physical disk.

/dev/mapper/cl-root / xfs defaults,usrquota,grpquota 0 0

Now let's enable the quota databases.

[root@localhost rdc]# quotacheck -acfvugM

Make sure Quotas are enabled.

    [root@localhost rdc]# quotaon -ap
    group quota on / (/dev/mapper/cl-root) is on
    user quota on / (/dev/mapper/cl-root) is on
    group quota on /home (/dev/mapper/cl-home) is on
    user quota on /home (/dev/mapper/cl-home) is on
    [root@localhost rdc]#

Remount the File System

If the partition or disk is separate from the actively booted partition, we can remount without rebooting. If the quota was configured on the disk/partition mounted at the root directory /, we may need to reboot the operating system. Whether a forced remount is enough to apply the changes varies from system to system.

    [rdc@localhost ~]$ df
    Filesystem          1K-blocks    Used Available Use% Mounted on
    /dev/mapper/cl-root  22447404 4081860  18365544  19% /
    devtmpfs               903448       0    903448   0% /dev
    tmpfs                  919308     100    919208   1% /dev/shm
    tmpfs                  919308    9180    910128   1% /run
    tmpfs                  919308       0    919308   0% /sys/fs/cgroup
    /dev/sda2             1268736  176612   1092124  14% /boot
    /dev/mapper/cl-var    4872192  158024   4714168   4% /var
    /dev/mapper/cl-home  18475008   37284  18437724   1% /home
    tmpfs                  183864       8    183856   1% /run/user/1000
    [rdc@localhost ~]$

As we can see, LVM volumes are in use. So it's simple to just reboot. This will remount /home and load the /etc/fstab configuration changes into active configuration.

Create Quota Database Files

CentOS is now capable of working with disk quotas on /home. To enable full quota support, we must run the quotacheck command.

quotacheck will create two files −

- aquota.user

- aquota.group

These are used to store quota information for the quota enabled disks/partitions.

Following are the common quotacheck switches.

| Switch | Action |
|---|---|
| -u | Checks for user quotas |
| -g | Checks for group quotas |
| -c | Does not read existing quota files; performs a new scan and saves it to disk |
| -v | Displays verbose output |

Add Quota Limits Per User

For this, we will use the edquota command, followed by the username −

    [root@localhost rdc]# edquota centos
    Disk quotas for user centos (uid 1000):
      Filesystem            blocks  soft  hard  inodes  soft  hard
      /dev/mapper/cl-root       12     0     0      13     0     0
      /dev/mapper/cl-home     4084     0     0     140     0     0

Let's look at each column.

Filesystem − The filesystem to which the user's quota applies

blocks − How many blocks the user is currently using on each filesystem

soft − Set blocks for a soft limit. A soft limit can be exceeded, but only for a limited grace period

hard − Set blocks for a hard limit. Hard limit is total allowable quota

inodes − How many inodes the user is currently using

soft − Soft inode limit

hard − Hard inode limit

To check our current quota as a user −

    [centos@localhost ~]$ quota
    Disk quotas for user centos (uid 1000):
      Filesystem            blocks     quota     limit  grace  files  quota  limit  grace
      /dev/mapper/cl-home  6052604  56123456  61234568           475      0      0
    [centos@localhost ~]$

Following is the error given to a user when the hard quota limit has been exceeded.

    [centos@localhost Downloads]$ cp CentOS-7-x86_64-LiveKDE-1611.iso.part ../Desktop/
    cp: cannot create regular file ../Desktop/CentOS-7-x86_64-LiveKDE-1611.iso.part: Disk quota exceeded
    [centos@localhost Downloads]$

As we can see, this user is right up against their disk quota. Let's set a soft limit warning. This way, the user will have advance notice before hitting the hard limit. From experience, you will get end-user complaints when they come into work and need to spend 45 minutes clearing files before they can actually get to work.

As an Administrator, we can check quota usage with the repquota command.

    [root@localhost Downloads]# repquota /home
                            Block limits                     File limits
    User            used      soft      hard  grace    used  soft  hard  grace
    ----------------------------------------------------------------------
    root      --       0         0         0              3     0     0
    centos    -+ 6189824  56123456  61234568            541   520   540  6days
    [root@localhost Downloads]#

As we can see, the user centos is over their file (inode) limit on /home: 541 files are in use against a soft limit of 520 and a hard limit of 540, and a grace period of 6 days is shown. Block usage is still below the soft block limit.

In the flags column, the first character refers to block limits and the second to file limits. A + means the corresponding limit has been exceeded, so -+ here marks the file limit.

When planning quotas, it is necessary to do a little math. What an Administrator needs to know is: How many users are on the system? How much free space is there to allocate amongst users/groups? How many bytes make up a block on the file system?

Define quotas in terms of blocks as related to free disk space. It is recommended to leave a "safe" buffer of free space on the file system that will remain in the worst-case scenario, where all quotas are simultaneously exceeded. This is especially important on a partition that is used by the system for writing logs.
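The planning arithmetic can be sketched in shell. This is only an illustration under stated assumptions: the function name `quota_per_user_kb` is hypothetical, limits are taken to be in 1 KiB blocks (the unit of the df output shown earlier; confirm the unit your quota tools report), and the 20 percent reserve and 40 users are example figures, not recommendations.

```shell
#!/bin/bash
# Split the free space on a filesystem evenly among users, after holding
# back a safety reserve. All sizes are in 1 KiB blocks (an assumption).
#   $1 = free space in KiB
#   $2 = percentage of free space to keep in reserve (0-100)
#   $3 = number of users sharing the remainder
quota_per_user_kb() {
    local free_kb=$1 reserve_pct=$2 users=$3
    local usable_kb=$(( free_kb * (100 - reserve_pct) / 100 ))
    echo $(( usable_kb / users ))
}

# Example: 18437724 KiB available on /home (from the df output above),
# keep 20% in reserve, share the rest among 40 users.
quota_per_user_kb 18437724 20 40
```

The result is a candidate hard block limit per user. Because shell arithmetic is integer-only, the value is rounded down, which errs on the side of leaving slightly more space in reserve.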

Systemd Services Start and Stop

systemd is the new way of running services on Linux and has superseded sysvinit. systemd brings faster boot times to Linux and is now a standard way to manage Linux services. While stable, systemd is still evolving.

systemd, as an init system, is used to manage both services and daemons that need status changes after the Linux kernel has booted. Status changes include starting, stopping, reloading, and adjusting the state of a service.

First, let's check the version of systemd currently running on our server.

    [centos@localhost ~]$ systemctl --version
    systemd 219
    +PAM +AUDIT +SELINUX +IMA -APPARMOR +SMACK +SYSVINIT +UTMP +LIBCRYPTSETUP +GCRYPT +GNUTLS +ACL +XZ -LZ4 -SECCOMP +BLKID +ELFUTILS +KMOD +IDN
    [centos@localhost ~]$

As of CentOS version 7, fully updated at the time of this writing, systemd version 219 is the current stable version.

We can also analyze the last server boot time with systemd-analyze −

    [centos@localhost ~]$ systemd-analyze
    Startup finished in 1.580s (kernel) + 908ms (initrd) + 53.225s (userspace) = 55.713s
    [centos@localhost ~]$

When the system boot times are slower, we can use the systemd-analyze blame command.

    [centos@localhost ~]$ systemd-analyze blame
        40.882s kdump.service
         5.775s NetworkManager-wait-online.service
         4.701s plymouth-quit-wait.service
         3.586s postfix.service
         3.121s systemd-udev-settle.service
         2.649s tuned.service
         1.848s libvirtd.service
         1.437s network.service
          875ms packagekit.service
          855ms gdm.service
          514ms firewalld.service
          438ms rsyslog.service
          436ms udisks2.service
          398ms sshd.service
          360ms boot.mount
          336ms polkit.service
          321ms accounts-daemon.service
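In keeping with the small-tools approach, the blame listing can be piped through a short filter to show only the slow units. This is a rough sketch: the function name `slow_units` is hypothetical, and it assumes each line consists of one or more time fields ending in min, s, or ms followed by the unit name, as in the output above.

```shell
#!/bin/bash
# Read "systemd-analyze blame" style lines on stdin and print the names of
# units that took at least $1 seconds to start.
slow_units() {
    awk -v limit="$1" '
    {
        secs = 0
        # Every field except the last is a time component.
        for (i = 1; i < NF; i++) {
            t = $i
            if      (sub(/min$/, "", t)) secs += t * 60
            else if (sub(/ms$/,  "", t)) secs += t / 1000
            else if (sub(/s$/,   "", t)) secs += t
        }
        if (NF >= 2 && secs >= limit + 0) print $NF
    }'
}

# Example (on a systemd host):
#   systemd-analyze blame | slow_units 3
```

Applied to the listing above with a threshold of 3 seconds, the filter would keep the first five units, from kdump.service down to systemd-udev-settle.service.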

When working with systemd, it is important to understand the concept of units. Units are the resources systemd knows how to interpret. Units are categorized into 12 types as follows −

- .service

- .socket

- .device

- .mount

- .automount

- .swap

- .target

- .path

- .timer

- .snapshot

- .slice

- .scope

For the most part, we will be working with .service units, since only .service units apply to starting and stopping systemd services. It is recommended to do further research on the other types.

Each unit is defined in a file located in either −

/lib/systemd/system − base unit files

/etc/systemd/system − modified unit files started at run-time

Manage Services with systemctl

To work with systemd, we will need to get very familiar with the systemctl command. Following are the most common command line switches for systemctl.

| Switch | Action |

|---|---|

| -t | Comma separated value of unit types such as service or socket |

| -a | Shows all loaded units |

| --state | Shows all units in a given state, such as active, inactive, or failed; the value is matched against the LOAD, SUB, or ACTIVE columns |

| -H | Executes operation remotely. Specify Host name or host and user separated by @. |

Basic systemctl Usage

systemctl [operation] [unit] example: systemctl status network.service

For a quick look at all the active services on our box −

[root@localhost rdc]# systemctl -t service UNIT LOAD ACTIVE SUB DESCRIPTION abrt-ccpp.service loaded active exited Install ABRT coredump hook abrt-oops.service loaded active running ABRT kernel log watcher abrt-xorg.service loaded active running ABRT Xorg log watcher abrtd.service loaded active running ABRT Automated Bug Reporting Tool accounts-daemon.service loaded active running Accounts Service alsa-state.service loaded active running Manage Sound Card State (restore and store) atd.service loaded active running Job spooling tools auditd.service loaded active running Security Auditing Service avahi-daemon.service loaded active running Avahi mDNS/DNS-SD Stack blk-availability.service loaded active exited Availability of block devices bluetooth.service loaded active running Bluetooth service chronyd.service loaded active running NTP client/server

Stopping a Service

First, let's stop the bluetooth service.

[root@localhost]# systemctl stop bluetooth

[root@localhost]# systemctl --all -t service | grep bluetooth

bluetooth.service loaded inactive dead Bluetooth service

[root@localhost]#

As we can see, the bluetooth service is now inactive.

To start the bluetooth service again −

[root@localhost]# systemctl start bluetooth

[root@localhost]# systemctl --all -t service | grep bluetooth

bluetooth.service loaded active running Bluetooth service

[root@localhost]#

Note − We didn't specify bluetooth.service, since the .service is implied. It is good practice to include the unit type when naming the service we are dealing with. So, from here on, we will use the .service extension to make clear that we are working on service units.

The primary actions that can be performed on a service are −

| Action | Description |

|---|---|

| Start | Starts the service |

| Stop | Stops the service |

| Reload | Reloads the active configuration of a service without stopping it (like kill -HUP in System V init) |

| Restart | Stops, then starts a service |

| Enable | Configures a service to start at boot time |

| Disable | Prevents a service from starting automatically at boot time |

The above actions are primarily used in the following scenarios −

| Action | When to use |

|---|---|

| Start | To bring up a service that has been put in the stopped state. |

| Stop | To temporarily shut down a service (for example, when a service must be stopped to access files it has locked, as when upgrading the service). |

| Reload | When a configuration file has been edited and we want to apply the new changes without stopping the service. |

| Restart | In the same scenario as reload, when the service does not support reload. |

| Enable | When we want a disabled service to run at boot time. |

| Disable | When a service that currently starts on boot should no longer do so. |
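The scenarios in the two tables above can be combined in small wrapper functions. The sketch below is illustrative only: apply_config_change and retire_service are hypothetical names, and the SYSTEMCTL variable is a convention of this example that lets the commands be previewed with echo instead of being executed. The reload-or-restart operation is a standard systemctl command that reloads a unit if it supports reloading and restarts it otherwise.

```shell
#!/bin/bash
# SYSTEMCTL can be overridden (for example SYSTEMCTL=echo) to preview the
# commands without touching the system; this is a convention of this sketch.
SYSTEMCTL=${SYSTEMCTL:-systemctl}

# Hypothetical helper: apply an edited configuration to a unit.
apply_config_change() {
    local unit=$1
    $SYSTEMCTL reload-or-restart "$unit"
}

# Hypothetical helper: stop a unit now and keep it from starting at boot.
retire_service() {
    local unit=$1
    $SYSTEMCTL stop "$unit" && $SYSTEMCTL disable "$unit"
}

# Preview only: systemctl is replaced by echo, so nothing is changed
SYSTEMCTL=echo apply_config_change bluetooth.service
SYSTEMCTL=echo retire_service bluetooth.service
```

Dropping the SYSTEMCTL=echo prefix runs the real commands, which requires root privileges.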

To check the status of a service −

[root@localhost]# systemctl status network.service network.service - LSB: Bring up/down networking Loaded: loaded (/etc/rc.d/init.d/network; bad; vendor preset: disabled) Active: active (exited) since Sat 2017-01-14 04:43:48 EST; 1min 31s ago Docs: man:systemd-sysv-generator(8) Process: 923 ExecStart = /etc/rc.d/init.d/network start (code=exited, status = 0/SUCCESS) localhost.localdomain systemd[1]: Starting LSB: Bring up/down networking... localhost.localdomain network[923]: Bringing up loopback interface: [ OK ] localhost.localdomain systemd[1]: Started LSB: Bring up/down networking. [root@localhost]#

This shows us the current status of the networking service. If we want to see all the services related to networking, we can use −

[root@localhost]# systemctl --all -t service | grep -i network network.service loaded active exited LSB: Bring up/ NetworkManager-wait-online.service loaded active exited Network Manager NetworkManager.service loaded active running Network Manager ntpd.service loaded inactive dead Network Time rhel-import-state.service loaded active exited Import network [root@localhost]#

For those familiar with the SysV init method of managing services, it is important to make the transition to systemd. systemd is the new way of starting and stopping daemon services in Linux.

Linux Admin - Resource Mgmt with systemctl

systemctl is the utility used to control systemd. systemctl provides CentOS administrators with the ability to perform a multitude of operations on systemd including −

- Configure systemd units

- Get status of systemd units

- Start and stop services

- Enable / disable systemd services at boot, etc.

The command syntax for systemctl is pretty basic, but can get tangled with switches and options. We will present the most essential functions of systemctl needed for administering CentOS Linux.

Basic systemctl syntax: systemctl [OPTIONS] COMMAND [NAME]

Following are the common commands used with systemctl −

- start

- stop

- restart

- reload

- status

- is-active

- list-units

- enable

- disable

- cat

- show

We have already discussed start, stop, reload, restart, enable and disable with systemctl. So let's go over the remaining commonly used commands.

status

In its simplest form, the status command can be used to see the system status as a whole −

[root@localhost rdc]# systemctl status

localhost.localdomain

State: running

Jobs: 0 queued

Failed: 0 units

Since: Thu 2017-01-19 19:14:37 EST; 4h 5min ago

CGroup: /

1 /usr/lib/systemd/systemd --switched-root --system --deserialize 21

user.slice

user-1002.slice

session-1.scope

2869 gdm-session-worker [pam/gdm-password]

2881 /usr/bin/gnome-keyring-daemon --daemonize --login

2888 gnome-session --session gnome-classic

2895 dbus-launch --sh-syntax --exit-with-session

The above output has been condensed. In the real world, systemctl status will output about 100 lines of process statuses in tree form.

Let's say we want to check the status of our firewall service −

[root@localhost rdc]# systemctl status firewalld

firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; vendor preset: enabled)

Active: active (running) since Thu 2017-01-19 19:14:55 EST; 4h 12min ago

Docs: man:firewalld(1)

Main PID: 825 (firewalld)

CGroup: /system.slice/firewalld.service

825 /usr/bin/python -Es /usr/sbin/firewalld --nofork --nopid

As you see, our firewall service is currently active and has been for over 4 hours.

list-units

The list-units command allows us to list all the units of a certain type. Let's check for sockets managed by systemd −

[root@localhost]# systemctl list-units --type=socket UNIT LOAD ACTIVE SUB DESCRIPTION avahi-daemon.socket loaded active running Avahi mDNS/DNS-SD Stack Activation Socket cups.socket loaded active running CUPS Printing Service Sockets dbus.socket loaded active running D-Bus System Message Bus Socket dm-event.socket loaded active listening Device-mapper event daemon FIFOs iscsid.socket loaded active listening Open-iSCSI iscsid Socket iscsiuio.socket loaded active listening Open-iSCSI iscsiuio Socket lvm2-lvmetad.socket loaded active running LVM2 metadata daemon socket lvm2-lvmpolld.socket loaded active listening LVM2 poll daemon socket rpcbind.socket loaded active listening RPCbind Server Activation Socket systemd-initctl.socket loaded active listening /dev/initctl Compatibility Named Pipe systemd-journald.socket loaded active running Journal Socket systemd-shutdownd.socket loaded active listening Delayed Shutdown Socket systemd-udevd-control.socket loaded active running udev Control Socket systemd-udevd-kernel.socket loaded active running udev Kernel Socket virtlockd.socket loaded active listening Virtual machine lock manager socket virtlogd.socket loaded active listening Virtual machine log manager socket

Now let's check the currently running services −

[root@localhost rdc]# systemctl list-units --type=service UNIT LOAD ACTIVE SUB DESCRIPTION abrt-ccpp.service loaded active exited Install ABRT coredump hook abrt-oops.service loaded active running ABRT kernel log watcher abrt-xorg.service loaded active running ABRT Xorg log watcher abrtd.service loaded active running ABRT Automated Bug Reporting Tool accounts-daemon.service loaded active running Accounts Service alsa-state.service loaded active running Manage Sound Card State (restore and store) atd.service loaded active running Job spooling tools auditd.service loaded active running Security Auditing Service

is-active

The is-active command is an example of systemctl commands designed to return the status information of a unit.

[root@localhost rdc]# systemctl is-active ksm.service

active
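Because is-active also reports its result through the exit status, it works well as a condition in scripts. The following is a minimal sketch under stated assumptions: ensure_running is a hypothetical helper name, and the SYSTEMCTL variable exists only so the logic can be exercised without a running systemd. The --quiet switch suppresses the printed state.

```shell
#!/bin/bash
# SYSTEMCTL can be overridden for dry runs; this is a convention of this sketch.
SYSTEMCTL=${SYSTEMCTL:-systemctl}

# Hypothetical helper: start a unit only when it is not already active.
# `is-active --quiet` prints nothing and returns 0 only for an active unit.
ensure_running() {
    local unit=$1
    if $SYSTEMCTL is-active --quiet "$unit"; then
        echo "$unit is already active"
    else
        echo "$unit is not active, starting it"
        $SYSTEMCTL start "$unit"
    fi
}

# Example on a systemd host (as root):
#   ensure_running firewalld.service
```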

cat

cat is one of the less frequently used commands. Instead of using cat at the shell and typing the path to a unit file, simply use systemctl cat.

[root@localhost]# systemctl cat firewalld

# /usr/lib/systemd/system/firewalld.service

[Unit]

Description=firewalld - dynamic firewall daemon

Before=network.target

Before=libvirtd.service

Before=NetworkManager.service

After=dbus.service

After=polkit.service

Conflicts=iptables.service ip6tables.service ebtables.service ipset.service

Documentation=man:firewalld(1)

[Service]

EnvironmentFile=-/etc/sysconfig/firewalld

ExecStart=/usr/sbin/firewalld --nofork --nopid $FIREWALLD_ARGS

ExecReload=/bin/kill -HUP $MAINPID

# supress to log debug and error output also to /var/log/messages

StandardOutput=null

StandardError=null

Type=dbus

BusName=org.fedoraproject.FirewallD1

[Install]

WantedBy=basic.target

Alias=dbus-org.fedoraproject.FirewallD1.service

[root@localhost]#

Now that we have explored both systemd and systemctl in more detail, let's use them to manage the resources in cgroups or control groups.

Linux Admin - Resource Mgmt with cgroups

cgroups, or Control Groups, are a feature of the Linux kernel that allows an administrator to allocate or cap system resources for services and for groups of processes.

To list active control groups running, we can use the following ps command −

[root@localhost]# ps xawf -eo pid,user,cgroup,args

8362 root - \_ [kworker/1:2]

1 root - /usr/lib/systemd/systemd --switched-root --system --deserialize 21

507 root 7:cpuacct,cpu:/system.slice /usr/lib/systemd/systemd-journald

527 root 7:cpuacct,cpu:/system.slice /usr/sbin/lvmetad -f

540 root 7:cpuacct,cpu:/system.slice /usr/lib/systemd/systemd-udevd

715 root 7:cpuacct,cpu:/system.slice /sbin/auditd -n

731 root 7:cpuacct,cpu:/system.slice \_ /sbin/audispd

734 root 7:cpuacct,cpu:/system.slice \_ /usr/sbin/sedispatch

737 polkitd 7:cpuacct,cpu:/system.slice /usr/lib/polkit-1/polkitd --no-debug

738 rtkit 6:memory:/system.slice/rtki /usr/libexec/rtkit-daemon

740 dbus 7:cpuacct,cpu:/system.slice /bin/dbus-daemon --system --address=systemd: --nofork --nopidfile --systemd-activation

Resource management, as of CentOS 7, has been redefined with the systemd init implementation. When thinking about resource management for services, the main thing to focus on is cgroups. cgroups have advanced with systemd in both functionality and simplicity.

The goal of cgroups in resource management is that no one service can take the system, as a whole, down. Put another way, no single service process (perhaps a poorly written PHP script) should cripple the server by consuming too many resources.

cgroups allow resource control of units for the following resources −

CPU − Limit CPU-intensive tasks that are less critical than other, less intensive tasks

Memory − Limit how much memory a service can consume

Disks − Limit disk I/O

CPU Time

Tasks needing less CPU priority can be given custom-configured CPU shares.

Let's take a look at the following two services for example.

Polite CPU Service 1

[root@localhost]# systemctl cat polite.service

# /etc/systemd/system/polite.service

[Unit]

Description = Polite service limits CPU Slice and Memory

After=remote-fs.target nss-lookup.target

[Service]

MemoryLimit = 1M

ExecStart = /usr/bin/sha1sum /dev/zero

ExecStop = /bin/kill -WINCH ${MAINPID}

[Install]

WantedBy=multi-user.target

# /etc/systemd/system/polite.service.d/50-CPUShares.conf

[Service]

CPUShares = 1024

[root@localhost]#

Evil CPU Service 2

[root@localhost]# systemctl cat evil.service

# /etc/systemd/system/evil.service

[Unit]

Description = I Eat You CPU

After=remote-fs.target nss-lookup.target

[Service]

ExecStart = /usr/bin/md5sum /dev/zero

ExecStop = /bin/kill -WINCH ${MAINPID}

[Install]

WantedBy=multi-user.target

# /etc/systemd/system/evil.service.d/50-CPUShares.conf

[Service]

CPUShares = 1024

[root@localhost]#

Let's give the polite service a lower CPU priority −

systemctl set-property polite.service CPUShares=20

Per-cgroup usage afterwards, in the column layout printed by systemd-cgtop (path, tasks, %CPU, memory, input/s, output/s) −

/system.slice/polite.service 1 70.5 124.0K - -

/system.slice/evil.service 1 99.5 304.0K - -

As we can see, over a period of otherwise idle system time, both rogue processes are still using CPU cycles. However, the one given fewer CPU shares is using less CPU time. With this in mind, we can see how a smaller share for non-essential tasks gives essential tasks better access to system resources.

To limit a service for each resource, the set-property command accepts the following parameters −

systemctl set-property name parameter=value

| Resource | Parameter |

|---|---|

| CPU Shares | CPUShares |

| Memory Limit | MemoryLimit |

| Soft Memory Limit | MemorySoftLimit |

| Block IO Weight | BlockIOWeight |

| Block Device Limit (specified in /volume/path) | BlockIODeviceWeight |

| Disk Read IO | BlockIOReadBandwidth |

| Disk Write IO | BlockIOWriteBandwidth |

Most often services will be limited by CPU use, Memory limits and Read / Write IO.

set-property applies a change at runtime and saves it for future boots. To be sure a service picks up every changed setting, reload systemd and restart the service −

systemctl set-property foo.service CPUShares=250

systemctl daemon-reload

systemctl restart foo.service

Configure CGroups in CentOS Linux

To make custom cgroups in CentOS Linux, we first need to install the required services and configure them.

Step 1 − Install libcgroup (if not already installed).

[root@localhost]# yum install libcgroup

Package libcgroup-0.41-11.el7.x86_64 already installed and latest version

Nothing to do

[root@localhost]#

As we can see, by default CentOS 7 has libcgroup installed with the everything installer. Using a minimal installer will require us to install the libcgroup utilities along with any dependencies.

Step 2 − Start and enable the cgconfig service.

[root@localhost]# systemctl enable cgconfig Created symlink from /etc/systemd/system/sysinit.target.wants/cgconfig.service to /usr/lib/systemd/system/cgconfig.service. [root@localhost]# systemctl start cgconfig [root@localhost]# systemctl status cgconfig cgconfig.service - Control Group configuration service Loaded: loaded (/usr/lib/systemd/system/cgconfig.service; enabled; vendor preset: disabled) Active: active (exited) since Mon 2017-01-23 02:51:42 EST; 1min 21s ago Main PID: 4692 (code=exited, status = 0/SUCCESS) Memory: 0B CGroup: /system.slice/cgconfig.service Jan 23 02:51:42 localhost.localdomain systemd[1]: Starting Control Group configuration service... Jan 23 02:51:42 localhost.localdomain systemd[1]: Started Control Group configuration service. [root@localhost]#

Linux Admin - Process Management

Following are the common commands used with process management − bg, fg, nohup, ps, pstree, top, kill, killall, free, uptime, nice.

Work with Processes

Quick Note: Process PID in Linux

In Linux every running process is given a PID or Process ID Number. This PID is how CentOS identifies a particular process. As we have discussed, systemd is the first process started and given a PID of 1 in CentOS.

pgrep is used to get the Linux PID for a given process name.

[root@CentOS]# pgrep systemd

1

[root@CentOS]#

As seen, the pgrep command returns the current PID of systemd.

Basic Process and Job Management in CentOS

When working with processes in Linux, it is important to know how basic foregrounding and backgrounding of processes is performed at the command line.

fg − Brings the process to the foreground

bg − Resumes a stopped process in the background

jobs − Lists the current jobs attached to the shell

ctrl+z − Control + z key combination to suspend the current foreground process

& − Starts the process in the background

Let's start with the shell command sleep. sleep does as it is named: it sleeps for a defined period of time.

[root@CentOS ~]$ jobs

[root@CentOS ~]$ sleep 10 &

[1] 12454

[root@CentOS ~]$ sleep 20 &

[2] 12479

[root@CentOS ~]$ jobs

[1]- Running sleep 10 &

[2]+ Running sleep 20 &

[root@CentOS ~]$

Now, let's bring the first job to the foreground −

[root@CentOS ~]$ fg 1

sleep 10

If you are following along, you'll notice the foreground job is stuck in your shell. Now, let's put the process to sleep, then re-enable it in the background.

- Hit control+z

- Type bg 1, which sends the first job into the background and resumes it.

[root@CentOS ~]$ fg 1

sleep 20

^Z

[1]+ Stopped sleep 20

[root@CentOS ~]$ bg 1

[1]+ sleep 20 &

[root@CentOS ~]$
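Inside a script there is no keyboard for ctrl+z or fg, but the & operator works the same way and pairs naturally with the shell variable $! and the wait builtin. The following short sketch (with variable names chosen for this example) starts two background jobs and then collects each one.

```shell
#!/bin/bash
# Start two jobs in the background; $! holds the PID of the most recent one
sleep 1 &
first_pid=$!
sleep 2 &
second_pid=$!
echo "started background jobs with PIDs $first_pid and $second_pid"

# wait blocks until the given job exits and returns that job's exit status
wait "$first_pid"
echo "first job exit status: $?"
wait "$second_pid"
echo "second job exit status: $?"
```

Waiting on specific PIDs, rather than calling wait with no arguments, makes it possible to check the exit status of each job separately.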

nohup

When working from a shell or terminal, it is worth noting that by default all the processes and jobs attached to the shell will terminate when the shell is closed or the user logs out. When using nohup the process will continue to run if the user logs out or closes the shell to which the process is attached.

[root@CentOS]# nohup ping www.google.com &

[1] 27299

nohup: ignoring input and appending output to nohup.out

[root@CentOS]# pgrep ping

27299

[root@CentOS]# kill -KILL `pgrep ping`

[1]+ Killed nohup ping www.google.com

[root@CentOS rdc]# cat nohup.out

PING www.google.com (216.58.193.68) 56(84) bytes of data.

64 bytes from sea15s07-in-f4.1e100.net (216.58.193.68): icmp_seq=1 ttl=128 time=51.6 ms

64 bytes from sea15s07-in-f4.1e100.net (216.58.193.68): icmp_seq=2 ttl=128 time=54.2 ms

64 bytes from sea15s07-in-f4.1e100.net (216.58.193.68): icmp_seq=3 ttl=128 time=52.7 ms

ps Command

The ps command is commonly used by administrators to investigate snapshots of a specific process. ps is commonly used with grep to filter out a specific process to analyze.

[root@CentOS ~]$ ps axw | grep python

762 ? Ssl 0:01 /usr/bin/python -Es /usr/sbin/firewalld --nofork --nopid

1296 ? Ssl 0:00 /usr/bin/python -Es /usr/sbin/tuned -l -P

15550 pts/0 S+ 0:00 grep --color=auto python

In the above output, we see all the processes using the python interpreter. Also included in the results was our grep command, looking for the string python.
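A common way to keep grep from matching its own command line is to wrap the first character of the search term in brackets: the pattern [p]ython still matches "python", but the grep process itself now appears in the process list as "[p]ython", which the pattern does not match. The sketch below wraps this in a hypothetical helper, match_process, and assumes the name starts with an ordinary letter or digit.

```shell
#!/bin/bash
# Hypothetical helper: filter ps-style lines on stdin for a process name
# without matching the grep command that does the filtering.
match_process() {
    local name=$1
    # Turn "python" into the pattern "[p]ython"
    local pattern="[${name:0:1}]${name:1}"
    grep -- "$pattern"
}

# Typical use:
#   ps axw | match_process python
```

pgrep, shown earlier, avoids the problem altogether because it searches the process table directly.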

Following are the most common command line switches used with ps.

| Switch | Action |

|---|---|

| a | Lifts the restriction to processes owned by the current user |

| x | Shows processes not attached to a tty or shell |

| w | Uses wide output |

| e | Shows environment after the command |

| -e | Selects all processes |

| -o | User-defined formatted output |

| -u | Shows all processes by a specific user |

| -C | Shows all processes by command name |

| --sort | Sorts the processes by the specified key |

To see all processes in use by the nobody user −

[root@CentOS ~]$ ps -u nobody

PID TTY TIME CMD

1853 ? 00:00:00 dnsmasq

[root@CentOS ~]$

To see all information about the firewalld process −

[root@CentOS ~]$ ps -wl -C firewalld

F S UID PID PPID C PRI NI ADDR SZ WCHAN TTY TIME CMD

0 S 0 762 1 0 80 0 - 81786 poll_s ? 00:00:01 firewalld

[root@CentOS ~]$

Let's see which processes are consuming the most memory −

[root@CentOS ~]$ ps aux --sort=-pmem | head -10 USER PID %CPU %MEM VSZ RSS TTY STAT START TIME COMMAND cnetos 6130 0.7 5.7 1344512 108364 ? Sl 02:16 0:29 /usr/bin/gnome-shell cnetos 6449 0.0 3.4 1375872 64440 ? Sl 02:16 0:00 /usr/libexec/evolution-calendar-factory root 5404 0.6 2.1 190256 39920 tty1 Ssl+ 02:15 0:27 /usr/bin/Xorg :0 -background none -noreset -audit 4 -verbose -auth /run/gdm/auth-for-gdm-iDefCt/database -seat seat0 -nolisten tcp vt1 cnetos 6296 0.0 1.7 1081944 32136 ? Sl 02:16 0:00 /usr/libexec/evolution/3.12/evolution-alarm-notify cnetos 6350 0.0 1.5 560728 29844 ? Sl 02:16 0:01 /usr/bin/prlsga cnetos 6158 0.0 1.4 1026956 28004 ? Sl 02:16 0:00 /usr/libexec/gnome-shell-calendar-server cnetos 6169 0.0 1.4 1120028 27576 ? Sl 02:16 0:00 /usr/libexec/evolution-source-registry root 762 0.0 1.4 327144 26724 ? Ssl 02:09 0:01 /usr/bin/python -Es /usr/sbin/firewalld --nofork --nopid cnetos 6026 0.0 1.4 1090832 26376 ? Sl 02:16 0:00 /usr/libexec/gnome-settings-daemon [root@CentOS ~]$

See all the processes owned by user centos, with custom-formatted output −

[cnetos@CentOS ~]$ ps -u cnetos -o pid,uname,comm PID USER COMMAND 5802 centos gnome-keyring-d 5812 cnetos gnome-session 5819 cnetos dbus-launch 5820 cnetos dbus-daemon 5888 cnetos gvfsd 5893 cnetos gvfsd-fuse 5980 cnetos ssh-agent 5996 cnetos at-spi-bus-laun

pstree Command

pstree is similar to ps but is used less often. It displays the processes in a neater tree fashion.

[centos@CentOS ~]$ pstree

systemd─┬─ModemManager───2*[{ModemManager}]
        ├─NetworkManager─┬─dhclient
        │                └─2*[{NetworkManager}]
        ├─2*[abrt-watch-log]
        ├─abrtd
        ├─accounts-daemon───2*[{accounts-daemon}]
        ├─alsactl
        ├─at-spi-bus-laun─┬─dbus-daemon───{dbus-daemon}
        │                 └─3*[{at-spi-bus-laun}]
        ├─at-spi2-registr───2*[{at-spi2-registr}]
        ├─atd
        ├─auditd─┬─audispd─┬─sedispatch
        │        │         └─{audispd}
        │        └─{auditd}
        ├─avahi-daemon───avahi-daemon
        ├─caribou───2*[{caribou}]
        ├─cgrulesengd
        ├─chronyd
        ├─colord───2*[{colord}]
        ├─crond
        ├─cupsd

The total output from pstree can exceed 100 lines. Usually, ps will give more useful information.

top Command

top is one of the most often used commands when troubleshooting performance issues in Linux. It is useful for real-time stats and process monitoring in Linux. Following is the default output of top when brought up from the command line.

Tasks: 170 total, 1 running, 169 sleeping, 0 stopped, 0 zombie %Cpu(s): 2.3 us, 2.0 sy, 0.0 ni, 95.7 id, 0.0 wa, 0.0 hi, 0.0 si, 0.0 st KiB Mem : 1879668 total, 177020 free, 607544 used, 1095104 buff/cache KiB Swap: 3145724 total, 3145428 free, 296 used. 1034648 avail Mem PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND 5404 root 20 0 197832 48024 6744 S 1.3 2.6 1:13.22 Xorg 8013 centos 20 0 555316 23104 13140 S 1.0 1.2 0:14.89 gnome-terminal- 6339 centos 20 0 332336 6016 3248 S 0.3 0.3 0:23.71 prlcc 6351 centos 20 0 21044 1532 1292 S 0.3 0.1 0:02.66 prlshprof

Common hot keys used while running top (hot keys are accessed by pressing the key as top is running in your shell).

| Command | Action |

|---|---|

| b | Enables / disables bold highlighting on top menu |

| z | Cycles the color scheme |

| l | Cycles the load average heading |

| m | Cycles the memory and swap summary heading |

| t | Task information heading |

| h | Help menu |

| Shift+F | Customizes sorting and display fields |

Following are the common command line switches for top.

| Switch | Action |

|---|---|

| -o | Sorts by column (prepend + to sort high to low, or - to sort low to high) |

| -u | Shows only processes from a specified user |

| -d | Sets the delay between screen updates |

| -O | Prints the list of columns on which top can sort |

The sorting options screen in top is presented using Shift+F. This screen allows customization of top display and sort options.

Fields Management for window 1:Def, whose current sort field is %MEM Navigate with Up/Dn, Right selects for move then <Enter> or Left commits, 'd' or <Space> toggles display, 's' sets sort. Use 'q' or <Esc> to end! * PID = Process Id TGID = Thread Group Id * USER = Effective User Name ENVIRON = Environment vars * PR = Priority vMj = Major Faults delta * NI = Nice Value vMn = Minor Faults delta * VIRT = Virtual Image (KiB) USED = Res+Swap Size (KiB) * RES = Resident Size (KiB) nsIPC = IPC namespace Inode * SHR = Shared Memory (KiB) nsMNT = MNT namespace Inode * S = Process Status nsNET = NET namespace Inode * %CPU = CPU Usage nsPID = PID namespace Inode * %MEM = Memory Usage (RES) nsUSER = USER namespace Inode * TIME+ = CPU Time, hundredths nsUTS = UTS namespace Inode * COMMAND = Command Name/Line PPID = Parent Process pid UID = Effective User Id

top, showing the processes for user rdc and sorted by memory usage −

PID USER %MEM PR NI VIRT RES SHR S %CPU TIME+ COMMAND 6130 rdc 6.2 20 0 1349592 117160 33232 S 0.0 1:09.34 gnome-shell 6449 rdc 3.4 20 0 1375872 64428 21400 S 0.0 0:00.43 evolution-calen 6296 rdc 1.7 20 0 1081944 32140 22596 S 0.0 0:00.40 evolution-alarm 6350 rdc 1.6 20 0 560728 29844 4256 S 0.0 0:10.16 prlsga 6281 rdc 1.5 20 0 1027176 28808 17680 S 0.0 0:00.78 nautilus 6158 rdc 1.5 20 0 1026956 28004 19072 S 0.0 0:00.20 gnome-shell-cal

Showing valid top fields (condensed) −

[centos@CentOS ~]$ top -O PID PPID UID USER RUID RUSER SUID SUSER GID GROUP PGRP TTY TPGID

kill Command

The kill command is used to kill a process from the command shell via its PID. When killing a process, we need to specify a signal to send. The signal lets the kernel know how we want to end the process. The most commonly used signals are −

SIGTERM − The default signal sent by kill. It lets a process know it should stop as soon as it is safe to do so, giving the process an opportunity to exit gracefully and perform safe exit operations.

SIGHUP − Most daemons will restart or reload their configuration when sent SIGHUP. It is often sent to processes after changes have been made to a configuration file.

SIGKILL − SIGTERM is the equivalent of asking a process to shut down, so the kernel needs an option to end a process that will not comply. When a process is hung, SIGKILL is used to shut it down forcibly; a process cannot catch or ignore it.
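In practice these signals are often used in sequence: ask politely with SIGTERM first, and fall back to SIGKILL only if the process is still alive after a grace period. The following is a minimal sketch of that pattern; stop_process is a hypothetical helper name and the 5-second default timeout is an arbitrary choice for the example.

```shell
#!/bin/bash
# Hypothetical helper: ask a process to exit with SIGTERM, then fall back to
# SIGKILL if it is still alive after TIMEOUT seconds (default 5).
stop_process() {
    local pid=$1 timeout=${2:-5}
    # If the signal cannot be delivered, the process is already gone
    kill -TERM "$pid" 2>/dev/null || return 0
    local waited=0
    # kill -0 sends no signal; it only tests whether the PID can still be signaled
    while kill -0 "$pid" 2>/dev/null; do
        if [ "$waited" -ge "$timeout" ]; then
            echo "PID $pid did not exit after SIGTERM, sending SIGKILL"
            kill -KILL "$pid" 2>/dev/null
            return 0
        fi
        sleep 1
        waited=$(( waited + 1 ))
    done
    echo "PID $pid exited after SIGTERM"
}

# Typical use:
#   stop_process "$(pgrep -o ping)" 10
```

Giving the process time to react to SIGTERM lets it flush files and release locks, which SIGKILL never allows.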

For a list of all signals that can be sent with kill, the -l option can be used −

[root@CentOS]# kill -l 1) SIGHUP 2) SIGINT 3) SIGQUIT 4) SIGILL 5) SIGTRAP 6) SIGABRT 7) SIGBUS 8) SIGFPE 9) SIGKILL 10) SIGUSR1 11) SIGSEGV 12) SIGUSR2 13) SIGPIPE 14) SIGALRM 15) SIGTERM 16) SIGSTKFLT 17) SIGCHLD 18) SIGCONT 19) SIGSTOP 20) SIGTSTP 21) SIGTTIN 22) SIGTTOU 23) SIGURG 24) SIGXCPU 25) SIGXFSZ 26) SIGVTALRM 27) SIGPROF 28) SIGWINCH 29) SIGIO 30) SIGPWR 31) SIGSYS 34) SIGRTMIN 35) SIGRTMIN+1 36) SIGRTMIN+2 37) SIGRTMIN+3 38) SIGRTMIN+4 39) SIGRTMIN+5 40) SIGRTMIN+6 41) SIGRTMIN+7 42) SIGRTMIN+8 43) SIGRTMIN+9 44) SIGRTMIN+10 45) SIGRTMIN+11 46) SIGRTMIN+12 47) SIGRTMIN+13 48) SIGRTMIN+14 49) SIGRTMIN+15 50) SIGRTMAX-14 51) SIGRTMAX-13 52) SIGRTMAX-12 53) SIGRTMAX-11 54) SIGRTMAX-10 55) SIGRTMAX-9 56) SIGRTMAX-8 57) SIGRTMAX-7 58) SIGRTMAX-6 59) SIGRTMAX-5 60) SIGRTMAX-4 61) SIGRTMAX-3 62) SIGRTMAX-2 63) SIGRTMAX-1 64) SIGRTMAX [root@CentOS rdc]#

Using SIGHUP to make systemd (PID 1) reload its configuration −

[root@CentOS]# pgrep systemd

1 464 500 643 15071

[root@CentOS]# kill -HUP 1

[root@CentOS]# pgrep systemd

1 464 500 643 15196 15197 15198

[root@CentOS]#

pkill will allow the administrator to send a kill signal by the process name.

[root@CentOS]# pgrep ping

19450

[root@CentOS]# pkill -9 ping

[root@CentOS]# pgrep ping

[root@CentOS]#

killall will kill all the processes matching a given name. Be careful using killall as root, as it will kill the matching processes of all users.

[root@CentOS]# killall chrome

free Command

free is a pretty simple command often used to quickly check the memory of a system. It displays the total amount of free and used physical and swap memory.

[root@CentOS]# free

total used free shared buff/cache available

Mem: 1879668 526284 699796 10304 653588 1141412

Swap: 3145724 0 3145724

[root@CentOS]#

nice Command

nice allows an administrator to set the scheduling priority of a process in terms of CPU usage. The niceness is basically how the kernel will schedule CPU time slices for a process or job. By default, it is assumed the process is given equal access to CPU resources.

First, let's use top to check the niceness of the currently running processes.

PID USER PR NI VIRT RES SHR S %CPU %MEM TIME+ COMMAND 28 root 39 19 0 0 0 S 0.0 0.0 0:00.17 khugepaged 690 root 39 19 16808 1396 1164 S 0.0 0.1 0:00.01 alsactl] 9598 rdc 39 19 980596 21904 10284 S 0.0 1.2 0:00.27 tracker-extract 9599 rdc 39 19 469876 9608 6980 S 0.0 0.5 0:00.04 tracker-miner-a 9609 rdc 39 19 636528 13172 8044 S 0.0 0.7 0:00.12 tracker-miner-f 9611 rdc 39 19 469620 8984 6496 S 0.0 0.5 0:00.02 tracker-miner-u 27 root 25 5 0 0 0 S 0.0 0.0 0:00.00 ksmd 637 rtkit 21 1 164648 1276 1068 S 0.0 0.1 0:00.11 rtkit-daemon 1 root 20 0 128096 6712 3964 S 0.3 0.4 0:03.57 systemd 2 root 20 0 0 0 0 S 0.0 0.0 0:00.01 kthreadd 3 root 20 0 0 0 0 S 0.0 0.0 0:00.50 ksoftirqd/0 7 root 20 0 0 0 0 S 0.0 0.0 0:00.00 migration/0 8 root 20 0 0 0 0 S 0.0 0.0 0:00.00 rcu_bh 9 root 20 0 0 0 0 S 0.0 0.0 0:02.07 rcu_sched

We want to focus on the NICE column, labeled NI. The niceness can range from -20 to 19, where -20 represents the highest priority.

nohup nice -n -20 ping www.google.com &
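A quick way to observe the effect without starting a long-running job is to let nice report on itself: run with no command, nice prints the niceness it inherited. The sketch below uses only that behavior; the variable names are chosen for this example. Note that lowering niceness (a negative adjustment) requires root, while raising it does not.

```shell
#!/bin/bash
# nice with no command prints the niceness of the current shell
base=$(nice)

# A command started through `nice -n 10` runs 10 levels nicer than its
# parent (the kernel caps the value at 19)
lowered=$(nice -n 10 nice)

echo "shell niceness:      $base"
echo "niceness under nice: $lowered"
```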

renice

renice allows us to change the current priority of a process that is already running.

renice 17 -p 30727

The above command lowers the priority of our ping process by raising its niceness to 17.

Linux Admin - Firewall Setup

firewalld is the default front-end controller for iptables on CentOS. The firewalld front-end has two main advantages over raw iptables −

Uses easy-to-configure and implement zones abstracting chains and rules.

Rulesets are dynamic, meaning stateful connections are uninterrupted when the settings are changed and/or modified.

Remember, firewalld is a wrapper for iptables, not a replacement. While custom iptables commands can be used with firewalld, it is recommended to use firewalld so as not to break the firewall functionality.

First, let's make sure firewalld is both started and enabled.

[root@CentOS rdc]# systemctl status firewalld

firewalld.service - firewalld - dynamic firewall daemon

Loaded: loaded (/usr/lib/systemd/system/firewalld.service; enabled; vendor preset: enabled)

Active: active (running) since Thu 2017-01-26 21:42:05 MST; 3h 46min ago

Docs: man:firewalld(1)

Main PID: 712 (firewalld)

Memory: 34.7M

CGroup: /system.slice/firewalld.service

712 /usr/bin/python -Es /usr/sbin/firewalld --nofork --nopid

We can see that firewalld is both enabled (to start on boot) and currently active (running). If it is disabled or not started, we can use −

systemctl start firewalld && systemctl enable firewalld

Now that we have our firewalld service configured, let's make sure it is operational.

[root@CentOS]# firewall-cmd --state

running

[root@CentOS]#

We can see that the firewalld service is fully functional.

firewalld works on the concept of zones. A zone is applied to network interfaces through NetworkManager. We will discuss this in configuring networking. For now, note that changing the default zone also changes the zone of any network adapter left in the default state of "Default Zone".

Let's take a quick look at each zone that comes out-of-the-box with firewalld.

| Sr.No. | Zone & Description |

|---|---|

| 1 | drop Low trust level. All incoming connections and packets are dropped and only outgoing connections are possible via statefulness |

| 2 | block Incoming connections are replied with an icmp message letting the initiator know the request is prohibited |

| 3 | public All networks are restricted. However, selected incoming connections can be explicitly allowed |

| 4 | external Configures firewalld for NAT. Internal network remains private but reachable |

| 5 | dmz Only certain incoming connections are allowed. Used for systems in DMZ isolation |

| 6 | work By default, trust more computers on the network assuming the system is in a secured work environment |

| 7 | hone By default, more services are unfiltered. Assuming a system is on a home network where services such as NFS, SAMBA and SSDP will be used |

| 8 | trusted All machines on the network are trusted. Most incoming connections are allowed unfettered. This is not meant for interfaces exposed to the Internet |

The most common zones to use are: public, drop, work, and home.

Some scenarios where each common zone would be used are −

public − The zone most commonly used by administrators. It lets you apply custom settings while abiding by RFC specifications for operations on a LAN.

drop − A good example of when to use drop is at a security conference, on public WiFi, or on an interface connected directly to the Internet. drop assumes all unsolicited requests are malicious including ICMP probes. So any request out of state will not receive a reply. The downside of drop is that it can break the functionality of applications in certain situations requiring strict RFC compliance.

work − You are on a semi-secure corporate LAN where all traffic can be assumed moderately safe. This means it is not WiFi, and IDS, IPS, physical security, or 802.1x are possibly in place. You should also be familiar with the people using the LAN.

home − You are on a home LAN. You are personally accountable for every system and user on the LAN. You know every machine on the LAN and that none have been compromised. Often new services are brought up for media sharing amongst trusted individuals, and you don't need to take extra time for the sake of security.

Zones and network interfaces have a one-to-many relationship. A network interface can have only a single zone applied to it at a time, while a zone can be applied to many interfaces simultaneously.

Let's see what zones are available and which zone is currently applied.
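
To see the zone-to-interface mapping on a running host, the output of `firewall-cmd --get-active-zones` can be flattened into one line per interface. The sketch below assumes the usual layout of that output (a zone name on an unindented line, followed by an indented `interfaces:` line); `iface_zone_pairs` is a hypothetical helper name.

```shell
# iface_zone_pairs: read `firewall-cmd --get-active-zones` output on stdin
# and print one "interface zone" pair per line.
# iface_zone_pairs is a hypothetical helper name.
iface_zone_pairs() {
    awk '
        /^[^ \t]/ { zone = $1; next }        # unindented line: a zone name
        $1 == "interfaces:" {                # indented line: its interfaces
            for (i = 2; i <= NF; i++) print $i, zone
        }'
}

# Usage (as root):
#   firewall-cmd --get-active-zones | iface_zone_pairs
```

Each interface appears on exactly one line, while the same zone name may appear on several lines.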

[root@CentOS]# firewall-cmd --get-zones

work drop internal external trusted home dmz public block

[root@CentOS]# firewall-cmd --get-default-zone

public

[root@CentOS]#

Ready to add some customized rules in firewalld?

First, let's see what our box looks like to a port scanner from outside.

bash-3.2# nmap -sS -p 1-1024 -T 5 10.211.55.1

Starting Nmap 7.30 ( https://nmap.org ) at 2017-01-27 23:36 MST

Nmap scan report for centos.shared (10.211.55.1)

Host is up (0.00046s latency).

Not shown: 1023 filtered ports

PORT STATE SERVICE

22/tcp open ssh

Nmap done: 1 IP address (1 host up) scanned in 3.71 seconds

bash-3.2#

Let's allow the incoming requests to port 80.

First, check to see what zone is applied as default.

[root@CentOS]# firewall-cmd --get-default-zone

public

[root@CentOS]#

Then, set the rule allowing port 80 to the current default zone.

[root@CentOS]# firewall-cmd --zone=public --add-port=80/tcp

success

[root@CentOS]#

Now, let's check our box after allowing port 80 connections.

bash-3.2# nmap -sS -p 1-1024 -T 5 10.211.55.1

Starting Nmap 7.30 ( https://nmap.org ) at 2017-01-27 23:42 MST

Nmap scan report for centos.shared (10.211.55.1)

Host is up (0.00053s latency).

Not shown: 1022 filtered ports

PORT STATE SERVICE

22/tcp open ssh

80/tcp closed http

Nmap done: 1 IP address (1 host up) scanned in 3.67 seconds

bash-3.2#

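The firewall now allows unsolicited traffic to port 80. nmap reports the port as closed rather than open because no service is listening on it yet.

Let's set the default zone to drop and see what happens to the port scan.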
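
When comparing scans before and after a rule change, it helps to pull out the state nmap reports for a single port: filtered means the probe got no answer, closed means the host answered but nothing is listening, and open means a service accepted the connection. A minimal sketch follows; `nmap_port_state` is a hypothetical helper name that simply reads saved nmap output.

```shell
# nmap_port_state PORT/PROTO: read nmap output on stdin and print the
# state reported for that port (for example open, closed, filtered).
# nmap_port_state is a hypothetical helper name.
nmap_port_state() {
    awk -v want="$1" '$1 == want { print $2 }'
}

# Usage (from the scanning machine):
#   nmap -sS -p 1-1024 10.211.55.1 | nmap_port_state 80/tcp
```

A port hidden in nmap's "Not shown" summary produces no output, so an empty result here means the port was not listed individually.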

[root@CentOS]# firewall-cmd --set-default-zone=drop

success

[root@CentOS]# firewall-cmd --get-default-zone

drop

[root@CentOS]#

Now let's scan the host with the network interface in a more secure zone.

bash-3.2# nmap -sS -p 1-1024 -T 5 10.211.55.1

Starting Nmap 7.30 ( https://nmap.org ) at 2017-01-27 23:50 MST

Nmap scan report for centos.shared (10.211.55.1)

Host is up (0.00094s latency).

All 1024 scanned ports on centos.shared (10.211.55.1) are filtered

Nmap done: 1 IP address (1 host up) scanned in 12.61 seconds

bash-3.2#

Now, everything is filtered from outside.

As demonstrated below, the host will not even respond to ICMP ping requests when in drop.

bash-3.2# ping 10.211.55.1

PING 10.211.55.1 (10.211.55.1): 56 data bytes

Request timeout for icmp_seq 0

Request timeout for icmp_seq 1

Request timeout for icmp_seq 2

Let's set the default zone to public again.

[root@CentOS]# firewall-cmd --set-default-zone=public

success

[root@CentOS]# firewall-cmd --get-default-zone

public

[root@CentOS]#

Now let's check our current filtering ruleset in public.

[root@CentOS]# firewall-cmd --zone=public --list-all

public (active)

target: default

icmp-block-inversion: no

interfaces: enp0s5

sources:

services: dhcpv6-client ssh

ports: 80/tcp

protocols:

masquerade: no

forward-ports:

sourceports:

icmp-blocks:

rich rules:

[root@CentOS rdc]#

As configured, our port 80 filter rule is only within the context of the running configuration. This means once the system is rebooted or the firewalld service is restarted, our rule will be discarded.
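
One way to spot rules that exist only in the running configuration is to compare the runtime port list with the permanent one. The sketch below assumes both lists are space-separated, as printed by `firewall-cmd --list-ports`; `runtime_only` is a hypothetical helper name.

```shell
# runtime_only RUNTIME PERMANENT: print each item of the first
# space-separated list that is missing from the second.
# runtime_only is a hypothetical helper name.
runtime_only() {
    for item in $1; do
        case " $2 " in
            *" $item "*) ;;              # in both lists: survives a restart
            *) echo "$item" ;;           # runtime only: lost on restart
        esac
    done
}

# Usage (as root):
#   runtime_only "$(firewall-cmd --zone=public --list-ports)" \
#                "$(firewall-cmd --permanent --zone=public --list-ports)"
```

Any port printed would disappear after a reboot or a restart of the firewalld service.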

We will be configuring an httpd daemon soon, so let's make our changes persistent −

[root@CentOS]# firewall-cmd --zone=public --add-port=80/tcp --permanent

success

[root@CentOS]# systemctl restart firewalld

[root@CentOS]#

Now our port 80 rule in the public zone is persistent across reboots and service restarts.
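
As an alternative to restarting the service, `firewall-cmd --reload` loads the permanent configuration into the running firewall. The sketch below wraps that pattern for several ports at once; `open_permanent` and the `DRY_RUN` variable are hypothetical names introduced for illustration.

```shell
# open_permanent ZONE PORT/PROTO...: add permanent port rules, then reload.
# open_permanent and DRY_RUN are hypothetical names for this sketch.
open_permanent() {
    zone="$1"; shift
    run=${DRY_RUN:+echo}     # with DRY_RUN set, commands are printed, not run
    for port in "$@"; do
        $run firewall-cmd --permanent --zone="$zone" --add-port="$port"
    done
    # Load the permanent configuration into the running firewall
    $run firewall-cmd --reload
}

# Preview the commands without touching the firewall:
#   DRY_RUN=1 open_permanent public 80/tcp 443/tcp
# Apply them (as root):
#   open_permanent public 80/tcp 443/tcp
```

The dry-run switch makes it possible to review the exact commands before applying them on a remote host.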

Following are the common firewalld commands applied with firewall-cmd.

| Command | Action |
|---|---|
| firewall-cmd --get-zones | Lists all zones that can be applied to an interface |
| firewall-cmd --state | Returns the current state of the firewalld service |
| firewall-cmd --get-default-zone | Gets the current default zone |
| firewall-cmd --set-default-zone=<zone> | Sets the default zone into the current context |
| firewall-cmd --get-active-zones | Gets the current zones in context as applied to an interface |
| firewall-cmd --zone=<zone> --list-all | Lists the configuration of the supplied zone |
| firewall-cmd --zone=<zone> --add-port=<port/transport protocol> | Applies a port rule to the zone filter |
| --permanent | Makes changes to the zone persistent. The flag is used inline with modification commands |

These are the basic concepts of administering and configuring firewalld.

Configuring host-based firewall services in CentOS can be a complex task in more sophisticated networking scenarios. Advanced usage and configuration of firewalld and iptables in CentOS can take an entire tutorial. However, we have presented the basics that should be enough to complete a majority of daily tasks.

Configure PHP in CentOS Linux

PHP is one of the most prolific web languages in use today. Installing a LAMP Stack on CentOS is something every system administrator will need to perform, most likely sooner rather than later.

A traditional LAMP Stack consists of (L)inux (A)pache (M)ySQL (P)HP.

There are three main components to a LAMP Stack on CentOS −

- Web Server

- Web Development Platform / Language

- Database Server

Note − The term LAMP Stack can also include the following technologies: PostgreSQL, MariaDB, Perl, Python, Ruby, NGINX Webserver.

For this tutorial, we will stick with the traditional LAMP Stack of CentOS GNU Linux: Apache web server, MySQL Database Server, and PHP.

We will actually be using MariaDB. MySQL configuration files, databases and tables are transparent to MariaDB. MariaDB is now included in the standard CentOS repository instead of MySQL. This is due to the limitations of licensing and open-source compliance, since Oracle has taken over the development of MySQL.

The first thing we need to do is install Apache.

[root@CentOS]# yum install httpd

Loaded plugins: fastestmirror, langpacks

base | 3.6 kB 00:00:00

extras | 3.4 kB 00:00:00

updates | 3.4 kB 00:00:00

extras/7/x86_64/primary_d | 121 kB 00:00:00

Loading mirror speeds from cached hostfile

* base: mirror.sigmanet.com

* extras: linux.mirrors.es.net

* updates: mirror.eboundhost.com

Resolving Dependencies

--> Running transaction check

---> Package httpd.x86_64 0:2.4.6-45.el7.centos will be installed

--> Processing Dependency: httpd-tools = 2.4.6-45.el7.centos for package: httpd-2.4.6-45.el7.centos.x86_64

--> Processing Dependency: /etc/mime.types for package: httpd-2.4.6-45.el7.centos.x86_64

--> Running transaction check

---> Package httpd-tools.x86_64 0:2.4.6-45.el7.centos will be installed

---> Package mailcap.noarch 0:2.1.41-2.el7 will be installed

--> Finished Dependency Resolution

Installed:

httpd.x86_64 0:2.4.6-45.el7.centos

Dependency Installed:

httpd-tools.x86_64 0:2.4.6-45.el7.centos

mailcap.noarch 0:2.1.41-2.el7

Complete!

[root@CentOS]#

Let's start the httpd service and enable it at boot.

[root@CentOS]# systemctl start httpd && systemctl enable httpd

Now, let's make sure the web-server is accessible through firewalld.

bash-3.2# nmap -sS -p 1-1024 -T 5 -sV 10.211.55.1

Starting Nmap 7.30 ( https://nmap.org ) at 2017-01-28 02:00 MST

Nmap scan report for centos.shared (10.211.55.1)

Host is up (0.00054s latency).

Not shown: 1022 filtered ports

PORT STATE SERVICE VERSION

22/tcp open ssh OpenSSH 6.6.1 (protocol 2.0)

80/tcp open http Apache httpd 2.4.6 ((CentOS))

Service detection performed. Please report any incorrect results at https://nmap.org/submit/ .

Nmap done: 1 IP address (1 host up) scanned in 10.82 seconds

bash-3.2#

As you can see by the nmap service probe, Apache webserver is listening and responding to requests on the CentOS host.

Install MySQL Database Server

[root@CentOS rdc]# yum install mariadb-server.x86_64 mariadb-devel.x86_64 mariadb.x86_64 mariadb-libs.x86_64

We are installing the following repository packages for MariaDB −

mariadb-server.x86_64

The main MariaDB Server daemon package.

mariadb-devel.x86_64

Files needed to compile software from source with MySQL/MariaDB compatibility.

mariadb.x86_64

MariaDB client utilities for administering MariaDB Server from the command line.

mariadb-libs.x86_64

Common libraries for MariaDB that could be needed for other applications compiled with MySQL/MariaDB support.

Now, let's start and enable the MariaDB Service.

[root@CentOS]# systemctl start mariadb

[root@CentOS]# systemctl enable mariadb

Note − Unlike Apache, we will not enable connections to MariaDB through our host-based firewall (firewalld). When using a database server, it's considered best security practice to only allow local socket connections, unless the remote socket access is specifically needed.
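
Beyond the firewall, the server itself can be told to accept TCP connections on the loopback address only, using the `bind-address` option in the `[mysqld]` section. The sketch below writes such a fragment; `write_local_only` is a hypothetical helper name, the file name `local-only.cnf` is an assumption, and it presumes the stock `/etc/my.cnf` includes the `/etc/my.cnf.d` directory.

```shell
# write_local_only FILE: write a config fragment that binds mysqld
# to the loopback address. write_local_only is a hypothetical helper name.
write_local_only() {
    cat > "$1" <<'EOF'
[mysqld]
# Accept TCP connections on the loopback address only
bind-address=127.0.0.1
EOF
}

# Usage (as root); the file name local-only.cnf is an assumption:
#   write_local_only /etc/my.cnf.d/local-only.cnf
#   systemctl restart mariadb
```

With this in place, the database stays unreachable from other hosts even if a firewall rule for port 3306 is added by mistake.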

Let's make sure the MariaDB Server is accepting connections.

[root@CentOS]# netstat -lnt

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 0.0.0.0:3306 0.0.0.0:* LISTEN

tcp 0 0 0.0.0.0:111 0.0.0.0:* LISTEN

tcp 0 0 192.168.122.1:53 0.0.0.0:* LISTEN

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN

tcp 0 0 127.0.0.1:631 0.0.0.0:* LISTEN

tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN

[root@CentOS rdc]#

As we can see, MariaDB is listening on port 3306 tcp. We will leave our host-based firewall (firewalld) blocking incoming connections to port 3306.
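
The local address column matters here: `0.0.0.0:3306` means the server is bound to every interface, so the firewall is the only thing keeping remote clients out. A small filter can pull the listening addresses for one port out of the netstat output. This is a sketch; `listen_addrs` is a hypothetical helper name.

```shell
# listen_addrs PORT: read `netstat -lnt` output on stdin and print the
# local addresses that are listening on PORT.
# listen_addrs is a hypothetical helper name.
listen_addrs() {
    awk -v port="$1" '
        $NF == "LISTEN" {
            n = split($4, part, ":")     # the text after the last ":" is the port
            if (part[n] == port) print $4
        }'
}

# Usage:
#   netstat -lnt | listen_addrs 3306
```

An address of `127.0.0.1:3306` in the result would indicate the server only accepts local TCP connections.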

Install and Configure PHP

[root@CentOS]# yum install php.x86_64 php-common.x86_64 php-mysql.x86_64 php-mysqlnd.x86_64 php-pdo.x86_64 php-soap.x86_64 php-xml.x86_64

I'd recommend installing the following php packages for common compatibility −

- php-common.x86_64

- php-mysql.x86_64

- php-mysqlnd.x86_64

- php-pdo.x86_64

- php-soap.x86_64

- php-xml.x86_64

[root@CentOS]# yum install -y php-common.x86_64 php-mysql.x86_64 php-mysqlnd.x86_64 php-pdo.x86_64 php-soap.x86_64 php-xml.x86_64

This is our simple php file located in the Apache webroot of /var/www/html/

[root@CentOS]# cat /var/www/html/index.php

<html>

<head>

<title>PHP Test Page</title>

</head>