Apache Flume - Sequence Generator Source

In the previous chapter, we saw how to fetch data from the Twitter source into HDFS. This chapter explains how to fetch data from the sequence generator source.

Prerequisites

To run the example provided in this chapter, you need to install HDFS along with Flume. Therefore, verify the Hadoop installation and start HDFS before proceeding further. (Refer to the previous chapter to learn how to start HDFS.)

Configuring Flume

We have to configure the source, the channel, and the sink using the configuration file in the conf folder. The example given in this chapter uses a sequence generator source, a memory channel, and an HDFS sink.

Sequence Generator Source

This source generates events continuously. It maintains a counter that starts from 0 and increments by 1 for each event. It is used mainly for testing. While configuring this source, you must provide values for the following properties −

channels − the channel (or channels) to which the source is bound.

type − the source type; for this source it must be seq.
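
The counter behaviour described above can be pictured with a small Python sketch. This is only an illustration of the idea, not Flume's implementation; the function name `sequence_events` and the choice to render each event body as text are assumptions made for the example.

```python
from itertools import count, islice


def sequence_events(start=0):
    """Yield event bodies from a counter that starts at `start` and increments by 1."""
    for n in count(start):
        # Rendering the counter value as text is an assumption of this sketch.
        yield str(n)


# Take the first five events from the otherwise endless stream.
first_five = list(islice(sequence_events(), 5))
print(first_five)
```

Because the stream never ends on its own, a consumer has to decide how many events to take, which is the role the channel and sink play in the real agent.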

Channel

We are using the memory channel. To configure the memory channel, you must provide a value for the type of the channel. Given below is the list of properties that you can supply while configuring the memory channel −

type − It holds the type of the channel. In our example, the type is memory (MemChannel is only the name we give to the channel).

capacity − It is the maximum number of events stored in the channel. Its default value is 100. (optional)

transactionCapacity − It is the maximum number of events the channel accepts from a source or gives to a sink per transaction. Its default value is 100. (optional)
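
To make the difference between the two limits concrete, here is a toy model in Python. It is a sketch under simplifying assumptions and not Flume's code: the class `ToyMemoryChannel` and its `put` and `take` methods are hypothetical names, and real transactions (commit, rollback) are left out.

```python
from collections import deque


class ToyMemoryChannel:
    """Toy in-memory queue with the two limits described above (not Flume's code)."""

    def __init__(self, capacity=100, transaction_capacity=100):
        self.capacity = capacity
        self.transaction_capacity = transaction_capacity
        self._events = deque()

    def put(self, batch):
        """Accept one transaction's worth of events from a source."""
        if len(batch) > self.transaction_capacity:
            raise ValueError("batch exceeds transactionCapacity")
        if len(self._events) + len(batch) > self.capacity:
            raise OverflowError("channel is full")
        self._events.extend(batch)

    def take(self, max_events):
        """Hand at most one transaction's worth of events to a sink."""
        n = min(max_events, self.transaction_capacity, len(self._events))
        return [self._events.popleft() for _ in range(n)]

    def __len__(self):
        return len(self._events)


channel = ToyMemoryChannel(capacity=1000, transaction_capacity=100)
channel.put([str(i) for i in range(100)])  # one full transaction from the source
drained = channel.take(40)                 # the sink takes a smaller batch
print(len(drained), len(channel))
```

In this model, capacity bounds how much can pile up when the sink is slower than the source, while transactionCapacity bounds the size of any single hand-off.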

HDFS Sink

This sink writes data into the HDFS. To configure this sink, you must provide the following details.

channel − the channel from which the sink reads events.

type − hdfs

hdfs.path − the path of the directory in HDFS where data is to be stored.

We can also provide some optional values based on the scenario. Given below are the optional properties of the HDFS sink that we are configuring in our application. In the configuration file, each of them carries the hdfs. prefix (for example, hdfs.fileType).

fileType − This is the required file format of our HDFS file. SequenceFile, DataStream, and CompressedStream are the three types available with this sink. In our example, we are using DataStream.

writeFormat − It can be either Text or Writable.

batchSize − It is the number of events written to a file before it is flushed into the HDFS. Its default value is 100.

rollSize − It is the file size, in bytes, that triggers a roll. Its default value is 1024.

rollCount − It is the number of events written into the file before it is rolled. Its default value is 10.
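
As a rough feel for what rollCount means, the sketch below estimates how many files a given number of events would produce. It assumes that rollCount is the only active trigger, that is, size-based and time-based rolling are disabled; the helper name `files_for` is hypothetical.

```python
import math


def files_for(total_events, roll_count):
    """Number of files produced if only rollCount triggers a roll (a simplification)."""
    if total_events == 0:
        # No events, no file in this sketch.
        return 0
    if roll_count == 0:
        # A value of 0 disables count-based rolling, so everything lands in one file.
        return 1
    return math.ceil(total_events / roll_count)


print(files_for(25000, 10000))
```

With a rollCount of 10000, 25000 events are split into two full files and one partial file. In a real agent the other roll triggers also apply unless they are switched off, so the actual number of files can be higher.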

Example Configuration File

Given below is an example of the configuration file. Copy this content and save it as seq_gen.conf in the conf folder of Flume.

    # Naming the components on the current agent
    SeqGenAgent.sources = SeqSource
    SeqGenAgent.channels = MemChannel
    SeqGenAgent.sinks = HDFS

    # Describing/Configuring the source
    SeqGenAgent.sources.SeqSource.type = seq

    # Describing/Configuring the sink
    SeqGenAgent.sinks.HDFS.type = hdfs
    SeqGenAgent.sinks.HDFS.hdfs.path = hdfs://localhost:9000/user/Hadoop/seqgen_data/
    SeqGenAgent.sinks.HDFS.hdfs.filePrefix = log
    SeqGenAgent.sinks.HDFS.hdfs.rollInterval = 0
    SeqGenAgent.sinks.HDFS.hdfs.rollCount = 10000
    SeqGenAgent.sinks.HDFS.hdfs.fileType = DataStream

    # Describing/Configuring the channel
    SeqGenAgent.channels.MemChannel.type = memory
    SeqGenAgent.channels.MemChannel.capacity = 1000
    SeqGenAgent.channels.MemChannel.transactionCapacity = 100

    # Binding the source and sink to the channel
    SeqGenAgent.sources.SeqSource.channels = MemChannel
    SeqGenAgent.sinks.HDFS.channel = MemChannel
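
A common mistake in a file like this is binding a source or sink to a channel name that was never declared. The following Python sketch checks for that before the agent is started. It is not part of Flume; the helper names `parse_properties` and `undeclared_channels` are hypothetical, and the parser handles only simple `key = value` lines and `#` comments.

```python
CONFIG = """\
# Naming the components on the current agent
SeqGenAgent.sources = SeqSource
SeqGenAgent.channels = MemChannel
SeqGenAgent.sinks = HDFS
SeqGenAgent.sources.SeqSource.type = seq
SeqGenAgent.sinks.HDFS.type = hdfs
SeqGenAgent.channels.MemChannel.type = memory
# Binding the source and sink to the channel
SeqGenAgent.sources.SeqSource.channels = MemChannel
SeqGenAgent.sinks.HDFS.channel = MemChannel
"""


def parse_properties(text):
    """Parse simple 'key = value' lines, skipping blanks and '#' comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def undeclared_channels(props, agent):
    """Return (component, channel) pairs that point at a channel the agent never declared."""
    declared = set(props.get(f"{agent}.channels", "").split())
    problems = []
    for source in props.get(f"{agent}.sources", "").split():
        # A source may list several channels, separated by spaces.
        for ch in props.get(f"{agent}.sources.{source}.channels", "").split():
            if ch not in declared:
                problems.append((source, ch))
    for sink in props.get(f"{agent}.sinks", "").split():
        # A sink reads from exactly one channel; a missing binding shows up as "".
        ch = props.get(f"{agent}.sinks.{sink}.channel", "")
        if ch not in declared:
            problems.append((sink, ch))
    return problems


props = parse_properties(CONFIG)
print(undeclared_channels(props, "SeqGenAgent"))
```

An empty list means every binding refers to a declared channel. The embedded text is an abbreviated copy of the example configuration; to check the real file, read seq_gen.conf from disk instead.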

Execution

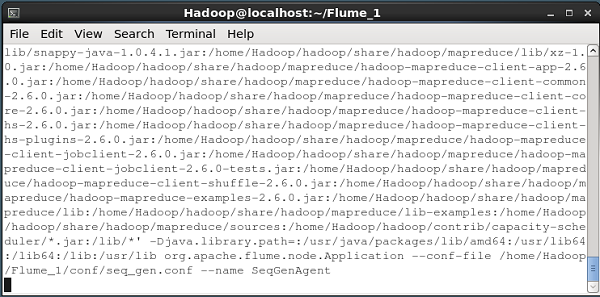

Change to the Flume home directory and execute the application as shown below.

    $ cd $FLUME_HOME
    $ ./bin/flume-ng agent --conf $FLUME_CONF --conf-file $FLUME_CONF/seq_gen.conf --name SeqGenAgent

If everything goes fine, the source starts generating sequence numbers which will be pushed into the HDFS in the form of log files.

Given below is a snapshot of the command prompt window fetching the data generated by the sequence generator into the HDFS.

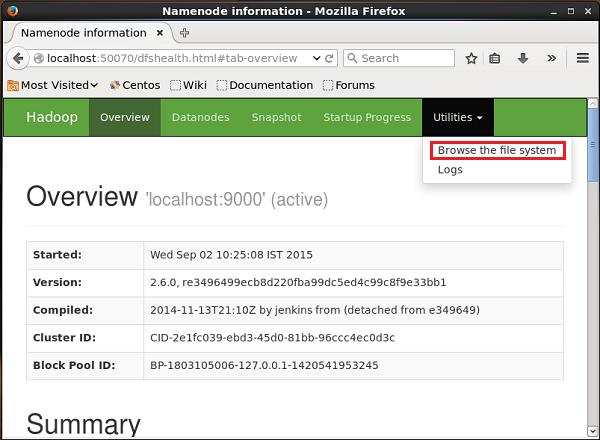

Verifying the HDFS

You can access the Hadoop Administration Web UI using the following URL −

http://localhost:50070/

Click on the dropdown named Utilities on the right-hand side of the page. You can see two options as shown in the diagram given below.

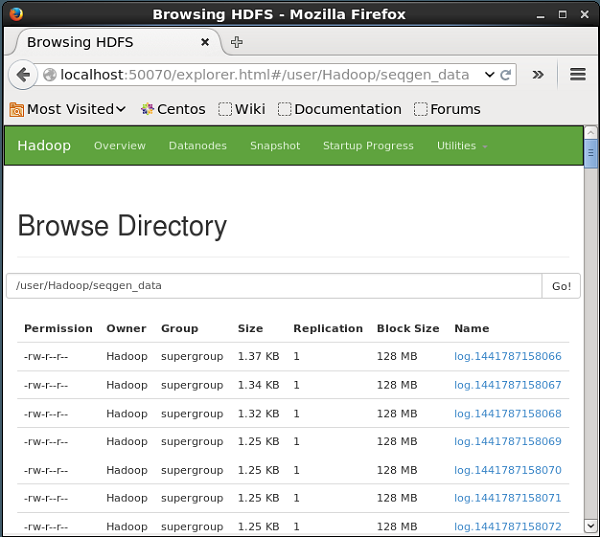

Click on Browse the file system and enter the path of the HDFS directory where you have stored the data generated by the sequence generator.

In our example, the path will be /user/Hadoop/seqgen_data/. Then, you can see the list of log files generated by the sequence generator, stored in the HDFS as given below.

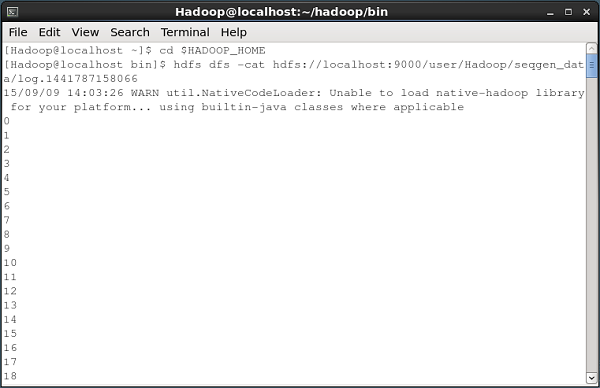

Verifying the Contents of the File

All these log files contain numbers in sequential order. You can verify the contents of these files in the file system using the cat command as shown below.