
Concurrency in Python - Threads

In general, as we know, a thread is a very thin twisted string, usually of cotton or silk, used for sewing clothes and the like. The same term, thread, is also used in the world of computer programming. How do we relate the thread used for sewing clothes to the thread used in computer programming? The roles performed by the two are similar. In clothes, thread holds the cloth together. In computer programming, threads hold the program together and allow it to execute actions sequentially or many actions at once.

A thread is the smallest unit of execution that an operating system can schedule. It is not a program in itself but runs within a program. In other words, threads are not independent of one another: the threads of a process share its code section, data section and other resources such as open files. Threads are also known as lightweight processes.

States of Thread

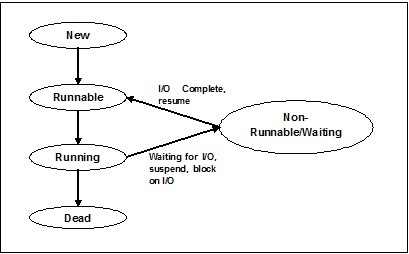

To understand the functionality of threads in depth, we need to learn about the lifecycle of a thread, that is, the different thread states. Typically, a thread can exist in five distinct states, described below −

New Thread

A new thread begins its life cycle in the new state. At this stage, it has not yet started and has not been allocated any resources. It is just an instance of an object.

Runnable

Once the newly created thread is started, it becomes runnable, i.e., it is waiting to run. In this state, it has all the resources it needs, but the task scheduler has not yet scheduled it to run.

Running

In this state, the thread has been chosen by the task scheduler and makes progress by executing its task. From here, the thread can go to either the dead state or the non-running/waiting state.

Non-running/waiting

In this state, the thread is paused because it is either waiting for the response to an I/O request or waiting for another thread to finish executing.

Dead

A running thread enters the dead (terminated) state when it completes its task or otherwise terminates.

The diagram at the beginning of this section shows the complete life cycle of a thread.
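Python's `threading` module does not expose these five states directly, but a minimal sketch can observe the transitions that are visible from outside a thread: `Thread.is_alive()` returns `False` for a new (not yet started) thread, `True` from `start()` until the thread's target function returns, and `False` again once the thread is dead. The `threading.Event` is an assumption used only to hold the worker in a waiting state so the observation is deterministic; the runnable, running and waiting states all report as "alive". The function name `observe_lifecycle` is hypothetical.

```python
import threading

def observe_lifecycle():
    """Return (stage, is_alive) pairs observed at each stage of a thread's life."""
    release = threading.Event()
    observations = []

    def task():
        # The worker blocks here (a waiting state) until the main thread releases it.
        release.wait()

    t = threading.Thread(target=task, name="worker")
    observations.append(("new", t.is_alive()))       # created, not started yet
    t.start()
    observations.append(("started", t.is_alive()))   # runnable, running or waiting
    release.set()                                     # let the task finish
    t.join()                                          # wait until run() has returned
    observations.append(("dead", t.is_alive()))       # terminated
    return observations

if __name__ == "__main__":
    for stage, alive in observe_lifecycle():
        print(f"{stage:8} alive={alive}")
```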

Types of Thread

In this section, we will see the different types of thread. The types are described below −

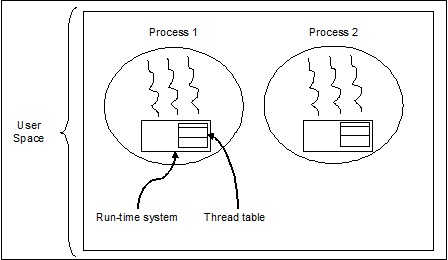

User Level Threads

These are user-managed threads.

In this case, the kernel is not aware of the existence of threads. The thread library contains code for creating and destroying threads, for passing messages and data between threads, for scheduling thread execution, and for saving and restoring thread contexts. The application starts with a single thread.

Examples of user level thread implementations are −

- Java "green threads", used by early Java virtual machines

- POSIX threads, when provided by a user-level library

Advantages of User level Threads

Following are the different advantages of user level threads −

- Thread switching does not require Kernel mode privileges.

- User level threads can run on any operating system.

- Scheduling can be application specific.

- User level threads are fast to create and manage.

Disadvantages of User level Threads

Following are the different disadvantages of user level threads −

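- In a typical operating system, most system calls are blocking, so when one user level thread makes a blocking system call, the entire process is blocked.

- A multithreaded application cannot take advantage of multiprocessing, because the kernel schedules the whole process, not its individual threads, on a single processor.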
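Python's own threads are not user level threads, so the following is only an illustration under an explicit assumption: Python generators stand in for user level threads, and a tiny round-robin loop stands in for the thread library's scheduler. Each `yield` is a voluntary context switch, and the generator object saves and restores the task's context, all without involving the kernel. The names `worker` and `run_round_robin` are hypothetical.

```python
from collections import deque

def worker(name, steps, log):
    """A stand-in for a user level thread: a generator that yields control back."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        yield  # voluntary context switch; the generator keeps its local state

def run_round_robin(tasks):
    """Cooperative round-robin scheduler that runs entirely in user space."""
    ready = deque(tasks)
    while ready:
        task = ready.popleft()
        try:
            next(task)        # resume the task until its next yield
        except StopIteration:
            continue          # the task finished; drop it from the ready queue
        ready.append(task)    # otherwise, put it at the back of the queue

if __name__ == "__main__":
    log = []
    run_round_robin([worker("A", 3, log), worker("B", 2, log)])
    print(log)
```

Because scheduling is cooperative, a task that called a blocking function such as `time.sleep()` instead of yielding would stall every other task in the loop, which mirrors the first disadvantage listed above.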

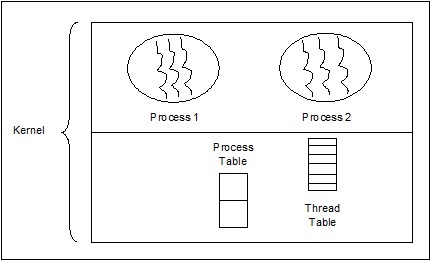

Kernel Level Threads

Kernel level threads are managed by the operating system and act on the kernel, which is the core of the operating system.

In this case, the Kernel does thread management. There is no thread management code in the application area. Kernel threads are supported directly by the operating system. Any application can be programmed to be multithreaded. All of the threads within an application are supported within a single process.

The kernel maintains context information for the process as a whole and for individual threads within the process. Scheduling by the kernel is done on a thread basis. The kernel performs thread creation, scheduling and management in kernel space. Examples of operating systems that support kernel level threads are Windows and Solaris.

Advantages of Kernel Level Threads

Following are the different advantages of kernel level threads −

- The kernel can simultaneously schedule multiple threads from the same process on multiple processors.

- If one thread in a process is blocked, the kernel can schedule another thread of the same process.

- Kernel routines themselves can be multithreaded.
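In the standard CPython interpreter, each `threading.Thread` is backed by a native operating system thread, so it is scheduled by the kernel. The sketch below, whose function name is hypothetical, checks the second advantage above: one thread blocks on a `threading.Event` (standing in for a blocking I/O call) while another thread still runs to completion. Waiting on the event releases the global interpreter lock, so the other thread can make progress.

```python
import threading

def blocked_thread_does_not_stop_others():
    """Return True if a second thread finishes while the first one is still blocked."""
    unblock = threading.Event()
    other_done = threading.Event()

    def blocked():
        # Simulates a thread stuck in a blocking call, e.g. waiting for I/O.
        unblock.wait()

    def busy():
        # Independent work the OS can schedule while blocked() is waiting.
        sum(range(100_000))
        other_done.set()

    t1 = threading.Thread(target=blocked)
    t2 = threading.Thread(target=busy)
    t1.start()
    t2.start()
    finished = other_done.wait(timeout=5)  # did busy() complete?
    still_blocked = t1.is_alive()          # is blocked() still waiting?
    unblock.set()                          # release the blocked thread
    t1.join()
    t2.join()
    return finished and still_blocked

if __name__ == "__main__":
    print(blocked_thread_does_not_stop_others())
```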

Disadvantages of Kernel Level Threads

- Kernel threads are generally slower to create and manage than user threads.

- Transfer of control from one thread to another within the same process requires a mode switch to the kernel.

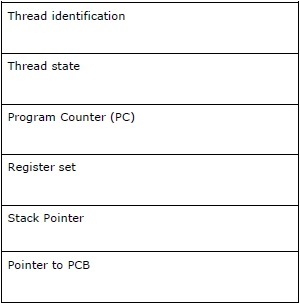

Thread Control Block - TCB

A Thread Control Block (TCB) is a data structure in the operating system kernel that contains information about a thread. The thread-specific information stored in a TCB highlights some important details about each thread.

Consider the following points related to the threads contained in TCB −

Thread identification − It is the unique thread id (tid) assigned to every new thread.

Thread state − It contains the information related to the state (New, Runnable, Running, Non-Running, Dead) of the thread.

Program Counter (PC) − It points to the current program instruction of the thread.

Register set − It contains the thread's register values used for its computations.

Stack Pointer − It points to the thread's stack in the process. The stack contains the local variables under the thread's scope.

Pointer to PCB − It contains a pointer to the Process Control Block (PCB) of the process that created the thread.
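Python does not give access to the kernel's TCB, but it does expose thread identification. As a sketch, assuming Python 3.8 or later: `threading.get_ident()` returns the interpreter's identifier for the current thread, `threading.get_native_id()` returns the id assigned by the operating system, and `os.getpid()` shows that every thread belongs to the same process, loosely analogous to the pointer to the PCB. The `threading.Barrier` keeps all threads alive at the same time, because ids may be reused once a thread exits. The function name is hypothetical.

```python
import os
import threading

def collect_thread_ids(n=3):
    """Run n threads concurrently; each records (ident, native id, process id)."""
    records = []
    lock = threading.Lock()
    barrier = threading.Barrier(n)  # no thread exits until all n have recorded

    def record():
        with lock:
            records.append((threading.get_ident(),
                            threading.get_native_id(),
                            os.getpid()))
        barrier.wait()

    threads = [threading.Thread(target=record) for _ in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return records

if __name__ == "__main__":
    for ident, native_id, pid in collect_thread_ids():
        print(f"ident={ident} native_id={native_id} pid={pid}")
```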

Relation between process & thread

In multithreading, process and thread are two closely related terms that share the same goal: to make the computer able to do more than one thing at a time. A process can contain one or more threads, but a thread cannot contain a process. Both remain the two basic units of execution. When a program starts executing a series of instructions, it initiates a process, and that process starts with at least one thread.

The following table shows the comparison between process and thread −

| Process | Thread |
|---|---|
| A process is heavyweight, or resource intensive. | A thread is lightweight and takes fewer resources than a process. |
| Process switching needs interaction with the operating system. | Switching between threads of the same process is cheaper, and switching user level threads does not need to interact with the operating system at all. |
| In a multiprocessing environment, each process has its own memory and file resources, even when the processes execute the same code. | All threads of a process share the same set of open files and child processes. |
| If a single-threaded process is blocked, it can do no other work until it is unblocked. | While one thread is blocked and waiting, a second thread in the same process can run. |
| Multiple processes without using threads use more resources. | Multithreaded processes use fewer resources. |
| In multiple processes, each process operates independently of the others. | One thread can read, write or change another thread's data. |
| A change in the parent process does not affect its child processes. | A change made by the main thread, for example to shared data, may affect the behavior of other threads of that process. |
| To communicate with sibling processes, processes must use inter-process communication. | Threads can communicate directly with other threads of the same process. |
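The last row of the table can be illustrated with a short sketch: a producer thread and a consumer thread exchange items through a `queue.Queue`, an ordinary object in the process's shared memory, so no pipes, sockets or other inter-process mechanisms are needed. The function name and the sentinel object used to signal the end of the data are illustrative choices, not a fixed API.

```python
import queue
import threading

def producer_consumer(items):
    """Pass items from a producer thread to a consumer thread via a shared queue."""
    q = queue.Queue()
    received = []        # also shared: the consumer writes, the caller reads
    sentinel = object()  # unique marker meaning "no more items"

    def producer():
        for item in items:
            q.put(item)
        q.put(sentinel)

    def consumer():
        while True:
            item = q.get()
            if item is sentinel:
                break
            received.append(item)

    threads = [threading.Thread(target=producer), threading.Thread(target=consumer)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return received

if __name__ == "__main__":
    print(producer_consumer(["open", "edit", "save"]))
```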

Concept of Multithreading

As discussed earlier, multithreading is the ability of a CPU, with support from the operating system, to execute multiple threads concurrently. The main idea of multithreading is to achieve parallelism by dividing a process into multiple threads. Put simply, multithreading is a way of achieving multitasking by using threads.

The concept of multithreading can be understood with the help of the following example.

Example

Suppose we are running a process, such as MS Word, to write something. In this process, one thread may be assigned to open MS Word and another thread to handle the writing. If we then want to edit something, another thread will be required for the editing task, and so on.
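A minimal sketch of this idea starts one named thread per sub-task. It assumes hypothetical sub-task names and uses `time.sleep()` as a stand-in for I/O work such as reading a file. Because the threads wait at the same time, the total elapsed time should be close to one delay rather than the sum of all three; the exact figure depends on the machine.

```python
import threading
import time

def run_editor_tasks(delay=0.2):
    """Run hypothetical editor sub-tasks, one thread each; return (results, elapsed)."""
    results = {}
    lock = threading.Lock()

    def subtask(name):
        time.sleep(delay)  # stands in for disk or network I/O
        with lock:
            results[name] = threading.current_thread().name

    names = ["open_document", "spell_check", "autosave"]  # hypothetical sub-tasks
    threads = [threading.Thread(target=subtask, args=(n,), name=f"{n}-thread")
               for n in names]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    elapsed = time.perf_counter() - start
    return results, elapsed

if __name__ == "__main__":
    results, elapsed = run_editor_tasks()
    print(results)
    print(f"elapsed: {elapsed:.2f} s")
```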

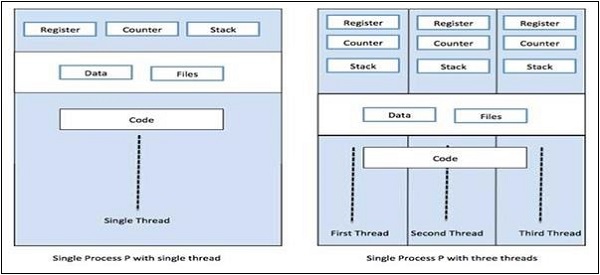

The diagram above helps us understand how multiple threads exist in memory.

We can see in the above diagram that more than one thread can exist within one process where every thread contains its own register set and local variables. Other than that, all the threads in a process share global variables.
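The difference between per-thread locals and shared globals can be seen in a short sketch (function names are hypothetical). Each thread keeps its own `local_count` on its own stack, while every thread updates the same module-level `counter`, which is guarded by a `threading.Lock`.

```python
import threading

counter = 0                      # global: one copy shared by all threads
counter_lock = threading.Lock()  # protects updates to the shared global

def visit(times, per_thread_totals):
    global counter
    local_count = 0              # local: lives on this thread's own stack
    for _ in range(times):
        local_count += 1
        with counter_lock:
            counter += 1
    per_thread_totals.append(local_count)

def run_visits(n_threads=4, times=1000):
    """Return (each thread's local total, final value of the shared global)."""
    global counter
    counter = 0
    totals = []
    threads = [threading.Thread(target=visit, args=(times, totals))
               for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return totals, counter

if __name__ == "__main__":
    print(run_visits())
```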

Pros of Multithreading

Let us now see a few advantages of multithreading. The advantages are as follows −

Speed of computation − On a multi-core or multi-processor system, multithreading can improve the speed of computation because separate threads can run on different cores at the same time. In the standard CPython interpreter, the global interpreter lock (GIL) allows only one thread to execute Python bytecode at a time, so this speed-up mainly applies to I/O-bound work and to code that releases the GIL.

Program remains responsive − It allows a program to remain responsive, because one thread can wait for input while another runs the GUI at the same time.

Access to global variables − In multithreading, all the threads of a particular process can access the global variables, and any change to a global variable is visible to the other threads too.

Utilization of resources − Running several threads in a program makes better use of the CPU and reduces CPU idle time.

Sharing of data − Threads do not need their own copy of shared data, because threads within a program can share the same data.
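The responsiveness point can be sketched as follows, under the assumption that `time.sleep()` stands in for a slow download or file read and that a simple counter stands in for GUI work such as redrawing a progress bar. The main thread keeps looping while the worker thread is busy. The function name and parameters are hypothetical.

```python
import threading
import time

def responsive_loop(work_seconds=0.3, tick=0.05):
    """Keep the main thread looping while a worker thread does a slow job."""
    result = {}

    def slow_job():
        time.sleep(work_seconds)  # stands in for a slow download or file read
        result["status"] = "done"

    worker = threading.Thread(target=slow_job)
    worker.start()
    ticks = 0
    while worker.is_alive():
        ticks += 1                # e.g. redraw a progress bar or handle a click
        time.sleep(tick)
    worker.join()
    return ticks, result

if __name__ == "__main__":
    ticks, result = responsive_loop()
    print(f"main thread handled {ticks} ticks; worker result: {result}")
```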

Cons of Multithreading

Let us now see a few disadvantages of multithreading. The disadvantages are as follows −

Not suitable for single processor system − On a single processor system, threads are interleaved rather than run simultaneously, so multithreading gives smaller gains in computation speed than on a multi-processor system.

Issue of security − Since all the threads within a program share the same data, there is always a security concern, because any thread in the program can change that data.

Increase in complexity − Multithreading can increase the complexity of the program and make debugging difficult.

Lead to deadlock state − Multithreading introduces the risk of deadlock, in which two or more threads wait forever for each other to release resources.

Synchronization required − Synchronization is required to ensure mutual exclusion when threads access shared data. This adds memory and CPU overhead.
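The need for synchronization can be shown with a shared counter. Incrementing a value is a read-modify-write sequence, so without a lock two threads can read the same old value and one update is lost. In the sketch below (function and parameter names are hypothetical), `threading.Lock` ensures mutual exclusion, and the `with` statement releases the lock even if an exception is raised, so the lock is never left held by a failed thread.

```python
import threading

def increment_many(n_threads=4, times=50_000, use_lock=True):
    """Increment a shared counter from several threads, optionally under a Lock."""
    state = {"count": 0}
    lock = threading.Lock()

    def work():
        for _ in range(times):
            if use_lock:
                with lock:               # only one thread at a time runs this block
                    state["count"] += 1
            else:
                state["count"] += 1      # unsynchronized: updates can be lost

    threads = [threading.Thread(target=work) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return state["count"]

if __name__ == "__main__":
    print("with lock:   ", increment_many(use_lock=True))
    print("without lock:", increment_many(use_lock=False))
```

Whether the unlocked version actually loses updates on a given run depends on the interpreter version and on timing, so only the locked result is guaranteed to equal `n_threads * times`.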