Apache MXNet - Quick Guide

Apache MXNet - Introduction

This chapter highlights the features of Apache MXNet and talks about the latest version of this deep learning software framework.

What is MXNet?

Apache MXNet is a powerful open-source deep learning framework that helps developers build, train, and deploy deep learning models. Over the past few years, the impact of deep learning has been widespread, from healthcare to transportation to manufacturing and, in fact, in every aspect of our daily life. Companies now turn to deep learning to solve hard problems such as face recognition, object detection, Optical Character Recognition (OCR), speech recognition, and machine translation.

That is why Apache MXNet is supported by:

Some big companies like Intel, Baidu, Microsoft, Wolfram Research, etc.

Public cloud providers including Amazon Web Services (AWS), and Microsoft Azure

Some big research institutes like Carnegie Mellon, MIT, the University of Washington, and the Hong Kong University of Science & Technology.

Why Apache MXNet?

With so many deep learning platforms already available, such as Torch7, Caffe, Theano, TensorFlow, Keras, and Microsoft Cognitive Toolkit, you might wonder why Apache MXNet is needed. Let us check out some of the reasons:

Apache MXNet solves one of the biggest issues of existing deep learning platforms: in order to use them, one must learn another system for a different programming flavor.

With the help of Apache MXNet, developers can exploit the full capabilities of GPUs as well as cloud computing.

Apache MXNet can accelerate any numerical computation and places a special emphasis on speeding up the development and deployment of large-scale deep neural networks (DNNs).

It gives users the capabilities of both imperative and symbolic programming.

Various Features

If you are looking for a flexible deep learning library to quickly develop cutting-edge deep learning research, or a robust platform to push production workloads, your search ends at Apache MXNet. This is because of the following features:

Distributed Training

Whether it is multi-GPU or multi-host training with near-linear scaling efficiency, Apache MXNet allows developers to make the most of their hardware. MXNet also supports integration with Horovod, an open-source distributed deep learning framework created at Uber.

For this integration, the following are some of the common distributed APIs defined in Horovod:

horovod.broadcast()

horovod.allgather()

horovod.allreduce()

In this regard, MXNet offers us the following capabilities:

Device Placement − With the help of MXNet, we can easily specify the device on which each data structure (DS) should live.

Automatic Differentiation − Apache MXNet automates differentiation, i.e. the calculation of derivatives.

Multi-GPU training − MXNet allows us to scale efficiently with the number of available GPUs.

Optimized Predefined Layers − We can code our own layers in MXNet, and the predefined layers are also optimized for speed.
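As a rough illustration of device placement and automatic differentiation, the following minimal sketch uses the NDArray and autograd packages of the MXNet 1.x Python API. It assumes MXNet is installed. The function name and the sum-of-squares example are chosen only for illustration.

```python
def grad_of_sum_of_squares(values):
    """Return the gradient of sum(x**2) at x = values, as a plain list."""
    # Imported lazily so this file can be loaded even without MXNet installed.
    import mxnet as mx
    from mxnet import autograd, nd

    # Device placement: the array is created on the CPU context.
    # On a CUDA-enabled build, mx.gpu(0) could be passed instead.
    x = nd.array(values, ctx=mx.cpu())

    # Ask MXNet to allocate storage for the gradient of x.
    x.attach_grad()

    # Operations executed inside record() are tracked for differentiation.
    with autograd.record():
        y = (x * x).sum()

    # Backpropagate; the gradient (2 * x) is written to x.grad.
    y.backward()
    return x.grad.asnumpy().tolist()
```

Since the derivative of x squared is 2x, calling grad_of_sum_of_squares([1.0, 2.0, 3.0]) should return [2.0, 4.0, 6.0].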

Hybridization

Apache MXNet provides its users with a hybrid front-end. With the help of the Gluon Python API, it can bridge the gap between its imperative and symbolic capabilities. This is done by calling its hybridize functionality.
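The sketch below shows what calling hybridize might look like with the Gluon API of MXNet 1.x. It assumes MXNet is installed. The layer sizes, the function name, and the all-ones input are arbitrary choices made for illustration.

```python
def hybridized_output_shape(batch_size=4, in_features=5):
    """Build a small Gluon network, hybridize it, and return its output shape."""
    # Imported lazily so this file can be loaded even without MXNet installed.
    from mxnet import nd
    from mxnet.gluon import nn

    # HybridSequential only accepts layers that support symbolic execution.
    net = nn.HybridSequential()
    net.add(nn.Dense(8, activation="relu"))
    net.add(nn.Dense(2))
    net.initialize()

    # Without this call the network runs imperatively; with it, the first
    # forward pass builds and caches a symbolic graph that later calls reuse.
    net.hybridize()

    out = net(nd.ones((batch_size, in_features)))
    return out.shape
```

With the default arguments the last layer has two units, so the returned shape should be (4, 2). The code before and after hybridize stays the same, which is the point of the hybrid front-end.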

Faster Computation

Linear operations, such as tens or hundreds of matrix multiplications, are the computational bottleneck for deep neural networks. To address this bottleneck, MXNet provides −

Optimized numerical computation for GPUs

Optimized numerical computation for distributed ecosystems

Automation of common workflows, so that standard neural networks can be expressed concisely.

Language Bindings

MXNet has deep integration into high-level languages like Python and R. It also provides support for other programming languages such as −

Scala

Julia

Clojure

Java

C/C++

Perl

We do not need to learn any new programming language. Instead, MXNet, combined with its hybridization feature, allows an exceptionally smooth transition from Python to deployment in the programming language of our choice.

Latest version MXNet 1.6.0

The Apache Software Foundation (ASF) released the stable version 1.6.0 of Apache MXNet on 21st February 2020 under the Apache License 2.0. This is the last MXNet release to support Python 2, as the MXNet community voted to no longer support Python 2 in further releases. Let us check out some of the new features this release brings for its users.

NumPy-Compatible interface

Due to its flexibility and generality, NumPy has been widely used by machine learning practitioners, scientists, and students. However, as hardware accelerators such as Graphical Processing Units (GPUs) have become increasingly integrated into various Machine Learning (ML) toolkits, NumPy users who want to take advantage of the speed of GPUs have needed to switch to new frameworks with different syntax.

With MXNet 1.6.0, Apache MXNet is moving toward a NumPy-compatible programming experience. The new interface provides equivalent usability and expressiveness to practitioners familiar with NumPy syntax. Along with that, MXNet 1.6.0 also enables the existing NumPy system to utilize hardware accelerators like GPUs to speed up large-scale computations.

Integration with Apache TVM

Apache TVM, an open-source end-to-end deep learning compiler stack for hardware backends such as CPUs, GPUs, and specialized accelerators, aims to fill the gap between productivity-focused deep learning frameworks and performance-oriented hardware backends. With MXNet 1.6.0, users can leverage Apache (incubating) TVM to implement high-performance operator kernels in the Python programming language. The two main advantages of this new feature are as follows −

Simplifies the former C++ based development process.

Enables sharing the same implementation across multiple hardware backends such as CPUs, GPUs, etc.

Improvements on existing features

Apart from the features listed above, MXNet 1.6.0 also improves several existing features. The improvements are as follows −

Grouping element-wise operation for GPU

As we know, the performance of element-wise operations is bound by memory bandwidth, which is why chaining such operations may reduce overall performance. Apache MXNet 1.6.0 performs element-wise operation fusion, generating just-in-time fused operations whenever possible. Such fusion also reduces storage needs and improves overall performance.

Simplifying common expressions

MXNet 1.6.0 eliminates redundant expressions and simplifies common expressions. This enhancement also improves memory usage and total execution time.

Optimizations

MXNet 1.6.0 also provides various optimizations to existing features & operators, which are as follows:

Automatic Mixed Precision

Gluon Fit API

MKL-DNN

Large tensor Support

TensorRT integration

Higher-order gradient support

Operators

Operator performance profiler

ONNX import/export

Improvements to Gluon APIs

Improvements to Symbol APIs

More than 100 bug fixes

Apache MXNet - Installing MXNet

To get started with MXNet, the first thing we need to do is install it on our computer. Apache MXNet works on pretty much all the platforms available, including Windows, Mac, and Linux.

Linux OS

We can install MXNet on Linux OS in the following ways −

Graphical Processing Unit (GPU)

Here, we will use various methods namely Pip, Docker, and Source to install MXNet when we are using GPU for processing −

By using Pip method

You can use the following command to install MXNet on your Linux OS −

pip install mxnet

Apache MXNet also offers MKL pip packages, which are much faster when running on Intel hardware. For example, the package name mxnet-cu101mkl means that −

The package is built with CUDA/cuDNN

The package is MKL-DNN enabled

The CUDA version is 10.1

For other options, you can also refer to https://pypi.org/project/mxnet/.
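To make the naming convention above concrete, here is a small sketch that decodes a package name into its build options. The pattern it uses (mxnet, then an optional -cu followed by digits, then an optional mkl) is inferred from the package names mentioned in this chapter and is an assumption, not an official grammar. The function name is hypothetical.

```python
import re


def describe_mxnet_package(name):
    """Decode an MXNet pip package name into its CUDA version and MKL flag."""
    # Assumed pattern: "mxnet", optional "-cu<digits>", optional "mkl"/"-mkl".
    match = re.fullmatch(r"mxnet(?:-cu(\d{2,3}))?(-?mkl)?", name)
    if match is None:
        raise ValueError(f"unrecognised package name: {name!r}")

    cuda_digits, mkl = match.groups()
    cuda_version = None
    if cuda_digits is not None:
        # The last digit is the minor version: "101" -> "10.1", "92" -> "9.2".
        cuda_version = f"{cuda_digits[:-1]}.{cuda_digits[-1]}"

    return {"cuda": cuda_version, "mkl": mkl is not None}


print(describe_mxnet_package("mxnet-cu101mkl"))
```

For mxnet-cu101mkl this reports CUDA 10.1 with MKL enabled, while plain mxnet reports no CUDA version and no MKL.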

By using Docker

You can find the Docker images with MXNet at DockerHub, available at https://hub.docker.com/u/mxnet. Let us check out the steps below to install MXNet by using Docker with GPU −

Step 1− First, install Docker on your machine by following the Docker installation instructions available at https://docs.docker.com/engine/install/ubuntu/.

Step 2− Next, to enable the use of GPUs from Docker containers, install nvidia-docker-plugin. You can follow the installation instructions given at https://github.com/NVIDIA/nvidia-docker/wiki.

Step 3− By using the following command, you can pull the MXNet docker image −

$ sudo docker pull mxnet/python:gpu

Now in order to see if mxnet/python docker image pull was successful, we can list docker images as follows −

$ sudo docker images

For the fastest inference speeds with MXNet, it is recommended to use the latest MXNet with Intel MKL-DNN. Check the commands below −

$ sudo docker pull mxnet/python:1.3.0_cpu_mkl
$ sudo docker images

From source

To build the MXNet shared library from source with GPU, first we need to set up the environment for CUDA and cuDNN as follows−

Download and install CUDA toolkit, here CUDA 9.2 is recommended.

Next download cuDNN 7.1.4.

Now unzip the file, change to the cuDNN root directory, and move the header and libraries to the local CUDA Toolkit folder as follows −

tar xvzf cudnn-9.2-linux-x64-v7.1
sudo cp -P cuda/include/cudnn.h /usr/local/cuda/include
sudo cp -P cuda/lib64/libcudnn* /usr/local/cuda/lib64
sudo chmod a+r /usr/local/cuda/include/cudnn.h /usr/local/cuda/lib64/libcudnn*
sudo ldconfig

After setting up the environment for CUDA and cuDNN, follow the steps below to build the MXNet shared library from source −

Step 1− First, we need to install the prerequisite packages. These dependencies are required on Ubuntu version 16.04 or later.

sudo apt-get update
sudo apt-get install -y build-essential git ninja-build ccache libopenblas-dev libopencv-dev cmake

Step 2− In this step, we will download the MXNet source and configure it. First, let us clone the repository by using the following commands −

git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet
cd mxnet
cp config/linux_gpu.cmake config.cmake #for build with CUDA

Step 3− By using the following commands, you can build the MXNet core shared library −

rm -rf build
mkdir -p build && cd build
cmake -GNinja ..
cmake --build .

Two important points regarding the above step are as follows −

If you want to build the Debug version, then specify it as follows −

cmake -DCMAKE_BUILD_TYPE=Debug -GNinja ..

In order to set the number of parallel compilation jobs, specify the following −

cmake --build . --parallel N

Once you have successfully built the MXNet core shared library, you will find libmxnet.so in the build folder in your MXNet project root. This file is required to install the language bindings (optional).
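The Debug and parallel-job variations of Step 3 can be combined. The following sketch only assembles the two CMake command lines as argument lists and does not run anything. The function name and its parameters are hypothetical helpers, while the flags themselves are the ones shown above.

```python
def mxnet_build_commands(debug=False, jobs=None):
    """Return the configure and build commands from Step 3 as argument lists."""
    # Configure step: optionally request a Debug build.
    configure = ["cmake"]
    if debug:
        configure.append("-DCMAKE_BUILD_TYPE=Debug")
    configure += ["-GNinja", ".."]

    # Build step: optionally set the number of parallel compilation jobs.
    build = ["cmake", "--build", "."]
    if jobs is not None:
        build += ["--parallel", str(jobs)]

    return [configure, build]


for command in mxnet_build_commands(debug=True, jobs=4):
    print(" ".join(command))
```

Each list could then be passed to subprocess.run from inside the build directory, if you prefer to script the build instead of typing the commands by hand.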

Central Processing Unit (CPU)

Here, we will use various methods namely Pip, Docker, and Source to install MXNet when we are using CPU for processing −

By using Pip method

You can use the following command to install MXNet on your Linux OS −

pip install mxnet

Apache MXNet also offers MKL-DNN enabled pip packages, which are much faster when running on Intel hardware.

pip install mxnet-mkl
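After either pip command finishes, one way to confirm that the package is visible to your Python interpreter is a check like the sketch below. It relies only on the standard library for the lookup, and both function names are hypothetical.

```python
import importlib.util


def mxnet_is_installed():
    """Return True if a package named mxnet can be found by this interpreter."""
    return importlib.util.find_spec("mxnet") is not None


def installed_mxnet_version():
    """Return the MXNet version string, or None if MXNet is not installed."""
    if not mxnet_is_installed():
        return None
    import mxnet  # only imported once we know the package can be found
    return mxnet.__version__


print("MXNet installed:", mxnet_is_installed())
```

If the check prints False, the package was probably installed for a different Python interpreter or virtual environment than the one running the script.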

By using Docker

You can find the docker images with MXNet at DockerHub, which is available at https://hub.docker.com/u/mxnet. Let us check out the steps below to install MXNet by using Docker with CPU −

Step 1− First, install Docker on your machine by following the Docker installation instructions available at https://docs.docker.com/engine/install/ubuntu/.

Step 2− By using the following command, you can pull the MXNet docker image:

$ sudo docker pull mxnet/python

Now, in order to see if mxnet/python docker image pull was successful, we can list docker images as follows −

$ sudo docker images

For the fastest inference speeds with MXNet, it is recommended to use the latest MXNet with Intel MKL-DNN.

Check the commands below −

$ sudo docker pull mxnet/python:1.3.0_cpu_mkl
$ sudo docker images

From source

To build the MXNet shared library from source with CPU, follow the steps below −

Step 1− First, we need to install the prerequisite packages. These dependencies are required on Ubuntu version 16.04 or later.

sudo apt-get update
sudo apt-get install -y build-essential git ninja-build ccache libopenblas-dev libopencv-dev cmake

Step 2− In this step, we will download the MXNet source and configure it. First, let us clone the repository by using the following commands:

git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet
cd mxnet
cp config/linux.cmake config.cmake

Step 3− By using the following commands, you can build the MXNet core shared library:

rm -rf build
mkdir -p build && cd build
cmake -GNinja ..
cmake --build .

Two important points regarding the above step are as follows −

If you want to build the Debug version, then specify it as follows:

cmake -DCMAKE_BUILD_TYPE=Debug -GNinja ..

In order to set the number of parallel compilation jobs, specify the following−

cmake --build . --parallel N

Once you have successfully built the MXNet core shared library, you will find libmxnet.so in the build folder in your MXNet project root. This file is required to install the language bindings (optional).

MacOS

We can install MXNet on MacOS in the following ways−

Graphical Processing Unit (GPU)

If you plan to build MXNet on MacOS with GPU, then there is NO Pip and Docker method available. The only method in this case is to build it from source.

From source

To build the MXNet shared library from source with GPU, first we need to set up the environment for CUDA and cuDNN. Follow the NVIDIA CUDA Installation Guide, available at https://docs.nvidia.com, and the cuDNN Installation Guide for macOS, available at https://docs.nvidia.com/deeplearning/cudnn/installation/latest/linux.html.

Please note that in 2019 CUDA stopped supporting macOS. In fact, future versions of CUDA may also not support macOS.

Once you set up the environment for CUDA and cuDNN, follow the steps given below to install MXNet from source on OS X (Mac)−

Step 1− First, we need to install the prerequisite packages, as some dependencies are needed on OS X.

xcode-select --install # Install OS X Developer Tools
/usr/bin/ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)" # Install Homebrew
brew install cmake ninja ccache opencv # Install dependencies

We can also build MXNet without OpenCV as opencv is an optional dependency.

Step 2− In this step we will download MXNet source and configure. First let us clone the repository by using following command−

git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet
cd mxnet
cp config/linux.cmake config.cmake

For a GPU-enabled build, it is necessary to install the CUDA dependencies first. When a GPU-enabled build is attempted on a machine without a GPU, the MXNet build cannot autodetect the GPU architecture. In such cases, MXNet will target all available GPU architectures.

Step 3− By using the following commands, you can build MXNet core shared library−

rm -rf build
mkdir -p build && cd build
cmake -GNinja ..
cmake --build .

Two important points regarding the above step are as follows −

If you want to build the Debug version, then specify it as follows −

cmake -DCMAKE_BUILD_TYPE=Debug -GNinja ..

In order to set the number of parallel compilation jobs, specify the following:

cmake --build . --parallel N

Once you have successfully built the MXNet core shared library, you will find libmxnet.dylib in the build folder in your MXNet project root. This file is required to install the language bindings (optional).

Central Processing Unit (CPU)

Here, we will use various methods namely Pip, Docker, and Source to install MXNet when we are using CPU for processing−

By using Pip method

You can use the following command to install MXNet on your macOS −

pip install mxnet

By using Docker

You can find the docker images with MXNet at DockerHub, which is available at https://hub.docker.com/u/mxnet. Let us check out the steps below to install MXNet by using Docker with CPU−

Step 1− First, install Docker on your machine by following the Docker installation instructions available at https://docs.docker.com/docker-for-mac.

Step 2− By using the following command, you can pull the MXNet docker image−

$ docker pull mxnet/python

Now in order to see if mxnet/python docker image pull was successful, we can list docker images as follows−

$ docker images

For the fastest inference speeds with MXNet, it is recommended to use the latest MXNet with Intel MKL-DNN. Check the commands below−

$ docker pull mxnet/python:1.3.0_cpu_mkl
$ docker images

From source

Follow the steps given below to install MXNet from source on OS X (Mac)−

Step 1− First, we need to install the prerequisite packages, as some dependencies are needed on OS X.

xcode-select --install # Install OS X Developer Tools
/usr/bin/ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)" # Install Homebrew
brew install cmake ninja ccache opencv # Install dependencies

We can also build MXNet without OpenCV as opencv is an optional dependency.

Step 2− In this step we will download MXNet source and configure. First, let us clone the repository by using following command−

git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet
cd mxnet
cp config/linux.cmake config.cmake

Step 3− By using the following commands, you can build the MXNet core shared library:

rm -rf build
mkdir -p build && cd build
cmake -GNinja ..
cmake --build .

Two important points regarding the above step are as follows −

If you want to build the Debug version, then specify it as follows −

cmake -DCMAKE_BUILD_TYPE=Debug -GNinja ..

In order to set the number of parallel compilation jobs, specify the following−

cmake --build . --parallel N

Once you have successfully built the MXNet core shared library, you will find libmxnet.dylib in the build folder in your MXNet project root. This file is required to install the language bindings (optional).

Windows OS

To install MXNet on Windows, following are the prerequisites−

Minimum System Requirements

Windows 7, 10, Server 2012 R2, or Server 2016

Visual Studio 2015 or 2017 (any type)

Python 2.7 or 3.6

pip

Recommended System Requirements

Windows 10, Server 2012 R2, or Server 2016

Visual Studio 2017

At least one NVIDIA CUDA-enabled GPU

MKL-enabled CPU: Intel Xeon processor, Intel Core processor family, Intel Atom processor, or Intel Xeon Phi processor

Python 2.7 or 3.6

pip

Graphical Processing Unit (GPU)

By using Pip method−

If you plan to build MXNet on Windows with NVIDIA GPUs, there are two options for installing MXNet with CUDA support with a Python package−

Install with CUDA Support

Follow the steps below to set up MXNet with CUDA.

Step 1− First install Microsoft Visual Studio 2017 or Microsoft Visual Studio 2015.

Step 2− Next, download and install NVIDIA CUDA. It is recommended to use CUDA versions 9.2 or 9.0 because some issues with CUDA 9.1 have been identified in the past.

Step 3− Now, download and install NVIDIA_CUDA_DNN.

Step 4− Finally, by using following pip command, install MXNet with CUDA−

pip install mxnet-cu92

Install with CUDA and MKL Support

Follow the steps below to set up MXNet with CUDA and MKL.

Step 1− First install Microsoft Visual Studio 2017 or Microsoft Visual Studio 2015.

Step 2− Next, download and install Intel MKL.

Step 3− Now, download and install NVIDIA CUDA.

Step 4− Now, download and install NVIDIA_CUDA_DNN.

Step 5− Finally, by using the following pip command, install MXNet with CUDA and MKL −

pip install mxnet-cu92mkl

From source

To build the MXNet core library from source with GPU, we have the following two options−

Option 1− Build with Microsoft Visual Studio 2017

In order to build and install MXNet yourself by using Microsoft Visual Studio 2017, you need the following dependencies.

Install/update Microsoft Visual Studio.

If Microsoft Visual Studio is not already installed on your machine, first download and install it.

It will prompt you to install Git. Install it as well.

If Microsoft Visual Studio is already installed on your machine but you want to update it then proceed to the next step to modify your installation. Here you will be given the opportunity to update Microsoft Visual Studio as well.

Follow the instructions for opening the Visual Studio Installer available at https://docs.microsoft.com/en-us to modify Individual components.

In the Visual Studio Installer application, update as required. After that look for and check VC++ 2017 version 15.4 v14.11 toolset and click Modify.

Now by using the following command, change the version of the Microsoft VS2017 to v14.11−

"C:\Program Files (x86)\Microsoft Visual Studio\2017\Community\VC\Auxiliary\Build\vcvars64.bat" -vcvars_ver=14.11

Next, you need to download and install CMake, available at https://cmake.org/download/. CMake v3.12.2 is recommended because it is tested with MXNet.

Now, download and run the OpenCV package available at https://sourceforge.net/projects/opencvlibrary/, which will unzip several files. It is up to you whether to place them in another directory. Here, we will use the path C:\utils (mkdir C:\utils) as our default path.

Next, we need to set the environment variable OpenCV_DIR to point to the OpenCV build directory that we have just unzipped. To do this, open a command prompt and type set OpenCV_DIR=C:\utils\opencv\build.

One important point is that if you do not have the Intel MKL (Math Kernel Library) installed, then you can install it.

Another open-source package you can use is OpenBLAS. The instructions that follow assume that you are using OpenBLAS.

So, download the OpenBLAS package, available at https://sourceforge.net, unzip the file, rename it to OpenBLAS, and put it under C:\utils.

Next, we need to set the environment variable OpenBLAS_HOME to point to the OpenBLAS directory that contains the include and lib directories. To do this, open a command prompt and type set OpenBLAS_HOME=C:\utils\OpenBLAS.
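Before starting a long build, it can help to confirm that both variables are actually set in the current session. The sketch below is one possible check using only the standard library. The function name and the REQUIRED_VARS tuple are hypothetical, while the two variable names come from the steps above.

```python
import os

# The two variables the build steps above ask us to set.
REQUIRED_VARS = ("OpenCV_DIR", "OpenBLAS_HOME")


def missing_build_vars(env=None):
    """Return the names of required variables that are unset or empty."""
    # Use the real process environment unless a mapping is passed in.
    env = os.environ if env is None else env
    return [name for name in REQUIRED_VARS if not env.get(name)]


missing = missing_build_vars()
if missing:
    print("Please set:", ", ".join(missing))
else:
    print("OpenCV_DIR and OpenBLAS_HOME are both set.")
```

Note that a variable set with the set command is only visible in the command prompt window where it was typed, so the check should be run from that same window.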

Now, download and install CUDA, available at https://developer.nvidia.com. Note that if you already had CUDA and then installed Microsoft VS2017, you need to reinstall CUDA now, so that you get the CUDA toolkit components for Microsoft VS2017 integration.

Next, you need to download and install cuDNN.

Next, you also need to download and install Git, available at https://gitforwindows.org/.

Once you have installed all the required dependencies, follow the steps given below to build the MXNet source code−

Step 1− Open a command prompt in Windows.

Step 2− Now, by using the following commands, download the MXNet source code from GitHub:

cd C:\
git clone https://github.com/apache/incubator-mxnet.git --recursive

Step 3− Next, verify the following −

DCUDNN_INCLUDE and DCUDNN_LIBRARY (passed to CMake as -DCUDNN_INCLUDE and -DCUDNN_LIBRARY in Step 5) point to the include folder and the cudnn.lib file of your CUDA installation.

C:\incubator-mxnet is the location of the source code you just cloned in the previous step.

Step 4− Next, by using the following commands, create a build directory and change to it, for example −

mkdir C:\incubator-mxnet\build
cd C:\incubator-mxnet\build

Step 5− Now, by using cmake, compile the MXNet source code as follows−

cmake -G "Visual Studio 15 2017 Win64" -T cuda=9.2,host=x64 -DUSE_CUDA=1 -DUSE_CUDNN=1 -DUSE_NVRTC=1 -DUSE_OPENCV=1 -DUSE_OPENMP=1 -DUSE_BLAS=open -DUSE_LAPACK=1 -DUSE_DIST_KVSTORE=0 -DCUDA_ARCH_LIST=Common -DCUDA_TOOLSET=9.2 -DCUDNN_INCLUDE=C:\cuda\include -DCUDNN_LIBRARY=C:\cuda\lib\x64\cudnn.lib "C:\incubator-mxnet"

Step 6− Once CMake has completed successfully, use the following command to compile the MXNet source code −

msbuild mxnet.sln /p:Configuration=Release;Platform=x64 /maxcpucount

Option 2− Build with Microsoft Visual Studio 2015

In order to build and install MXNet yourself by using Microsoft Visual Studio 2015, you need the following dependencies.

Install/update Microsoft Visual Studio 2015. The minimum requirement to build MXNet from source is Update 3 of Microsoft Visual Studio 2015. You can use the Tools -> Extensions and Updates... | Product Updates menu to upgrade it.

Next, you need to download and install CMake, available at https://cmake.org/download/. CMake v3.12.2 is recommended because it is tested with MXNet.

Now, download and run the OpenCV package available at https://excellmedia.dl.sourceforge.net, which will unzip several files. It is up to you whether to place them in another directory.

Next, we need to set the environment variable OpenCV_DIR to point to the OpenCV build directory that we have just unzipped. To do this, open a command prompt and type set OpenCV_DIR=C:\opencv\build\x64\vc14\bin.

One important point is that if you do not have the Intel MKL (Math Kernel Library) installed, then you can install it.

Another open-source package you can use is OpenBLAS. The instructions that follow assume that you are using OpenBLAS.

So, download the OpenBLAS package, available at https://excellmedia.dl.sourceforge.net, unzip the file, rename it to OpenBLAS, and put it under C:\utils.

Next, we need to set the environment variable OpenBLAS_HOME to point to the OpenBLAS directory that contains the include and lib directories. You can find the directory in C:\Program files (x86)\OpenBLAS\.

Note that if you already had CUDA and then installed Microsoft VS2015, you need to reinstall CUDA now, so that you get the CUDA toolkit components for Microsoft VS2015 integration.

Next, you need to download and install cuDNN.

Now, we need to set the environment variable CUDACXX to point to the CUDA compiler (C:\Program Files\NVIDIA GPU Computing Toolkit\CUDA\v9.1\bin\nvcc.exe for example).

Similarly, we also need to set the environment variable CUDNN_ROOT to point to the cuDNN directory that contains the include, lib and bin directories (C:\Downloads\cudnn-9.1-windows7-x64-v7\cuda for example).

Once you have installed all the required dependencies, follow the steps given below to build the MXNet source code−

Step 1− First, download the MXNet source code from GitHub−

cd C:\
git clone https://github.com/apache/incubator-mxnet.git --recursive

Step 2− Next, use CMake to create a Visual Studio solution in ./build.

Step 3− Now, in Visual Studio, we need to open the solution file, .sln, and compile it. These commands will produce a library called mxnet.dll in the ./build/Release/ or ./build/Debug folder.

Step 4− Once CMake has completed successfully, use the following command to compile the MXNet source code −

msbuild mxnet.sln /p:Configuration=Release;Platform=x64 /maxcpucount

Central Processing Unit (CPU)

Here, we will use the Pip and Docker methods to install MXNet when we are using CPU for processing −

By using Pip method

If you plan to build MXNet on Windows with CPUs, there are two options for installing MXNet using a Python package−

Install with CPUs

Use the following command to install MXNet with CPU with Python−

pip install mxnet

Install with Intel CPUs

As discussed above, MXNet has experimental support for Intel MKL as well as MKL-DNN. Use the following command to install MXNet with Intel CPU with Python−

pip install mxnet-mkl

By using Docker

You can find the Docker images with MXNet at DockerHub, available at https://hub.docker.com/u/mxnet. Let us check out the steps below to install MXNet by using Docker with CPU −

Step 1− First, install Docker on your machine by following the Docker installation instructions, which can be read at https://docs.docker.com/docker-for-mac/install.

Step 2− By using the following command, you can pull the MXNet docker image−

$ docker pull mxnet/python

Now in order to see if mxnet/python docker image pull was successful, we can list docker images as follows−

$ docker images

For the fastest inference speeds with MXNet, it is recommended to use the latest MXNet with Intel MKL-DNN.

Check the commands below−

$ docker pull mxnet/python:1.3.0_cpu_mkl
$ docker images

Installing MXNet On Cloud and Devices

This section highlights how to install Apache MXNet on Cloud and on devices. Let us begin by learning about installing MXNet on cloud.

Installing MXNet On Cloud

You can also get Apache MXNet on several cloud providers with Graphical Processing Unit (GPU) support. Two other kinds of support you can find are as follows −

- GPU/CPU-hybrid support for use cases like scalable inference.

- Fractional GPU support with AWS Elastic Inference.

The following cloud providers offer GPU support with different virtual machines for Apache MXNet −

The Alibaba Console

You can create the NVIDIA GPU Cloud Virtual Machine (VM) available at https://docs.nvidia.com/ngc with the Alibaba Console and use Apache MXNet.

Amazon Web Services

Amazon Web Services also provides GPU support and offers the following services for Apache MXNet −

Amazon SageMaker

It manages training and deployment of Apache MXNet models.

AWS Deep Learning AMI

It provides preinstalled Conda environments for both Python 2 and Python 3 with Apache MXNet, CUDA, cuDNN, MKL-DNN, and AWS Elastic Inference.

Dynamic Training on AWS

It supports training through an experimental manual EC2 setup as well as a semi-automated CloudFormation setup.

You can also use the NVIDIA VM, available at https://aws.amazon.com, with Amazon Web Services.

Google Cloud Platform

Google also provides an NVIDIA GPU cloud image, available at https://console.cloud.google.com, to work with Apache MXNet.

Microsoft Azure

Microsoft Azure Marketplace also provides an NVIDIA GPU cloud image, available at https://azuremarketplace.microsoft.com, to work with Apache MXNet.

Oracle Cloud

Oracle also provides an NVIDIA GPU cloud image, available at https://docs.cloud.oracle.com, to work with Apache MXNet.

Central Processing Unit (CPU)

Apache MXNet works on every cloud provider's CPU-only instances. There are various methods to install it, such as −

Python pip install instructions.

Docker instructions.

Preinstalled option like Amazon Web Services which provides AWS Deep Learning AMI (having preinstalled Conda environment for both Python 2 and Python 3 with MXNet and MKL-DNN).

Installing MXNet on Devices

Let us learn how to install MXNet on devices.

Raspberry Pi

You can also run Apache MXNet on Raspberry Pi 3B devices, as MXNet also supports the Raspbian ARM-based OS. In order to run MXNet smoothly on the Raspberry Pi 3, it is recommended to have a device with more than 1 GB of RAM and an SD card with at least 4 GB of free space.
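A quick way to check the free-space recommendation before starting is sketched below with the Python standard library. The function name is hypothetical, and treating 1 GB as 1024 ** 3 bytes is an assumption about how the recommendation is meant.

```python
import shutil


def has_enough_free_space(path=".", min_free_gb=4):
    """Return True if the filesystem holding path has min_free_gb or more free."""
    # disk_usage reports total, used, and free space in bytes.
    free_bytes = shutil.disk_usage(path).free
    return free_bytes >= min_free_gb * 1024 ** 3


print("Enough free space for MXNet:", has_enough_free_space())
```

Run it from a directory on the SD card, such as your home directory, so that the reported free space refers to the card itself.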

The following are the ways in which you can build MXNet for the Raspberry Pi and install the Python bindings for the library −

Quick installation

The pre-built Python wheel can be used on a Raspberry Pi 3B with Stretch for quick installation. One of the important issues with this method is that, we need to install several dependencies to get Apache MXNet to work.

Docker installation

You can follow the Docker installation instructions, which are available at https://docs.docker.com/engine/install/ubuntu/, to install Docker on your machine. The Community Edition (CE) is sufficient for this purpose.

Native Build (from source)

In order to install MXNet from source, we need to complete the following two steps −

Step 1

Build the shared library from the Apache MXNet C++ source code

To build the shared library on Raspbian Wheezy and later, we need the following dependencies:

Git− It is required to pull code from GitHub.

Libblas− It is required for linear algebraic operations.

Libopencv − It is required for computer vision related operations. However, it is optional, and you can skip it if you would like to save RAM and disk space.

C++ Compiler − It is required to compile and build the MXNet source code. The following compilers support C++11 and are supported −

G++ (4.8 or later version)

Clang (3.9 - 6)

Use the following commands to install the above-mentioned dependencies−

sudo apt-get update

sudo apt-get -y install git cmake ninja-build build-essential g++-4.9 c++-4.9 liblapack* libblas* libopencv* libopenblas* python3-dev python-dev virtualenv

Next, we need to clone the MXNet source code repository. To do so, run the following commands in your home directory −

git clone https://github.com/apache/incubator-mxnet.git --recursive

cd incubator-mxnet

Now, build the shared library with the following commands:

mkdir -p build && cd build

cmake -DUSE_SSE=OFF -DUSE_CUDA=OFF -DUSE_OPENCV=ON -DUSE_OPENMP=ON -DUSE_MKL_IF_AVAILABLE=OFF -DUSE_SIGNAL_HANDLER=ON -DCMAKE_BUILD_TYPE=Release -GNinja ..

ninja -j$(nproc)

Once you execute the above commands, the build process starts; it will take a couple of hours to finish. You will get a file named libmxnet.so in the build directory.

Step 2

Install the supported language-specific packages for Apache MXNet

In this step, we will install the MXNet Python bindings. To do so, we need to run the following commands in the MXNet directory −

cd python

pip install --upgrade pip

pip install -e .

Alternatively, with the following command, you can also create a whl package installable with pip−

ci/docker/runtime_functions.sh build_wheel python/ $(realpath build)

NVIDIA Jetson Devices

You can also run Apache MXNet on NVIDIA Jetson devices, such as the TX2 or Nano, as MXNet also supports the Ubuntu AArch64-based OS. In order to run MXNet smoothly on NVIDIA Jetson devices, it is necessary to have CUDA installed on your Jetson device.

You can build MXNet for NVIDIA Jetson devices in the following ways:

By using a Jetson MXNet pip wheel for Python development

From source

However, before building MXNet in either of the above-mentioned ways, you need to install the following dependencies on your Jetson device −

Python Dependencies

In order to use the Python API, we need the following dependencies−

sudo apt update

sudo apt -y install build-essential git graphviz libatlas-base-dev libopencv-dev python-pip

sudo pip install --upgrade pip setuptools

sudo pip install graphviz==0.8.4 jupyter numpy==1.15.2

Clone the MXNet source code repository

By using the following git command in your home directory, clone the MXNet source code repository−

git clone --recursive https://github.com/apache/incubator-mxnet.git mxnet

Setup environment variables

Add the following to the .profile file in your home directory −

export PATH=/usr/local/cuda/bin:$PATH

export MXNET_HOME=$HOME/mxnet/

export PYTHONPATH=$MXNET_HOME/python:$PYTHONPATH

Now, apply the change immediately with the following command−

source .profile

Configure CUDA

Before configuring CUDA, use nvcc to check which version of CUDA is installed −

nvcc --version

If more than one CUDA version is installed on your device or computer and you want to switch CUDA versions, replace the symbolic link with one that points to the version you want −

sudo rm /usr/local/cuda

sudo ln -s /usr/local/cuda-10.0 /usr/local/cuda

The above commands will switch to CUDA 10.0, which is preinstalled on the NVIDIA Jetson Nano.

Once you are done with the above-mentioned prerequisites, you can install MXNet on NVIDIA Jetson devices. So, let us understand the ways in which you can install MXNet −

By using a Jetson MXNet pip wheel for Python development − If you want to use a prepared Python wheel, download one of the following to your Jetson and install it −

MXNet 1.4.0 (for Python 3) available at https://docs.docker.com

MXNet 1.4.0 (for Python 2) available at https://docs.docker.com

Native Build (from source)

In order to install MXNet from source, we need to complete the following two steps −

Step 1

Build the shared library from the Apache MXNet C++ source code

To build the shared library from the Apache MXNet C++ source code, you can either use the Docker method or do it manually −

Docker method

In this method, you first need to install Docker and be able to run it without sudo (which is also explained in previous steps). Once done, run the following to execute cross-compilation via Docker −

$MXNET_HOME/ci/build.py -p jetson

Manual

In this method, first copy the Jetson cross-compilation settings to config.mk (with the command below) so that MXNet is built with CUDA bindings and can leverage the Graphics Processing Units (GPUs) on NVIDIA Jetson devices:

cp $MXNET_HOME/make/crosscompile.jetson.mk config.mk

After copying the file, you need to edit config.mk to make some additional changes for the NVIDIA Jetson device.

For this, update the following settings−

Update the CUDA path: USE_CUDA_PATH = /usr/local/cuda

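Add -gencode arch=compute_62,code=sm_62 to the CUDA_ARCH setting (this targets the Jetson TX2; the Jetson Nano has compute capability 5.3, i.e. compute_53 and sm_53).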

Update the NVCC settings: NVCCFLAGS := -m64

Turn on OpenCV: USE_OPENCV = 1

Now, to ensure that MXNet builds with Pascal's hardware-level low-precision acceleration, we need to edit the Mshadow Makefile as follows −

MSHADOW_CFLAGS += -DMSHADOW_USE_PASCAL=1

Finally, you can build the complete Apache MXNet library with the following commands −

cd $MXNET_HOME

make -j $(nproc)

Once you execute the above commands, the build process starts; it will take a couple of hours to finish. You will get a file named libmxnet.so in the mxnet/lib directory.

Step 2

Install the Apache MXNet Python Bindings

In this step, we will install the MXNet Python bindings. To do so, we need to run the following commands −

cd $MXNET_HOME/python

sudo pip install -e .

Once you are done with the above steps, you are ready to run MXNet on your NVIDIA Jetson TX2 or Nano device. You can verify the installation by running the following in a Python interpreter −

import mxnet

mxnet.__version__

It will return the version number if everything is properly working.
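The same check can be scripted so that it fails gracefully when the library is missing. The following is a minimal sketch; the helper name installed_version is hypothetical and is not part of MXNet.

```python
import importlib


def installed_version(module_name="mxnet"):
    """Return the module's __version__ string, or None if it cannot be imported."""
    try:
        module = importlib.import_module(module_name)
    except (ImportError, OSError):
        # ImportError: package not installed; OSError: a native library failed to load.
        return None
    return getattr(module, "__version__", None)


version = installed_version()
print("MXNet version:", version if version else "not installed")
```

A result of "not installed" usually means that the Python bindings were installed into a different interpreter than the one running the script.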

Apache MXNet - Toolkits and Ecosystem

To support the research and development of Deep Learning applications across many fields, Apache MXNet provides a rich ecosystem of toolkits, libraries, and more. Let us explore them −

ToolKits

Following are some of the most used and important toolkits provided by MXNet −

GluonCV

As the name implies, GluonCV is a Gluon toolkit for computer vision powered by MXNet. It provides implementations of state-of-the-art DL (Deep Learning) algorithms in computer vision (CV). With the help of the GluonCV toolkit, engineers, researchers, and students can validate new ideas and learn CV easily.

Given below are some of the features of GluonCV −

It provides training scripts for reproducing state-of-the-art results reported in the latest research.

More than 170 high-quality pretrained models.

It embraces a flexible development pattern.

GluonCV is easy to optimize. We can deploy it without retaining a heavyweight DL framework.

It provides carefully designed APIs that greatly lessen the implementation intricacy.

Community support.

Easy to understand implementations.

Following are the supported applications by GluonCV toolkit:

Image Classification

Object Detection

Semantic Segmentation

Instance Segmentation

Pose Estimation

Video Action Recognition

We can install GluonCV by using pip as follows −

pip install --upgrade mxnet gluoncv

GluonNLP

As the name implies, GluonNLP is a Gluon toolkit for Natural Language Processing (NLP) powered by MXNet. It provides implementations of state-of-the-art DL (Deep Learning) models in NLP.

With the help of the GluonNLP toolkit, engineers, researchers, and students can build blocks for text data pipelines and models. Based on these models, they can quickly prototype research ideas and products.

Given below are some of the features of GluonNLP:

It provides training scripts for reproducing state-of-the-art results reported in the latest research.

Set of pretrained models for common NLP tasks.

It provides carefully designed APIs that greatly lessen the implementation intricacy.

Community support.

It also provides tutorials to help you get started on new NLP tasks.

Following are the NLP tasks we can implement with GluonNLP toolkit −

Word Embedding

Language Model

Machine Translation

Text Classification

Sentiment Analysis

Natural Language Inference

Text Generation

Dependency Parsing

Named Entity Recognition

Intent Classification and Slot Labeling

We can install GluonNLP by using pip as follows −

pip install --upgrade mxnet gluonnlp

GluonTS

As the name implies, GluonTS is a Gluon toolkit for Probabilistic Time Series Modeling powered by MXNet.

It provides the following features −

State-of-the-art (SOTA) deep learning models ready to be trained.

The utilities for loading as well as iterating over time-series datasets.

Building blocks to define your own model.

With the help of the GluonTS toolkit, engineers, researchers, and students can train and evaluate any of the built-in models on their own data, quickly experiment with different solutions, and come up with a solution for their time series tasks.

They can also use the provided abstractions and building blocks to create custom time series models, and rapidly benchmark them against baseline algorithms.

We can install GluonTS by using pip as follows −

pip install gluonts

GluonFR

As the name implies, it is an Apache MXNet Gluon toolkit for FR (Face Recognition). It provides the following features −

State-of-the-art (SOTA) deep learning models in face recognition.

The implementation of SoftmaxCrossEntropyLoss, ArcLoss, TripletLoss, RingLoss, CosLoss/AMsoftmax, L2-Softmax, A-Softmax, CenterLoss, ContrastiveLoss, LGM Loss, and other losses.

In order to install Gluon Face, we need Python 3.5 or later. We also need to install GluonCV and MXNet first, as follows −

pip install gluoncv --pre

pip install mxnet-mkl --pre --upgrade

pip install mxnet-cuXXmkl --pre --upgrade # if cuda XX is installed

Once you have installed the dependencies, you can use one of the following commands to install GluonFR −

From Source

pip install git+https://github.com/THUFutureLab/gluon-face.git@master

Pip

pip install gluonfr

Ecosystem

Now let us explore MXNet's rich libraries, packages, and frameworks −

Coach RL

Coach is a Python Reinforcement Learning (RL) framework created by Intel AI Lab. It enables easy experimentation with state-of-the-art RL algorithms. Coach RL supports Apache MXNet as a back end and allows simple integration of new environments to solve.

In order to make existing components easy to extend and reuse, Coach RL decouples the basic reinforcement learning components such as algorithms, environments, NN architectures, and exploration policies.

Following are the agents and supported algorithms for Coach RL framework −

Value Optimization Agents

Deep Q Network (DQN)

Double Deep Q Network (DDQN)

Dueling Q Network

Mixed Monte Carlo (MMC)

Persistent Advantage Learning (PAL)

Categorical Deep Q Network (C51)

Quantile Regression Deep Q Network (QR-DQN)

N-Step Q Learning

Neural Episodic Control (NEC)

Normalized Advantage Functions (NAF)

Rainbow

Policy Optimization Agents

Policy Gradients (PG)

Asynchronous Advantage Actor-Critic (A3C)

Deep Deterministic Policy Gradients (DDPG)

Proximal Policy Optimization (PPO)

Clipped Proximal Policy Optimization (CPPO)

Generalized Advantage Estimation (GAE)

Sample Efficient Actor-Critic with Experience Replay (ACER)

Soft Actor-Critic (SAC)

Twin Delayed Deep Deterministic Policy Gradient (TD3)

General Agents

Direct Future Prediction (DFP)

Imitation Learning Agents

Behavioral Cloning (BC)

Conditional Imitation Learning

Hierarchical Reinforcement Learning Agents

Hierarchical Actor Critic (HAC)

Deep Graph Library

Deep Graph Library (DGL), developed by the NYU and AWS Shanghai teams, is a Python package that provides easy implementations of Graph Neural Networks (GNNs) on top of MXNet. It also provides easy implementations of GNNs on top of other major deep learning libraries such as PyTorch and Gluon.

Deep Graph Library is free software. It is available on Linux distributions no older than Ubuntu 16.04, on macOS, and on Windows 7 or later. It also requires Python 3.5 or later.

Following are the features of DGL −

No Migration cost − There is no migration cost for using DGL as it is built on top of popular existing DL frameworks.

Message Passing − DGL provides message passing and it has versatile control over it. The message passing ranges from low-level operations such as sending along selected edges to high-level control such as graph-wide feature updates.

Smooth Learning Curve − It is quite easy to learn and use DGL as the powerful user-defined functions are flexible as well as easy to use.

Transparent Speed Optimization − DGL provides transparent speed optimization by doing automatic batching of computations and sparse matrix multiplication.

High performance − In order to achieve maximum efficiency, DGL automatically batches DNN (deep neural networks) training on one or many graphs together.

Easy & friendly interface − DGL provides easy-to-use interfaces for edge feature access as well as graph structure manipulation.
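To make the message passing idea concrete, the following is a minimal sketch in plain Python. It does not use DGL's API; the function name propagate and the edge-list representation are assumptions made purely for illustration.

```python
def propagate(edges, features):
    """One round of message passing: each node sums the features of its in-neighbours."""
    updated = {node: 0.0 for node in features}
    for src, dst in edges:
        # The "message" sent along an edge is simply the source node's feature.
        updated[dst] += features[src]
    return updated


edges = [(0, 1), (0, 2), (1, 2)]       # directed edges (source, destination)
features = {0: 1.0, 1: 2.0, 2: 3.0}    # one scalar feature per node
new_features = propagate(edges, features)
print(new_features)
```

A library such as DGL batches this kind of update and expresses it as sparse matrix multiplication instead of looping over edges one by one.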

InsightFace

InsightFace is a Deep Learning toolkit for face analysis, powered by MXNet, that provides implementations of SOTA (state-of-the-art) face analysis algorithms in computer vision. It provides −

High-quality large set of pre-trained models.

State-of-the-art (SOTA) training scripts.

InsightFace is easy to optimize. We can deploy it without retaining a heavyweight DL framework.

It provides carefully designed APIs that greatly lessen the implementation intricacy.

Building blocks to define your own model.

We can install InsightFace by using pip as follows −

pip install --upgrade insightface

Please note that before installing InsightFace, you need to install the correct MXNet package for your system configuration.

Keras-MXNet

Keras is a high-level Neural Network (NN) API written in Python, and Keras-MXNet provides backend support for it, so that Keras can run on top of the high-performance and scalable Apache MXNet DL framework.

The features of Keras-MXNet are mentioned below −

Allows easy, smooth, and fast prototyping through user friendliness, modularity, and extensibility.

Supports both CNN (Convolutional Neural Networks) and RNN (Recurrent Neural Networks), as well as combinations of the two.

Runs on both Central Processing Unit (CPU) and Graphics Processing Unit (GPU).

Can run on one or multiple GPUs.

In order to work with this backend, you first need to install keras-mxnet as follows −

pip install keras-mxnet

Now, if you are using GPUs then install MXNet with CUDA 9 support as follows −

pip install mxnet-cu90

But if you are using CPU-only then install basic MXNet as follows −

pip install mxnet

MXBoard

MXBoard is a logging tool, written in Python, that is used to record MXNet data frames and display them in TensorBoard. MXBoard is meant to follow the tensorboard-pytorch API. It supports most of the data types in TensorBoard.

Some of them are mentioned below −

Graph

Scalar

Histogram

Embedding

Image

Text

Audio

Precision-Recall Curve

MXFusion

MXFusion is a modular probabilistic programming library with deep learning. MXFusion allows us to fully exploit modularity, which is a key feature of deep learning libraries, for probabilistic programming. It is simple to use and provides users a convenient interface for designing probabilistic models and applying them to real-world problems.

MXFusion is verified on Python 3.4 and later on macOS and Linux. In order to install MXFusion, we first need to install the following dependencies −

MXNet >= 1.3

Networkx >= 2.1

With the help of following pip command, you can install MXFusion −

pip install mxfusion

TVM

Apache TVM is an open-source end-to-end deep learning compiler stack for hardware backends such as CPUs, GPUs, and specialized accelerators. It aims to fill the gap between productivity-focused deep learning frameworks and performance-oriented hardware backends. With the MXNet 1.6.0 release, users can leverage Apache (incubating) TVM to implement high-performance operator kernels in the Python programming language.

Apache TVM started as a research project at the SAMPL group of the Paul G. Allen School of Computer Science & Engineering, University of Washington. It is now an effort undergoing incubation at The Apache Software Foundation (ASF), driven by an open source community (OSC) that involves multiple industry as well as academic institutions under the Apache way.

Following are the main features of Apache (incubating) TVM −

Simplifies the former C++ based development process.

Enables sharing the same implementation across multiple hardware backends such as CPUs, GPUs, etc.

Compiles DL models from various frameworks such as Keras, MXNet, PyTorch, TensorFlow, CoreML, and DarkNet into minimum deployable modules on diverse hardware backends.

It also provides us the infrastructure to automatically generate and optimize tensor operators with better performance.

Xfer

Xfer is a transfer learning framework written in Python. It takes an MXNet model and either trains a meta-model or modifies the model for a new target dataset.

In simple words, Xfer is a Python library that allows users to quickly and easily transfer knowledge stored in DNNs (deep neural networks).

Xfer can be used −

For the classification of data of arbitrary numeric format.

For the common cases of image or text data.

As a pipeline that spans from extracting features to training a repurposer (an object that performs classification in the target task).

Following are the features of Xfer:

Resource efficiency

Data efficiency

Easy access to neural networks

Uncertainty modeling

Rapid prototyping

Utilities for feature extraction from NN

Apache MXNet - System Architecture

This chapter will help you understand the MXNet system architecture. Let us begin by learning about the MXNet modules.

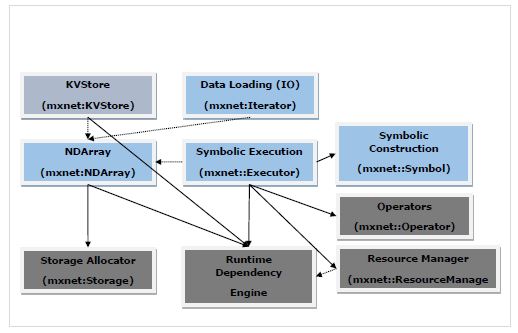

MXNet Modules

The diagram above shows the MXNet system architecture, including its major modules and components and their interactions.

In the above diagram −

The modules in blue color boxes are User Facing Modules.

The modules in green color boxes are System Modules.

A solid arrow represents a strong dependency, i.e. the module relies heavily on the interface.

A dotted arrow represents a light dependency, i.e. the data structure is used for convenience and interface consistency and could be replaced by alternatives.

Let us discuss the user-facing and system modules in more detail.

User-facing Modules

The user-facing modules are as follows −

NDArray − It provides dynamic, asynchronous n-dimensional arrays that enable flexible imperative programs in Apache MXNet.

KVStore − It acts as an interface for efficient parameter synchronization. In KVStore, KV stands for Key-Value, so it is a key-value store interface.

Data Loading (IO) − This user-facing module is used for efficient distributed data loading and augmentation.

Symbol Execution − It is a static symbolic graph executor. It provides efficient symbolic graph execution and optimization.

Symbol Construction − This user-facing module provides the user a way to construct a computation graph, i.e. the net configuration.

System Modules

The system modules are as follows −

Storage Allocator − As the name suggests, this system module allocates and recycles memory blocks efficiently on the host (CPU) and on different devices (GPUs).

Runtime Dependency Engine − This module schedules and executes operations according to their read/write dependencies.

Resource Manager − The Resource Manager (RM) system module manages global resources such as the random number generator and temporary space.

Operator − The Operator system module consists of all the operators that define static forward and gradient calculation, i.e. backpropagation.

Apache MXNet - System Components

Here, the system components in Apache MXNet are explained in detail. First, we will study the execution engine in MXNet.

Execution Engine

Apache MXNet's execution engine is very versatile. We can use it for deep learning as well as for any domain-specific problem that requires executing a set of functions according to their dependencies. It is designed so that functions with dependencies are serialized, whereas functions with no dependencies can be executed in parallel.

Core Interface

The API given below is the core interface for Apache MXNet's execution engine −

virtual void PushSync(Fn exec_fun, Context exec_ctx, std::vector<VarHandle> const& const_vars, std::vector<VarHandle> const& mutate_vars) = 0;

The above API has the following −

exec_fun − The core interface API of MXNet allows us to push the function named exec_fun, along with its context information and dependencies, to the execution engine.

exec_ctx − The context information in which the above-mentioned function exec_fun should be executed.

const_vars − These are the variables that the function reads from.

mutate_vars − These are the variables that are to be modified.

The execution engine provides its user the guarantee that the execution of any two functions that modify a common variable is serialized in their push order.

Function

Following is the function type of the execution engine of Apache MXNet −

using Fn = std::function<void(RunContext)>;

In the above function type, RunContext contains the runtime information, which is determined by the execution engine. The definition of RunContext is as follows −

struct RunContext {

// stream pointer which could be safely cast to

// cudaStream_t* type

void *stream;

};

Given below are some important points about the execution engine's functions −

All the functions are executed by the internal threads of MXNet's execution engine.

It is not good practice to push a blocking function to the execution engine, because the function will occupy an execution thread and reduce the total throughput.

For such cases, MXNet provides another, asynchronous function type as follows −

using Callback = std::function<void()>;

using AsyncFn = std::function<void(RunContext, Callback)>;

In an AsyncFn, we can hand the heavy part of the work to our own threads and return immediately; the execution engine does not consider the function finished until we call the callback function.
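The callback pattern can be illustrated with a small Python sketch. It is not the MXNet engine: async_fn, the dictionary standing in for RunContext, and the threading.Event standing in for the engine's completion callback are all assumptions made for illustration.

```python
import threading


def async_fn(run_ctx, on_complete):
    """Hand the heavy part to our own thread and return immediately."""
    def heavy_work():
        run_ctx["result"] = sum(range(1000))  # stand-in for a slow computation
        on_complete()  # only now may the engine treat the function as finished

    threading.Thread(target=heavy_work).start()


done = threading.Event()  # plays the role of the engine's completion callback
run_ctx = {}              # hypothetical stand-in for RunContext
async_fn(run_ctx, done.set)
finished = done.wait(timeout=5)
print(finished, run_ctx["result"])
```

The call to async_fn returns before the work is done, so the calling thread stays free; completion is signalled only through the callback.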

Context

In Context, we can specify the context in which the function is to be executed. This usually includes the following −

Whether the function should be run on a CPU or a GPU.

If we specify GPU in the Context, then which GPU to use.

There is an important difference between Context and RunContext. Context holds the device type and device id, whereas RunContext holds the information that can be decided only at runtime.

VarHandle

VarHandle, used to specify the dependencies of functions, is like a token (provided by the execution engine) that we can use to represent the external resources a function can modify or use.

But why do we need VarHandle? It is because the Apache MXNet engine is designed to be decoupled from the other MXNet modules.

Following are some important points about VarHandle −

It is lightweight, so creating, deleting, or copying a variable incurs little operating cost.

We need to specify the immutable variables, i.e. the variables that the function only reads, in const_vars.

We need to specify the mutable variables, i.e. the variables that the function modifies, in mutate_vars.

The rule used by the execution engine to resolve dependencies among functions is that any two functions that share a variable, where at least one of them modifies it, are executed serially in their push order.

For creating a new variable, we can use the NewVar() API.

For deleting a variable, we can use the PushDelete API.

Let us understand its working with a simple example −

Suppose we have two functions, F1 and F2, that both mutate the variable V2. In that case, F2 is guaranteed to be executed after F1 if F2 is pushed after F1. On the other hand, if F1 and F2 both only read V2, then their actual execution order could be random.
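The serialization rule can be written down as a small predicate. The sketch below is plain Python and not engine code; must_serialize and the (const_vars, mutate_vars) tuple layout are hypothetical names chosen for illustration.

```python
def must_serialize(first, second):
    """Return True when two pushed functions have to run in push order.

    Each function is described as (const_vars, mutate_vars), both sets.
    """
    first_reads, first_writes = first
    second_reads, second_writes = second
    return bool(
        first_writes & (second_reads | second_writes)
        or second_writes & first_reads
    )


f1_mutates = (set(), {"V2"})
f2_mutates = (set(), {"V2"})
f1_reads = ({"V2"}, set())
f2_reads = ({"V2"}, set())

print(must_serialize(f1_mutates, f2_mutates))  # both mutate V2
print(must_serialize(f1_reads, f2_reads))      # both only read V2
print(must_serialize(f1_reads, f2_mutates))    # one reads, one mutates
```

Only the pair in which both functions merely read V2 is free to run in any order or in parallel.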

Push and Wait

Push and Wait are two more useful APIs of the execution engine.

Following are two important features of the Push API:

All the Push APIs are asynchronous, which means that the API call returns immediately regardless of whether the pushed function has finished or not.

The Push API is not thread-safe, which means that only one thread should make engine API calls at a time.

The Wait API can be summarized by the following points −

If a user wants to wait for a specific function to finish, he/she should include a callback function in the closure and call it at the end of the function.

On the other hand, if a user wants to wait for all functions that involve a certain variable to finish, he/she should use the WaitForVar(var) API.

If someone wants to wait for all the pushed functions to finish, he/she should use the WaitForAll() API.
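The asynchronous push and the blocking wait can be mimicked with Python's standard thread pool. This is only a toy sketch under stated assumptions: TinyEngine and its method names are hypothetical, and it ignores variable dependencies entirely.

```python
import time
from concurrent.futures import ThreadPoolExecutor, wait


class TinyEngine:
    """Toy engine: push() returns at once, wait_for_all() blocks until done."""

    def __init__(self, workers=2):
        self._pool = ThreadPoolExecutor(max_workers=workers)
        self._pending = []

    def push(self, fn):
        # submit() schedules fn on a worker thread and returns immediately.
        self._pending.append(self._pool.submit(fn))

    def wait_for_all(self):
        wait(self._pending)
        self._pending.clear()


results = []
engine = TinyEngine()
engine.push(lambda: (time.sleep(0.05), results.append("a")))
engine.push(lambda: (time.sleep(0.05), results.append("b")))
engine.wait_for_all()
print(sorted(results))
```

As with the real Push API, the sketch assumes that a single thread calls push(); the list of pending work is not protected by a lock.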


Operators

An operator in Apache MXNet is a class that contains the actual computation logic as well as auxiliary information that helps the system perform optimization.

Operator Interface

Forward is the core operator interface whose syntax is as follows:

virtual void Forward(const OpContext &ctx, const std::vector<TBlob> &in_data, const std::vector<OpReqType> &req, const std::vector<TBlob> &out_data, const std::vector<TBlob> &aux_states) = 0;

The structure of OpContext, used by Forward(), is as follows:

struct OpContext {

int is_train;

RunContext run_ctx;

std::vector<Resource> requested;

};

The OpContext describes the state of the operator (whether it is in the train or test phase), which device the operator should run on, and the requested resources.

From the above Forward core interface, we can understand the remaining parameters as follows −

in_data and out_data represent the input and output tensors.

req denotes how the results of the computation are written into out_data.

The OpReqType can be defined as −

enum OpReqType {

kNullOp,

kWriteTo,

kWriteInplace,

kAddTo

};
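The effect of each request type can be sketched in Python with plain lists standing in for tensors. The helper apply_req is hypothetical; the enum mirrors the C++ definition above, and the comments give the usual reading of each value.

```python
from enum import Enum


class OpReqType(Enum):
    kNullOp = 0        # do nothing
    kWriteTo = 1       # overwrite out_data with the result
    kWriteInplace = 2  # overwrite; out_data shares memory with an input
    kAddTo = 3         # accumulate the result into out_data


def apply_req(req, out, result):
    """Store `result` into the list `out` according to the request type."""
    if req is OpReqType.kNullOp:
        return
    if req is OpReqType.kAddTo:
        for i, value in enumerate(result):
            out[i] += value
    else:  # kWriteTo and kWriteInplace both overwrite the destination
        out[:] = result


written = [1.0, 1.0]
apply_req(OpReqType.kWriteTo, written, [2.0, 3.0])

accumulated = [1.0, 1.0]
apply_req(OpReqType.kAddTo, accumulated, [2.0, 3.0])

untouched = [1.0, 1.0]
apply_req(OpReqType.kNullOp, untouched, [2.0, 3.0])
```

The accumulate case is the one that matters for gradients: when several paths contribute to the same gradient tensor, the contributions are added rather than overwritten.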

As with the Forward operator, we can optionally implement the Backward interface as follows −

virtual void Backward(const OpContext &ctx, const std::vector<TBlob> &out_grad, const std::vector<TBlob> &in_data, const std::vector<TBlob> &out_data, const std::vector<OpReqType> &req, const std::vector<TBlob> &in_grad, const std::vector<TBlob> &aux_states);

Various tasks

Operator interface allows the users to do the following tasks −

The user can specify in-place updates and thereby reduce the memory allocation cost.

In order to make it cleaner, the user can hide some internal arguments from Python.

The user can define the relationship among the input tensors and output tensors.

To perform computation, the user can acquire additional temporary space from the system.

Operator Property

As we know, in a Convolutional Neural Network (CNN), a convolution can have several implementations. To achieve the best performance, we might want to switch among those implementations.

That is the reason Apache MXNet separates the operator semantic interface from the implementation interface. This separation is done in the form of the OperatorProperty class, which consists of the following −

InferShape − The InferShape interface has two purposes as given below:

First purpose is to tell the system the size of each input and output tensor so that the space can be allocated before Forward and Backward call.

Second purpose is to perform a size check to make sure that there is no error before running.

The syntax is given below −

virtual bool InferShape(mxnet::ShapeVector *in_shape, mxnet::ShapeVector *out_shape, mxnet::ShapeVector *aux_shape) const = 0;
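Both purposes of shape inference, size propagation and size checking, can be seen in a small Python sketch for a fully connected layer. The function name and the tuple-based shapes are assumptions made for illustration and do not reproduce MXNet's C++ interface.

```python
def infer_shape_fully_connected(data_shape, num_hidden):
    """Return (in_shapes, out_shapes) for a dense layer, checking sizes first."""
    if len(data_shape) != 2:
        raise ValueError("expected data of shape (batch, features)")
    batch, features = data_shape
    # Inputs in order: data, weight, bias.
    in_shapes = [data_shape, (num_hidden, features), (num_hidden,)]
    out_shapes = [(batch, num_hidden)]
    return in_shapes, out_shapes


in_shapes, out_shapes = infer_shape_fully_connected((32, 100), num_hidden=10)
print(in_shapes, out_shapes)
```

Because all shapes are known before any computation runs, memory for the weight, bias, and output can be allocated ahead of the Forward and Backward calls, and a malformed input is rejected early.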

Request Resource − What if the system can manage the computation workspace for operations like cudnnConvolutionForward? The system can then perform optimizations such as reusing the space, and more. MXNet achieves this with the help of the following two interfaces −

virtual std::vector<ResourceRequest> ForwardResource(const mxnet::ShapeVector &in_shape) const;

virtual std::vector<ResourceRequest> BackwardResource(const mxnet::ShapeVector &in_shape) const;

But what if ForwardResource and BackwardResource return non-empty arrays? In that case, the system offers the corresponding resources through the ctx parameter in the Forward and Backward interfaces of Operator.

Backward dependency − Consider the following two pairs of operator signatures, which have different backward dependencies −

void FullyConnectedForward(TBlob weight, TBlob in_data, TBlob out_data);

void FullyConnectedBackward(TBlob weight, TBlob in_data, TBlob out_grad, TBlob in_grad);

void PoolingForward(TBlob in_data, TBlob out_data);

void PoolingBackward(TBlob in_data, TBlob out_data, TBlob out_grad, TBlob in_grad);

Here, there are two important points to note −

The out_data in FullyConnectedForward is not used by FullyConnectedBackward, and

PoolingBackward requires all the arguments of PoolingForward.

That is why, for FullyConnectedForward, the out_data tensor can be safely freed once it has been consumed, because the backward function will not need it. With the help of this information, the system can garbage-collect some tensors as early as possible.
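The reasoning above amounts to a set difference between the tensors the forward pass touches and the tensors the backward pass reads. The following Python sketch uses hypothetical dictionaries built from the four signatures shown earlier.

```python
FORWARD_TENSORS = {
    "FullyConnected": {"weight", "in_data", "out_data"},
    "Pooling": {"in_data", "out_data"},
}
BACKWARD_INPUTS = {
    "FullyConnected": {"weight", "in_data", "out_grad"},
    "Pooling": {"in_data", "out_data", "out_grad"},
}


def not_needed_by_backward(op_name):
    """Forward tensors that the backward pass never reads."""
    return sorted(FORWARD_TENSORS[op_name] - BACKWARD_INPUTS[op_name])


print(not_needed_by_backward("FullyConnected"))
print(not_needed_by_backward("Pooling"))
```

For the fully connected layer the result contains out_data, which is why it can be released early; for pooling the result is empty, so every forward tensor must be kept until the backward pass has run.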

In place Option − Apache MXNet provides another interface to the users to save the cost of memory allocation. The interface is appropriate for element-wise operations in which both input and output tensors have the same shape.

Following is the syntax for specifying the in-place update −

virtual std::vector<std::pair<int, void*>> ElewiseOpProperty::ForwardInplaceOption(

const std::vector<int> &in_data,

const std::vector<void*> &out_data)

const {

return { {in_data[0], out_data[0]} };

}

virtual std::vector<std::pair<int, void*>> ElewiseOpProperty::BackwardInplaceOption(

const std::vector<int> &out_grad,

const std::vector<int> &in_data,

const std::vector<int> &out_data,

const std::vector<void*> &in_grad)

const {

return { {out_grad[0], in_grad[0]} };

}

Example for Creating an Operator

With the help of OperatorProperty we can create an operator. To do so, follow the steps given below −

Step 1

Create Operator

First implement the following interface in OperatorProperty:

virtual Operator* CreateOperator(Context ctx) const = 0;

The example is given below −

class ConvolutionOp : public Operator {

public:

void Forward( ... ) { ... }

void Backward( ... ) { ... }

};

class ConvolutionOpProperty : public OperatorProperty {

public:

Operator* CreateOperator(Context ctx) const {

return new ConvolutionOp;

}

};

Step 2

Parameterize Operator

If you are going to implement a convolution operator, it is mandatory to know the kernel size, the stride size, the padding size, and so on, because these parameters should be passed to the operator before calling any Forward or Backward interface.

For this, we need to define a ConvolutionParam structure as below −

#include <dmlc/parameter.h>

struct ConvolutionParam : public dmlc::Parameter<ConvolutionParam> {

mxnet::TShape kernel, stride, pad;

uint32_t num_filter, num_group, workspace;

bool no_bias;

};

Now, we need to put this in ConvolutionOpProperty and pass it to the operator as follows −

class ConvolutionOp : public Operator {

public:

ConvolutionOp(ConvolutionParam p): param_(p) {}

void Forward( ... ) { ... }

void Backward( ... ) { ... }

private:

ConvolutionParam param_;

};

class ConvolutionOpProperty : public OperatorProperty {

public:

void Init(const std::vector<std::pair<std::string, std::string>>& kwargs) {

// initialize param_ using kwargs

}

Operator* CreateOperator(Context ctx) const {

return new ConvolutionOp(param_);

}

private:

ConvolutionParam param_;

};
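What "initialize param_ using kwargs" might involve can be sketched without the dmlc parameter machinery. SimpleConvParam and ParseConvParam are hypothetical names, the fields are reduced to plain scalars, and the accepted keys are an assumption made for this example.

```cpp
#include <stdexcept>
#include <string>
#include <utility>
#include <vector>

// Simplified, hypothetical parameter struct with scalar fields only.
struct SimpleConvParam {
  int kernel = 1;
  int stride = 1;
  int pad = 0;
  bool no_bias = false;
};

// Fills a parameter struct from string key/value pairs, the way an
// Init(kwargs) method might. Unknown keys are rejected.
SimpleConvParam ParseConvParam(
    const std::vector<std::pair<std::string, std::string>>& kwargs) {
  SimpleConvParam p;
  for (const auto& kv : kwargs) {
    if (kv.first == "kernel") {
      p.kernel = std::stoi(kv.second);
    } else if (kv.first == "stride") {
      p.stride = std::stoi(kv.second);
    } else if (kv.first == "pad") {
      p.pad = std::stoi(kv.second);
    } else if (kv.first == "no_bias") {
      p.no_bias = (kv.second == "true" || kv.second == "1");
    } else {
      throw std::invalid_argument("unknown parameter: " + kv.first);
    }
  }
  return p;
}
```

Keys that are absent keep their default values, so a caller only has to pass the settings that differ from the defaults.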

Step 3

Register the Operator Property Class and the Parameter Class to Apache MXNet

Finally, we need to register the operator property class and the parameter class with MXNet. This can be done with the help of the following macros −

DMLC_REGISTER_PARAMETER(ConvolutionParam);

MXNET_REGISTER_OP_PROPERTY(Convolution, ConvolutionOpProperty);

In the MXNET_REGISTER_OP_PROPERTY macro, the first argument is the name string and the second is the property class name.
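How a registration macro can map a name string to a property class is sketched below. This is a generic illustration of the pattern, not the MXNet implementation: PropertyBase, Registry, RegisterProperty, and SKETCH_REGISTER_PROPERTY are all hypothetical names.

```cpp
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Hypothetical stand-in for an operator property base class.
struct PropertyBase {
  virtual ~PropertyBase() = default;
  virtual std::string TypeName() const = 0;
};

using PropertyFactory = std::function<std::unique_ptr<PropertyBase>()>;

// Global name -> factory table, created on first use.
inline std::map<std::string, PropertyFactory>& Registry() {
  static std::map<std::string, PropertyFactory> table;
  return table;
}

inline bool RegisterProperty(const std::string& name, PropertyFactory factory) {
  Registry()[name] = std::move(factory);
  return true;
}

// Illustrative macro: stores a factory under the stringified first argument
// during static initialization.
#define SKETCH_REGISTER_PROPERTY(Name, PropertyType) \
  static const bool registered_##Name = RegisterProperty( \
      #Name, [] { return std::unique_ptr<PropertyBase>(new PropertyType()); })

struct ConvolutionProperty : PropertyBase {
  std::string TypeName() const override { return "ConvolutionProperty"; }
};

SKETCH_REGISTER_PROPERTY(Convolution, ConvolutionProperty);
```

After registration, a lookup by the string "Convolution" yields a factory that creates the property object, so callers never need to name the concrete class.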

Apache MXNet - Unified Operator API

This chapter provides information about the unified operator application programming interface (API) in Apache MXNet.

SimpleOp

SimpleOp is a unified operator API that unifies the different invoking processes and returns to the fundamental elements of operators. The unified operator is specially designed for unary and binary operations, because most mathematical operators take one or two operands, whereas with more operands dependency-related optimization becomes useful.

We will understand how the SimpleOp unified operator works with the help of an example. In this example, we will create an operator that functions as a smooth l1 loss, which is a mixture of l1 and l2 loss. We can define and write the loss as given below −

loss = outside_weight .* f(inside_weight .* (data - label))

grad = outside_weight .* inside_weight .* f'(inside_weight .* (data - label))

Here, in the above example,

.* stands for element-wise multiplication

f and f' are the smooth l1 loss function and its derivative, which we assume are available in mshadow.

It looks impossible to implement this particular loss as a unary or binary operator, but MXNet provides its users with automatic differentiation in symbolic execution, which simplifies the loss to f and f' directly. That's why we can certainly implement this particular loss as a unary operator.
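The two formulas can be written out as a standalone sketch to show that only f and f' are specific to the loss, while the weights and the subtraction are ordinary element-wise arithmetic. WeightedLoss and WeightedGrad are hypothetical helpers, and the weights are taken as scalars here for brevity, which is an assumption of this example.

```cpp
#include <cstddef>
#include <vector>

// loss = outside_weight * f(inside_weight * (data - label)), element by element.
// F is any callable float -> float; only f is specific to the loss.
template <typename F>
std::vector<float> WeightedLoss(const std::vector<float>& data,
                                const std::vector<float>& label,
                                float inside_weight, float outside_weight,
                                F f) {
  std::vector<float> loss(data.size());
  for (std::size_t i = 0; i < data.size(); ++i) {
    loss[i] = outside_weight * f(inside_weight * (data[i] - label[i]));
  }
  return loss;
}

// grad = outside_weight * inside_weight * f'(inside_weight * (data - label)).
// DF is the derivative of the function passed to WeightedLoss.
template <typename DF>
std::vector<float> WeightedGrad(const std::vector<float>& data,
                                const std::vector<float>& label,
                                float inside_weight, float outside_weight,
                                DF df) {
  std::vector<float> grad(data.size());
  for (std::size_t i = 0; i < data.size(); ++i) {
    grad[i] = outside_weight * inside_weight *
              df(inside_weight * (data[i] - label[i]));
  }
  return grad;
}
```

Any pair of a function and its derivative can be plugged in, which is the sense in which the operator itself only has to supply f and f'.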

Defining Shapes

MXNet's mshadow library requires explicit memory allocation, hence we need to provide all data shapes before any calculation occurs. Before defining functions and gradients, we need to check input shape consistency and provide the output shape as follows:

typedef mxnet::TShape (*UnaryShapeFunction)(const mxnet::TShape& src, const EnvArguments& env);

typedef mxnet::TShape (*BinaryShapeFunction)(const mxnet::TShape& lhs, const mxnet::TShape& rhs, const EnvArguments& env);

The shape function uses mxnet::TShape to check the input data shape and to designate the output data shape. If you do not define this function, the default output shape is the same as the input shape. For example, for a binary operator, the shapes of lhs and rhs are checked to be the same by default.

Now let's move on to our smooth l1 loss example. For this, we need to define XPU as cpu or gpu in the header implementation smooth_l1_unary-inl.h. The reason is to reuse the same code in smooth_l1_unary.cc and smooth_l1_unary.cu.

#include <mxnet/operator_util.h>

#if defined(__CUDACC__)

#define XPU gpu

#else

#define XPU cpu

#endif

Since the output in our smooth l1 loss example has the same shape as the source, we can use the default behavior. It can be written as follows −

inline mxnet::TShape SmoothL1Shape_(const mxnet::TShape& src,const EnvArguments& env) {

return mxnet::TShape(src);

}
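For comparison, the default check described for binary operators can be sketched in standalone form. Shape is a hypothetical stand-in for mxnet::TShape, and SameShapeBinary is an illustrative name, not an MXNet function.

```cpp
#include <stdexcept>
#include <vector>

// Hypothetical stand-in for mxnet::TShape: one extent per dimension.
using Shape = std::vector<int>;

// Default-style binary shape check: both operands must have the same shape,
// and the output takes that shape.
Shape SameShapeBinary(const Shape& lhs, const Shape& rhs) {
  if (lhs != rhs) {
    throw std::invalid_argument("lhs and rhs shapes differ");
  }
  return lhs;
}
```

A custom shape function becomes necessary only when the output shape differs from the inputs, for example in a reduction.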

Defining Functions

We can create a unary or binary function with one output (a TBlob) as follows −

typedef void (*UnaryFunction)(const TBlob& src, const EnvArguments& env, TBlob* ret, OpReqType req, RunContext ctx);

typedef void (*BinaryFunction)(const TBlob& lhs, const TBlob& rhs, const EnvArguments& env, TBlob* ret, OpReqType req, RunContext ctx);

Following is the RunContext ctx struct, which contains the information needed during runtime for execution −

struct RunContext {

void *stream; // the stream of the device, can be NULL or Stream<gpu>* in GPU mode

template<typename xpu> inline mshadow::Stream<xpu>* get_stream(); // get mshadow stream from Context

};

The OpReqType req argument denotes how the computation results are written into ret −

enum OpReqType {

kNullOp, // no operation, do not write anything

kWriteTo, // write gradient to provided space

kWriteInplace, // perform an in-place write

kAddTo // add to the provided space

};
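The effect of each request type can be sketched with plain vectors. This is an approximation of the dispatch that the ASSIGN_DISPATCH macro in the forward function is understood to perform. SketchReq and AssignByReq are hypothetical names, and the sketch assumes that the destination and the value have the same length.

```cpp
#include <cstddef>
#include <vector>

// Mirrors the request kinds listed above; the Sketch prefix keeps these
// apart from the MXNet definitions.
enum class SketchReq { kNullOp, kWriteTo, kWriteInplace, kAddTo };

// Stores `value` into `out` according to `req`.
// Assumes out->size() == value.size().
void AssignByReq(std::vector<float>* out, SketchReq req,
                 const std::vector<float>& value) {
  switch (req) {
    case SketchReq::kNullOp:
      break;  // leave the destination untouched
    case SketchReq::kWriteTo:
    case SketchReq::kWriteInplace:
      for (std::size_t i = 0; i < value.size(); ++i) (*out)[i] = value[i];
      break;
    case SketchReq::kAddTo:
      for (std::size_t i = 0; i < value.size(); ++i) (*out)[i] += value[i];
      break;
  }
}
```

The add-to case matters for gradients, where several consumers of the same tensor each contribute a term that has to be accumulated rather than overwritten.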

Now, let's move on to our smooth l1 loss example. For this, we will use UnaryFunction to define the function of this operator as follows:

template<typename xpu>

void SmoothL1Forward_(const TBlob& src,

const EnvArguments& env,

TBlob *ret,

OpReqType req,

RunContext ctx) {

using namespace mshadow;

using namespace mshadow::expr;

mshadow::Stream<xpu> *s = ctx.get_stream<xpu>();

real_t sigma2 = env.scalar * env.scalar;

MSHADOW_TYPE_SWITCH(ret->type_flag_, DType, {

mshadow::Tensor<xpu, 2, DType> out = ret->get<xpu, 2, DType>(s);

mshadow::Tensor<xpu, 2, DType> in = src.get<xpu, 2, DType>(s);

ASSIGN_DISPATCH(out, req,

F<mshadow_op::smooth_l1_loss>(in, ScalarExp<DType>(sigma2)));

});

}

Defining Gradients

Gradient functions of binary operators have a similar structure, except that Input, TBlob, and OpReqType are doubled. Let's check out the typedefs below, which create gradient functions with various types of input:

// depending only on out_grad

typedef void (*UnaryGradFunctionT0)(const OutputGrad& out_grad, const EnvArguments& env, TBlob* in_grad, OpReqType req, RunContext ctx);

// depending only on out_value

typedef void (*UnaryGradFunctionT1)(const OutputGrad& out_grad, const OutputValue& out_value, const EnvArguments& env, TBlob* in_grad, OpReqType req, RunContext ctx);

// depending only on in_data

typedef void (*UnaryGradFunctionT2)(const OutputGrad& out_grad, const Input0& in_data0, const EnvArguments& env, TBlob* in_grad, OpReqType req, RunContext ctx);

As defined above, Input0, Input, OutputValue, and OutputGrad all share the structure of GradFunctionArgument. It is defined as follows −

struct GradFunctionArgument {

TBlob data;

};

Now let's move on to our smooth l1 loss example. To enable the chain rule of the gradient, we need to multiply out_grad from the top by the result of in_grad.

template<typename xpu>

void SmoothL1BackwardUseIn_(const OutputGrad& out_grad, const Input0& in_data0,

const EnvArguments& env,

TBlob *in_grad,

OpReqType req,

RunContext ctx) {

using namespace mshadow;

using namespace mshadow::expr;

mshadow::Stream<xpu> *s = ctx.get_stream<xpu>();

real_t sigma2 = env.scalar * env.scalar;

MSHADOW_TYPE_SWITCH(in_grad->type_flag_, DType, {

mshadow::Tensor<xpu, 2, DType> src = in_data0.data.get<xpu, 2, DType>(s);

mshadow::Tensor<xpu, 2, DType> ograd = out_grad.data.get<xpu, 2, DType>(s);

mshadow::Tensor<xpu, 2, DType> igrad = in_grad->get<xpu, 2, DType>(s);

ASSIGN_DISPATCH(igrad, req,

ograd * F<mshadow_op::smooth_l1_gradient>(src, ScalarExp<DType>(sigma2)));

});

}

Register SimpleOp to MXNet

Once we have created the shape, function, and gradient, we need to register them as both an NDArray operator and a symbolic operator. For this, we can use the registration macro as follows −

MXNET_REGISTER_SIMPLE_OP(Name, DEV)

.set_shape_function(Shape)

.set_function(DEV::kDevMask, Function<XPU>, SimpleOpInplaceOption)

.set_gradient(DEV::kDevMask, Gradient<XPU>, SimpleOpInplaceOption)

.describe("description");

The SimpleOpInplaceOption can be defined as follows −

enum SimpleOpInplaceOption {

kNoInplace, // do not allow inplace in arguments

kInplaceInOut, // allow inplace in with out (unary)

kInplaceOutIn, // allow inplace out_grad with in_grad (unary)

kInplaceLhsOut, // allow inplace left operand with out (binary)

kInplaceOutLhs // allow inplace out_grad with lhs_grad (binary)

};

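Now let's move on to our smooth l1 loss example. Here, the gradient function relies on the input data, so the forward function cannot be written in place. The output gradient, however, is no longer needed once the gradient has been computed, so the gradient can be written in place.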

MXNET_REGISTER_SIMPLE_OP(smooth_l1, XPU)

.set_function(XPU::kDevMask, SmoothL1Forward_<XPU>, kNoInplace)

.set_gradient(XPU::kDevMask, SmoothL1BackwardUseIn_<XPU>, kInplaceOutIn)

.set_enable_scalar(true)

.describe("Calculate Smooth L1 Loss(lhs, scalar)");

SimpleOp on EnvArguments

Some operations might need the following −

A scalar as input such as a gradient scale

A set of keyword arguments controlling behavior

A temporary space to speed up calculations.

The benefit of using EnvArguments is that it provides additional arguments and resources to make calculations more scalable and efficient.

Example

First, let's define the struct as below −

struct EnvArguments {

real_t scalar; // scalar argument, if enabled

std::vector<std::pair<std::string, std::string> > kwargs; // keyword arguments

std::vector<Resource> resource; // pointer to the resources requested

};

Next, we need to request additional resources, such as mshadow::Random<xpu> and temporary memory space, from EnvArguments.resource. The resource request is described as follows −

struct ResourceRequest {

enum Type { // Resource type, indicating what the pointer type is

kRandom, // mshadow::Random<xpu> object

kTempSpace // A dynamic temp space that can be arbitrary size

};

Type type; // type of resources

};

Now, the registration will request the declared resource request from mxnet::ResourceManager. After that, it will place the resources in std::vector<Resource> resource in EnvArguments.

We can access the resources with the help of the following code −

auto tmp_space_res = env.resource[0].get_space(some_shape, some_stream);

auto rand_res = env.resource[0].get_random(some_stream);

In our smooth l1 loss example, a scalar input is needed to mark the turning point of the loss function. That's why, in the registration process, we use set_enable_scalar(true), and env.scalar in the function and gradient declarations.

Building Tensor Operation

Why do we need to craft tensor operations? The reasons are as follows −

Computation utilizes the mshadow library, and the functions we need are sometimes not readily available.

Some operations, such as softmax loss and gradient, are not done in an element-wise way.

Example

Here, we are using the above smooth l1 loss example. We will create two mappers, namely the scalar cases of the smooth l1 loss and its gradient. The loss mapper is given below −

namespace mshadow_op {

struct smooth_l1_loss {

// a is x, b is sigma2

MSHADOW_XINLINE static real_t Map(real_t a, real_t b) {

if (a > 1.0f / b) {

return a - 0.5f / b;

} else if (a < -1.0f / b) {

return -a - 0.5f / b;

} else {

return 0.5f * a * a * b;

}

}

};

}
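The gradient mapper is not listed above. A minimal sketch of it, obtained by differentiating each branch of the loss mapper with respect to a, is given below as plain C++ functions so that it can be compiled without mshadow. SmoothL1Loss and SmoothL1Gradient are names chosen for this example. In mshadow, each would presumably be a struct with a static Map method like smooth_l1_loss.

```cpp
// Plain-C++ copy of the loss mapper above: a is x, b is sigma2.
inline float SmoothL1Loss(float a, float b) {
  if (a > 1.0f / b) {
    return a - 0.5f / b;
  } else if (a < -1.0f / b) {
    return -a - 0.5f / b;
  } else {
    return 0.5f * a * a * b;
  }
}

// Derivative of SmoothL1Loss with respect to a, branch by branch:
// +1 and -1 on the two linear pieces, a * b on the quadratic piece.
inline float SmoothL1Gradient(float a, float b) {
  if (a > 1.0f / b) {
    return 1.0f;
  } else if (a < -1.0f / b) {
    return -1.0f;
  } else {
    return a * b;
  }
}
```

The turning points sit at a = 1/b and a = -1/b, where the quadratic piece and the linear pieces meet with the same value and the same slope.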

Apache MXNet - Distributed Training