
Software Quality Management - Quick Guide

Software Quality Management - Introduction

Quality software is software that is reasonably free of bugs or defects, is delivered on time and within the specified budget, meets its requirements and/or expectations, and is maintainable. In the software engineering context, software quality covers both functional quality and structural quality.

Software Functional Quality − It reflects how well the software satisfies a given design, based on the functional requirements or specifications.

Software Structural Quality − It deals with the handling of non-functional requirements that support the delivery of the functional requirements, such as robustness or maintainability, and the degree to which the software was produced correctly.

Software Quality Assurance − Software Quality Assurance (SQA) is a set of activities to ensure the quality in software engineering processes that ultimately result in quality software products. The activities establish and evaluate the processes that produce products. It involves process-focused action.

Software Quality Control − Software Quality Control (SQC) is a set of activities to ensure the quality in software products. These activities focus on determining the defects in the actual products produced. It involves product-focused action.

The Software Quality Challenge

In the software industry, developers will never declare that their software is free of defects, as manufacturers of other industrial products usually do. This difference is due to the following reasons.

Product Complexity

Product complexity is the number of operational modes the product permits. Normally, an industrial product allows no more than a few thousand modes of operation, given the different combinations of its machine settings. Software packages, however, allow millions of operational possibilities. Hence, assuring that all these possibilities work correctly is a major challenge for the software industry.

Product Visibility

Since industrial products are visible, most of their defects can be detected during the manufacturing process. The absence of a part in an industrial product can also be easily detected. However, defects in software products, which are stored on diskettes or CDs, are invisible.

Product Development and Production Process

In an industrial product, defects can be detected during the following phases −

Product development − In this phase, the designers and Quality Assurance (QA) staff check and test the product prototype to detect its defects.

Product production planning − During this phase, the production process and tools are designed and prepared. This phase also provides opportunities to inspect the product to detect the defects that went unnoticed during the development phase.

Manufacturing − In this phase, QA procedures are applied to detect failures of the products themselves. Defects detected in the first period of manufacturing can usually be corrected by a change in the product's design or materials, or in the production tools, in a way that eliminates such defects in products manufactured in the future.

However, in the case of software, the only phase where defects can be detected is the development phase. Product production planning and manufacturing phases are not required, as the manufacturing of software copies and the printing of software manuals are conducted automatically.

The factors affecting the detection of defects in software products versus other industrial products are shown in the following table.

| Characteristic | Software Products | Other Industrial Products |
|---|---|---|
| Complexity | Millions of operational options | Thousands of operational options |
| Visibility of product | Invisible product; difficult to detect defects by sight | Visible product; effective detection of defects by sight |
| Nature of development and production process | Can detect defects in only one phase | Can detect defects in all of the following phases: product development, product production planning, manufacturing |

These characteristics of software such as complexity and invisibility make the development of software quality assurance methodology and its successful implementation a highly professional challenge.

Software Quality Factors

The various factors that influence the software are termed software factors. They can be broadly divided into two categories. The first category consists of factors that can be measured directly, such as the number of logical errors. The second category consists of factors that can be measured only indirectly, such as maintainability. In both cases, the factors have to be measured in order to check the content and support quality control.

Several models of software quality factors and their categorization have been suggested over the years. The classic model of software quality factors, suggested by McCall, consists of 11 factors (McCall et al., 1977). Similarly, models consisting of 12 to 15 factors were suggested by Deutsch and Willis (1988) and by Evans and Marciniak (1987).

None of these models differs substantially from McCall's model. The McCall factor model provides a practical, up-to-date method for classifying software requirements (Pressman, 2000).

McCall's Factor Model

This model classifies all software requirements into 11 software quality factors. The 11 factors are grouped into three categories − product operation, product revision, and product transition factors.

Product operation factors − Correctness, Reliability, Efficiency, Integrity, Usability.

Product revision factors − Maintainability, Flexibility, Testability.

Product transition factors − Portability, Reusability, Interoperability.
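The grouping above can be written down as a small lookup structure. The following is only an illustrative sketch: the names `MCCALL_FACTORS` and `category_of` are hypothetical and not part of McCall's model.

```python
# McCall's 11 quality factors grouped by category, as listed above.
# The dictionary and function names are illustrative assumptions.
MCCALL_FACTORS = {
    "product operation": [
        "Correctness", "Reliability", "Efficiency", "Integrity", "Usability",
    ],
    "product revision": ["Maintainability", "Flexibility", "Testability"],
    "product transition": ["Portability", "Reusability", "Interoperability"],
}


def category_of(factor: str) -> str:
    """Return the McCall category that a quality factor belongs to."""
    for category, factors in MCCALL_FACTORS.items():
        if factor in factors:
            return category
    raise KeyError(f"{factor!r} is not one of McCall's 11 factors")


if __name__ == "__main__":
    print(sum(len(f) for f in MCCALL_FACTORS.values()))  # 11
    print(category_of("Testability"))                    # product revision
```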

Product Operation Software Quality Factors

According to McCall's model, the product operation category includes five software quality factors, which deal with the requirements that directly affect the daily operation of the software. They are as follows −

Correctness

These requirements deal with the correctness of the output of the software system. They include −

- Output mission

- The required accuracy of output, which can be negatively affected by inaccurate data or inaccurate calculations

- The completeness of the output information, which can be affected by incomplete data

- The up-to-dateness of the information, defined as the time between the event and the response by the software system

- The availability of the information

- The standards for coding and documenting the software system

Reliability

Reliability requirements deal with service failure. They determine the maximum allowed failure rate of the software system, and can refer to the entire system or to one or more of its separate functions.

Efficiency

Efficiency requirements deal with the hardware resources needed to perform the different functions of the software system. These include processing capability (given in MHz), storage capacity (given in MB or GB), and data communication capability (given in Mbps or Gbps).

They also deal with the time between recharging of the system's portable units, such as information system units located in portable computers or meteorological units placed outdoors.

Integrity

This factor deals with software system security, that is, preventing access by unauthorized persons and distinguishing between the people to be given read permission and those to be given write permission.

Usability

Usability requirements deal with the staff resources needed to train a new employee and to operate the software system.

Product Revision Quality Factors

According to McCall's model, three software quality factors are included in the product revision category. These factors are as follows −

Maintainability

This factor considers the efforts that will be needed by users and maintenance personnel to identify the reasons for software failures, to correct the failures, and to verify the success of the corrections.

Flexibility

This factor deals with the capabilities and efforts required to support adaptive maintenance activities of the software. These include adapting the current software to additional circumstances and customers without changing the software. This factor's requirements also support perfective maintenance activities, such as changes and additions to the software in order to improve its service and to adapt it to changes in the firm's technical or commercial environment.

Testability

Testability requirements deal with the testing of the software system as well as with its operation. They include predefined intermediate results, log files, and the automatic diagnostics performed by the software system prior to starting the system, to find out whether all components of the system are in working order and to obtain a report about the detected faults. Another type of these requirements deals with automatic diagnostic checks applied by the maintenance technicians to detect the causes of software failures.

Product Transition Software Quality Factors

According to McCall's model, three software quality factors are included in the product transition category, which deals with the adaptation of software to other environments and its interaction with other software systems. These factors are as follows −

Portability

Portability requirements deal with the adaptation of a software system to other environments consisting of different hardware, different operating systems, and so forth. It should be possible to continue using the same basic software in diverse situations.

Reusability

This factor deals with the use of software modules originally designed for one project in a new software project currently being developed. Reusability requirements may also enable future projects to make use of a given module or a group of modules of the currently developed software. The reuse of software is expected to save development resources, shorten the development period, and provide higher quality modules.

Interoperability

Interoperability requirements focus on creating interfaces with other software systems or with other equipment firmware. For example, the firmware of the production machinery and testing equipment interfaces with the production control software.

SQA Components

Software Quality Assurance (SQA) is a set of activities for ensuring quality in software engineering processes. It ensures that developed software meets and complies with the defined or standardized quality specifications. SQA is an ongoing process within the Software Development Life Cycle (SDLC) that routinely checks the developed software to ensure it meets the desired quality measures.

SQA practices are implemented in most types of software development, regardless of the underlying software development model being used. SQA incorporates and implements software testing methodologies to test the software. Rather than checking for quality after completion, SQA processes test for quality in each phase of development, until the software is complete. With SQA, the software development process moves into the next phase only once the current/previous phase complies with the required quality standards. SQA generally works on one or more industry standards that help in building software quality guidelines and implementation strategies.

It includes the following activities −

- Process definition and implementation

- Auditing

- Training

Processes could be −

- Software Development Methodology

- Project Management

- Configuration Management

- Requirements Development/Management

- Estimation

- Software Design

- Testing, etc.

Once the processes have been defined and implemented, Quality Assurance has the following responsibilities −

- Identify the weaknesses in the processes

- Correct those weaknesses to continually improve the process

Components of SQA System

An SQA system always combines a wide range of SQA components. These components can be classified into the following six classes −

Pre-project components

These components assure that the project commitments have been clearly defined, considering the resources required, the schedule, and the budget, and that the development and quality plans have been correctly determined.

Components of project life cycle activities assessment

The project life cycle is composed of two stages: the development life cycle stage and the operation-maintenance stage.

The development life cycle stage components detect design and programming errors. Its components are divided into the following sub-classes: Reviews, Expert opinions, and Software testing.

The SQA components used during the operation-maintenance phase include specialized maintenance components as well as development life cycle components, which are applied mainly to functionality-improving maintenance tasks.

Components of infrastructure error prevention and improvement

The main objective of these components, which are applied throughout the entire organization, is to eliminate or at least reduce the rate of errors, based on the organization's accumulated SQA experience.

Components of software quality management

This class of components deals with several goals, such as the control of development and maintenance activities, and the introduction of early managerial support actions that mainly prevent or minimize schedule and budget failures and their outcomes.

Components of standardization, certification, and SQA system assessment

These components implement international professional and managerial standards within the organization. The main objectives of this class are utilization of international professional knowledge, improvement of coordination of the organizational quality systems with other organizations, and assessment of the achievements of quality systems according to a common scale. The various standards may be classified into two main groups: quality management standards and project process standards.

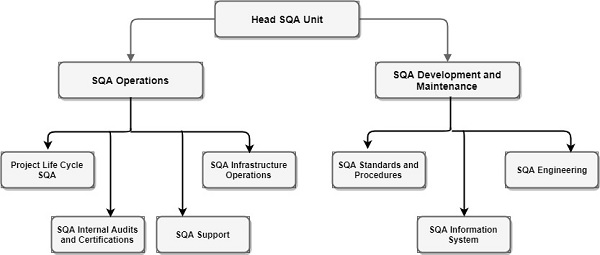

Organizing for SQA − the human components

The SQA organizational base includes managers, testing personnel, the SQA unit and the persons interested in software quality such as SQA trustees, SQA committee members, and SQA forum members. Their main objectives are to initiate and support the implementation of SQA components, detect deviations from SQA procedures and methodology, and suggest improvements.

Pre-project Software Quality Components

These components help to improve the preliminary steps taken before starting a project. They include −

- Contract Review

- Development and Quality Plans

Contract Review

Normally, software is developed under a contract negotiated with a customer or under an internal order to develop firmware to be embedded within a hardware product. In all these cases, the development unit is committed to an agreed-upon functional specification, budget, and schedule. Hence, contract review activities must include a detailed examination of the project proposal draft and the contract drafts.

Specifically, contract review activities include −

- Clarification of the customer's requirements

- Review of the project's schedule and resource requirement estimates

- Evaluation of the professional staff's capacity to carry out the proposed project

- Evaluation of the customer's capacity to fulfil his obligations

- Evaluation of development risks

Development and Quality Plans

After signing the software development contract with an organization or an internal department of the same organization, a development plan of the project and its integrated quality assurance activities are prepared. These plans include additional details and needed revisions based on prior plans that provided the basis for the current proposal and contract.

Most of the time, several months pass between the tender submission and the signing of the contract. During this period, resources such as staff availability and professional capabilities may change. The plans are then revised to reflect the changes that occurred in the interim.

The main issues treated in the project development plan are −

- Schedules

- Required manpower and hardware resources

- Risk evaluations

- Organizational issues: team members, subcontractors and partnerships

- Project methodology, development tools, etc.

- Software reuse plans

The main issues treated in the project's quality plan are −

- Quality goals, expressed in the appropriate measurable terms

- Criteria for starting and ending each project stage

- Lists of reviews, tests, and other scheduled verification and validation activities

Software Quality Metrics

Software metrics can be classified into three categories −

Product metrics − Describe the characteristics of the product such as size, complexity, design features, performance, and quality level.

Process metrics − Describe characteristics of the process that can be used to improve the development and maintenance activities of the software.

Project metrics − Describe the project characteristics and execution. Examples include the number of software developers, the staffing pattern over the life cycle of the software, cost, schedule, and productivity.

Some metrics belong to multiple categories. For example, the in-process quality metrics of a project are both process metrics and project metrics.

Software quality metrics are a subset of software metrics that focus on the quality aspects of the product, process, and project. These are more closely associated with process and product metrics than with project metrics.

Software quality metrics can be further divided into three categories −

- Product quality metrics

- In-process quality metrics

- Maintenance quality metrics

Product Quality Metrics

These metrics include the following −

- Mean Time to Failure

- Defect Density

- Customer Problems

- Customer Satisfaction

Mean Time to Failure

It is the average time between failures. This metric is mostly used with safety-critical systems such as airline traffic control systems, avionics, and weapons.

Defect Density

It measures the number of defects relative to the software size, expressed in lines of code, function points, etc. In other words, it measures code quality per unit of size. This metric is used in many commercial software systems.
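As a minimal sketch, defect density can be computed as defects per thousand lines of code (KLOC). The function name and the choice of KLOC as the size unit are assumptions made for illustration; function points could be used as the size unit in the same way.

```python
def defect_density(defects: int, size_kloc: float) -> float:
    """Return defects per thousand lines of code (KLOC)."""
    if size_kloc <= 0:
        raise ValueError("size_kloc must be positive")
    return defects / size_kloc


if __name__ == "__main__":
    # Hypothetical figures: 30 defects found in a 12 KLOC product.
    print(defect_density(30, 12))  # 2.5
```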

Customer Problems

It measures the problems that customers encounter when using the product. It reflects the customer's perspective on the problem space of the software, which includes non-defect-oriented problems together with defect problems.

The problems metric is usually expressed in terms of Problems per User-Month (PUM).

$$PUM = \frac{Total \: problems \: that \: customers \: reported \: for \: a \: time \: period}{Total \: number \: of \: license\text{-}months \: of \: the \: software \: during \: the \: period}$$

Here, the reported problems include both true defects and non-defect-oriented problems, and

$$Number \: of \: license\text{-}months = Number \: of \: installed \: licenses \: of \: the \: software \times Number \: of \: months \: in \: the \: calculation \: period$$

PUM is usually calculated for each month after the software is released to the market, and also for monthly averages by year.
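The PUM calculation can be sketched as follows, reading PUM as a ratio (problems per license-month, as the name Problems per User-Month suggests). The function names and the sample figures are hypothetical.

```python
def license_months(installed_licenses: int, months: int) -> int:
    """Installed licenses multiplied by the months in the calculation period."""
    return installed_licenses * months


def pum(problems_reported: int, installed_licenses: int, months: int) -> float:
    """Problems per User-Month: reported problems divided by license-months."""
    total = license_months(installed_licenses, months)
    if total <= 0:
        raise ValueError("license-months must be positive")
    return problems_reported / total


if __name__ == "__main__":
    # Hypothetical figures: 150 problems, 1000 installed licenses, 3 months.
    print(pum(150, 1000, 3))  # 0.05
```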

Customer Satisfaction

Customer satisfaction is often measured by customer survey data through the five-point scale −

- Very satisfied

- Satisfied

- Neutral

- Dissatisfied

- Very dissatisfied

Satisfaction with the overall quality of the product and its specific dimensions is usually obtained through various methods of customer surveys. Based on the five-point-scale data, several metrics with slight variations can be constructed and used, depending on the purpose of analysis. For example −

- Percent of completely satisfied customers

- Percent of satisfied customers

- Percent of dissatisfied customers

- Percent of non-satisfied customers

Usually, the percent of satisfied customers is used.
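The four percentages can be derived from raw survey responses as sketched below. The text does not say which scale points each metric combines, so the groupings here are an assumption: completely satisfied counts only "very satisfied", satisfied counts the top two points, dissatisfied the bottom two, and non-satisfied the bottom three.

```python
from collections import Counter

SCALE = ["very satisfied", "satisfied", "neutral",
         "dissatisfied", "very dissatisfied"]


def satisfaction_metrics(responses):
    """Return the four percentage metrics for a list of five-point responses."""
    counts = Counter(responses)
    total = sum(counts[level] for level in SCALE)
    if total == 0:
        raise ValueError("no valid responses")

    def pct(*levels):
        return 100.0 * sum(counts[level] for level in levels) / total

    # The groupings below are assumptions, not definitions from the text.
    return {
        "completely_satisfied": pct("very satisfied"),
        "satisfied": pct("very satisfied", "satisfied"),
        "dissatisfied": pct("dissatisfied", "very dissatisfied"),
        "non_satisfied": pct("neutral", "dissatisfied", "very dissatisfied"),
    }


if __name__ == "__main__":
    survey = (["very satisfied"] * 2 + ["satisfied"] * 5 + ["neutral"]
              + ["dissatisfied"] + ["very dissatisfied"])
    print(satisfaction_metrics(survey))
```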

In-process Quality Metrics

For some organizations, in-process quality metrics simply means tracking defect arrivals during formal machine testing. These metrics include −

- Defect density during machine testing

- Defect arrival pattern during machine testing

- Phase-based defect removal pattern

- Defect removal effectiveness

Defect density during machine testing

Defect rate during formal machine testing (testing after code is integrated into the system library) is correlated with the defect rate in the field. Higher defect rates found during testing are an indicator that the software has experienced higher error injection during its development process, unless the higher testing defect rate is due to an extraordinary testing effort.

This simple metric of defects per KLOC or function point is a good indicator of quality, while the software is still being tested. It is especially useful to monitor subsequent releases of a product in the same development organization.

Defect arrival pattern during machine testing

The overall defect density during testing will provide only the summary of the defects. The pattern of defect arrivals gives more information about different quality levels in the field. It includes the following −

- The defect arrivals, or defects reported during the testing phase, by time interval (e.g., week). Not all of these will be valid defects.

- The pattern of valid defect arrivals when problem determination is done on the reported problems. This is the true defect pattern.

- The pattern of defect backlog over time. This metric is needed because development organizations cannot investigate and fix all the reported problems immediately. This is a workload statement as well as a quality statement. If the defect backlog is large at the end of the development cycle and a lot of fixes have yet to be integrated into the system, the stability of the system (hence its quality) will be affected. Retesting (regression test) is needed to ensure that targeted product quality levels are reached.

Phase-based defect removal pattern

This is an extension of the defect density metric during testing. In addition to testing, it tracks the defects at all phases of the development cycle, including the design reviews, code inspections, and formal verifications before testing.

Because a large percentage of programming defects is related to design problems, conducting formal reviews or functional verifications to enhance the defect removal capability of the process at the front end reduces errors in the software. The pattern of phase-based defect removal reflects the overall defect removal ability of the development process.

With regard to the metrics for the design and coding phases, in addition to defect rates, many development organizations use metrics such as inspection coverage and inspection effort for in-process quality management.

Defect removal effectiveness

It can be defined as follows −

$$DRE = \frac{Defects \: removed \: during \: a \: development \: phase}{Defects \: latent \: in \: the \: product} \times 100\%$$

This metric can be calculated for the entire development process, for the front-end before code integration and for each phase. It is called early defect removal when used for the front-end and phase effectiveness for specific phases. The higher the value of the metric, the more effective the development process and the fewer the defects passed to the next phase or to the field. This metric is a key concept of the defect removal model for software development.
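The following sketch computes DRE for a single phase. The number of latent defects cannot be observed directly, so the sketch assumes it is estimated as the defects removed in the phase plus the defects found later; this estimate and the function name are assumptions, not part of the definition above.

```python
def defect_removal_effectiveness(removed_in_phase: int, found_later: int) -> float:
    """Return DRE as a percentage.

    Latent defects are approximated (an assumption) as the defects removed
    in the phase plus the defects discovered in later phases or in the field.
    """
    latent = removed_in_phase + found_later
    if latent == 0:
        raise ValueError("no defects recorded")
    return 100.0 * removed_in_phase / latent


if __name__ == "__main__":
    # Hypothetical figures: 80 defects removed in the phase, 20 found later.
    print(defect_removal_effectiveness(80, 20))  # 80.0
```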

Maintenance Quality Metrics

Although not much can be done to alter the quality of the product during this phase, defects can still be fixed as soon as possible and with excellent fix quality. The following metrics cover these fixes −

- Fix backlog and backlog management index

- Fix response time and fix responsiveness

- Percent delinquent fixes

- Fix quality

Fix backlog and backlog management index

Fix backlog is related to the rate of defect arrivals and the rate at which fixes for reported problems become available. It is a simple count of reported problems that remain at the end of each month or each week. Presented as a trend chart, this metric can provide meaningful information for managing the maintenance process.
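The data behind such a trend chart can be sketched as a running count: the backlog at the end of each period is the previous backlog plus the problems that arrived minus the problems that were closed. The function name, its parameters, and the sample figures are hypothetical.

```python
from itertools import accumulate


def backlog_trend(arrived, closed, opening_backlog=0):
    """Return the open-problem count at the end of each period."""
    if len(arrived) != len(closed):
        raise ValueError("arrived and closed must cover the same periods")
    net_change = [a - c for a, c in zip(arrived, closed)]
    # accumulate(..., initial=...) yields the opening value first; drop it.
    return list(accumulate(net_change, initial=opening_backlog))[1:]


if __name__ == "__main__":
    # Hypothetical monthly figures, starting with 10 open problems.
    print(backlog_trend([50, 40, 30], [45, 45, 35], opening_backlog=10))
    # [15, 10, 5]
```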

Backlog Management Index (BMI) is used to manage the backlog of open and unresolved problems.

$$BMI = \frac{Number \: of \: problems \: closed \: during \:the \:month }{Number \: of \: problems \: arrived \: during \:the \:month} \times 100\%$$

If BMI is larger than 100, the backlog was reduced. If BMI is less than 100, the backlog increased.
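A minimal sketch of the BMI calculation and its interpretation follows; the function name and the monthly figures are hypothetical.

```python
def backlog_management_index(closed: int, arrived: int) -> float:
    """Return BMI: problems closed as a percentage of problems arrived."""
    if arrived <= 0:
        raise ValueError("arrived must be positive")
    return 100.0 * closed / arrived


if __name__ == "__main__":
    print(backlog_management_index(45, 50))  # 90.0, the backlog increased
    print(backlog_management_index(60, 50))  # 120.0, the backlog was reduced
```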

Fix response time and fix responsiveness

The fix response time metric is usually calculated as the mean time of all problems from open to close. Short fix response time leads to customer satisfaction.

The important elements of fix responsiveness are customer expectations, the agreed-to fix time, and the ability to meet one's commitment to the customer.

Percent delinquent fixes

It is calculated as follows −

$$Percent \: Delinquent \: Fixes = \frac{Number \: of \: fixes \: that \: exceeded \: the \: response \: time \: criteria \: by \: severity \: level}{Number \: of \: fixes \: delivered \: in \: a \: specified \: time} \times 100\%$$
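The calculation can be sketched as below. The `Fix` record, the severity levels, and the response-time criteria in `CRITERIA_DAYS` are hypothetical values chosen for illustration; real criteria depend on the agreement with the customer.

```python
from dataclasses import dataclass


@dataclass
class Fix:
    severity: int         # hypothetical scale: 1 is the most severe
    response_days: float  # time from problem open to fix delivery


# Hypothetical response-time criteria in days, per severity level.
CRITERIA_DAYS = {1: 2, 2: 7, 3: 30}


def percent_delinquent(fixes, criteria=CRITERIA_DAYS):
    """Return the percentage of delivered fixes that exceeded their criteria."""
    if not fixes:
        raise ValueError("no fixes delivered in the period")
    late = sum(1 for f in fixes if f.response_days > criteria[f.severity])
    return 100.0 * late / len(fixes)


if __name__ == "__main__":
    delivered = [Fix(1, 1), Fix(1, 3), Fix(2, 5), Fix(3, 45)]
    print(percent_delinquent(delivered))  # 50.0
```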

Fix Quality

Fix quality, or the number of defective fixes, is another important quality metric for the maintenance phase. A fix is defective if it did not fix the reported problem, or if it fixed the original problem but injected a new defect. For mission-critical software, defective fixes are detrimental to customer satisfaction. The metric of percent defective fixes is the percentage of all fixes in a time interval that are defective.

A defective fix can be recorded in two ways: Record it in the month it was discovered or record it in the month the fix was delivered. The first is a customer measure; the second is a process measure. The difference between the two dates is the latent period of the defective fix.

Usually, the longer the latency, the more customers are affected. If the number of defects is large, then even a small value of the percentage metric can paint an optimistic picture. The quality goal for the maintenance process, of course, is zero defective fixes without delinquency.

Basics of Measurement

Measurement is the action of measuring something. It is the assignment of a number to a characteristic of an object or event, which can be compared with other objects or events.

Formally, it can be defined as the process by which numbers or symbols are assigned to attributes of entities in the real world, in such a way as to describe them according to clearly defined rules.

Measurement in Everyday Life

Measurement is used not only by professional technologists but also by all of us in everyday life. In a shop, the price acts as a measure of the value of an item. Similarly, height and size measurements help determine whether a garment will fit properly. Thus, measurement helps us compare one item with another.

Measurement captures information about the attributes of entities. An entity is an object, such as a person, or an event, such as a journey, in the real world. An attribute is a feature or property of an entity, such as the height of a person or the cost of a journey. In the real world, even though we think of measuring things, we are actually measuring the attributes of those things.

Attributes are mostly described by numbers or symbols. For example, price can be specified in rupees or dollars, and clothing size can be specified as small, medium, or large.

The accuracy of a measurement depends on the measuring instrument as well as on the definition of the measurement. After obtaining the measurements, we have to analyze them and we have to derive conclusions about the entities.

Measurement is a direct quantification whereas calculation is an indirect one where we combine different measurements using some formulae.

Measurement in Software Engineering

Software Engineering involves managing, costing, planning, modeling, analyzing, specifying, designing, implementing, testing, and maintaining software products. Hence, measurement plays a significant role in software engineering. A rigorous approach will be necessary for measuring the attributes of a software product.

For most of the development projects,

- We fail to set measurable targets for our software products

- We fail to understand and quantify the component cost of software projects

- We do not quantify or predict the quality of the products we produce

Thus, for controlling software products, measuring the attributes is necessary. Every measurement action must be motivated by a particular goal or need that is clearly defined and easily understandable. The measurement objectives must be specific, tied to what managers, developers, and users need to know. Measurement is required to assess the status of the project, product, processes, and resources.

In software engineering, measurement is essential for the following three basic activities −

- To understand what is happening during development and maintenance

- To control what is happening in the project

- To improve processes and goals

The Representational Theory of Measurement

The representational theory of measurement gives us the rules that lay the groundwork for developing and reasoning about all kinds of measurement. Measurement is a mapping from the empirical world to the formal relational world. Consequently, a measure is the number or symbol assigned to an entity by this mapping in order to characterize an attribute of that entity.

Empirical Relations

In the real world, we understand things by comparing them, not by assigning numbers to them.

For example, to compare heights, we use terms such as taller than and higher than. These are empirical relations for height.

We can define more than one empirical relation on the same set.

For example, X is taller than Y, and X and Y are much taller than Z.

Empirical relations can be unary, binary, ternary, etc.

X is tall, Y is not tall are unary relations.

X is taller than Y is a binary relation.

Empirical relations in the real world can be mapped to a formal mathematical world. Often, these relations reflect personal preferences.

Some of the mapping or rating techniques used to map these empirical relations to the mathematical world are as follows −

Likert Scale

Here, the users will be given a statement upon which they have to agree or disagree.

For example − This software performs well.

| Strongly agree | Agree | Neither agree nor disagree | Disagree | Strongly disagree |
|---|---|---|---|---|

Forced Ranking

Order the given alternatives from 1 (best) to n (worst).

For example: Rank the following 5 software modules according to their performance.

| Name of Module | Rank |
|---|---|
| Module A | |
| Module B | |
| Module C | |
| Module D | |
| Module E | |

Verbal Frequency Scale

For example − How often does this program fail?

| Always | Often | Sometimes | Seldom | Never |
|---|---|---|---|---|

Ordinal Scale

Here, the users will be given a list of alternatives and they have to select one.

For example − How often does this program fail?

- Hourly

- Daily

- Weekly

- Monthly

- Several times a year

- Once or twice a year

- Never

Comparative Scale

Here, the user has to give a number by comparing the different options.

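| Very superior | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | Very inferior |
|---|---|---|---|---|---|---|---|---|---|---|---|

The midpoint of the scale is labeled About the same.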

Numerical Scale

Here, the user has to give a number according to its importance.

| Unimportant | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | Important |
|---|---|---|---|---|---|---|---|---|---|---|---|

The Rules of Mapping

To perform the mapping, we have to specify domain, range as well as the rules to perform the mapping.

For example − Domain - Real world

Range − Mathematical world such as integers, real number, etc.

Rules − For measuring height, whether shoes are to be worn or not

Similarly, in software measurement, a checklist of the statements to be counted as lines of code must be specified.

The Representational Condition of Measurement

The representational condition asserts that a measurement mapping (M) must map entities into numbers, and empirical relations into numerical relations in such a way that the empirical relations preserve and are preserved by numerical relations.

For example: The empirical relation "taller than" is mapped to the numerical relation >, i.e., X is taller than Y if and only if M(X) > M(Y).

Since there can be many relations on a given set, the representational condition also has implications for each of these relations.

For the unary relation is tall, we might have the numerical relation

X > 50

The representational condition requires that for any measure M,

X is tall if and only if M(X) > 50
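As a minimal sketch, the representational condition for the binary relation "taller than" can be checked mechanically. The observations, the two candidate mappings, and the function name `satisfies_representation` are hypothetical and serve only as illustration.

```python
from itertools import permutations

def satisfies_representation(entities, empirical_relation, measure):
    """Return True if, for every ordered pair (x, y), the empirical relation
    holds exactly when measure(x) > measure(y)."""
    return all(
        empirical_relation(x, y) == (measure(x) > measure(y))
        for x, y in permutations(entities, 2)
    )

# Hypothetical observations: a pair (x, y) means "x is taller than y".
observed_taller = {("X", "Y"), ("X", "Z"), ("Y", "Z")}

def taller_than(x, y):
    return (x, y) in observed_taller

# Two candidate mappings M from people to numbers (illustrative values).
good_measure = {"X": 182, "Y": 175, "Z": 160}
bad_measure = {"X": 175, "Y": 182, "Z": 160}  # reverses X and Y

ok = satisfies_representation("XYZ", taller_than, good_measure.get)
broken = satisfies_representation("XYZ", taller_than, bad_measure.get)
print(ok, broken)  # True False
```

The second mapping fails because it assigns Y a larger number than X, although X was observed to be taller than Y.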

Key Stages of Formal Measurement

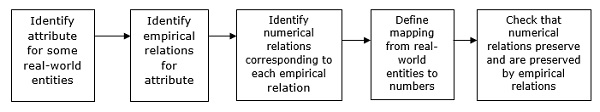

The key stages of measurement can be summarized as follows −

Measurement and Models

Models are useful for interpreting the behavior of the numerical elements of the real-world entities as well as measuring them. To help the measurement process, the model of the mapping should also be supplemented with a model of the mapping domain. A model should also specify how these entities are related to the attributes and how the characteristics relate.

Measurement is of two types −

- Direct measurement

- Indirect measurement

Direct Measurement

These are measurements of an attribute that can be taken without involving any other entity or attribute.

The following direct measures are commonly used in software engineering.

- Length of source code by LOC

- Duration of the testing process by elapsed time

- Number of defects discovered during the testing process by counting defects

- The time a programmer spends on a program

Indirect Measurement

These are measurements that are derived from the measurements of one or more other entities or attributes.

The following indirect measures are commonly used in software engineering.

$$\small Programmer\:Productivity = \frac{LOC \: produced }{Person \:months \:of \:effort}$$

$$\small Module\:Defect\:Density = \frac{Number \:of\:defects}{Module \:size}$$

$$\small Defect\:Detection\:Efficiency = \frac{Number \:of\:defects\:detected}{Total \:number \:of\:defects}$$

$$\small Requirement\:Stability = \frac{Number \:of\:initial\:requirements}{Total \:number \:of\:requirements}$$

$$\small Test\:Effectiveness\:Ratio = \frac{Number \:of\:items\:covered}{Total \:number \:of \:items}$$

$$\small System\:Spoilage = \frac{Effort \:spent\:for\:fixing\:faults}{Total \:project \:effort}$$
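The ratios above can be computed directly from the underlying direct measures. The following is a minimal sketch; all numbers are hypothetical, and the helper name `ratio` and the choice of units (KLOC, hours) are assumptions made for illustration.

```python
def ratio(numerator, denominator):
    """Divide two direct measures, rejecting a zero denominator."""
    if denominator == 0:
        raise ValueError("denominator must be non-zero")
    return numerator / denominator

# Hypothetical direct measures for one module and one project.
loc_produced = 12_000
person_months = 8
defects_found = 45
total_defects = 50
module_size_kloc = 12
fix_effort_hours = 300
total_effort_hours = 2_000

productivity = ratio(loc_produced, person_months)            # LOC per person-month
defect_density = ratio(defects_found, module_size_kloc)      # defects per KLOC
detection_efficiency = ratio(defects_found, total_defects)   # fraction of defects detected
system_spoilage = ratio(fix_effort_hours, total_effort_hours)
```

Each indirect measure is only as reliable as the direct measures it is built from, so the counting rules for LOC, defects, and effort must be fixed before the ratios are compared across modules.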

Measurement for Prediction

For allocating the appropriate resources to the project, we need to predict the effort, time, and cost for developing the project. The measurement for prediction always requires a mathematical model that relates the attributes to be predicted to some other attribute that we can measure now. Hence, a prediction system consists of a mathematical model together with a set of prediction procedures for determining the unknown parameters and interpreting the results.

Measurement Scales

Measurement scales are the mappings used for representing the empirical relation system. They are mainly of five types −

- Nominal Scale

- Ordinal Scale

- Interval Scale

- Ratio Scale

- Absolute Scale

Nominal Scale

It places the elements in a classification scheme. The classes will not be ordered. Each and every entity should be placed in a particular class or category based on the value of the attribute.

It has two major characteristics −

The empirical relation system consists only of different classes; there is no notion of ordering among the classes.

Any distinct numbering or symbolic representation of the classes is an acceptable measure, but there is no notion of magnitude associated with the numbers or symbols.

Ordinal Scale

It places the elements in an ordered classification scheme. It has the following characteristics −

The empirical relation system consists of classes that are ordered with respect to the attribute.

Any mapping that preserves the ordering is acceptable.

The numbers represent ranking only. Hence, addition, subtraction, and other arithmetic operations have no meaning.

Interval Scale

This scale captures information about the size of the intervals that separate the classes. Hence, it is more powerful than the nominal scale and the ordinal scale.

It has the following characteristics −

It preserves order like the ordinal scale.

It preserves the differences but not the ratio.

Addition and subtraction can be performed on this scale but not multiplication or division.

If an attribute is measurable on an interval scale, and M and M′ are mappings that satisfy the representation condition, then we can always find two numbers a and b such that,

M = aM′ + b
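A short sketch can make this concrete. It assumes temperature in Celsius and Fahrenheit as the attribute, a commonly cited interval-scale example that is not taken from this text; the variable names are hypothetical.

```python
import math

def to_fahrenheit(celsius):
    """Affine rescaling of the form a * value + b with a = 1.8 and b = 32."""
    return 1.8 * celsius + 32

c_low, c_mid, c_high = 10.0, 20.0, 40.0
f_low, f_mid, f_high = map(to_fahrenheit, (c_low, c_mid, c_high))

# Ratios of differences survive the rescaling...
diff_ratio_c = (c_high - c_mid) / (c_mid - c_low)
diff_ratio_f = (f_high - f_mid) / (f_mid - f_low)
differences_preserved = math.isclose(diff_ratio_c, diff_ratio_f)

# ...but ratios of the values themselves do not.
ratios_preserved = math.isclose(c_high / c_low, f_high / f_low)
```

Here `differences_preserved` is true and `ratios_preserved` is false, which is why statements such as "twice as much" are not meaningful on an interval scale.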

Ratio Scale

This is the most useful scale of measurement. Here, an empirical relation exists to capture ratios. It has the following characteristics −

It is a measurement mapping that preserves ordering, the size of intervals between the entities and the ratio between the entities.

There is a zero element, representing total lack of the attribute.

The measurement mapping must start at zero and increase at equal intervals, known as units.

All arithmetic operations can be applied.

Here, the mapping will be of the form

M = aM′

where a is a positive scalar.
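As a small illustration, converting a duration from hours to minutes is a rescaling of this kind with a = 60. The sketch below uses hypothetical test durations and a hypothetical helper `rescale`.

```python
def rescale(value, a):
    """Ratio-scale transformation: multiply by a positive scalar a."""
    if a <= 0:
        raise ValueError("a must be a positive scalar")
    return a * value

hours_a, hours_b = 6.0, 2.0  # hypothetical test durations in hours
minutes_a, minutes_b = rescale(hours_a, 60), rescale(hours_b, 60)

ratio_in_hours = hours_a / hours_b
ratio_in_minutes = minutes_a / minutes_b
zero_stays_zero = rescale(0.0, 60) == 0.0
```

The ratio between the two durations is the same in both units, and zero maps to zero, so a statement such as "three times as long" remains meaningful after the change of unit.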

Absolute Scale

On this scale, there will be only one possible measure for an attribute. Hence, the only possible transformation will be the identity transformation.

It has the following characteristics −

The measurement is made by counting the number of elements in the entity set.

The attribute always takes the form "number of occurrences of x in the entity".

There is only one possible measurement mapping, namely the actual count.

All arithmetic operations can be performed on the resulting count.

Empirical Investigations

Empirical Investigations involve the scientific investigation of any tool, technique, or method. This investigation mainly contains the following 4 principles.

- Choosing an investigation technique

- Stating the hypothesis

- Maintaining control over the variables

- Making the investigation meaningful

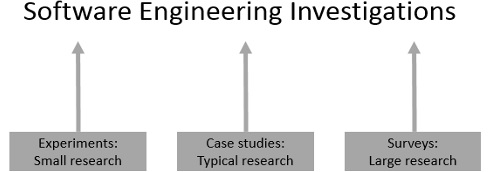

Choosing an Investigation Technique

The key components of Empirical investigation in software engineering are −

- Survey

- Case study

- Formal experiment

Survey

Survey is the retrospective study of a situation to document relationships and outcomes. It is always done after an event has occurred. For example, in software engineering, polls can be performed to determine how the users reacted to a particular method, tool, or technique to determine trends or relationships.

In this case, we have no control over the situation at hand. We can record a situation and compare it with a similar one.

Case Study

It is a research technique where you identify the key factors that may affect the outcome of an activity and then document the activity: its inputs, constraints, resources, and outputs.

Formal Experiment

It is a rigorous controlled investigation of an activity, where the key factors are identified and manipulated to document their effects on the outcome.

A particular investigation method can be chosen according to the following guidelines −

If the activity has already occurred, we can perform survey or case study. If it is yet to occur, then case study or formal experiment may be chosen.

If we have a high level of control over the variables that can affect the outcome, then we can use an experiment. If we have no control over the variable, then case study will be a preferred technique.

If replication is not possible at higher levels, then experiment is not possible.

If the cost of replication is low, then we can consider experiment.

Stating the Hypothesis

To support the choice of a particular investigation technique, the goal of the research should be expressed as a hypothesis we want to test. The hypothesis is the tentative theory or supposition that the programmer thinks explains the behavior they want to explore.

Maintaining Control over Variables

After stating the hypothesis, we next have to decide which variables affect its truth and how much control we have over them. This is essential because the key discriminator between experiments and case studies is the degree of control over the variables that affect the behavior.

A state variable is a factor that characterizes the project and can also influence the evaluation results. In a formal experiment, it is used to distinguish the control situation from the experimental one. If we cannot differentiate control from experiment, a case study is the preferred technique.

For example, if we want to determine whether a change in the programming language can affect the productivity of the project, then the language will be a state variable. Suppose we are currently using FORTRAN and want to replace it with Ada. Then FORTRAN is the control language and Ada is the experimental one.

Making the Investigation Meaningful

The results of an experiment are usually more generalizable than case study or survey. The results of the case study or survey can normally be applicable only to a particular organization. Following points prove the efficiency of these techniques to answer a variety of questions.

Confirming theories and conventional wisdom

Case studies or surveys can be used to confirm the effectiveness and utility of the conventional wisdom and many other standards, methods, or tools in a single organization. However, a formal experiment can investigate the situations in which the claims are generally true.

Exploring relationships

The relationship among various attributes of resources and software products can be suggested by a case study or survey.

For example, a survey of completed projects can reveal that software written in a particular language has fewer faults than software written in other languages.

Understanding and verifying these relationships is essential to the success of any future projects. Each of these relationships can be expressed as a hypothesis and a formal experiment can be designed to test the degree to which the relationships hold. Usually, the value of one particular attribute is observed by keeping other attributes constant or under control.

Evaluating the accuracy of models

Models are usually used to predict the outcome of an activity or to guide the use of a method or tool. They present a particularly difficult problem when designing an experiment or case study, because their predictions often affect the outcome. Project managers often turn the predictions into targets for completion. This effect is common when cost and schedule models are used.

Some models such as reliability models do not influence the outcome, since reliability measured as mean time to failure cannot be evaluated until the software is ready for use in the field.

Validating measures

There are many software measures to capture the value of an attribute. Hence, a study must be conducted to test whether a given measure reflects the changes in the attribute it is supposed to capture. Validation is performed by correlating one measure with another. A second measure which is also a direct and valid measure of the affecting factor should be used to validate. Such measures are not always available or easy to measure. Also, the measures used must conform to human notions of the factor being measured.

Software Measurement

The framework for software measurement is based on three principles −

- Classifying the entities to be examined

- Determining relevant measurement goals

- Identifying the level of maturity that the organization has reached

Classifying the Entities to be Examined

In software engineering, mainly three classes of entities exist. They are −

- Processes

- Products

- Resources

All of these entities have internal as well as external attributes.

Internal attributes are those that can be measured purely in terms of the process, product, or resource itself. For example: Size, complexity, dependency among modules.

External attributes are those that can be measured only with respect to its relation with the environment. For example: The total number of failures experienced by a user, the length of time it takes to search the database and retrieve information.

The different attributes that can be measured for each of the entities are as follows −

Processes

Processes are collections of software-related activities. Following are some of the internal attributes that can be measured directly for a process −

The duration of the process or one of its activities

The effort associated with the process or one of its activities

The number of incidents of a specified type arising during the process or one of its activities

The different external attributes of a process are cost, controllability, effectiveness, quality and stability.

Products

Products are not only the items that the management is committed to deliver but also any artifact or document produced during the software life cycle.

The different internal product attributes are size, effort, cost, specification, length, functionality, modularity, reuse, redundancy, and syntactic correctness. Among these, size, effort, and cost are relatively easier to measure than the others.

The different external product attributes are usability, integrity, efficiency, testability, reusability, portability, and interoperability. These attributes describe not only the code but also the other documents that support the development effort.

Resources

These are entities required by a process activity. It can be any input for the software production. It includes personnel, materials, tools and methods.

The different internal attributes for the resources are age, price, size, speed, memory size, temperature, etc. The different external attributes are productivity, experience, quality, usability, reliability, comfort etc.

Determining Relevant Measurement Goals

A particular measurement will be useful only if it helps to understand the process or one of its resultant products. The improvement in the process or products can be performed only when the project has clearly defined goals for processes and products. A clear understanding of goals can be used to generate suggested metrics for a given project in the context of a process maturity framework.

The Goal-Question-Metric (GQM) paradigm

The GQM approach provides a framework involving the following three steps −

Listing the major goals of the development or maintenance project

Deriving the questions from each goal that must be answered to determine if the goals are being met

Deciding what must be measured in order to be able to answer the questions adequately

To use the GQM paradigm, we first express the overall goals of the organization. Then, we generate questions whose answers tell us whether the goals are being met. Finally, we analyze each question in terms of the measurements we need in order to answer it.

Typical goals are expressed in terms of productivity, quality, risk, customer satisfaction, etc. Goals and questions are to be constructed in terms of their audience.

To help generate the goals, questions, and metrics, Basili & Rombach provided a series of templates.

Purpose − To (characterize, evaluate, predict, motivate, etc.) the (process, product, model, metric, etc.) in order to understand, assess, manage, engineer, learn, improve, etc. Example: To characterize the product in order to learn it.

Perspective − Examine the (cost, effectiveness, correctness, defects, changes, product measures, etc.) from the viewpoint of the developer, manager, customer, etc. Example: Examine the defects from the viewpoint of the customer.

Environment − The environment consists of the following: process factors, people factors, problem factors, methods, tools, constraints, etc. Example: The customers of this software are those who have no knowledge about the tools.
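The goal, question, and metric hierarchy can be captured in a small data structure. The following is a minimal sketch: the class names, the questions, and the metric names are hypothetical, while the purpose, perspective, and environment strings reuse the template examples above.

```python
from dataclasses import dataclass, field

@dataclass
class Question:
    text: str
    metrics: list[str] = field(default_factory=list)

@dataclass
class Goal:
    purpose: str
    perspective: str
    environment: str
    questions: list[Question] = field(default_factory=list)

    def all_metrics(self):
        """Collect the distinct metrics needed to answer every question."""
        seen = []
        for question in self.questions:
            for metric in question.metrics:
                if metric not in seen:
                    seen.append(metric)
        return seen

goal = Goal(
    purpose="To characterize the product in order to learn it",
    perspective="Examine the defects from the viewpoint of the customer",
    environment="The customers have no knowledge about the tools",
    questions=[
        Question("How many defects do customers report?",
                 ["defects reported per release"]),
        Question("How quickly are reported defects fixed?",
                 ["mean fix time", "defects reported per release"]),
    ],
)
metrics_to_collect = goal.all_metrics()
```

Deriving the metric list from the questions, rather than writing it down separately, keeps every collected metric traceable to a goal.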

Measurement and Process Improvement

Normally measurement is useful for −

- Understanding the process and products

- Establishing a baseline

- Assessing and predicting the outcome

According to the maturity level of the process given by SEI, the type of measurement and the measurement program will be different. Following are the different measurement programs that can be applied at each of the maturity levels.

Level 1: Ad hoc

At this level, the inputs are ill-defined, while the outputs are expected. The transition from input to output is undefined and uncontrolled. For this level of process maturity, baseline measurements are needed to provide a starting point for measuring.

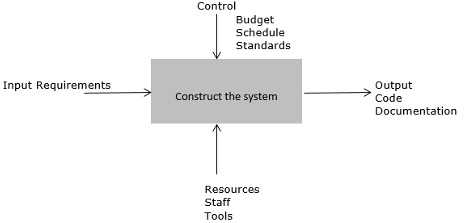

Level 2: Repeatable

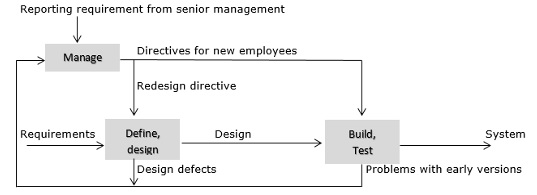

At this level, the inputs and outputs of the process, constraints, and resources are identifiable. A repeatable process can be described by the following diagram.

The input measures can be the size and volatility of the requirements. The output may be measured in terms of system size, the resources in terms of staff effort, and the constraints in terms of cost and schedule.

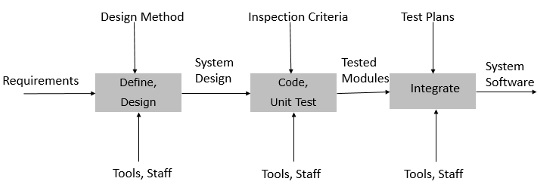

Level 3: Defined

At this level, intermediate activities are defined, and their inputs and outputs are known and understood. A simple example of the defined process is described in the following figure.

The input to and the output from the intermediate activities can be examined, measured, and assessed.

Level 4: Managed

At this level, the feedback from the early project activities can be used to set priorities for the current activities and later for the project activities. We can measure the effectiveness of the process activities. The measurement reflects the characteristics of the overall process and of the interaction among and across major activities.

Level 5: Optimizing

At this level, the measures from activities are used to improve the process by removing and adding process activities and changing the process structure dynamically in response to measurement feedback. Thus, the process change can affect the organization and the project as well as the process. The process will act as sensors and monitors, and we can change the process significantly in response to warning signs.

At a given maturity level, we can collect the measurements for that level and all levels below it.

Identifying the Level of Maturity

Process maturity suggests measuring only what is visible. Thus, the combination of process maturity with GQM will provide the most useful measures.

At level 1, the project is likely to have ill-defined requirements. At this level, the measurement of requirement characteristics is difficult.

At level 2, the requirements are well-defined and the additional information such as the type of each requirement and the number of changes to each type can be collected.

At level 3, intermediate activities are defined with entry and exit criteria for each activity.

The goal and question analysis will be the same, but the metric will vary with maturity. The more mature the process, the richer will be the measurements. The GQM paradigm, in concert with the process maturity, has been used as the basis for several tools that assist managers in designing measurement programs.

GQM helps to understand the need for measuring the attribute, and process maturity suggests whether we are capable of measuring it in a meaningful way. Together they provide a context for measurement.

Software Measurement Validation

Validating the measurement of a software system involves two steps −

- Validating the measurement systems

- Validating the prediction systems

Validating the Measurement Systems

Measures or measurement systems are used to assess an existing entity by numerically characterizing one or more of its attributes. A measure is valid if it accurately characterizes the attribute it claims to measure.

Validating a software measurement system is the process of ensuring that the measure is a proper numerical characterization of the claimed attribute by showing that the representation condition is satisfied.

For validating a measurement system, we need both a formal model that describes entities and a numerical mapping that preserves the attribute that we are measuring. For example, if there are two programs P1 and P2, and we concatenate them, then we expect any measure m of length to satisfy

m(P1+P2) = m(P1) + m(P2)

If a program P1 has more length than program P2, then any measure m should also satisfy,

m(P1) > m(P2)

The length of the program can be measured by counting the lines of code. If this count satisfies the above relationships, we can say that the lines of code are a valid measure of the length.
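These two checks can be run against a concrete counting rule. The sketch below assumes one possible rule, counting non-blank lines; the function name `loc` and the two tiny programs are hypothetical.

```python
def loc(program_text):
    """Count non-blank lines; one possible counting rule among many."""
    return sum(1 for line in program_text.splitlines() if line.strip())

p1 = "x = 1\ny = 2\nprint(x + y)\n"
p2 = "z = 3\nprint(z)\n"
concatenated = p1 + p2

additive = loc(concatenated) == loc(p1) + loc(p2)  # m(P1+P2) = m(P1) + m(P2)
ordered = loc(p1) > loc(p2)                        # P1 is the longer program
```

The additivity check relies on each program ending with a newline; if P1 did not, plain string concatenation would merge its last line with the first line of P2, which shows why the counting rules must be stated precisely.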

The formal requirement for validating a measure involves demonstrating that it characterizes the stated attribute in the sense of measurement theory. Validation can be used to make sure that the measures are defined properly and are consistent with the entity's real-world behavior.

Validating the Prediction Systems

Prediction systems are used to predict some attribute of a future entity involving a mathematical model with associated prediction procedures.

Validating prediction systems in a given environment is the process of establishing the accuracy of the prediction system by empirical means, i.e. by comparing the model performance with known data in the given environment. It involves experimentation and hypothesis testing.

The degree of accuracy acceptable for validation depends upon whether the prediction system is deterministic or stochastic as well as the person doing the assessment. Some stochastic prediction systems are more stochastic than others.

Examples of stochastic prediction systems are systems such as software cost estimation, effort estimation, schedule estimation, etc. Hence, to validate a prediction system formally, we must decide how stochastic it is, then compare the performance of the prediction system with known data.
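One commonly used way to compare predictions with known data is the mean magnitude of relative error (MMRE). It is shown here as an assumed choice of accuracy measure, not as the only option; the effort figures and the 25% acceptance threshold are hypothetical.

```python
def mmre(actuals, predictions):
    """Mean magnitude of relative error: mean of |actual - predicted| / actual."""
    if len(actuals) != len(predictions) or not actuals:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [abs(a - p) / a for a, p in zip(actuals, predictions)]
    return sum(errors) / len(errors)

# Hypothetical effort figures in person-months for four completed projects.
actual_effort = [100.0, 200.0, 50.0, 400.0]
predicted_effort = [110.0, 180.0, 60.0, 400.0]

error = mmre(actual_effort, predicted_effort)
acceptable = error <= 0.25  # assumed acceptance criterion, chosen by the assessor
```

Because the acceptable error depends on how stochastic the prediction system is and on who is doing the assessment, the threshold should be agreed before the comparison is made.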

Software Measurement Metrics

Software metrics is a standard of measure that contains many activities which involve some degree of measurement. It can be classified into three categories: product metrics, process metrics, and project metrics.

Product metrics describe the characteristics of the product such as size, complexity, design features, performance, and quality level.

Process metrics can be used to improve software development and maintenance. Examples include the effectiveness of defect removal during development, the pattern of testing defect arrival, and the response time of the fix process.

Project metrics describe the project characteristics and execution. Examples include the number of software developers, the staffing pattern over the life cycle of the software, cost, schedule, and productivity.

Some metrics belong to multiple categories. For example, the in-process quality metrics of a project are both process metrics and project metrics.

Scope of Software Metrics

Software metrics contains many activities which include the following −

- Cost and effort estimation

- Productivity measures and model

- Data collection

- Quality models and measures

- Reliability models

- Performance and evaluation models

- Structural and complexity metrics

- Capability maturity assessment

- Management by metrics

- Evaluation of methods and tools

Software measurement is a diverse collection of these activities that range from models predicting software project costs at a specific stage to measures of program structure.

Cost and Effort Estimation

Effort is expressed as a function of one or more variables such as the size of the program, the capability of the developers and the level of reuse. Cost and effort estimation models have been proposed to predict the project cost during early phases in the software life cycle. The different models proposed are −

- Boehm’s COCOMO model

- Putnam’s SLIM model

- Albrecht’s function point model
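As an illustration of effort expressed as a function of size, the sketch below uses the Basic COCOMO form, effort = a × KLOC^b, with the coefficients Boehm published for the three project modes. The function name and the 10 KLOC project are hypothetical, and the fuller COCOMO variants add cost drivers that are not modeled here.

```python
# Basic COCOMO coefficients (a, b) for the three project modes.
COEFFICIENTS = {
    "organic": (2.4, 1.05),
    "semi-detached": (3.0, 1.12),
    "embedded": (3.6, 1.20),
}

def basic_cocomo_effort(kloc, mode="organic"):
    """Estimated effort in person-months: a * KLOC ** b."""
    if kloc <= 0:
        raise ValueError("size must be positive")
    a, b = COEFFICIENTS[mode]
    return a * kloc ** b

effort_organic = basic_cocomo_effort(10)               # 10 KLOC, organic mode
effort_embedded = basic_cocomo_effort(10, "embedded")  # same size, embedded mode
```

For the same size, the embedded mode yields a larger estimate than the organic mode, which reflects the tighter constraints such projects work under.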

Productivity Model and Measures

Productivity can be considered as a function of the value and the cost. Each can be decomposed into different measurable components such as size, functionality, time, and money. Different possible components of a productivity model can be expressed in the following diagram.

Data Collection

The quality of any measurement program is clearly dependent on careful data collection. Data collected can be distilled into simple charts and graphs so that the managers can understand the progress and problem of the development. Data collection is also essential for scientific investigation of relationships and trends.

Quality Models and Measures

Quality models have been developed for the measurement of quality of the product without which productivity is meaningless. These quality models can be combined with productivity model for measuring the correct productivity. These models are usually constructed in a tree-like fashion. The upper branches hold important high level quality factors such as reliability and usability.

The notion of divide and conquer approach has been implemented as a standard approach to measuring software quality.

Reliability Models

Most quality models include reliability as a component factor; however, the need to predict and measure reliability has led to a separate specialization in reliability modeling and prediction. The basic problem in reliability theory is to predict when a system will eventually fail.

Performance Evaluation and Models

It includes externally observable system performance characteristics such as response times and completion rates, and the internal working of the system such as the efficiency of algorithms. It is another aspect of quality.

Structural and Complexity Metrics

Here we measure the structural attributes of representations of the software, which are available in advance of execution. Then we try to establish empirically predictive theories to support quality assurance, quality control, and quality prediction.

Capability Maturity Assessment

This model can assess many different attributes of development including the use of tools, standard practices and more. It is based on the key practices that every good contractor should be using.

Management by Metrics

For managing the software project, measurement has a vital role. For checking whether the project is on track, users and developers can rely on the measurement-based chart and graph. The standard set of measurements and reporting methods are especially important when the software is embedded in a product where the customers are not usually well-versed in software terminology.

Evaluation of Methods and Tools

This depends on the experimental design, proper identification of factors likely to affect the outcome and appropriate measurement of factor attributes.

Data Manipulation

Software metrics is a standard of measure that contains many activities, which involves some degree of measurement. The success in the software measurement lies in the quality of the data collected and analyzed.

What is Good Data?

The data collected can be considered good data if it can produce the answers for the following questions −

Are they correct? − Data can be considered correct if it was collected according to the exact rules of the definition of the metric.

Are they accurate? − Accuracy refers to the difference between the data and the actual value.

Are they appropriately precise? − Precision deals with the number of decimal places needed to express the data.

Are they consistent? − Data can be considered consistent if it doesn't show a major difference from one measuring device to another.

Are they associated with a particular activity or time period? − If the data is associated with a particular activity or time period, then it should be clearly specified in the data.

Can they be replicated? − Normally, the investigations such as surveys, case studies, and experiments are frequently repeated under different circumstances. Hence, the data should also be possible to replicate easily.

How to Define the Data?

Data that is collected for measurement purpose is of two types −

Raw data − Raw data results from the initial measurement of process, products, or resources. For example: Weekly timesheet of the employees in an organization.

Refined data − Refined data results from extracting essential data elements from the raw data for deriving values for attributes.

Data can be defined according to the following points −

- Location

- Timing

- Symptoms

- End result

- Mechanism

- Cause

- Severity

- Cost

How to Collect Data?

Collection of data requires human observation and reporting. Managers, system analysts, programmers, testers, and users must record raw data on forms. To collect accurate and complete data, it is important to −

Keep procedures simple

Avoid unnecessary recording

Train employees in the need to record data and in the procedures to be used

Provide the results of data capture and analysis to the original providers promptly and in a useful form that will assist them in their work

Validate all data collected at a central collection point

Planning of data collection involves several steps −

Decide which products to measure based on the GQM analysis

Make sure that the product is under configuration control

Decide exactly which attributes to measure and how indirect measures will be derived

Once the set of metrics is clear and the set of components to be measured has been identified, devise a scheme for identifying each activity involved in the measurement process

Establish a procedure for handling the forms, analyzing the data, and reporting the results

Data collection planning must begin when project planning begins. Actual data collection takes place during many phases of development.

For example − Some data related to project personnel can be collected at the start of the project, while other data collection, such as effort, begins at the start of the project and continues through operation and maintenance.

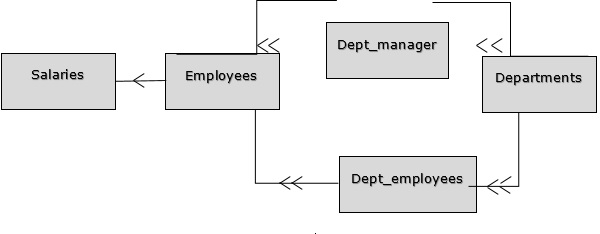

How to Store and Extract Data

In software engineering, data should be stored in a database and set up using a Database Management System (DBMS). An example of a database structure is shown in the following figure. This database will store the details of different employees working in different departments of an organization.

In the above diagram, each box is a table in the database, and the arrow denotes the many-to-one mapping from one table to another. The mappings define the constraints that preserve the logical consistency of the data.

Once the database is designed and populated with data, we can make use of the data manipulation languages to extract the data for analysis.
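The following sketch shows this end to end with Python's built-in `sqlite3` module and SQL as the data manipulation language. The two-table schema, the column names, and the rows are hypothetical stand-ins for the employee and department example; the many-to-one mapping is expressed as a foreign key.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database
conn.executescript("""
    CREATE TABLE department (
        id   INTEGER PRIMARY KEY,
        name TEXT NOT NULL
    );
    CREATE TABLE employee (
        id            INTEGER PRIMARY KEY,
        name          TEXT NOT NULL,
        department_id INTEGER NOT NULL REFERENCES department(id),
        hours_logged  REAL NOT NULL
    );
""")
conn.executemany("INSERT INTO department VALUES (?, ?)",
                 [(1, "Testing"), (2, "Development")])
conn.executemany("INSERT INTO employee VALUES (?, ?, ?, ?)",
                 [(1, "A", 1, 30.0), (2, "B", 2, 40.0), (3, "C", 2, 35.0)])

# Extract refined data: total effort per department.
rows = conn.execute("""
    SELECT d.name, SUM(e.hours_logged)
    FROM employee AS e
    JOIN department AS d ON d.id = e.department_id
    GROUP BY d.name
    ORDER BY d.name
""").fetchall()
conn.close()
```

The query turns raw per-employee hours into a refined per-department total, which is the kind of derived value an analysis would start from.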

Analyzing Software Measurement Data

After collecting relevant data, we have to analyze it in an appropriate way. There are three major items to consider for choosing the analysis technique.

- The nature of data

- The purpose of the experiment

- Design considerations

The Nature of Data

To analyze the data, we must also look at the larger population represented by the data as well as the distribution of that data.

Sampling, population, and data distribution

Sampling is the process of selecting a set of data from a large population. Sample statistics describe and summarize the measures obtained from a group of experimental subjects.

Population parameters represent the values that would be obtained if all possible subjects were measured.

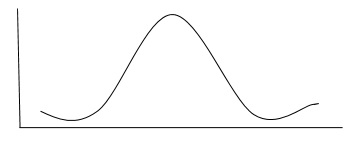

The population or sample can be described by the measures of central tendency such as mean, median, and mode and measures of dispersion such as variance and standard deviation. Many sets of data are distributed normally as shown in the following graph.

As shown above, the data is distributed symmetrically about the mean, which is the defining characteristic of a normal distribution.

Other distributions also exist in which the data is skewed, so that there are more data points on one side of the mean than on the other. For example, if most of the data lies to the left of the mean and a long tail extends to the right, the distribution is said to be skewed to the right (positively skewed), because skew is conventionally named after the direction of the longer tail.
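The measures of central tendency and dispersion mentioned above can be computed with Python's standard `statistics` module. This is only a sketch; the module sizes below are made-up sample data, chosen so that one very large value pulls the mean well away from the median, which is a typical sign of a skewed distribution.

```python
import statistics

# Hypothetical module sizes in lines of code (made-up sample data).
sizes = [120, 150, 150, 180, 200, 220, 950]

mean = statistics.mean(sizes)
median = statistics.median(sizes)
mode = statistics.mode(sizes)
variance = statistics.variance(sizes)  # sample variance (n - 1 denominator)
std_dev = statistics.stdev(sizes)      # sample standard deviation

# Count how many points fall on each side of the mean.
below_mean = sum(1 for s in sizes if s < mean)
above_mean = sum(1 for s in sizes if s > mean)
print(mean, median, mode, std_dev, below_mean, above_mean)
```

For symmetric data the two counts would be roughly equal and the mean would sit close to the median.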

The Purpose of the Experiment

Normally, experiments are conducted −

- To confirm a theory

- To explore a relationship

To achieve each of these, the objective should be expressed formally in terms of the hypothesis, and the analysis must address the hypothesis directly.

To confirm a theory

The investigation must be designed to explore the truth of a theory. The theory usually states that the use of a certain method, tool, or technique has a particular effect on the subjects, making it better in some way than another.

There are two cases of data to be considered: normal data and non-normal data.

If the data comes from a normal distribution and there are two groups to compare, Student's t-test can be used for the analysis. If there are more than two groups to compare, analysis of variance (ANOVA), which is based on the F-statistic, can be used.

If the data is non-normal, it can be ranked and then analyzed using the Kruskal-Wallis test.
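For the two-group, normal-data case, the test statistic can be computed by hand. The sketch below implements the pooled-variance form of Student's t-test, which additionally assumes equal variances in both groups. The function name and the defect counts are hypothetical. It returns only the statistic and the degrees of freedom; the resulting value would still have to be compared against a t-distribution table (or a statistics library) to obtain a significance level.

```python
import math
import statistics

def pooled_t_statistic(group_a, group_b):
    """Return (t, degrees_of_freedom) for Student's two-sample t-test
    with pooled variance (assumes normal data and equal variances)."""
    n_a, n_b = len(group_a), len(group_b)
    mean_a, mean_b = statistics.mean(group_a), statistics.mean(group_b)
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / df
    t = (mean_a - mean_b) / math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return t, df

# Hypothetical defect counts per module, with and without a review technique.
with_reviews = [4, 5, 3, 4, 4]
without_reviews = [6, 7, 5, 6, 6]
t, df = pooled_t_statistic(with_reviews, without_reviews)
print(t, df)
```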

To explore a relationship

Investigations are designed to determine the relationship among data points describing one variable or multiple variables.

There are three techniques to answer the questions about a relationship: box plots, scatter plots, and correlation analysis.

A box plot can represent the summary of the range of a set of data.

A scatter plot represents the relationship between two variables.

Correlation analysis uses statistical methods to confirm whether there is a true relationship between two attributes.

For normally distributed values, use the Pearson correlation coefficient to check whether or not the two variables are highly correlated.

For non-normal data, rank the data and use the Spearman rank correlation coefficient as a measure of association. Another measure for non-normal data is the Kendall robust correlation coefficient, which investigates the relationship among pairs of data points and can identify a partial correlation.
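The relationship between the two coefficients can be sketched in a few lines: the Spearman coefficient is the Pearson coefficient applied to the ranks of the data. The helper names and the sample data below are illustrative assumptions, and tied values are given the average of their ranks.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def ranks(values):
    """Assign 1-based ranks, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(xs, ys):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(ranks(xs), ranks(ys))

# Hypothetical data: module size (LOC) and defects found per module.
loc = [100, 200, 300, 400, 500]
defects = [1, 2, 4, 8, 16]
print(pearson(loc, defects), spearman(loc, defects))
```

Because the defect counts grow monotonically but not linearly with size, the rank-based coefficient reports a perfect association while the Pearson coefficient stays below 1.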

If the ranking contains a large number of tied values, a chi-squared test on a contingency table can be used to test the association between the variables. Similarly, linear regression can be used to generate an equation to describe the relationship between the variables.

For more than two variables, multivariate regression can be used.
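For the two-variable case, the regression equation can be obtained with an ordinary least squares fit. The following is a minimal sketch; the function name and the size and effort figures are invented for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: size in KLOC and effort in person-months.
size_kloc = [1, 2, 3, 4]
effort_pm = [3, 5, 7, 9]
slope, intercept = fit_line(size_kloc, effort_pm)
print(f"effort = {intercept:.1f} + {slope:.1f} * size")
```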

Design Considerations

The design of the investigation must be considered when choosing the analysis technique. At the same time, the complexity of the analysis can influence the design chosen. For example, a design with more than two groups calls for the F-statistic, whereas Student's t-test applies to a design with two groups.

For complex factorial designs with more than two factors, more sophisticated tests of association and significance are needed.

Statistical techniques can be used to account for the effect of one set of variables on others, or to compensate for the timing or learning effects.

Internal Product Attributes

Internal product attributes describe software products in a way that depends only on the product itself. The major reason for measuring internal product attributes is that doing so helps to monitor and control the products during development.

Measuring Internal Product Attributes

The main internal product attributes include size and structure. Size can be measured statically, without having to execute the product. The size of the product tells us about the effort needed to create it. Similarly, the structure of the product plays an important role in planning the maintenance of the product.

Measuring the Size

Software size can be described with three attributes −

Length − It is the physical size of the product.

Functionality − It describes the functions supplied by the product to the user.

Complexity − Complexity is of different types, such as −

Problem complexity − Measures the complexity of the underlying problem.

Algorithmic complexity − Measures the complexity of the algorithm implemented to solve the problem.

Structural complexity − Measures the structure of the software used to implement the algorithm.

Cognitive complexity − Measures the effort required to understand the software.

The measurement of these three attributes can be described as follows −

Length

There are three development products whose size measurement is useful for predicting the effort needed for development. They are specification, design, and code.

Specification and design

These documents usually combine text, graphs, and special mathematical diagrams and symbols. Specification measurement can be used to predict the length of the design, which in turn is a predictor of code length.

The diagrams in the documents have a uniform syntax, such as labelled digraphs, data-flow diagrams, or Z schemas. Since specification and design documents consist of text and diagrams, their length can be measured in terms of a pair of numbers representing the text length and the diagram length.

For these measurements, the atomic objects are to be defined for different types of diagrams and symbols.

The atomic objects for data flow diagrams are processes, external entities, data stores, and data flows. The atomic entities for algebraic specifications are sorts, functions, operations, and axioms. The atomic entities for Z schemas are the various lines appearing in the specification.

Code

Code can be produced in different ways, such as with procedural languages, object orientation, and visual programming. The most commonly used traditional measure of source code length is lines of code (LOC).

The total length,

LOC = NCLOC + CLOC

i.e.,

LOC = Non-commented LOC + Commented LOC
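The decomposition above can be illustrated with a small counter. This sketch makes several assumptions that the text does not specify: it handles Python-style `#` comments only, ignores blank lines, counts a line as a comment line only if it starts with `#`, and treats a code line with a trailing comment as a non-commented line.

```python
def count_loc(source):
    """Return (ncloc, cloc) for Python-style source text.

    Assumptions of this sketch: blank lines are ignored, a line counts as a
    comment line only if it starts with '#', and docstrings count as code.
    """
    ncloc = cloc = 0
    for line in source.splitlines():
        stripped = line.strip()
        if not stripped:
            continue  # blank lines are counted in neither total
        if stripped.startswith("#"):
            cloc += 1
        else:
            ncloc += 1
    return ncloc, cloc

sample = """# compute a total
total = 0

for n in range(3):
    total += n  # trailing comments stay with the code line
"""
ncloc, cloc = count_loc(sample)
print("NCLOC =", ncloc, "CLOC =", cloc, "LOC =", ncloc + cloc)
```

Different counting rules give different totals for the same program, so the rules in use should always be stated alongside any LOC figure.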

Apart from lines of code, other alternatives, such as the size and complexity measures suggested by Maurice Halstead, can also be used for measuring the length.

Halstead's software science attempted to capture different attributes of a program. He proposed three internal program attributes, namely length, vocabulary, and volume, that reflect different views of size.

He began by defining a program P as a collection of tokens, each classified as either an operator or an operand. The basic metrics for these tokens are:

μ1 = Number of unique operators

μ2 = Number of unique operands

N1 = Total occurrences of operators

N2 = Total occurrences of operands

The length of P can be defined as

$$N = N_{1}+ N_{2}$$

The vocabulary of P is

$$\mu =\mu _{1}+\mu _{2}$$

The volume of the program, interpreted as the number of mental comparisons needed to write a program of length N, is

$$V = N\times \log_{2} \mu$$

The program level of a program P of volume V is,

$$L = \frac{V^\ast}{V}$$

Where, $V^\ast$ is the potential volume, i.e., the volume of the minimal size implementation of P

The inverse of level is the difficulty −

$$D = 1\diagup L$$

According to Halstead's theory, we can calculate an estimate of L as

$${L}' = 1\diagup D = \frac{2}{\mu_{1}} \times \frac{\mu_{2}}{N_{2}}$$

Similarly, the estimated program length is $\hat{N} = \mu_{1}\times \log_{2}\mu_{1}+\mu_{2}\times \log_{2}\mu_{2}$

The effort required to generate P is given by,

$$E = V\diagup L = \frac{\mu_{1}N_{2}N\log_{2}\mu}{2\mu_{2}}$$

where E is measured in the elementary mental discriminations needed to understand P.
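The formulas above can be collected into one small function. This is a sketch only: it takes N2 to be the total number of operand occurrences, uses the estimated level L' in place of L when computing difficulty and effort, and is run on made-up token counts.

```python
import math

def halstead(mu1, mu2, n1, n2):
    """Compute Halstead measures from token counts.

    mu1, mu2: number of unique operators / unique operands.
    n1, n2:   total occurrences of operators / operands.
    """
    length = n1 + n2                        # N = N1 + N2
    vocabulary = mu1 + mu2                  # mu = mu1 + mu2
    volume = length * math.log2(vocabulary) # V = N * log2(mu)
    est_level = (2 / mu1) * (mu2 / n2)      # L' = (2 / mu1) * (mu2 / N2)
    difficulty = 1 / est_level              # D = 1 / L'
    effort = volume / est_level             # E = V / L'
    est_length = mu1 * math.log2(mu1) + mu2 * math.log2(mu2)
    return {
        "length": length,
        "vocabulary": vocabulary,
        "volume": volume,
        "estimated_level": est_level,
        "difficulty": difficulty,
        "effort": effort,
        "estimated_length": est_length,
    }

# Hypothetical counts for a very small program.
measures = halstead(mu1=4, mu2=4, n1=10, n2=6)
for name, value in measures.items():
    print(f"{name}: {value:.2f}")
```

With these counts the length is 16, the vocabulary is 8, and the volume is 16 × log2(8) = 48.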

The other alternatives for measuring the length are −

In terms of the number of bytes of computer storage required for the program text

In terms of the number of characters in the program text

Object-oriented development suggests new ways to measure length. Pfleeger et al. found that a count of objects and methods led to more accurate productivity estimates than those using lines of code.

Functionality

The amount of functionality inherent in a product gives a measure of product size. There are many different methods for measuring the functionality of software products. One such method, Albrecht's Function Point method, is discussed in the next chapter.

Albrecht's Function Point Method

Function point metrics provide a standardized method for measuring the various functions of a software application. Function point analysis measures functionality from the user's point of view, that is, on the basis of what the user requests and receives in return.

The Function Point measure, originally conceived by Albrecht, gained popularity with the inception of the International Function Point Users Group (IFPUG) in 1986. In 2002, IFPUG Function Points became an international standard, ISO/IEC 20926.

What is a Function Point?

FP (Function Point) is the most widespread functional metric for quantifying a software application. It is based on five user-identifiable logical "functions", which are divided into two data function types and three transactional function types. For a given software application, each of these elements is quantified and weighted by counting its characteristic elements, such as file references or logical fields.

The resulting numbers (Unadjusted FP) are grouped into Added, Changed, or Deleted functions sets, and combined with the Value Adjustment Factor (VAF) to obtain the final number of FP. A distinct final formula is used for each count type: Application, Development Project, or Enhancement Project.

Applying Albrecht's Function Point Method

Let us now understand how to apply Albrecht's Function Point method. Its procedure is as follows −

Determine the number of components (EI, EO, EQ, ILF, and ELF)

EI − The number of external inputs. These are elementary processes in which data passes across the boundary from outside to inside. In an example library database system, entering an existing patron's library card number is an external input.

EO − The number of external outputs. These are elementary processes in which derived data passes across the boundary from inside to outside. In an example library database system, displaying a list of books checked out to a patron is an external output.

EQ − The number of external queries. These are elementary processes with both input and output components that result in data retrieval from one or more internal logical files and external interface files. In an example library database system, determining what books are currently checked out to a patron is an external query.

ILF − The number of internal logical files. These are user-identifiable groups of logically related data that reside entirely within the application's boundary and are maintained through external inputs. In an example library database system, the file of books in the library is an internal logical file.

ELF − The number of external interface files (abbreviated EIF in IFPUG terminology). These are user-identifiable groups of logically related data that are used for reference purposes only and reside entirely outside the system. In an example library database system, the file that contains transactions in the library's billing system is an external interface file.

Compute the Unadjusted Function Point Count (UFC)

Rate each component as low, average, or high.

For transactions (EI, EO, and EQ), the rating is based on FTR and DET.

FTR − File types referenced: the number of files updated or referenced.

DET − Data element types: the number of user-recognizable fields.

Based on the following table, an EI that references 2 files and 10 data elements would be ranked as average.

| FTRs | 1-5 DETs | 6-15 DETs | >15 DETs |
|---|---|---|---|
| 0-1 | Low | Low | Average |
| 2-3 | Low | Average | High |
| >3 | Average | High | High |
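The transaction rating table can be expressed as a small lookup. This is a sketch with a hypothetical function name; the thresholds are taken directly from the table above.

```python
def rate_transaction(ftrs, dets):
    """Rate an EI, EO, or EQ as Low/Average/High from its FTR and DET counts."""
    row = 0 if ftrs <= 1 else 1 if ftrs <= 3 else 2   # FTR bands: 0-1, 2-3, >3
    col = 0 if dets <= 5 else 1 if dets <= 15 else 2  # DET bands: 1-5, 6-15, >15
    table = [
        ["Low", "Low", "Average"],
        ["Low", "Average", "High"],
        ["Average", "High", "High"],
    ]
    return table[row][col]

# An EI that references 2 files and 10 data elements.
print(rate_transaction(ftrs=2, dets=10))
```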

For files (ILF and ELF), the rating is based on the RET and DET.

RET − Record element types: the number of user-recognizable subgroups of data elements in an ILF or ELF.

DET − The number of user-recognizable fields.

Based on the following table, an ILF with 10 RETs and 5 DETs would be rated Average, because it falls in the ">5" RET row and the "1-5" DET column.

| RETs | 1-5 DETs | 6-15 DETs | >15 DETs |
|---|---|---|---|
| 1 | Low | Low | Average |
| 2-5 | Low | Average | High |
| >5 | Average | High | High |
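The file rating table can be sketched in the same way as a lookup. The function name is hypothetical and the thresholds are copied from the table above.

```python
def rate_file(rets, dets):
    """Rate an ILF or ELF as Low/Average/High from its RET and DET counts."""
    row = 0 if rets <= 1 else 1 if rets <= 5 else 2   # RET bands: 1, 2-5, >5
    col = 0 if dets <= 5 else 1 if dets <= 15 else 2  # DET bands: 1-5, 6-15, >15
    table = [
        ["Low", "Low", "Average"],
        ["Low", "Average", "High"],
        ["Average", "High", "High"],
    ]
    return table[row][col]

# A file with 3 RETs and 20 DETs falls in the "2-5" row and ">15" column.
print(rate_file(rets=3, dets=20))
```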

Convert ratings into UFCs.

| Rating | EO | EQ | EI | ILF | ELF |
|---|---|---|---|---|---|
| Low | 4 | 3 | 3 | 7 | 5 |
| Average | 5 | 4 | 4 | 10 | 7 |
| High | 6 | 5 | 6 | 15 | 10 |
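Once every component has a rating, the unadjusted count is the sum of the corresponding values. The sketch below uses the weights exactly as they appear in the conversion table above. The function name and the list of rated components are invented for illustration.

```python
# Weights copied from the conversion table above (rating -> component -> value).
WEIGHTS = {
    "Low":     {"EO": 4, "EQ": 3, "EI": 3, "ILF": 7,  "ELF": 5},
    "Average": {"EO": 5, "EQ": 4, "EI": 4, "ILF": 10, "ELF": 7},
    "High":    {"EO": 6, "EQ": 5, "EI": 6, "ILF": 15, "ELF": 10},
}

def unadjusted_function_points(components):
    """Sum the weights of (component_type, rating) pairs."""
    return sum(WEIGHTS[rating][kind] for kind, rating in components)

# Hypothetical rated components of a small library system.
library_system = [
    ("EI", "Average"),   # enter a patron's library card number
    ("EO", "Low"),       # list of books checked out to a patron
    ("EQ", "Low"),       # query the books currently checked out
    ("ILF", "Average"),  # file of books in the library
    ("ELF", "Low"),      # billing system transactions
]
ufc = unadjusted_function_points(library_system)
print("UFC =", ufc)
```

Here the total is 4 + 4 + 3 + 10 + 5 = 26 unadjusted function points.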

Compute the Final Function Point Count (FPC)