Best practices for writing a Dockerfile

If you want to build a new container image, you need to specify the build instructions in a separate file called a Dockerfile. This lets developers define an execution environment, automate its creation and make the process repeatable. It also brings flexibility, readability and accountability, and makes it easy to version the project.

Writing a Dockerfile is one of the most important parts of any project that uses Docker. How you write it can have a large impact on build and deployment performance, especially when you deploy at a large scale.

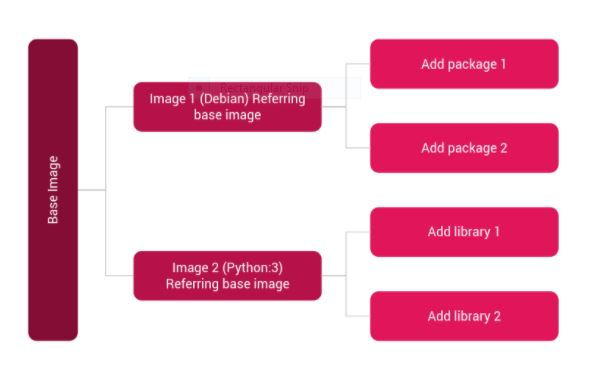

Before we look at how to write an efficient Dockerfile, note that a Docker image has a layered structure. It starts from a base image, which is often pulled from Docker Hub, and each subsequent instruction adds a new layer of modifications on top of it. This architecture lets users reuse images they have already pulled, makes efficient use of disk storage and allows Docker to cache the build process.

So, here are some of the best practices that will help you improve the performance of your Docker project.

Order of statements matters.

You need to order your steps so that the least frequently changing statements appear first. When you modify a line in the Dockerfile, its cache is invalidated, and the cache for every subsequent line is invalidated along with it, so all of those steps have to be rebuilt. Hence, you should place the most frequently changing lines as late as possible.

Consider the example below, where the application files are copied before the packages are installed, so any change to the code forces the packages to be installed again.

    FROM python:3
    # set working directory
    WORKDIR /usr/src/myapp
    # copying all the files in the container
    COPY . .
    RUN apt-get -y update
    RUN apt-get -y install vim
    # specify the port number to be exposed
    EXPOSE 8887
    # run the command
    CMD ["python3", "./file.py"]

This can be reordered so that the package installation comes before the frequently changing COPY step:

    FROM python:3
    # set working directory
    WORKDIR /usr/src/myapp
    RUN apt-get -y update
    RUN apt-get -y install vim
    # copying all the files in the container
    COPY . .
    # specify the port number to be exposed
    EXPOSE 8887
    # run the command
    CMD ["python3", "./file.py"]

Building cache.

When you build a Docker image, Docker executes the instructions in the Dockerfile one by one. For each instruction, it looks for an existing image layer in its cache and reuses it if one is found; otherwise it creates a new image layer. If you pass the --no-cache option to the build command, Docker does not use the cache at all and builds a new image layer for every instruction.
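As a rough illustration of this behaviour, the comments in the sketch below show two ways of building the same Dockerfile. The image tag `myapp` is a placeholder chosen for this example.

```dockerfile
# Normal build: layers whose instruction and inputs are unchanged come from the cache.
#   docker build -t myapp .
# Forced rebuild: --no-cache makes Docker build every layer again.
#   docker build --no-cache -t myapp .
FROM python:3
WORKDIR /usr/src/myapp
RUN apt-get -y update && apt-get -y install vim
COPY . .
CMD ["python3", "./file.py"]
```

Running the first command twice without changing any files should make the second run noticeably faster, since every step can be served from the cache.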

Try to avoid installing unnecessary packages.

Installing unnecessary packages increases your build time and image size and reduces overall performance. Hence, install only the packages that your project actually needs. One useful method is to list the packages in a separate requirements.txt file and then install them with the following command.

    RUN pip3 install -r requirements.txt

This keeps the list of installed packages in one place, where it is easy to review and to version along with the rest of the project.
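One way this could fit into a full Dockerfile is sketched below. It assumes a requirements.txt file sits next to the Dockerfile, and it reuses the file.py entry point from the earlier example. Copying requirements.txt on its own before the rest of the code is a choice made for this sketch, in line with the ordering advice above.

```dockerfile
FROM python:3
WORKDIR /usr/src/myapp
# Copy only the dependency list first, so this layer and the install step
# stay cached until requirements.txt itself changes
COPY requirements.txt .
RUN pip3 install -r requirements.txt
# Application code changes often, so copy it last
COPY . .
CMD ["python3", "./file.py"]
```

With this layout, editing file.py only invalidates the final COPY layer, and the packages are not reinstalled.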

Always use a .dockerignore file.

Similar to .gitignore, Docker lets you create a .dockerignore file. The files and directories listed in it are excluded from the build context, so they are neither sent to the Docker daemon nor picked up by COPY statements. This improves build performance.
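A minimal sketch of how this might look for the Python example used in this article follows. The patterns are hypothetical and are shown as comments; they would go into a .dockerignore file next to the Dockerfile, one pattern per line.

```dockerfile
# Hypothetical contents of .dockerignore (one pattern per line):
#   .git
#   .env
#   **/__pycache__
#   **/*.pyc
FROM python:3
WORKDIR /usr/src/myapp
# With the patterns above in place, this COPY skips the ignored paths
COPY . .
CMD ["python3", "./file.py"]
```

Which paths to ignore depends on the project. Version control metadata, local secrets and compiled bytecode are common candidates.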

Try to include more specific COPY statements.

You should copy only the files that are actually needed from your directory into the Docker container. This helps to avoid cache busts: if any file covered by a COPY statement changes after the image was built, the cache for that statement and for every statement after it is invalidated.
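The idea can be sketched as follows. Here file.py is the script from the earlier example, while the src/ directory is a hypothetical package directory introduced only for illustration.

```dockerfile
FROM python:3
WORKDIR /usr/src/myapp
# Copy only what the application needs instead of using "COPY . ."
COPY file.py .
# Hypothetical source directory; adjust to the project's real layout
COPY src/ ./src/
CMD ["python3", "./file.py"]
```

Changes to unrelated files in the project directory, such as documentation or test data, then leave these layers cached.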

Try to chain all the cacheable units.

Every RUN statement can be considered a cacheable unit. Try to chain related RUN statements into a single statement. Keep in mind, however, that chaining too many of them can easily lead to cache busts, because a change to any one command invalidates the whole chain.

For example,

    RUN apt-get -y update
    RUN apt-get -y install vim

can be written as

    RUN apt-get update \
        && apt-get -y install vim

Try to avoid package dependencies that you don’t need.

Try to leave out all unused packages and dependencies when building an image. One way to do so is the --no-install-recommends flag, which tells the apt package manager to install only the packages you request and their required dependencies, not the optional recommended ones.

These are some of the measures you can adopt when writing a Dockerfile. They can improve build performance, make the project easier to manage, and let you reuse the same Dockerfile patterns across multiple projects.
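A minimal sketch of the flag in use, based on the vim installation from the earlier examples, is shown below. Removing the apt package lists at the end is an extra step assumed here, not something the text above requires.

```dockerfile
FROM python:3
# Install only the requested package and skip apt's recommended extras,
# then delete the package lists so they do not remain in the layer
RUN apt-get update \
    && apt-get install -y --no-install-recommends vim \
    && rm -rf /var/lib/apt/lists/*
```

Keeping the update, the install and the cleanup in one RUN statement means they end up in a single layer, so the deleted lists do not linger in an earlier one.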