Apache Oozie - Introduction

In this chapter, we will start with the fundamentals of Apache Oozie. The following is a detailed explanation of Oozie, along with a few examples and screenshots for better understanding.

What is Apache Oozie?

Apache Oozie is a scheduler system to run and manage Hadoop jobs in a distributed environment. It allows multiple complex jobs to be combined and run in sequential order to achieve a bigger task. Within a sequence of tasks, two or more jobs can also be programmed to run in parallel with each other.
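To make the idea of sequential and parallel jobs concrete, here is a minimal Python sketch (not Oozie code) that models a workflow as a dependency graph and groups the jobs into stages. Jobs in the same stage have no dependency on each other, so they could run in parallel. The job names are hypothetical. Oozie itself expresses this structure in `workflow.xml` with fork and join control nodes, not with a dependency map. The sketch uses the standard-library `graphlib` module, which requires Python 3.9 or later.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each job maps to the set of jobs it must wait for.
# The names are illustrative only and are not taken from Oozie.
workflow = {
    "import_data": set(),
    "clean_logs": {"import_data"},
    "hive_report": {"clean_logs"},
    "pig_aggregate": {"clean_logs"},
    "export_results": {"hive_report", "pig_aggregate"},
}


def execution_stages(graph):
    """Group jobs into stages; jobs within one stage can run in parallel."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph is not acyclic
    stages = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every job whose dependencies are done
        stages.append(ready)
        sorter.done(*ready)                 # mark them finished to unlock the next stage
    return stages


for number, stage in enumerate(execution_stages(workflow), start=1):
    print(number, stage)
```

Here `hive_report` and `pig_aggregate` land in the same stage, because both depend only on `clean_logs`. That is the kind of branch a fork node would start and a join node would wait for.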

One of the main advantages of Oozie is that it is tightly integrated with the Hadoop stack. It supports various Hadoop jobs, such as Hive, Pig and Sqoop, as well as system-specific jobs, such as Java and Shell.

Oozie is an open-source Java web application available under the Apache License 2.0. It is responsible for triggering the workflow actions, which in turn use the Hadoop execution engine to actually execute the tasks. Hence, Oozie is able to leverage the existing Hadoop machinery for load balancing, fail-over, etc.

Oozie detects the completion of tasks through callbacks and polling. When Oozie starts a task, it gives the task a unique callback HTTP URL, and the task notifies that URL when it is complete. If the task fails to invoke the callback URL, Oozie can poll the task for completion.
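The callback-with-polling-fallback pattern described above can be sketched in plain Python. This is a simplified simulation, not Oozie's implementation. `SimulatedTask`, `wait_for_task`, the timeout values and the callback URL format are all hypothetical. The sketch also simplifies the timing: it waits a fixed time for the callback before it starts polling.

```python
import threading
import time


class SimulatedTask:
    """Hypothetical stand-in for a job launched on Hadoop; not an Oozie API."""

    def __init__(self, duration, sends_callback):
        self.duration = duration
        self.sends_callback = sends_callback
        self._finished = threading.Event()

    def start(self, callback_url, notify):
        def work():
            time.sleep(self.duration)      # pretend to do the real work
            self._finished.set()
            if self.sends_callback:
                notify(callback_url)       # the task reports its own completion
        threading.Thread(target=work, daemon=True).start()

    def is_finished(self):
        """What a poll would ask: has the task completed?"""
        return self._finished.is_set()


def wait_for_task(task, action_id, callback_timeout=0.5, poll_interval=0.05):
    """Return 'callback' or 'poll', depending on how completion was detected."""
    # Hypothetical URL format; each task gets its own unique callback URL.
    callback_url = f"http://oozie.example.com/callback?id={action_id}"
    called_back = threading.Event()

    def notify(url):
        if url == callback_url:            # ignore notifications meant for other tasks
            called_back.set()

    task.start(callback_url, notify)
    if called_back.wait(timeout=callback_timeout):
        return "callback"
    # No callback arrived in time, so fall back to polling the task's status.
    while not task.is_finished():
        time.sleep(poll_interval)
    return "poll"


print(wait_for_task(SimulatedTask(0.1, sends_callback=True), "action-1"))
print(wait_for_task(SimulatedTask(0.1, sends_callback=False), "action-2"))
```

The first call should report that completion was detected by the callback. The second task never calls back, so its completion is only discovered by polling.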

The following three types of jobs are common in Oozie −

Oozie Workflow Jobs − These are represented as Directed Acyclic Graphs (DAGs) to specify a sequence of actions to be executed.

Oozie Coordinator Jobs − These consist of workflow jobs triggered by time and data availability.

Oozie Bundle − These can be referred to as a package of multiple coordinator jobs, each of which in turn triggers its own workflow jobs.
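To show what "triggered by time and data availability" means for a coordinator, here is a small Python sketch under simplifying assumptions. It is not Oozie's scheduling code, and the function name, dates, frequency and input set are hypothetical. A new action is created at each frequency step once its nominal time has arrived (the time trigger). The action is only ready to run when the input for its time slot exists (the data trigger).

```python
from datetime import datetime, timedelta


def coordinator_actions(start, end, frequency, now, available_inputs):
    """List (nominal time, state) for every action a coordinator has created by `now`."""
    actions = []
    nominal = start
    # Time trigger: one action per `frequency` step, created once its nominal time arrives.
    while nominal < end and nominal <= now:
        # Data trigger: the action may run only if the input for its time slot exists.
        state = "ready" if nominal in available_inputs else "waiting"
        actions.append((nominal.strftime("%Y-%m-%d %H:%M"), state))
        nominal += frequency
    return actions


start = datetime(2024, 1, 1, 0, 0)
# Hypothetical: only the 00:00 and 06:00 input partitions have arrived so far.
inputs = {start, start + timedelta(hours=6)}
for action in coordinator_actions(start, start + timedelta(days=1), timedelta(hours=6),
                                  now=datetime(2024, 1, 1, 13, 0), available_inputs=inputs):
    print(action)
```

At 13:00, three actions exist (00:00, 06:00 and 12:00). The 12:00 action keeps waiting until its input appears, and the 18:00 action has not been created yet.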

We will look into each of these in detail in the following chapters.

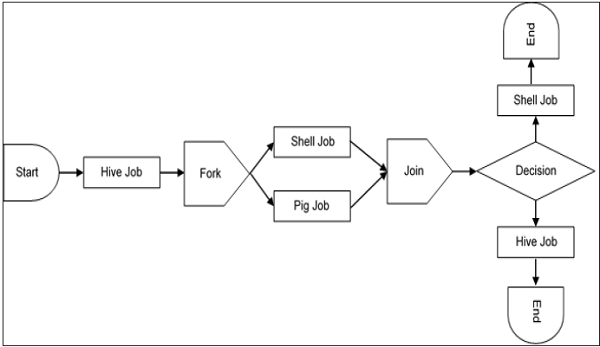

A sample workflow with Controls (Start, Decision, Fork, Join and End) and Actions (Hive, Shell, Pig) will look like the following diagram −

Workflow will always start with a Start tag and end with an End tag.
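The Start/End rule can be checked mechanically. The Python sketch below uses a hypothetical graph shaped like the sample workflow. It verifies that the graph has exactly one start node and one end node, that every node is reachable from start, and that every node can reach end. The node names and arrangement are assumptions, not the diagram's exact layout. The check is also simplified: it ignores the error transitions and kill nodes that real Oozie workflows use for failures, and it is not Oozie's own validator.

```python
# Hypothetical sample workflow: node name -> (node type, successor nodes).
sample_workflow = {
    "start": ("start", ["check-input"]),
    "check-input": ("decision", ["fork-jobs", "end"]),
    "fork-jobs": ("fork", ["hive-job", "shell-job"]),
    "hive-job": ("action", ["join-jobs"]),
    "shell-job": ("action", ["join-jobs"]),
    "join-jobs": ("join", ["pig-job"]),
    "pig-job": ("action", ["end"]),
    "end": ("end", []),
}


def reachable_from(graph, source):
    """Return every node reachable from `source` (iterative depth-first search)."""
    seen, stack = set(), [source]
    while stack:
        node = stack.pop()
        if node not in seen:
            seen.add(node)
            stack.extend(graph[node][1])
    return seen


def check_start_and_end(graph):
    """Return a list of problems; an empty list means the Start/End rule holds."""
    starts = [name for name, (kind, _) in graph.items() if kind == "start"]
    ends = [name for name, (kind, _) in graph.items() if kind == "end"]
    if len(starts) != 1 or len(ends) != 1:
        return ["expected exactly one start node and one end node"]
    problems = []
    for name in sorted(set(graph) - reachable_from(graph, starts[0])):
        problems.append(f"{name} is not reachable from start")
    for name in sorted(graph):
        if ends[0] not in reachable_from(graph, name):
            problems.append(f"{name} cannot reach end")
    return problems


print(check_start_and_end(sample_workflow))
# Breaking one transition leaves the fork branch with no path to the end node.
broken = {**sample_workflow, "pig-job": ("action", [])}
print(check_start_and_end(broken))
```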

Use Cases of Apache Oozie

Apache Oozie is used by Hadoop system administrators to run complex log analysis on HDFS. Hadoop developers use Oozie to perform ETL operations on data in sequential order and to save the output in a specified format (Avro, ORC, etc.) in HDFS.

In an enterprise, Oozie jobs are scheduled as coordinators or bundles.

Oozie Editors

Before we dive into Oozie, let's take a quick look at the editors available for Oozie.

Most of the time, you won't need a dedicated editor. You can write workflows in any popular text editor (such as Notepad++, Sublime Text or Atom), as we will do in this tutorial.

As a beginner, however, it can help to create a workflow by dragging and dropping in an editor and then see how the workflow is generated. This also shows how the GUI maps to the actual workflow.xml that the editor creates. This is the only section where we discuss Oozie editors; we won't use them in the rest of the tutorial.

The most popular among Oozie editors is Hue.

Hue Editor for Oozie

This editor is very handy to use and is available with almost all Hadoop vendors solutions.

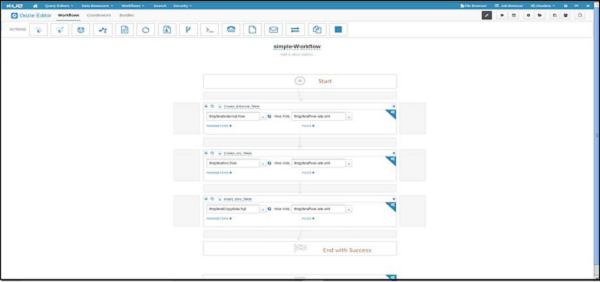

The following screenshot shows an example workflow created by this editor.

You can drag and drop controls and actions and add your job inside these actions.

A good resource for learning more about this topic −

http://gethue.com/new-apache-oozie-workflow-coordinator-bundle-editors/

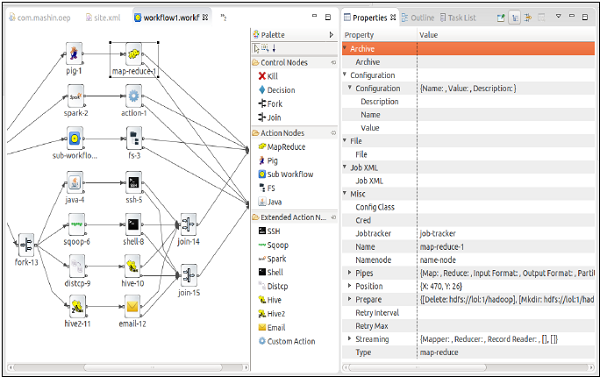

Oozie Eclipse Plugin (OEP)

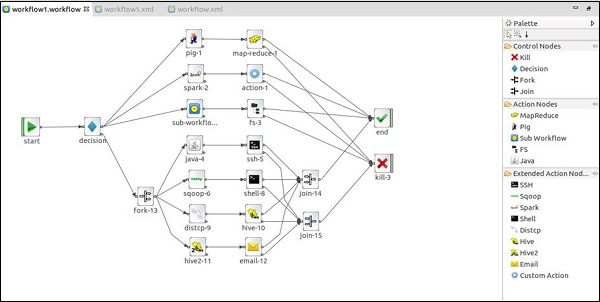

Oozie Eclipse plugin (OEP) is an Eclipse plugin for editing Apache Oozie workflows graphically. It is a graphical editor for editing Apache Oozie workflows inside Eclipse.

Composing Apache Oozie workflows is becoming much simpler. It becomes a matter of drag-and-drop, a matter of connecting lines between the nodes.

The following screenshots are examples of OEP.

To learn more on OEP, you can visit https://www.infoq.com/articles/oozie-plugin-eclipse/

Now let's move on to the next lesson and start writing Oozie workflows.