How Do Autoencoders Work?

Autoencoders are a class of neural networks used for unsupervised learning and dimensionality reduction. They learn compact representations of input data by encoding it into a lower-dimensional latent space and then decoding it back to reconstruct the original input. This article explains how autoencoders work and builds one in Python using Keras.

What are Autoencoders?

Autoencoders are neural networks designed to reconstruct input data through two main components:

Encoder: Compresses input data into a lower-dimensional latent representation

Decoder: Reconstructs the original input from the compressed representation
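To make the two components concrete, here is a minimal NumPy sketch of one forward pass through an encoder and a decoder. The weights are random and untrained, so it only illustrates the data flow and the shapes. The function names `encode` and `decode` are illustrative and not part of Keras, and the sizes 784 and 32 are assumptions chosen to match flattened 28×28 images and a small latent space.

```python
import numpy as np

rng = np.random.default_rng(0)

input_dim, latent_dim = 784, 32  # assumed sizes for illustration

# Random, untrained weights: this only shows data flow and shapes.
W_enc = rng.normal(scale=0.01, size=(input_dim, latent_dim))
W_dec = rng.normal(scale=0.01, size=(latent_dim, input_dim))


def encode(x):
    """Map inputs to the latent space with a ReLU activation."""
    return np.maximum(x @ W_enc, 0.0)


def decode(z):
    """Map latent codes back to input space with a sigmoid activation."""
    return 1.0 / (1.0 + np.exp(-(z @ W_dec)))


x = rng.random((5, input_dim))  # five fake "images" with values in [0, 1)
z = encode(x)                   # compressed representation
x_hat = decode(z)               # reconstruction

print(x.shape, z.shape, x_hat.shape)  # (5, 784) (5, 32) (5, 784)
```

The latent array `z` has far fewer columns than the input, which is the bottleneck that forces compression.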

The network is trained to minimize reconstruction error, forcing it to learn meaningful patterns and features in the data. Common applications include dimensionality reduction, anomaly detection, denoising, and generative modeling.
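As one illustration of these applications, anomaly detection can be sketched by flagging samples whose reconstruction error is unusually high. The helpers `reconstruction_errors` and `flag_anomalies` below are hypothetical, the 95th-percentile threshold is an arbitrary assumption, and the reconstructions are synthetic stand-ins rather than the output of a trained model.

```python
import numpy as np


def reconstruction_errors(x, x_hat):
    """Per-sample mean squared error between inputs and reconstructions."""
    return np.mean((x - x_hat) ** 2, axis=1)


def flag_anomalies(errors, percentile=95.0):
    """Return indices whose error exceeds the given percentile of all errors."""
    threshold = np.percentile(errors, percentile)
    return np.flatnonzero(errors > threshold)


# Synthetic stand-in for a trained autoencoder's output (an assumption):
# most samples are reconstructed almost perfectly, the first three are not.
rng = np.random.default_rng(0)
x = rng.random((100, 784))
x_hat = x + rng.normal(scale=0.01, size=x.shape)
x_hat[:3] += 0.5

errors = reconstruction_errors(x, x_hat)
flagged = flag_anomalies(errors)
print(flagged)
```

With a real model, `x_hat` would come from the autoencoder's `predict` method, and the threshold would normally be chosen from errors on data known to be normal.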

How Autoencoders Work

Training passes each input through the encoder and then the decoder, measures how far the reconstruction is from the input with a loss function such as mean squared error or binary cross-entropy, and adjusts the weights to reduce that loss. Because the latent layer is smaller than the input, the network cannot simply copy its input; it has to keep the features that matter most for reconstruction.
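The training idea can be sketched without any deep learning library. The example below is a deliberately simplified linear autoencoder (no activations, no biases) trained with plain gradient descent on toy data. The data, layer sizes, learning rate, and step count are all assumptions made for illustration, not values from the Keras example in this article.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data: 200 samples in 8 dimensions that lie on a 3-D subspace.
X = rng.normal(size=(200, 3)) @ rng.normal(size=(3, 8))

latent_dim = 3
W_enc = rng.normal(scale=0.1, size=(8, latent_dim))  # encoder weights
W_dec = rng.normal(scale=0.1, size=(latent_dim, 8))  # decoder weights
learning_rate = 0.01

losses = []
for step in range(500):
    Z = X @ W_enc       # encode
    X_hat = Z @ W_dec   # decode
    diff = X_hat - X
    losses.append(np.mean(diff ** 2))  # reconstruction error (MSE)

    # Gradients of the MSE with respect to both weight matrices
    grad_out = 2.0 * diff / diff.size
    grad_W_dec = Z.T @ grad_out
    grad_W_enc = X.T @ (grad_out @ W_dec.T)

    # Gradient descent update
    W_dec -= learning_rate * grad_W_dec
    W_enc -= learning_rate * grad_W_enc

print(f"initial loss: {losses[0]:.4f}, final loss: {losses[-1]:.4f}")
```

The loss recorded at the last step should be lower than at the first, which is the reconstruction error being minimized. Frameworks such as Keras automate the gradient computation and use more capable optimizers.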

Implementation Example

Let's build an autoencoder on the MNIST dataset to demonstrate how it works:

import numpy as np
import matplotlib.pyplot as plt
from keras.datasets import mnist
from keras.layers import Input, Dense
from keras.models import Model

# Load the MNIST dataset (labels are not needed for unsupervised training)
(x_train_m, _), (x_test_m, _) = mnist.load_data()

# Normalize pixel values to the range [0, 1]
x_train_m = x_train_m.astype('float32') / 255.
x_test_m = x_test_m.astype('float32') / 255.

# Flatten each 28x28 image into a 784-dimensional vector
x_train = x_train_m.reshape((len(x_train_m), np.prod(x_train_m.shape[1:])))
x_test = x_test_m.reshape((len(x_test_m), np.prod(x_test_m.shape[1:])))

# Size of the latent space
latent_dim = 32

# Input layer
input_img = Input(shape=(784,))

# Encoder layers: 784 -> 128 -> 32
encoded1 = Dense(128, activation='relu')(input_img)
encoded2 = Dense(latent_dim, activation='relu')(encoded1)

# Decoder layers: 32 -> 128 -> 784
decoded1 = Dense(128, activation='relu')(encoded2)
decoded2 = Dense(784, activation='sigmoid')(decoded1)

# Full autoencoder: input -> reconstruction
autoencoder = Model(input_img, decoded2)

# Standalone encoder: input -> latent representation
encoder = Model(input_img, encoded2)

# Standalone decoder: reuse the last two (decoder) layers of the autoencoder
latent_input = Input(shape=(latent_dim,))
decoder_layer = autoencoder.layers[-2](latent_input)
decoder_layer = autoencoder.layers[-1](decoder_layer)
decoder = Model(latent_input, decoder_layer)

# Compile the autoencoder
autoencoder.compile(optimizer='adam', loss='binary_crossentropy')

# Train the autoencoder; the input is also the target
autoencoder.fit(x_train, x_train,
                epochs=50,
                batch_size=256,
                shuffle=True,
                validation_data=(x_test, x_test))

# Encode and decode the test data
encoded_imgs = encoder.predict(x_test)
decoded_imgs = decoder.predict(encoded_imgs)

# Display original (top row) and reconstructed (bottom row) images
n = 10
plt.figure(figsize=(20, 4))
for i in range(n):
    # Original image
    ax = plt.subplot(2, n, i + 1)
    plt.imshow(x_test[i].reshape(28, 28), cmap='gray')
    ax.get_xaxis().set_visible(False)
    ax.get_yaxis().set_visible(False)

    # Reconstructed image
    ax = plt.subplot(2, n, i + n + 1)
    plt.imshow(decoded_imgs[i].reshape(28, 28), cmap='gray')
    ax.get_xaxis().set_visible(False)
    ax.get_yaxis().set_visible(False)
plt.show()

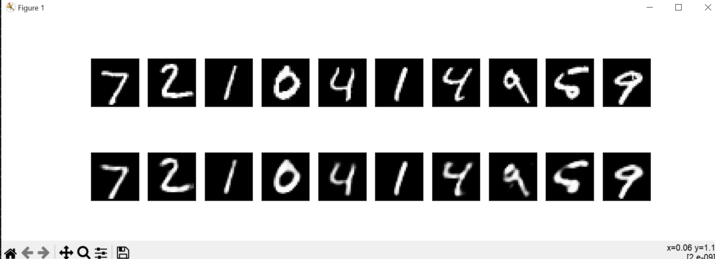

Output

Key Components Explained

| Component | Input Size | Output Size | Purpose |
|---|---|---|---|
| Input Layer | 784 | 784 | Flattened 28×28 images |
| Encoder Layer 1 | 784 | 128 | First compression |
| Latent Space | 128 | 32 | Compressed representation |
| Decoder Layer 1 | 32 | 128 | Begin reconstruction |
| Output Layer | 128 | 784 | Reconstructed image |
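As a rough check on the sizes in the table, the number of trainable parameters per layer can be worked out by hand. The sketch below assumes each Dense layer has a bias term, which is the Keras default; `dense_params` is a hypothetical helper, not a Keras function.

```python
def dense_params(n_in, n_out):
    """Weights plus biases for one fully connected layer."""
    return n_in * n_out + n_out


# (name, input size, output size) for each Dense layer in the table
layers = [
    ("Encoder Layer 1", 784, 128),
    ("Latent Space", 128, 32),
    ("Decoder Layer 1", 32, 128),
    ("Output Layer", 128, 784),
]

total = 0
for name, n_in, n_out in layers:
    count = dense_params(n_in, n_out)
    total += count
    print(f"{name}: {count:,} parameters")

print(f"Total: {total:,} parameters")               # Total: 209,968 parameters
print(f"Compression ratio: {784 / 32:.1f}x")        # Compression ratio: 24.5x
```

Calling `autoencoder.summary()` on the Keras model should report the same per-layer counts.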

Conclusion

Autoencoders learn compressed data representations through an encoder-decoder architecture trained to minimize reconstruction error. Because the bottleneck forces them to capture the most important features and patterns, they are useful for dimensionality reduction, anomaly detection, denoising, and generative modeling.

---